## Dual Line Charts: Accuracy and Token Usage vs. Training Progress

### Overview

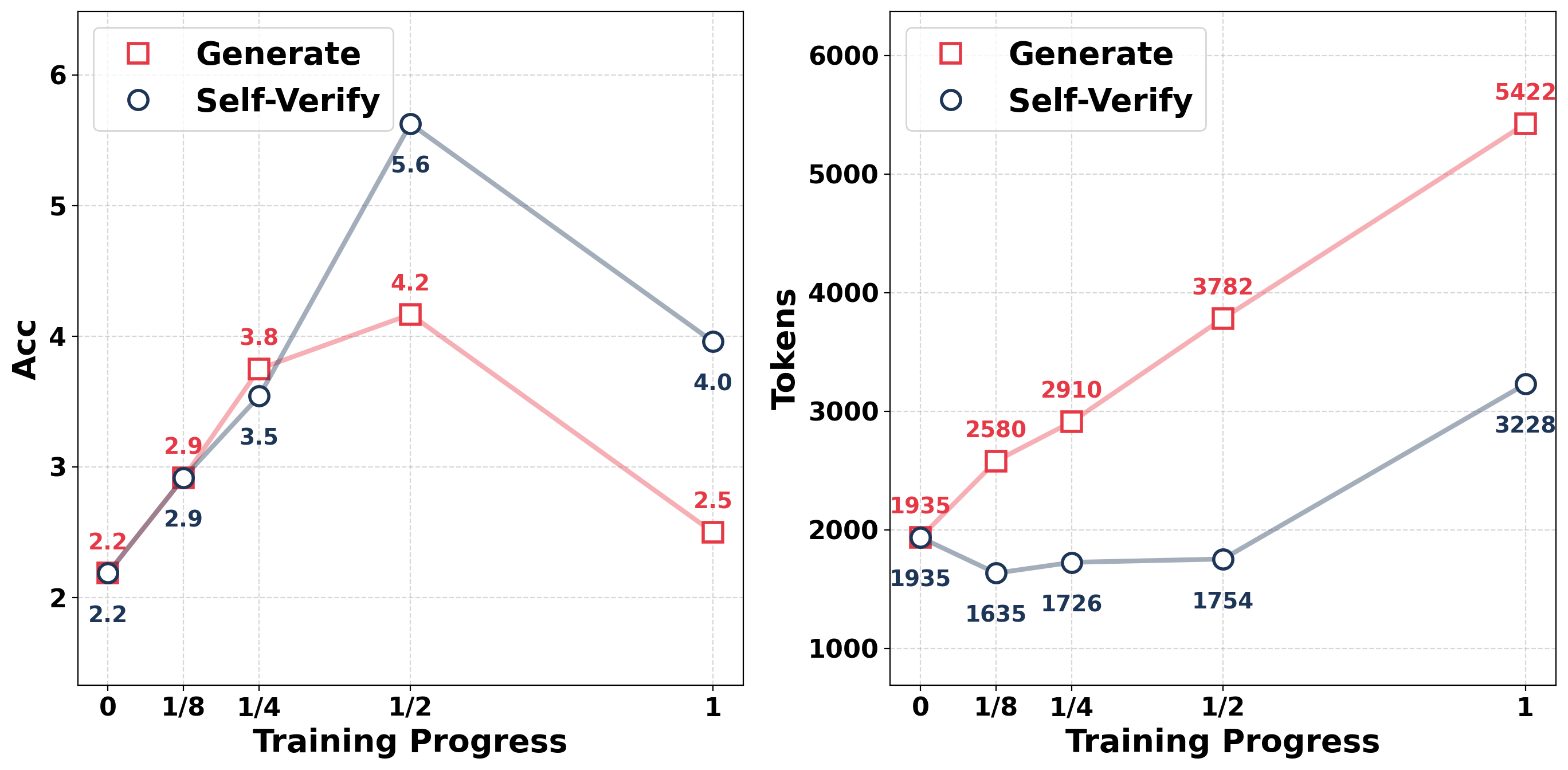

The image displays two side-by-side line charts comparing the performance of two methods, "Generate" and "Self-Verify," across different stages of training progress. The left chart tracks accuracy ("Acc"), while the right chart tracks the number of tokens used. Both charts share the same x-axis representing "Training Progress" with discrete points at 0, 1/8, 1/4, 1/2, and 1.

### Components/Axes

**Common Elements:**

* **X-Axis (Both Charts):** Labeled "Training Progress." The axis markers are at the following positions: `0`, `1/8`, `1/4`, `1/2`, and `1`.

* **Legend (Both Charts):** Located in the top-left corner of each chart's plot area.

    * A red square symbol (□) corresponds to the label **"Generate"**.

    * A blue circle symbol (○) corresponds to the label **"Self-Verify"**.

**Left Chart - Accuracy:**

* **Chart Title/Type:** Line chart showing Accuracy vs. Training Progress.

* **Y-Axis:** Labeled **"Acc"**. The scale ranges from 2 to 6, with major gridlines at intervals of 1 (2, 3, 4, 5, 6).

* **Data Series:**

    * **Generate (Red line with square markers):** The line is a light red/pink color.

    * **Self-Verify (Blue line with circle markers):** The line is a grayish-blue color.

**Right Chart - Token Usage:**

* **Chart Title/Type:** Line chart showing Tokens vs. Training Progress.

* **Y-Axis:** Labeled **"Tokens"**. The scale ranges from 1000 to 6000, with major gridlines at intervals of 1000 (1000, 2000, 3000, 4000, 5000, 6000).

* **Data Series:**

    * **Generate (Red line with square markers):** The line is a light red/pink color.

    * **Self-Verify (Blue line with circle markers):** The line is a grayish-blue color.

### Detailed Analysis

**Left Chart: Accuracy (Acc)**

* **Trend Verification:**

    * **Generate (Red):** The line slopes upward from 0 to 1/2, reaching a peak, then slopes sharply downward from 1/2 to 1.

    * **Self-Verify (Blue):** The line slopes upward from 0 to 1/2, reaching a higher peak than Generate, then slopes downward from 1/2 to 1, but remains above the Generate line at the final point.

* **Data Points (Value at each Training Progress marker):**

    * **At 0:** Generate = 2.2, Self-Verify = 2.2

    * **At 1/8:** Generate = 2.9, Self-Verify = 2.9

    * **At 1/4:** Generate = 3.8, Self-Verify = 3.5

    * **At 1/2:** Generate = 4.2, Self-Verify = 5.6

    * **At 1:** Generate = 2.5, Self-Verify = 4.0
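The accuracy trends described above can be checked against the transcribed data points. The following is a minimal sketch, assuming the values read off the chart are exact; the names `CHECKPOINTS`, `ACC`, and `interval_changes` are illustrative and do not come from the figure.

```python
# Accuracy values transcribed from the data points listed above.
CHECKPOINTS = ["0", "1/8", "1/4", "1/2", "1"]
ACC = {
    "Generate": [2.2, 2.9, 3.8, 4.2, 2.5],
    "Self-Verify": [2.2, 2.9, 3.5, 5.6, 4.0],
}


def interval_changes(values):
    """Return the change between each pair of consecutive checkpoints."""
    # Round to one decimal to match the precision of the chart labels.
    return [round(b - a, 1) for a, b in zip(values, values[1:])]


acc_changes = {method: interval_changes(vals) for method, vals in ACC.items()}

for method, changes in acc_changes.items():
    for start, end, change in zip(CHECKPOINTS, CHECKPOINTS[1:], changes):
        print(f"{method}: {start} -> {end}: {change:+.1f}")
```

Under these values, both methods gain accuracy in every interval up to 1/2 and lose accuracy only in the final interval (1.7 points for "Generate", 1.6 points for "Self-Verify").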

**Right Chart: Token Usage (Tokens)**

* **Trend Verification:**

    * **Generate (Red):** The line shows a consistent, strong upward slope across all training progress points.

    * **Self-Verify (Blue):** The line shows a slight initial decrease, then a gradual, modest upward slope from 1/8 to 1/2, followed by a steeper upward slope from 1/2 to 1.

* **Data Points (Value at each Training Progress marker):**

    * **At 0:** Generate = 1935, Self-Verify = 1935

    * **At 1/8:** Generate = 2580, Self-Verify = 1635

    * **At 1/4:** Generate = 2910, Self-Verify = 1726

    * **At 1/2:** Generate = 3782, Self-Verify = 1754

    * **At 1:** Generate = 5422, Self-Verify = 3228
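To quantify the gap between the two token curves, one option is to compute the fraction of tokens "Self-Verify" saves relative to "Generate" at each checkpoint. This is a minimal sketch using the values listed above; `TOKENS` and `relative_savings` are hypothetical names introduced for illustration.

```python
# Token counts transcribed from the data points listed above.
CHECKPOINTS = ["0", "1/8", "1/4", "1/2", "1"]
TOKENS = {
    "Generate": [1935, 2580, 2910, 3782, 5422],
    "Self-Verify": [1935, 1635, 1726, 1754, 3228],
}


def relative_savings(baseline, candidate):
    """Fraction of tokens saved by `candidate` relative to `baseline`."""
    return [1 - c / b for b, c in zip(baseline, candidate)]


savings = relative_savings(TOKENS["Generate"], TOKENS["Self-Verify"])

for checkpoint, saved in zip(CHECKPOINTS, savings):
    print(f"Progress {checkpoint}: Self-Verify uses {saved:.1%} fewer tokens")
```

With these numbers, the savings are roughly 37% at 1/8, 41% at 1/4, 54% at 1/2, and 40% at 1, so the relative gap is widest at the halfway point.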

### Key Observations

1. **Accuracy Peak:** Both methods peak in accuracy at the halfway point (Training Progress = 1/2). The "Self-Verify" method reaches a higher peak accuracy (5.6) than "Generate" (4.2), a gap of 1.4 points.

2. **Accuracy Degradation:** After the peak at 1/2, both methods experience a drop in accuracy by the end of training (Progress = 1). However, "Self-Verify" maintains a higher final accuracy (4.0) than "Generate" (2.5).

3. **Token Cost Divergence:** Token usage for the "Generate" method rises at every checkpoint, from 1935 to 5422. In contrast, "Self-Verify" drops to 1635 tokens at 1/8, grows slowly until the halfway point (1754 at 1/2), and then increases more rapidly. Its final token count (3228) is still about 40% lower than that of "Generate" (5422).

4. **Initial Parity:** At the very start of training (Progress = 0), both methods have identical accuracy (2.2) and token usage (1935).
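The peak and degradation observations can be expressed as a short calculation. The sketch below assumes the transcribed accuracy values are exact and reports, for each method, where the peak occurs and what fraction of the peak is lost by the end of training; `peak_and_drop` is an illustrative helper, not something defined by the figure.

```python
# Accuracy values transcribed from the chart description.
CHECKPOINTS = ["0", "1/8", "1/4", "1/2", "1"]
ACC = {
    "Generate": [2.2, 2.9, 3.8, 4.2, 2.5],
    "Self-Verify": [2.2, 2.9, 3.5, 5.6, 4.0],
}


def peak_and_drop(values):
    """Return (peak index, peak value, fraction of the peak lost at the last point)."""
    peak_idx = max(range(len(values)), key=values.__getitem__)
    peak = values[peak_idx]
    return peak_idx, peak, (peak - values[-1]) / peak


summary = {method: peak_and_drop(vals) for method, vals in ACC.items()}

for method, (idx, peak, drop) in summary.items():
    print(f"{method}: peak {peak} at progress {CHECKPOINTS[idx]}, "
          f"{drop:.1%} of the peak lost by progress 1")
```

Under these values, "Generate" loses about 40% of its peak accuracy by the end of training, while "Self-Verify" loses about 29%.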

### Interpretation

The data point to a consistent performance gap between the two methods over the course of training:

* **"Self-Verify" is a more efficient and ultimately more accurate strategy.** It reaches a higher peak accuracy (5.6 vs. 4.2) and a higher final accuracy (4.0 vs. 2.5), while consuming fewer tokens at every checkpoint after the start. Its token usage pattern suggests it may be more selective or efficient in its operations, especially in the first half of training.

* **"Generate" shows signs of overfitting or degradation.** While it improves accuracy initially, its performance peaks lower and then falls from 4.2 to 2.5 in the second half of training. This decline coincides with a continued increase in token consumption (3782 to 5422), indicating it may be generating more content without a corresponding gain in quality, and potentially even harming its performance.

* **The halfway point (1/2) is critical.** Among the five checkpoints shown, it is where both methods reach their highest accuracy. The divergence in their trajectories after this point is the most telling: "Self-Verify" manages its resources better to retain more accuracy, while "Generate" expends more resources for diminishing and ultimately negative returns.
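One way to make the efficiency comparison concrete is to combine both charts into a single derived figure: accuracy per 1000 tokens. This metric does not appear in the image; it is an illustrative assumption, and the sketch below simply divides the transcribed accuracy values by the transcribed token counts.

```python
# Values transcribed from the two charts.
CHECKPOINTS = ["0", "1/8", "1/4", "1/2", "1"]
ACC = {
    "Generate": [2.2, 2.9, 3.8, 4.2, 2.5],
    "Self-Verify": [2.2, 2.9, 3.5, 5.6, 4.0],
}
TOKENS = {
    "Generate": [1935, 2580, 2910, 3782, 5422],
    "Self-Verify": [1935, 1635, 1726, 1754, 3228],
}


def acc_per_kilotoken(acc, tokens):
    """Accuracy divided by token count in thousands (a hypothetical metric)."""
    return [a / (t / 1000) for a, t in zip(acc, tokens)]


efficiency = {m: acc_per_kilotoken(ACC[m], TOKENS[m]) for m in ACC}

for i, checkpoint in enumerate(CHECKPOINTS):
    gen = efficiency["Generate"][i]
    ver = efficiency["Self-Verify"][i]
    print(f"Progress {checkpoint}: Generate {gen:.2f}, Self-Verify {ver:.2f}")
```

By this measure the two methods start out equal, and "Self-Verify" is ahead at every later checkpoint, with the largest gap at 1/2.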

In summary, the charts demonstrate that the "Self-Verify" approach is superior in this context, offering a better balance of high accuracy and controlled computational cost (tokens) over the full course of training.