## Diagram: DeepSeek-R1 Model Training Pipeline

### Overview

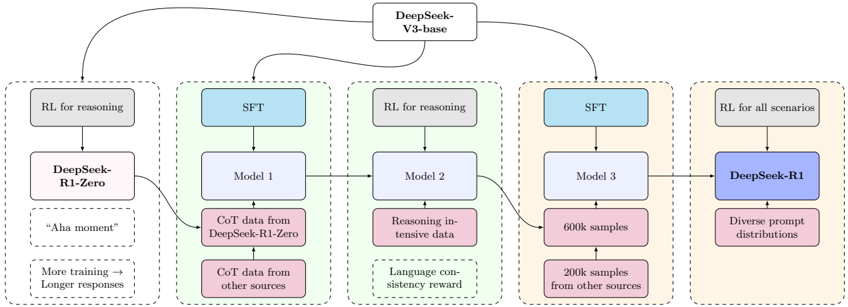

This image is a technical flowchart illustrating the multi-stage training pipeline for the DeepSeek-R1 series of reasoning models. It details the progression from the base model (DeepSeek-V3-base) through five distinct training pathways, each with specific objectives, data sources, and intermediate model outputs, culminating in the final DeepSeek-R1 model. The diagram uses a left-to-right flow with dashed boxes grouping related processes.

### Components/Axes

The diagram is structured as a flowchart with the following key components:

1. **Root Node:** `DeepSeek-V3-base` (Top center, blue box).

2. **Training Pathways:** Five distinct vertical columns, each enclosed in a dashed rectangle, representing successive training stages that are connected in sequence by arrows.

3. **Process Blocks:** Rectangular boxes within each pathway, indicating training methods (e.g., `RL for reasoning`, `SFT`), model checkpoints (e.g., `Model 1`, `DeepSeek-R1`), or data sources (e.g., `CoT data from DeepSeek-R1-Zero`).

4. **Flow Arrows:** Solid black arrows indicating the direction of data flow and model progression between blocks and pathways.

5. **Annotations:** Text within dashed boxes at the bottom of some pathways, providing additional context or outcomes (e.g., `"Aha moment"`, `More training → Longer responses`).

### Detailed Analysis

The pipeline is segmented into five primary pathways, described from left to right:

**Pathway 1 (Far Left): Initial Reasoning Model**

* **Input:** `DeepSeek-V3-base`.

* **Process:** `RL for reasoning` (Reinforcement Learning).

* **Output Model:** `DeepSeek-R1-Zero`.

* **Key Annotations:**

  * `"Aha moment"` (the point during RL training at which the model began spontaneously re-evaluating its earlier reasoning steps).

  * `More training → Longer responses` (average response length grows as RL training proceeds).

* **Flow:** The output `DeepSeek-R1-Zero` feeds into Pathway 2.
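The diagram labels Pathway 1 only as `RL for reasoning` and does not show the reward. A common choice for reasoning RL is a rule-based reward that checks the model's final answer against a known reference. The sketch below assumes, purely for illustration, that the final answer is wrapped in `\boxed{...}` and is compared by exact string match; `accuracy_reward` is a hypothetical name, not DeepSeek's code.

```python
import re

# Assumption: the final answer appears as \boxed{...} (no nested braces).
BOXED = re.compile(r"\\boxed\{([^{}]*)\}")


def accuracy_reward(completion: str, reference: str) -> float:
    """Return 1.0 if the last boxed answer equals the reference, else 0.0."""
    matches = BOXED.findall(completion)
    if not matches:
        return 0.0  # no parsable final answer earns no reward
    # Only the last boxed expression counts as the final answer.
    return 1.0 if matches[-1].strip() == reference.strip() else 0.0
```

Because the check is a deterministic rule rather than a learned model, it is cheap to evaluate at scale, though exact string matching would reject answers that are equivalent but written differently.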

**Pathway 2: Supervised Fine-Tuning (SFT) Stage 1**

* **Input:** `DeepSeek-V3-base` and `DeepSeek-R1-Zero` (from Pathway 1).

* **Process:** `SFT` (Supervised Fine-Tuning).

* **Output Model:** `Model 1`.

* **Data Sources:**

  * `CoT data from DeepSeek-R1-Zero` (Chain-of-Thought data generated by the initial model).

  * `CoT data from other sources`.

* **Flow:** `Model 1` feeds into Pathway 3.

**Pathway 3: Reasoning Refinement with RL**

* **Input:** `Model 1` (from Pathway 2).

* **Process:** `RL for reasoning`.

* **Output Model:** `Model 2`.

* **Data/Feedback Sources:**

  * `Reasoning-intensive data`.

  * `Language consistency reward` (a reward that encourages the chain of thought to stay in a single target language, reducing language mixing).

* **Flow:** `Model 2` feeds into Pathway 4.
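The DeepSeek-R1 report describes the language consistency reward as the proportion of target-language words in the chain of thought. The sketch below computes that proportion under a deliberately crude assumption: the target language is English, and a word counts as English if it consists only of ASCII characters. The function name is hypothetical.

```python
import re

WORD = re.compile(r"\w+")  # Unicode-aware for str patterns, so CJK text matches too


def language_consistency_reward(cot: str) -> float:
    """Fraction of words in the chain of thought that are in the target language.

    Assumption (illustrative only): target language is English, and a word
    is treated as English when every character in it is ASCII.
    """
    words = WORD.findall(cot)
    if not words:
        return 0.0  # an empty chain of thought earns no consistency reward
    in_target = sum(1 for w in words if w.isascii())
    return in_target / len(words)
```

A chain of thought such as `"首先 compute the sum"` scores 0.75, because three of its four words pass the ASCII test. A real implementation would need proper language identification instead of this character heuristic.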

**Pathway 4: Large-Scale SFT**

* **Input:** `Model 2` (from Pathway 3) and `DeepSeek-V3-base`.

* **Process:** `SFT`.

* **Output Model:** `Model 3`.

* **Data Sources:**

  * `600k samples`.

  * `200k samples from other sources`.

* **Flow:** `Model 3` feeds into Pathway 5.
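The two sample counts labeled in Pathway 4 combine into the 800k total discussed later. The arithmetic is shown below, using only the numbers printed in the diagram; the variable names are illustrative.

```python
# Sample counts as labeled in the diagram's Pathway 4.
samples_600k = 600_000   # "600k samples"
other_sources = 200_000  # "200k samples from other sources"

total = samples_600k + other_sources
share_600k = samples_600k / total

print(f"total = {total:,}")              # total = 800,000
print(f"600k share = {share_600k:.0%}")  # 600k share = 75%
```

So three quarters of the Pathway 4 SFT mix is the 600k set and one quarter comes from other sources.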

**Pathway 5 (Far Right): Final Model for All Scenarios**

* **Input:** `Model 3` (from Pathway 4).

* **Process:** `RL for all scenarios`.

* **Final Output Model:** `DeepSeek-R1` (highlighted in a darker blue box).

* **Data Source:** `Diverse-prompt distributions`.

### Key Observations

1. **Iterative Refinement:** The pipeline shows a clear iterative process. RL applied directly to the base model produces a reasoning model without any supervised fine-tuning (`R1-Zero`). Its outputs then supply CoT data for SFT, which is followed by further RL refinement, another large-scale SFT, and finally a broad RL stage.

2. **Hybrid Training:** It combines Reinforcement Learning (`RL`) and Supervised Fine-Tuning (`SFT`) in an alternating sequence, suggesting a strategy to first explore reasoning capabilities (RL) and then consolidate and scale them (SFT).

3. **Data Scaling:** The later SFT stage (Pathway 4) is the only stage with explicit sample counts (600k + 200k = 800k samples in total). Together with the `Large-Scale SFT` label and the inclusion of data from other sources, this suggests a move toward broader generalization.

4. **Specialization to Generalization:** The final stage (`RL for all scenarios`) and the use of `Diverse-prompt distributions` indicate a shift from training focused purely on reasoning to creating a robust model for varied applications.

5. **Model Lineage:** The diagram explicitly traces the lineage of `DeepSeek-R1` back through `Model 3`, `Model 2`, `Model 1`, and `DeepSeek-R1-Zero`, all originating from `DeepSeek-V3-base`.
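The lineage and the RL/SFT alternation noted above can be checked mechanically by encoding the diagram's arrows as a small graph. This is a minimal sketch: the dictionary names and the `ancestors` and `methods_alternate` helpers are hypothetical. The inputs and the RL/SFT labels are read directly off the diagram's process blocks.

```python
from typing import Dict, List

# Each model mapped to the inputs drawn for its stage in the diagram.
INPUTS: Dict[str, List[str]] = {
    "DeepSeek-R1-Zero": ["DeepSeek-V3-base"],
    "Model 1": ["DeepSeek-V3-base", "DeepSeek-R1-Zero"],
    "Model 2": ["Model 1"],
    "Model 3": ["Model 2", "DeepSeek-V3-base"],
    "DeepSeek-R1": ["Model 3"],
}

# Training method that produced each model, per the diagram.
METHOD: Dict[str, str] = {
    "DeepSeek-R1-Zero": "RL",
    "Model 1": "SFT",
    "Model 2": "RL",
    "Model 3": "SFT",
    "DeepSeek-R1": "RL",
}

# Stages in left-to-right order.
STAGE_ORDER = ["DeepSeek-R1-Zero", "Model 1", "Model 2", "Model 3", "DeepSeek-R1"]


def ancestors(model: str) -> List[str]:
    """Depth-first walk over INPUTS, returning each ancestor exactly once."""
    seen: List[str] = []
    stack = list(INPUTS.get(model, []))
    while stack:
        node = stack.pop()
        if node in seen:
            continue  # DeepSeek-V3-base is reachable along several paths
        seen.append(node)
        stack.extend(INPUTS.get(node, []))
    return seen


def methods_alternate() -> bool:
    """True if no two consecutive stages use the same training method."""
    seq = [METHOD[m] for m in STAGE_ORDER]
    return all(a != b for a, b in zip(seq, seq[1:]))
```

`ancestors("DeepSeek-R1")` returns all five upstream models, including `DeepSeek-V3-base` once even though it feeds three stages. `methods_alternate()` returns `True` for the RL, SFT, RL, SFT, RL sequence.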

### Interpretation

This diagram outlines a sophisticated, multi-phase methodology for developing advanced reasoning AI. The process is not a simple, single training run but a carefully orchestrated pipeline.

* **The "Aha moment"** annotation for `DeepSeek-R1-Zero` is particularly significant. It implies that the initial pure RL phase led to an emergent, non-trivial reasoning capability, which then served as the foundational seed for all subsequent training.

* The **alternation between RL and SFT** suggests a deliberate balance: RL is used to discover novel reasoning strategies and optimize for correctness, while SFT is used to stabilize, standardize, and scale these capabilities using curated datasets.

* The **progression from `RL for reasoning` to `RL for all scenarios`** demonstrates a strategic expansion of the model's objective—from mastering a specific skill (reasoning) to performing reliably across a wide spectrum of tasks.

* The pipeline's complexity suggests that, at least in this approach, high-performance reasoning in LLMs was reached through a multi-stage, data-generative process in which each model iteration creates better data or signals for the next. The final `DeepSeek-R1` is the product of this extensive refinement cycle, designed to be both a powerful reasoner and a versatile general assistant.