# Technical Data Extraction: Comparing Test-time and Pretraining Compute

## 1. Document Overview

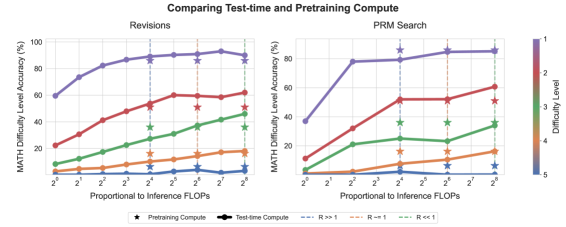

This image contains two line graphs comparing the effectiveness of increasing test-time compute versus pretraining compute across different problem difficulty levels on the MATH dataset.

* **Main Title:** Comparing Test-time and Pretraining Compute
* **Sub-charts:**
  1. **Revisions** (Left)
  2. **PRM Search** (Right)
* **Language:** English

---

## 2. Component Isolation

### A. Global Legend & Scales

* **Y-Axis (Both):** MATH Difficulty Level Accuracy (%)
  * **Range:** 0 to 100 (Left chart), 0 to 80+ (Right chart).
  * **Markers:** 0, 20, 40, 60, 80, 100.
* **X-Axis (Both):** Proportional to Inference FLOPs
  * **Scale:** Logarithmic (Base 2).
  * **Markers:** $2^0, 2^1, 2^2, 2^3, 2^4, 2^5, 2^6, 2^7, 2^8$.
* **Color Gradient Legend (Right Side):** Difficulty Level
  * **Scale:** 1 (Purple/Top) to 5 (Blue/Bottom).
  * **Mapping:**
    * Level 1: Purple
    * Level 2: Red/Pink
    * Level 3: Green
    * Level 4: Orange/Brown
    * Level 5: Blue
* **Bottom Legend (Symbols & Lines):**
  * **Star ($\star$):** Pretraining Compute
  * **Circle-Line ($\bullet$):** Test-time Compute
  * **Blue Dashed Vertical Line:** $R \gg 1$
  * **Orange Dashed Vertical Line:** $R \approx 1$
  * **Green Dashed Vertical Line:** $R \ll 1$
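To make the base-2 log scale concrete, the short sketch below lists the nine x-axis markers and their positions on the axis. It assumes only the tick labels listed above; the names `tick_values` and `tick_positions` are illustrative, and the FLOPs values are relative units because the axis is only proportional to inference FLOPs.

```python
import math

# X-axis markers as listed in the legend: 2^0 through 2^8 (relative units).
tick_values = [2 ** k for k in range(9)]

# On a base-2 log axis, a tick's position is log2 of its value,
# so each step to the right is one doubling of inference FLOPs.
tick_positions = [math.log2(v) for v in tick_values]

for value, pos in zip(tick_values, tick_positions):
    print(f"position {int(pos)}: {value} relative inference FLOPs")
```

Equal horizontal distances on either chart therefore correspond to equal multiplicative increases in compute, not equal additive ones.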

---

## 3. Data Analysis: Revisions (Left Chart)

### Trend Verification

All data series show a positive correlation between inference FLOPs and accuracy. The slope is steepest for Level 1 (Purple) and Level 2 (Red), while Level 5 (Blue) remains nearly flat near 0% accuracy.

### Data Points (Approximate Values)

| Difficulty Level (Color) | $2^0$ Accuracy | $2^8$ Accuracy | Trend Description |
| :--- | :--- | :--- | :--- |
| **1 (Purple)** | ~60% | ~90% | Rapid ascent, plateaus after $2^4$. |
| **2 (Red)** | ~22% | ~62% | Steady upward slope. |
| **3 (Green)** | ~8% | ~45% | Steady upward slope. |
| **4 (Orange)** | ~2% | ~18% | Shallow upward slope. |
| **5 (Blue)** | ~1% | ~3% | Nearly flat; very low accuracy. |
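As a rough way to compare the slopes in the Revisions table, the sketch below computes the average accuracy gain per doubling of inference compute between $2^0$ and $2^8$ (eight doublings). The inputs are the approximate readings from the table, and the names `revisions` and `gain_per_doubling` are illustrative only.

```python
# Approximate readings from the Revisions table:
# level -> (accuracy at 2^0, accuracy at 2^8), both in percent.
revisions = {1: (60, 90), 2: (22, 62), 3: (8, 45), 4: (2, 18), 5: (1, 3)}

DOUBLINGS = 8  # number of doublings between 2^0 and 2^8


def gain_per_doubling(start, end, doublings=DOUBLINGS):
    """Average accuracy gain, in percentage points, per doubling of compute."""
    return (end - start) / doublings


revisions_gains = {
    level: gain_per_doubling(start, end)
    for level, (start, end) in revisions.items()
}

for level, gain in revisions_gains.items():
    print(f"Level {level}: {gain:.3f} points per doubling")
```

This is an endpoint average, so it hides the shape of each curve. Level 1, for example, gains most of its accuracy before $2^4$ and then plateaus.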

### Pretraining Compute ($\star$) Comparison

Stars are placed at $2^4, 2^6,$ and $2^8$ for various levels.

* At $2^8$, the test-time compute (circles) generally matches or slightly exceeds the pretraining compute (stars) for levels 2, 3, and 4.

---

## 4. Data Analysis: PRM Search (Right Chart)

### Trend Verification

The "PRM Search" curves show a much sharper "elbow" than the Revisions curves: Level 1 accuracy rises steeply between $2^0$ and $2^2$ and then plateaus. The harder levels (2 to 5) grow more linearly.

### Data Points (Approximate Values)

| Difficulty Level (Color) | $2^0$ Accuracy | $2^8$ Accuracy | Trend Description |
| :--- | :--- | :--- | :--- |
| **1 (Purple)** | ~38% | ~85% | Sharp jump to $2^2$, then plateaus. |
| **2 (Red)** | ~11% | ~60% | Consistent upward slope. |
| **3 (Green)** | ~4% | ~35% | Consistent upward slope. |
| **4 (Orange)** | ~1% | ~16% | Shallow upward slope. |
| **5 (Blue)** | ~0% | ~2% | Minimal gains. |

---

## 5. Key Observations & Vertical Markers

The vertical dashed lines represent the ratio ($R$) of compute efficiency.

1. **$R \gg 1$ (Blue, $2^4$):** Indicates a regime where one form of compute is significantly more efficient.

2. **$R \approx 1$ (Orange, $2^6$):** Indicates a "break-even" point where test-time and pretraining compute yield similar returns.

3. **$R \ll 1$ (Green, $2^8$):** Indicates the opposite regime of the blue line.

**Summary of Findings:**

* Increasing test-time compute (moving right on the x-axis) consistently improves accuracy across all difficulty levels, though the returns diminish as difficulty increases (Level 5 is hardest).

* **PRM Search** plateaus earlier on Level 1 problems than **Revisions** (after roughly $2^2$ versus $2^4$), but Revisions starts from a higher baseline at $2^0$ (~60% versus ~38%) and ends slightly higher at $2^8$ (~90% versus ~85%).

* The stars ($\star$) indicate that for many difficulty levels, increasing test-time compute can achieve the same accuracy as models with significantly more pretraining compute.
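The comparison between the two methods can be checked directly against the approximate table values. The sketch below computes the total accuracy gain from $2^0$ to $2^8$ for each method and level; the dictionaries copy the readings from the two tables in this document, and all names (`revisions`, `prm_search`, `total_gain`) are illustrative.

```python
# Approximate (accuracy at 2^0, accuracy at 2^8) in percent, per difficulty level,
# copied from the Revisions and PRM Search tables.
revisions = {1: (60, 90), 2: (22, 62), 3: (8, 45), 4: (2, 18), 5: (1, 3)}
prm_search = {1: (38, 85), 2: (11, 60), 3: (4, 35), 4: (1, 16), 5: (0, 2)}


def total_gain(series):
    """Accuracy gain in percentage points from 2^0 to 2^8, per level."""
    return {level: end - start for level, (start, end) in series.items()}


revisions_gain = total_gain(revisions)
prm_gain = total_gain(prm_search)

# Levels where PRM Search gains more than Revisions over the same compute range.
prm_gains_more = [lvl for lvl in revisions if prm_gain[lvl] > revisions_gain[lvl]]

for lvl in revisions:
    print(f"Level {lvl}: Revisions +{revisions_gain[lvl]}, PRM Search +{prm_gain[lvl]}")
print("PRM Search gains more on levels:", prm_gains_more)
```

With these readings, PRM Search gains more on Levels 1 and 2, while Revisions has the higher accuracy at both endpoints for every level. The values are eyeballed from the plots, so differences of a point or two should not be over-read.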