## Diagram: Knowledge Graph Reasoning for Question Answering

### Overview

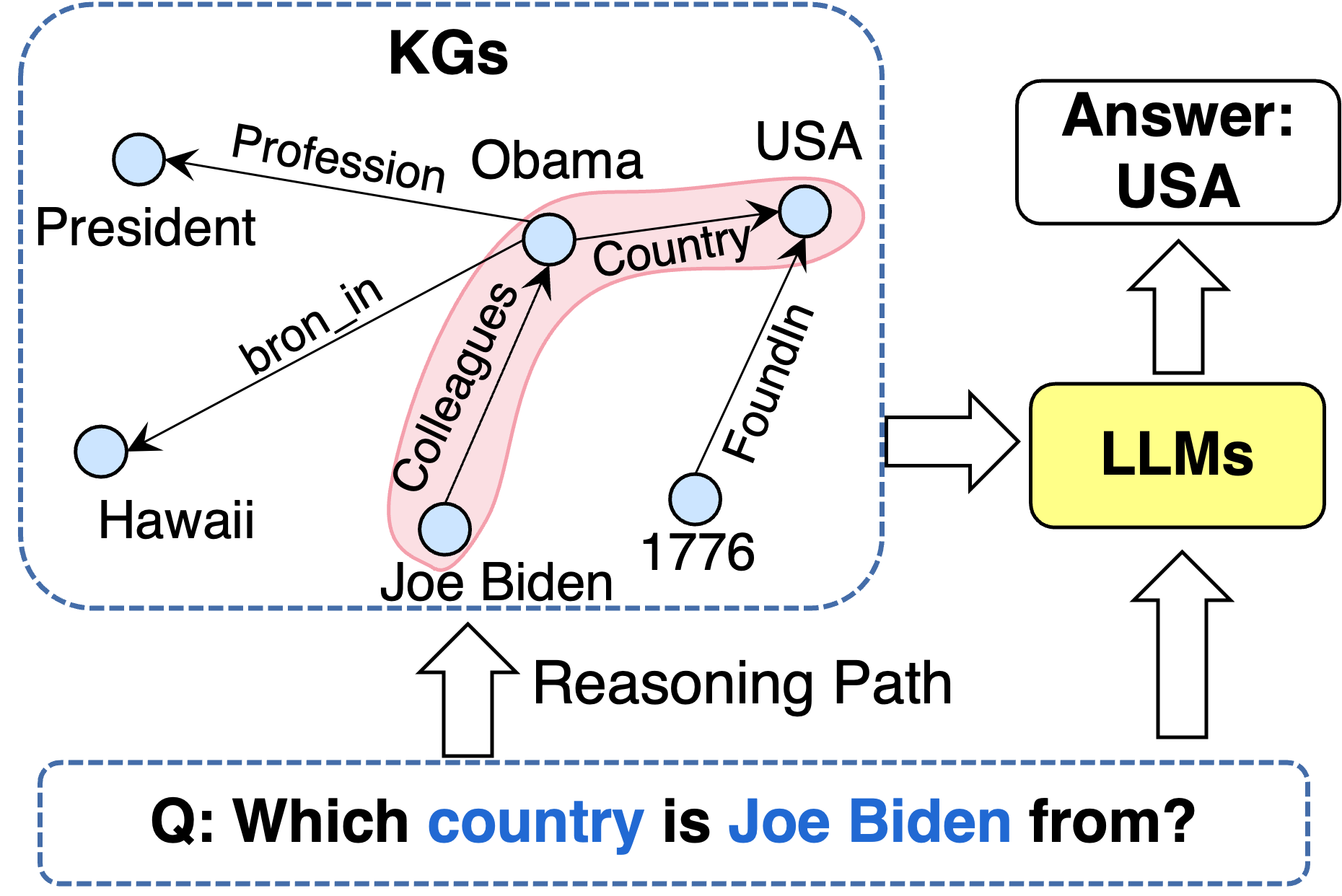

This image is a conceptual diagram illustrating how a Knowledge Graph (KG) and Large Language Models (LLMs) can be combined to answer a factual question through multi-hop reasoning. The diagram shows a specific example: answering "Which country is Joe Biden from?" by traversing relationships in a KG.

### Components/Axes

The diagram is segmented into three primary regions:

1. **Left Region (KGs):** A dashed blue box labeled "KGs" (Knowledge Graphs) containing a network of nodes (entities) and directed edges (relationships).

2. **Center-Right Region (LLMs & Answer):** A yellow rounded rectangle labeled "LLMs" and a white rounded rectangle labeled "Answer: USA".

3. **Bottom Region (Question):** A dashed blue box containing the input question.

**Spatial Layout:**

* The **KGs** box occupies the left ~60% of the image.

* The **LLMs** box is positioned to the right of the KGs box, connected by a large white arrow.

* The **Answer** box is directly above the LLMs box, connected by an upward-pointing white arrow.

* The **Question** box spans the bottom of the image, with an upward arrow labeled "Reasoning Path" pointing into the KGs box.

### Detailed Analysis

**1. Knowledge Graph (KGs) Content:**

* **Nodes (Entities):** Represented by light blue circles. The labeled nodes are:
  * `Obama`
  * `President`
  * `Hawaii`
  * `Joe Biden`
  * `USA`
  * `1776`

* **Edges (Relationships):** Represented by black arrows with text labels. The relationships are:
  * `Obama` --(Profession)--> `President`
  * `Obama` --(bron_in)--> `Hawaii` *(Note: "bron_in" appears to be a typo for "born_in")*
  * `Joe Biden` --(Colleagues)--> `Obama`
  * `Obama` --(Country)--> `USA`
  * `USA` --(FoundedIn)--> `1776`

* **Highlighted Reasoning Path:** A semi-transparent pink shape highlights a specific path through the graph: `Joe Biden` --(Colleagues)--> `Obama` --(Country)--> `USA`.
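The entities and edges listed above can be held as a list of (head, relation, tail) triples, a common way to serialize a KG. The sketch below is only an illustration, not how the diagram's system stores its graph; `TRIPLES`, `ENTITIES`, and `build_adjacency` are hypothetical names, and the `bron_in` label is normalized to `born_in`.

```python
from collections import defaultdict

# Triples transcribed from the diagram as (head, relation, tail).
# The diagram labels the second edge "bron_in"; it is normalized to "born_in" here.
TRIPLES = [
    ("Obama", "Profession", "President"),
    ("Obama", "born_in", "Hawaii"),
    ("Joe Biden", "Colleagues", "Obama"),
    ("Obama", "Country", "USA"),
    ("USA", "FoundedIn", "1776"),
]


def build_adjacency(triples):
    """Map each head entity to the (relation, tail) pairs on its outgoing edges."""
    adjacency = defaultdict(list)
    for head, relation, tail in triples:
        adjacency[head].append((relation, tail))
    return dict(adjacency)


# Every entity that appears as a head or a tail is a node.
ENTITIES = {h for h, _, _ in TRIPLES} | {t for _, _, t in TRIPLES}

adjacency = build_adjacency(TRIPLES)
print(sorted(ENTITIES))   # ['1776', 'Hawaii', 'Joe Biden', 'Obama', 'President', 'USA']
print(adjacency["Obama"])  # [('Profession', 'President'), ('born_in', 'Hawaii'), ('Country', 'USA')]
```

Only `Obama`, `Joe Biden`, and `USA` have outgoing edges, so the other three nodes are leaves of the graph.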

**2. Process Flow:**

* An upward arrow from the **Question** box points into the **KGs** box, labeled "Reasoning Path".

* A large white arrow points from the **KGs** box to the **LLMs** box.

* A white upward arrow points from the **LLMs** box to the **Answer** box.
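The three arrows can be read as a retrieve-then-generate pipeline: the question selects a reasoning path in the KG, the path is turned into text for the LLM, and the LLM returns the answer. This minimal sketch assumes the path has already been found; `format_path`, `build_prompt`, and `stub_llm` are hypothetical, and `stub_llm` merely stands in for a real model call so that the example runs offline.

```python
# Hops of the highlighted reasoning path, as (head, relation, tail) triples.
REASONING_PATH = [
    ("Joe Biden", "Colleagues", "Obama"),
    ("Obama", "Country", "USA"),
]


def format_path(path):
    """Serialize a chain of hops as 'A -rel-> B -rel-> C'."""
    parts = [path[0][0]]
    for _, relation, tail in path:
        parts.append(f"-{relation}-> {tail}")
    return " ".join(parts)


def build_prompt(question, path):
    """Combine the retrieved KG facts and the question into one prompt (the KGs -> LLMs arrow)."""
    return f"Facts: {format_path(path)}\nQuestion: {question}\nAnswer:"


def stub_llm(prompt):
    """Hypothetical placeholder for an LLM call: returns the last entity on the Facts line."""
    facts_line = prompt.splitlines()[0]
    return facts_line.rsplit("-> ", 1)[-1]


question = "Which country is Joe Biden from?"
prompt = build_prompt(question, REASONING_PATH)
print(prompt)
print(f"Answer: {stub_llm(prompt)}")  # the LLMs -> Answer arrow; prints "Answer: USA"
```

In a real system the stub would be replaced by an actual model call, and the model, not string slicing, would decide how to use the supplied facts.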

**3. Text Content:**

* **Question Box:** `Q: Which country is Joe Biden from?`
  * The words `country` and `Joe Biden` are highlighted in blue font.

* **LLMs Box:** `LLMs` (in black text on a yellow background).

* **Answer Box:** `Answer: USA` (in black text).

### Key Observations

* The diagram explicitly shows a **multi-hop reasoning** process. The answer "USA" is not directly linked to "Joe Biden" in the KG. The system must infer the connection via the intermediate node "Obama".

* The **pink highlight** visually isolates the critical reasoning chain used to derive the answer.

* The **typo "bron_in"** is present on the edge from Obama to Hawaii.

* The diagram uses **color coding**: light blue for KG entities, yellow for the LLM processing unit, and blue text to highlight key terms in the question.
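The multi-hop observation can be checked directly on the transcribed triples: there is no edge from `Joe Biden` to `USA`, and a breadth-first search over the directed edges needs two hops to reach it. This is a minimal sketch; `shortest_path` is a hypothetical helper, and BFS is simply one easy way to find such a path, not necessarily what the diagram's system uses.

```python
from collections import deque

# KG triples transcribed from the diagram ("bron_in" normalized to "born_in").
TRIPLES = [
    ("Obama", "Profession", "President"),
    ("Obama", "born_in", "Hawaii"),
    ("Joe Biden", "Colleagues", "Obama"),
    ("Obama", "Country", "USA"),
    ("USA", "FoundedIn", "1776"),
]


def shortest_path(triples, start, goal):
    """Breadth-first search over directed edges; returns the list of hops, or None."""
    adjacency = {}
    for head, relation, tail in triples:
        adjacency.setdefault(head, []).append((relation, tail))

    queue = deque([(start, [])])  # (current node, hops taken to reach it)
    seen = {start}
    while queue:
        node, path = queue.popleft()
        if node == goal:
            return path
        for relation, neighbor in adjacency.get(node, []):
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append((neighbor, path + [(node, relation, neighbor)]))
    return None


direct_edge = any(h == "Joe Biden" and t == "USA" for h, _, t in TRIPLES)
path = shortest_path(TRIPLES, "Joe Biden", "USA")
print(direct_edge)      # False
print(len(path), path)  # 2 [('Joe Biden', 'Colleagues', 'Obama'), ('Obama', 'Country', 'USA')]
```

Because the edges are directed, the reverse query from `USA` back to `Joe Biden` returns `None`.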

### Interpretation

This diagram serves as a pedagogical or conceptual model for a **neuro-symbolic AI system**. It demonstrates how structured knowledge (the KG) can be leveraged by a statistical model (the LLM) to answer questions through explicit, multi-step reasoning.

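* **What it demonstrates:** The system does not simply retrieve a stored fact; it traverses the graph in three steps: 1) identify the subject (`Joe Biden`); 2) follow a connecting relationship (`Colleagues`) to another entity (`Obama`); 3) from that entity, follow the target relationship (`Country`) to reach the answer (`USA`). This mirrors step-by-step human reasoning, but the chain is plausible rather than strictly deductive: being colleagues does not guarantee that two people share a country, so the path supports the answer without logically entailing it.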

* **Relationship between elements:** The KG provides the factual backbone and relational structure. The LLM acts as the reasoning engine that interprets the question, navigates the graph, and synthesizes the final answer. The "Reasoning Path" arrow signifies that the question triggers a specific search strategy within the KG.

* **Notable Anomaly:** The inclusion of the node `1776` and the `FoundedIn` relationship, while factually correct for the USA, is **extraneous to answering the specific question**. This highlights a challenge in KG-based QA: distinguishing relevant from irrelevant subgraphs. The system must focus on the path leading to the answer and ignore tangential facts.

* **Underlying Message:** The diagram argues for the value of combining explicit, symbolic knowledge representations (KGs) with the flexible processing power of LLMs to achieve more accurate, explainable, and logically sound question answering compared to using an LLM alone, which might rely solely on parametric knowledge.
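The three traversal steps and the relevance problem described above can be combined in one small sketch. `parse_question` is a hypothetical, rule-based stand-in for the LLM's interpretation of the question: it picks the KG entity and relation names that appear in the question (here `Joe Biden` and `country`, the two words highlighted in the diagram). `find_paths` then runs a depth-first search for simple paths from that entity whose last hop uses the target relation; edges that lie on no such path, including `USA` --(FoundedIn)--> `1776`, are pruned. Both helpers are illustrative assumptions, not the method behind the diagram.

```python
# KG triples transcribed from the diagram ("bron_in" normalized to "born_in").
TRIPLES = [
    ("Obama", "Profession", "President"),
    ("Obama", "born_in", "Hawaii"),
    ("Joe Biden", "Colleagues", "Obama"),
    ("Obama", "Country", "USA"),
    ("USA", "FoundedIn", "1776"),
]


def parse_question(question, triples):
    """Hypothetical rule-based stand-in for the LLM: find the topic entity and target relation."""
    q = question.lower()
    entities = {h for h, _, _ in triples} | {t for _, _, t in triples}
    relations = sorted({r for _, r, _ in triples})
    # Step 1: the longest entity name mentioned in the question is the subject.
    topic = max((e for e in entities if e.lower() in q), key=len)
    # A relation name mentioned in the question (first alphabetically, if several) ends the path.
    target = next(r for r in relations if r.lower() in q)
    return topic, target


def find_paths(triples, topic, target_relation, max_hops=3):
    """Depth-first search for simple paths from `topic` whose last hop is `target_relation`."""
    found = []
    stack = [(topic, [])]  # (current node, hops taken so far)
    while stack:
        node, path = stack.pop()
        if len(path) >= max_hops:
            continue
        visited = {topic} | {tail for _, _, tail in path}
        for head, relation, tail in triples:
            if head != node or tail in visited:
                continue
            new_path = path + [(head, relation, tail)]
            if relation == target_relation:
                found.append(new_path)  # Steps 2-3: connecting hop(s), then the target hop
            stack.append((tail, new_path))
    return found


question = "Which country is Joe Biden from?"
topic, target = parse_question(question, TRIPLES)
paths = find_paths(TRIPLES, topic, target)

# Edges on no answer-bearing path are treated as irrelevant to this question.
relevant = {hop for path in paths for hop in path}
pruned = [t for t in TRIPLES if t not in relevant]

print(topic, target)    # Joe Biden Country
print(paths[0][-1][2])  # USA
print(pruned)
```

With a real LLM, the question would be interpreted by the model rather than by substring matching; `max_hops` bounds how far the search wanders from the subject, which keeps tangential facts such as the founding year out of the candidate paths.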