TECHNICAL ASSET FINGERPRINT

3c9af4ab2a9690944e50055e

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

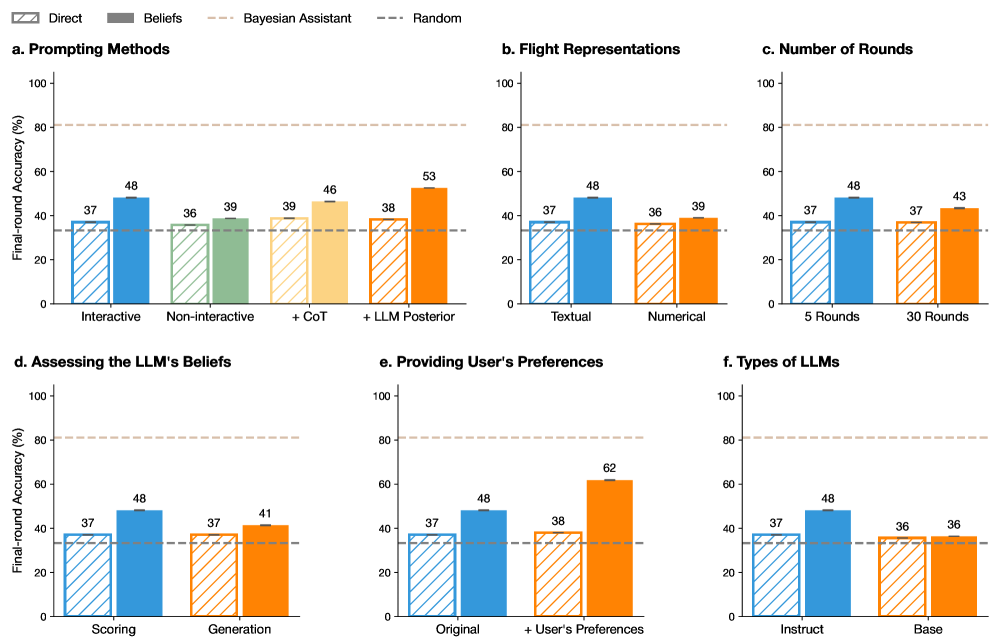

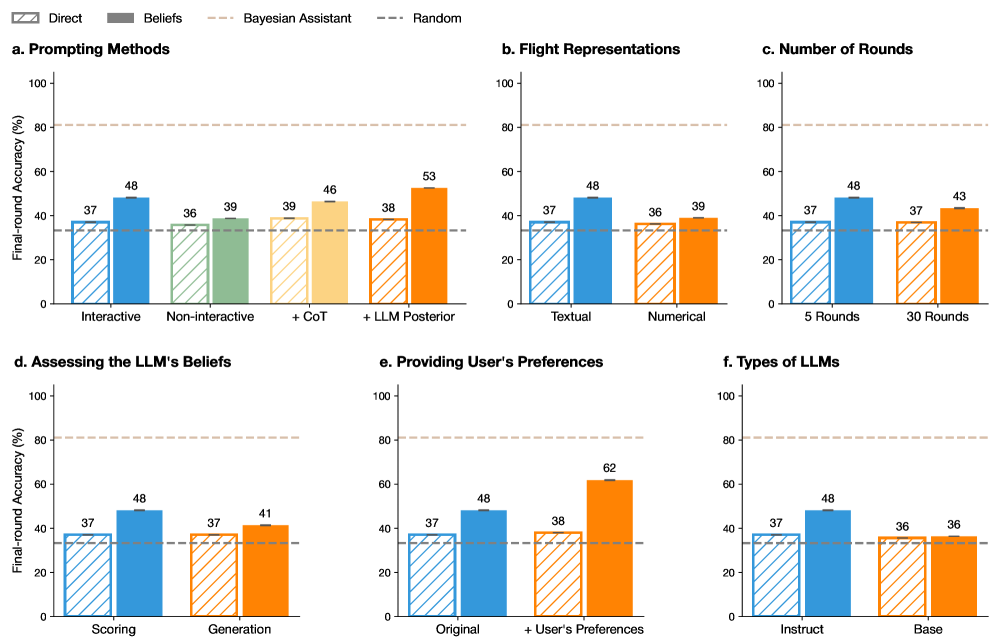

## Bar Charts: Final-Round Accuracy Comparison

### Overview

The image presents six bar charts comparing the final-round accuracy (%) of different methods and parameters related to Large Language Models (LLMs). The charts compare different prompting methods, flight representations, number of rounds, ways of assessing LLM beliefs, methods of providing user preferences, and types of LLMs. Each chart compares the "Direct" method (striped bars) with other methods (solid bars) using different colors. A horizontal dashed line represents a "Random" baseline.

### Components/Axes

* **Y-axis (all charts):** "Final-round Accuracy (%)", ranging from 0 to 100. Tick marks are not explicitly labeled, but the scale is linear.

* **Legend (top):**

* "Direct": Striped bars

* "Beliefs": Solid bars (color varies by chart)

* "Bayesian Assistant": Listed in the legend but not drawn as bars; it likely corresponds to the upper horizontal dashed line (approximately 82%).

* "Random": Dashed horizontal line (approximately 33%).

* **Horizontal dashed lines:** Each chart has two horizontal dashed lines. The lower one, at approximately 33%, is labeled "Random" in the legend. The upper one, at approximately 82%, carries no label on the chart itself and likely marks the "Bayesian Assistant" reference.

* **Chart Titles:** Each chart has a title (a, b, c, d, e, f) indicating the comparison being made.

### Detailed Analysis

**a. Prompting Methods**

* X-axis: Prompting Methods (Interactive, Non-interactive, +CoT, +LLM Posterior)

* "Direct" (striped blue):

* Interactive: 37%

* Non-interactive: 36%

* +CoT: 39%

* +LLM Posterior: 38%

* "Beliefs" (solid):

* Interactive (solid blue): 48%

* Non-interactive (solid green): 39%

* +CoT (solid yellow): 46%

* +LLM Posterior (solid orange): 53%

* Trend: The "Beliefs" method outperforms the "Direct" method for all four prompting methods, by 3 to 15 percentage points.

**b. Flight Representations**

* X-axis: Flight Representations (Textual, Numerical)

* "Direct" (striped blue):

* Textual: 37%

* Numerical: 36%

* "Beliefs" (solid):

* Textual (solid blue): 48%

* Numerical (solid orange): 39%

* Trend: The "Beliefs" method outperforms the "Direct" method for both textual and numerical flight representations.

**c. Number of Rounds**

* X-axis: Number of Rounds (5 Rounds, 30 Rounds)

* "Direct" (striped blue):

* 5 Rounds: 37%

* 30 Rounds: 37%

* "Beliefs" (solid):

* 5 Rounds (solid blue): 48%

* 30 Rounds (solid orange): 43%

* Trend: The "Beliefs" method outperforms the "Direct" method for both 5 and 30 rounds.

**d. Assessing the LLM's Beliefs**

* X-axis: Assessing the LLM's Beliefs (Scoring, Generation)

* "Direct" (striped blue):

* Scoring: 37%

* Generation: 37%

* "Beliefs" (solid):

* Scoring (solid blue): 48%

* Generation (solid orange): 41%

* Trend: The "Beliefs" method outperforms the "Direct" method for both scoring and generation.

**e. Providing User's Preferences**

* X-axis: Providing User's Preferences (Original, + User's Preferences)

* "Direct" (striped blue):

* Original: 37%

* + User's Preferences: 38%

* "Beliefs" (solid):

* Original (solid blue): 48%

* + User's Preferences (solid orange): 62%

* Trend: The "Beliefs" method outperforms the "Direct" method for both original and user-preference-enhanced methods. The largest performance gap is observed when user preferences are included.

**f. Types of LLMs**

* X-axis: Types of LLMs (Instruct, Base)

* "Direct" (striped blue):

* Instruct: 37%

* Base: 36%

* "Beliefs" (solid):

* Instruct (solid blue): 48%

* Base (solid orange): 36%

* Trend: The "Beliefs" method outperforms the "Direct" method for the "Instruct" LLM type, but performs similarly for the "Base" LLM type.

### Key Observations

* The "Beliefs" method outperforms the "Direct" method in every condition except the "Base" LLM type, where both score 36%.

* The largest performance difference is observed in "Providing User's Preferences" when user preferences are included (62% vs. 38%, a 24-point gap).

* The "Beliefs" method has an 11-point advantage with the "Instruct" LLM type (48% vs. 37%) and no advantage with the "Base" LLM type (36% vs. 36%).

* The "Random" baseline is consistently around 33% accuracy.
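The observations above can be checked against the transcribed values. The following is a minimal sketch: the numbers are copied from the Detailed Analysis of this description, while the names `readings`, `gaps`, `largest_gap`, and `ties_or_losses` are hypothetical helpers introduced only for illustration.

```python
# (Direct %, Beliefs %) per condition, transcribed from the Detailed Analysis above.
# The dictionary layout and the helper names are illustrative assumptions.
readings = {
    ("a", "Interactive"): (37, 48),
    ("a", "Non-interactive"): (36, 39),
    ("a", "+CoT"): (39, 46),
    ("a", "+LLM Posterior"): (38, 53),
    ("b", "Textual"): (37, 48),
    ("b", "Numerical"): (36, 39),
    ("c", "5 Rounds"): (37, 48),
    ("c", "30 Rounds"): (37, 43),
    ("d", "Scoring"): (37, 48),
    ("d", "Generation"): (37, 41),
    ("e", "Original"): (37, 48),
    ("e", "+ User's Preferences"): (38, 62),
    ("f", "Instruct"): (37, 48),
    ("f", "Base"): (36, 36),
}


def gaps(table):
    """Return Beliefs minus Direct, in percentage points, for each condition."""
    return {key: beliefs - direct for key, (direct, beliefs) in table.items()}


def largest_gap(table):
    """Return the condition with the largest Beliefs-over-Direct gap and its size."""
    g = gaps(table)
    key = max(g, key=g.get)
    return key, g[key]


def ties_or_losses(table):
    """Return the conditions where Beliefs does not beat Direct."""
    return [key for key, gap in gaps(table).items() if gap <= 0]


if __name__ == "__main__":
    for (panel, condition), gap in gaps(readings).items():
        print(f"{panel}. {condition:<22} {gap:+d} pts")
    print("largest gap:", largest_gap(readings))
    print("no improvement:", ties_or_losses(readings))
```

Run as a script, this lists the per-condition gap and reports which condition has the largest gap and which conditions show no improvement, so each bullet can be compared against the numbers directly.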

### Interpretation

The data suggests that the "Beliefs" method generally yields higher final-round accuracy than the "Direct" method. The improvement is largest when user preferences are provided (62% vs. 38%) and appears with the "Instruct" LLM type but not with the "Base" type. This indicates that using the LLM's beliefs, especially in conjunction with user-specific information, can substantially improve performance. Both methods stay above the "Random" baseline of roughly 33%, although "Direct" exceeds it by only 3 to 6 points. The "Bayesian Assistant" listed in the legend is not drawn as bars; it likely serves as a comparative benchmark and may correspond to the unlabeled dashed line at approximately 82%.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Bar Charts: LLM Accuracy Across Different Conditions

### Overview

The image presents six bar charts (labeled a through f) comparing the final-round accuracy (%) of a Large Language Model (LLM) under various conditions. Each chart explores a different aspect of the LLM's performance, including prompting methods, flight representations, number of rounds, assessing LLM beliefs, providing user preferences, and types of LLMs. Four data series are represented in each chart: "Direct" (blue), "Beliefs" (light blue), "Bayesian Assistant" (orange), and "Random" (yellow).

### Components/Axes

* **Y-axis:** Final-round Accuracy (%), ranging from 0 to 100.

* **X-axis:** Varies depending on the chart, representing different conditions or categories.

* **Legend:** Located in the top-left corner, identifying the data series colors:

* Direct (Blue)

* Beliefs (Light Blue)

* Bayesian Assistant (Orange)

* Random (Yellow)

* **Charts:** Arranged in a 2x3 grid.

### Detailed Analysis

**a. Prompting Methods**

* X-axis categories: Interactive, Non-Interactive, + CoT, + LLM Posterior.

* Direct: 37%, 36%, 39%, 53% - Trend: Generally stable, with a significant increase at "+ LLM Posterior".

* Beliefs: 48%, 39%, 46%, 38% - Trend: Starts high, dips, then recovers.

* Bayesian Assistant: N/A, N/A, 39%, 38% - Trend: Data only available for the last two categories, relatively stable.

* Random: N/A, N/A, N/A, N/A - Trend: No data.

**b. Flight Representations**

* X-axis categories: Textual, Numerical.

* Direct: 48%, 37% - Trend: Decreases from Textual to Numerical.

* Beliefs: 48%, 36% - Trend: Decreases from Textual to Numerical.

* Bayesian Assistant: N/A, 39% - Trend: Data only available for Numerical.

* Random: N/A, N/A - Trend: No data.

**c. Number of Rounds**

* X-axis categories: 5 Rounds, 30 Rounds.

* Direct: 48%, 37% - Trend: Decreases from 5 to 30 rounds.

* Beliefs: 37%, 37% - Trend: Remains constant.

* Bayesian Assistant: N/A, 43% - Trend: Data only available for 30 rounds.

* Random: N/A, N/A - Trend: No data.

**d. Assessing the LLM's Beliefs**

* X-axis categories: Scoring, Generation.

* Direct: 37%, 48% - Trend: Increases from Scoring to Generation.

* Beliefs: 48%, 37% - Trend: Decreases from Scoring to Generation.

* Bayesian Assistant: N/A, 41% - Trend: Data only available for Generation.

* Random: N/A, N/A - Trend: No data.

**e. Providing User's Preferences**

* X-axis categories: Original, + User's Preferences.

* Direct: 37%, 62% - Trend: Significant increase with user preferences.

* Beliefs: 48%, 38% - Trend: Decreases with user preferences.

* Bayesian Assistant: N/A, N/A - Trend: No data.

* Random: N/A, N/A - Trend: No data.

**f. Types of LLMs**

* X-axis categories: Instruct, Base.

* Direct: 37%, 48% - Trend: Increases from Instruct to Base.

* Beliefs: 36%, 36% - Trend: Remains constant.

* Bayesian Assistant: N/A, 36% - Trend: Data only available for Base.

* Random: N/A, N/A - Trend: No data.

### Key Observations

* The "Direct" method consistently shows moderate accuracy across most conditions.

* The "Beliefs" method often performs well initially but can decline in certain scenarios.

* The "Bayesian Assistant" generally shows promising results when data is available, but is often missing data.

* The "Random" method consistently lacks data.

* Providing user preferences (chart e) leads to a substantial increase in accuracy for the "Direct" method.

* "+ LLM Posterior" prompting method (chart a) shows the highest accuracy for the "Direct" method.

### Interpretation

The data suggests that the LLM's performance is highly sensitive to the prompting method and the context provided. The significant improvement observed when incorporating user preferences indicates that aligning the LLM with user expectations is crucial for achieving higher accuracy. The varying performance of the "Beliefs" method suggests that the LLM's internal beliefs may not always align with the desired outcome. The limited data for the "Bayesian Assistant" and "Random" methods hinders a comprehensive comparison. The charts collectively demonstrate the importance of carefully designing prompts and leveraging external information (like user preferences) to optimize LLM performance. The absence of data for the "Random" method suggests it may not be a viable approach or was not tested in these conditions. The differences in accuracy between "Instruct" and "Base" LLM types (chart f) suggest that the type of LLM affects performance. Overall, the LLM's accuracy is not uniform across conditions, highlighting the need for approaches tailored to the specific task and context.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Multi-Panel Bar Chart: Final-Round Accuracy Across Experimental Conditions

### Overview

The image displays a set of six bar charts (labeled a through f) comparing the "Final-round Accuracy (%)" of different methods or conditions in what appears to be a study on Large Language Model (LLM) performance, likely in a Bayesian or interactive reasoning task. Each subplot compares two primary conditions ("Direct" and "Beliefs") across different experimental variables. Two horizontal dashed lines serve as baselines across all charts.

### Components/Axes

* **Global Y-Axis:** "Final-round Accuracy (%)" with a scale from 0 to 100, marked at intervals of 20 (0, 20, 40, 60, 80, 100).

* **Global Legend (Top Center):**

* **Direct:** Represented by striped bars.

* **Beliefs:** Represented by solid-colored bars.

* **Bayesian Assistant:** A horizontal dashed line in light brown/tan, positioned at approximately 80% accuracy.

* **Random:** A horizontal dashed line in dark gray, positioned at approximately 33% accuracy.

* **Subplot Titles:**

* a. Prompting Methods

* b. Flight Representations

* c. Number of Rounds

* d. Assessing the LLM's Beliefs

* e. Providing User's Preferences

* f. Types of LLMs

* **X-Axis Categories:** Each subplot has two primary categories on its x-axis, with each category containing a pair of bars (Direct and Beliefs).

### Detailed Analysis

**Subplot a. Prompting Methods**

* **Categories:** "Interactive" and "Non-interactive".

* **Data Points:**

* Interactive: Direct = 37%, Beliefs = 48% (Blue bars).

* Non-interactive: Direct = 36%, Beliefs = 39% (Green bars).

* **Trend:** The "Beliefs" condition outperforms "Direct" in both cases, with a larger gain in the Interactive setting.

**Subplot b. Flight Representations**

* **Categories:** "Textual" and "Numerical".

* **Data Points:**

* Textual: Direct = 37%, Beliefs = 48% (Blue bars).

* Numerical: Direct = 36%, Beliefs = 39% (Orange bars).

* **Trend:** Similar pattern to subplot (a). The "Beliefs" condition shows a significant improvement with Textual representation.

**Subplot c. Number of Rounds**

* **Categories:** "5 Rounds" and "30 Rounds".

* **Data Points:**

* 5 Rounds: Direct = 37%, Beliefs = 48% (Blue bars).

* 30 Rounds: Direct = 37%, Beliefs = 43% (Orange bars).

* **Trend:** Accuracy for the "Beliefs" condition is higher with fewer rounds (5 vs. 30).

**Subplot d. Assessing the LLM's Beliefs**

* **Categories:** "Scoring" and "Generation".

* **Data Points:**

* Scoring: Direct = 37%, Beliefs = 48% (Blue bars).

* Generation: Direct = 37%, Beliefs = 41% (Orange bars).

* **Trend:** The "Beliefs" condition yields higher accuracy when assessed via "Scoring" compared to "Generation".

**Subplot e. Providing User's Preferences**

* **Categories:** "Original" and "+ User's Preferences".

* **Data Points:**

* Original: Direct = 37%, Beliefs = 48% (Blue bars).

* + User's Preferences: Direct = 38%, Beliefs = 62% (Orange bars).

* **Trend:** This subplot shows the most dramatic improvement. Adding user preferences boosts the "Beliefs" condition accuracy to 62%, the highest value across all charts.

**Subplot f. Types of LLMs**

* **Categories:** "Instruct" and "Base".

* **Data Points:**

* Instruct: Direct = 37%, Beliefs = 48% (Blue bars).

* Base: Direct = 36%, Beliefs = 36% (Orange bars).

* **Trend:** The "Beliefs" condition provides a substantial benefit for the "Instruct" model type but no benefit for the "Base" model type.

### Key Observations

1. **Consistent Baseline:** The "Direct" method (striped bars) shows remarkably consistent accuracy, hovering between 36-38% across all conditions, closely aligning with the "Random" baseline (~33%).

2. **Beliefs Condition Impact:** The "Beliefs" condition (solid bars) improves upon the "Direct" method in every listed condition except the "Base" LLM type in subplot (f), where both are equal at 36%.

3. **Peak Performance:** The highest observed accuracy (62%) is achieved in subplot (e) under the "+ User's Preferences" condition using the "Beliefs" method.

4. **Bayesian Assistant Benchmark:** The "Bayesian Assistant" baseline (~80%) remains significantly above the performance of all tested LLM conditions, indicating a substantial gap between the model's performance and this theoretical or ideal benchmark.

5. **Color Coding:** The bar colors are consistent across subplots for the same experimental variable (e.g., blue for Interactive/Textual/5 Rounds/Scoring/Original/Instruct), aiding visual comparison.

### Interpretation

The data suggests that explicitly modeling or incorporating the LLM's "Beliefs" into the prompting or reasoning process ("Beliefs" condition) consistently improves final-round accuracy compared to a "Direct" prompting approach. This improvement is robust across various factors like interactivity, data representation, and assessment method.

The most significant finding is the powerful synergistic effect of combining the "Beliefs" approach with the provision of user preferences (subplot e), which yields the highest accuracy. This implies that tailoring the interaction to align with user-specific goals or biases greatly enhances the model's performance in this task.

However, the benefit of the "Beliefs" approach is not universal; it fails to improve performance for the "Base" LLM (subplot f), suggesting that the type of model (Instruct vs. Base) is a critical factor for this method to be effective. The consistent underperformance relative to the "Bayesian Assistant" benchmark (at most 62% against roughly 80%) highlights room for improvement in LLM-based reasoning systems. The charts collectively argue for the value of belief-aware and user-aware prompting strategies in complex reasoning tasks.
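One way to make the distance to the two baselines concrete is to express each accuracy as a fraction of the range between "Random" and "Bayesian Assistant". This is a minimal sketch and not a metric used in the source: the baseline values are the approximate readings given in this description (~33% and ~80%), and `gap_closed` is a hypothetical helper named here for illustration.

```python
# Approximate baselines as read off the chart in the description above.
RANDOM_BASELINE = 33.0      # "Random" dashed line, ~33%
BAYESIAN_ASSISTANT = 80.0   # "Bayesian Assistant" dashed line, ~80%


def gap_closed(accuracy, floor=RANDOM_BASELINE, ceiling=BAYESIAN_ASSISTANT):
    """Fraction of the floor-to-ceiling range that `accuracy` covers.

    0.0 means chance-level accuracy, 1.0 means matching the upper baseline.
    This normalization is an illustrative assumption, not the source's metric.
    """
    return (accuracy - floor) / (ceiling - floor)


# Accuracies transcribed from the Detailed Analysis above.
examples = {
    "Direct, Original": 37,
    "Beliefs, Original": 48,
    "Beliefs, + User's Preferences": 62,
}

if __name__ == "__main__":
    for label, accuracy in examples.items():
        print(f"{label:<32} {gap_closed(accuracy):.2f}")
```

Under these approximate baselines, the best reported result (62%) covers roughly 0.62 of the range, the default "Beliefs" result (48%) roughly 0.32, and "Direct" (37%) less than 0.1. Because both baselines are read off the chart by eye, the fractions are only indicative.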

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Bar Chart Analysis: Prompting Methods and LLM Performance

### Overview

The image contains six grouped bar charts (a-f) comparing final-round accuracy (%) across different prompting methods, flight representations, rounds, belief assessments, user preferences, and LLM types. All charts share a consistent y-axis (0-100% accuracy) and x-axis categories specific to each subplot. A dashed line at ~37% represents a "Random" baseline, while a dotted line at ~80% marks an upper threshold.

---

### Components/Axes

**Legend (Top-left):**

- **Direct** (blue with diagonal stripes)

- **Beliefs** (green with diagonal stripes)

- **Bayesian Assistant** (orange with diagonal stripes)

- **Random** (gray dashed line at ~37%)

**Subplot Labels:**

- **a. Prompting Methods**

- X-axis: Interactive, Non-interactive, + CoT, + LLM Posterior

- **b. Flight Representations**

- X-axis: Textual, Numerical

- **c. Number of Rounds**

- X-axis: 5 Rounds, 30 Rounds

- **d. Assessing the LLM's Beliefs**

- X-axis: Scoring, Generation

- **e. Providing User's Preferences**

- X-axis: Original, + User's Preferences

- **f. Types of LLMs**

- X-axis: Instruct, Base

---

### Detailed Analysis

#### a. Prompting Methods

- **Interactive**:

- Direct: 37%

- Beliefs: 48%

- **Non-interactive**:

- Direct: 36%

- Beliefs: 39%

- **+ CoT**:

- Direct: 39%

- Beliefs: 46%

- **+ LLM Posterior**:

- Direct: 38%

- Beliefs: 53%

#### b. Flight Representations

- **Textual**:

- Direct: 37%

- Beliefs: 48%

- **Numerical**:

- Direct: 36%

- Beliefs: 39%

#### c. Number of Rounds

- **5 Rounds**:

- Direct: 37%

- Beliefs: 48%

- **30 Rounds**:

- Direct: 37%

- Beliefs: 43%

#### d. Assessing the LLM's Beliefs

- **Scoring**:

- Direct: 37%

- Beliefs: 48%

- **Generation**:

- Direct: 37%

- Beliefs: 41%

#### e. Providing User's Preferences

- **Original**:

- Direct: 37%

- Beliefs: 48%

- **+ User's Preferences**:

- Direct: 38%

- Beliefs: 62%

#### f. Types of LLMs

- **Instruct**:

- Direct: 37%

- Beliefs: 48%

- **Base**:

- Direct: 36%

- Beliefs: 36%

---

### Key Observations

1. **Beliefs vs. Direct**: "Beliefs" outperforms "Direct" prompting in every condition except the "Base" LLM in subplot **f**, where both score 36%. The largest gap is in **e. Providing User's Preferences** (62% vs. 38%).

2. **User Preferences Impact**: Adding user preferences in subplot **e** raises "Beliefs" accuracy from 48% to 62%, the largest jump in the figure, suggesting that personalization significantly enhances performance.

3. **LLM Type Dependence**: In subplot **f**, "Beliefs" reaches 48% with the "Instruct" LLM but only 36% with the "Base" LLM, while "Direct" barely changes (37% vs. 36%).

4. **No Gain from More Rounds**: Increasing rounds from 5 to 30 in subplot **c** lowers "Beliefs" accuracy (48% → 43%) and leaves "Direct" unchanged at 37%.

5. **Random Baseline**: All "Direct" results cluster near the 37% Random line (36-39%), while "Beliefs" exceeds it in every condition except the "Base" LLM (36%).

---

### Interpretation

- **Effective Strategies**:

- **Beliefs prompting** (e.g., incorporating LLM-generated beliefs) and **user preference integration** are the most impactful methods, outperforming direct prompting by 3 to 24 percentage points in every condition except the "Base" LLM.

- **Flight representations** (Textual vs. Numerical) matter mainly for Beliefs: Textual is 9 points higher (48% vs. 39%), while Direct is nearly unchanged (37% vs. 36%).

- **LLM Type Matters**: The "Beliefs" gain appears with the "Instruct" LLM (48% vs. 37%) but not with the "Base" LLM (36% vs. 36%), so the benefit of the prompting method depends on the model type.

- **No Benefit from Extended Rounds**: 30 rounds give lower "Beliefs" accuracy than 5 rounds (43% vs. 48%), so longer interactions do not pay off here.

- **Highest Value**: The 62% accuracy in subplot **e** (+ User's Preferences) is the highest value in the figure, warranting deeper investigation into why personalization drives such high performance.

This analysis underscores the importance of belief-aware prompting and user-centric customization in optimizing LLM performance, while indicating that additional interaction rounds alone do not raise accuracy and that the gain depends on the LLM type.
