## Neural Network Architecture Diagram: Dense Convolutional Network with Multi-Scale Filters

### Overview

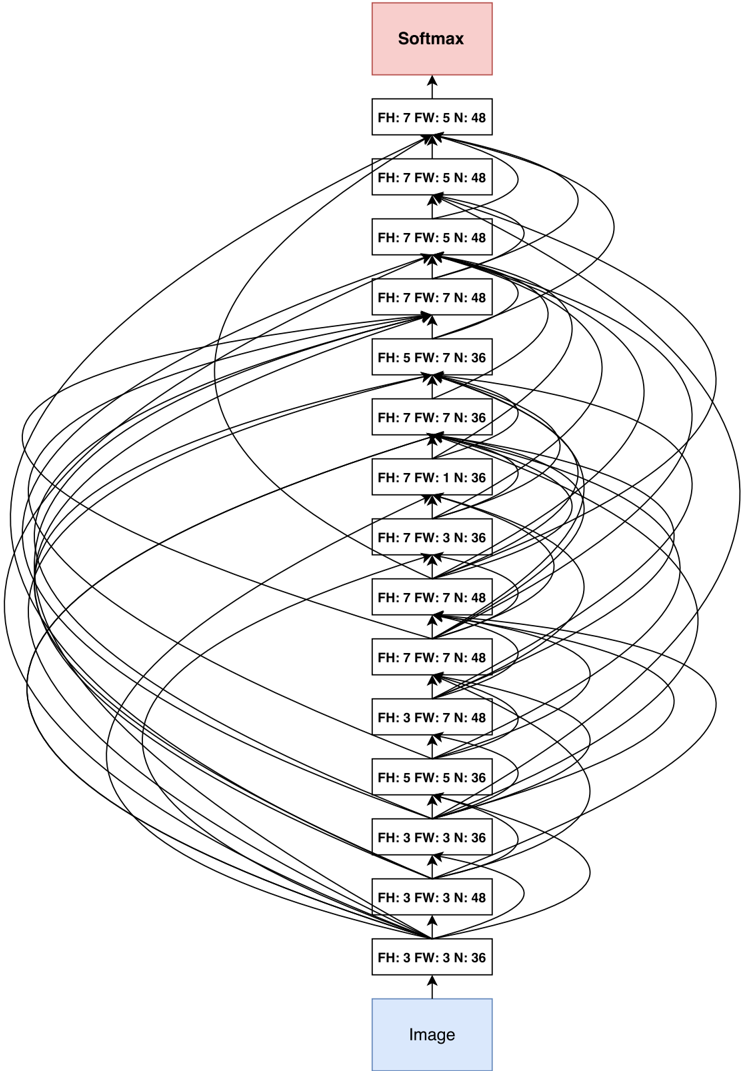

The image displays a schematic diagram of a deep convolutional neural network architecture. The flow of data is vertical, originating from an input "Image" block at the bottom and culminating in a "Softmax" output block at the top. The core of the architecture consists of 15 intermediate processing layers, each represented by a white rectangular box containing specific parameters. A defining characteristic is the dense connectivity pattern, where each layer receives inputs from multiple preceding layers, indicated by numerous curved, overlapping arrows.

### Components

* **Input Block:** A light blue rectangle at the bottom center labeled "Image".

* **Output Block:** A light pink rectangle at the top center labeled "Softmax".

* **Intermediate Layers:** 15 white rectangular boxes stacked vertically between the input and output. Each box contains text specifying three parameters:

    * **FH:** Filter Height

    * **FW:** Filter Width

    * **N:** Number of Filters (Output Channels)

* **Connections:** Black arrows indicate the flow of data. Straight vertical arrows connect consecutive layers. A complex web of curved arrows originates from the output of lower layers and feeds into the inputs of many higher layers, illustrating a dense connectivity scheme.

### Detailed Analysis

**Layer Parameters (from bottom to top):**

1. `FH: 3 FW: 3 N: 36`

2. `FH: 3 FW: 3 N: 48`

3. `FH: 3 FW: 3 N: 36`

4. `FH: 5 FW: 5 N: 36`

5. `FH: 3 FW: 7 N: 48`

6. `FH: 7 FW: 7 N: 48`

7. `FH: 7 FW: 7 N: 48`

8. `FH: 7 FW: 3 N: 36`

9. `FH: 7 FW: 1 N: 36`

10. `FH: 7 FW: 7 N: 36`

11. `FH: 5 FW: 7 N: 36`

12. `FH: 7 FW: 7 N: 48`

13. `FH: 7 FW: 5 N: 48`

14. `FH: 7 FW: 5 N: 48`

15. `FH: 7 FW: 5 N: 48`
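
The layer list above can be captured as plain data, which makes it easy to tally kernel shapes and filter counts. The snippet below is a minimal sketch: the `LAYERS` name and the tuple layout `(FH, FW, N)` are illustrative choices, not something shown in the diagram.

```python
from collections import Counter

# (FH, FW, N) for layers 1..15, bottom to top, transcribed from the list above.
# The variable name and tuple layout are illustrative, not part of the diagram.
LAYERS = [
    (3, 3, 36), (3, 3, 48), (3, 3, 36), (5, 5, 36), (3, 7, 48),
    (7, 7, 48), (7, 7, 48), (7, 3, 36), (7, 1, 36), (7, 7, 36),
    (5, 7, 36), (7, 7, 48), (7, 5, 48), (7, 5, 48), (7, 5, 48),
]

# How often each kernel shape (FH, FW) occurs.
shape_counts = Counter((fh, fw) for fh, fw, _ in LAYERS)

# How often each filter count N occurs.
filter_counts = Counter(n for _, _, n in LAYERS)

for shape, count in sorted(shape_counts.items()):
    print(f"{shape[0]}x{shape[1]}: {count} layer(s)")
print(dict(filter_counts))
```

Only two values of `N` occur in the list, and the tally shows how the 15 layers are split between them.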

**Spatial Grounding & Flow:**

* The "Image" input is at the absolute bottom.

* The "Softmax" output is at the absolute top.

* The 15 intermediate layers are stacked centrally in a vertical column.

* The dense connection arrows create a visually complex, web-like pattern primarily on the left and right sides of the central column, linking lower layers to many higher layers. The diagram does not label individual connections, so the exact mapping cannot be read off directly. The pattern appears consistent with dense-block connectivity, in which layer `i` receives the feature maps of all preceding layers `0..i-1` as input.
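
To make the cost of such a pattern concrete, the sketch below computes how many input channels each layer would see if connectivity were fully dense. It rests on assumptions the diagram does not confirm: a 3-channel (RGB) image, inputs merged by channel-wise concatenation, and every layer connected to the image and to all earlier layers. The names `IMAGE_CHANNELS`, `FILTERS`, and `dense_input_channels` are hypothetical.

```python
# Assumption: RGB input. The diagram does not state the image channel count.
IMAGE_CHANNELS = 3

# N (output channels) for layers 1..15, bottom to top, from the layer list.
FILTERS = [36, 48, 36, 36, 48, 48, 48, 36, 36, 36, 36, 48, 48, 48, 48]


def dense_input_channels(filters, image_channels):
    """Input channels per layer, assuming each layer concatenates the image
    and the outputs of all earlier layers (full dense connectivity)."""
    totals = []
    running = image_channels
    for n in filters:
        totals.append(running)  # channels available before this layer runs
        running += n            # this layer's output joins the pool
    return totals


in_channels = dense_input_channels(FILTERS, IMAGE_CHANNELS)

# Layer i (1-based) would have i incoming connections: the image plus i-1 layers.
num_connections = sum(range(1, len(FILTERS) + 1))

for i, c in enumerate(in_channels, start=1):
    print(f"layer {i:2d}: {c} input channels")
print("total incoming connections:", num_connections)
```

If the real network uses only a subset of these connections, or merges inputs by summation rather than concatenation, the per-layer numbers would be smaller.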

### Key Observations

1. **Variable Filter Sizes:** The network employs a wide variety of convolutional kernel (filter) sizes, written here as FH x FW: square kernels (3x3, 5x5, 7x7) and rectangular kernels (7x1, 7x3, 7x5, 5x7, 3x7). This suggests the architecture is designed to capture features at multiple scales and aspect ratios.

2. **Fluctuating Channel Depth:** The number of filters (`N`) does not increase monotonically. It alternates between only two values, 36 and 48, throughout the network, which is atypical compared with standard architectures that progressively increase the channel count with depth.

3. **Dense Connectivity:** The overwhelming number of skip connections is the most prominent visual feature. This is characteristic of a DenseNet-like architecture, where each layer receives the collective knowledge of all preceding layers, promoting feature reuse and gradient flow.

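4. **Asymmetric Kernels:** Tall kernels such as `FH:7 FW:1`, `FH:7 FW:3`, and `FH:7 FW:5` span more rows than columns, while wide kernels such as `FH:3 FW:7` and `FH:5 FW:7` span more columns than rows. Their presence suggests layers biased toward vertically and horizontally elongated features, respectively.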
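
The split between square, tall, and wide kernels can be checked mechanically from the `FH` and `FW` values. This is a small sketch; the `orientation` helper and the labels "tall" and "wide" are illustrative names, not terms used in the diagram.

```python
from collections import defaultdict

# (FH, FW) for layers 1..15, bottom to top, from the layer list.
KERNELS = [
    (3, 3), (3, 3), (3, 3), (5, 5), (3, 7),
    (7, 7), (7, 7), (7, 3), (7, 1), (7, 7),
    (5, 7), (7, 7), (7, 5), (7, 5), (7, 5),
]


def orientation(fh, fw):
    """Label a kernel by comparing its height (FH) with its width (FW)."""
    if fh == fw:
        return "square"
    return "tall" if fh > fw else "wide"


# Group 1-based layer indices by kernel orientation.
by_orientation = defaultdict(list)
for idx, (fh, fw) in enumerate(KERNELS, start=1):
    by_orientation[orientation(fh, fw)].append(idx)

for label in ("square", "tall", "wide"):
    print(label, by_orientation[label])
```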

### Interpretation

This diagram represents a sophisticated, non-standard convolutional neural network designed for image classification (as indicated by the final Softmax layer). The architecture's core philosophy is **multi-scale feature extraction within a densely connected framework**.

* **Purpose:** The network is engineered to recognize patterns of varying sizes and shapes from the input image. The mix of small (3x3), medium (5x5), and large (7x7) kernels, along with rectangular ones, allows it to simultaneously analyze fine details, broader textures, and elongated structures.

* **Dense Connectivity Rationale:** The dense wiring (the web of arrows) ensures that later layers have direct access to low-level features from early layers. This mitigates the vanishing gradient problem, encourages feature reuse, and can lead to more compact models with strong performance. It creates a "collective knowledge" effect where the network's understanding is built cumulatively.

* **Anomaly/Design Choice:** The non-monotonic filter count (`N`) is noteworthy. Instead of a funnel-like structure (e.g., 36 -> 48 -> 64 -> 128), the capacity expands and contracts. This could be a deliberate design to control model complexity at different stages, prevent overfitting in certain feature transformation steps, or balance computational load.

* **Overall Function:** The system processes an input image through this complex, interconnected series of multi-scale convolutions. Features are progressively transformed and combined in a non-linear fashion. The final layer aggregates all these processed features into a probability distribution over classes via the Softmax function. The architecture prioritizes rich feature interaction and gradient flow over a simple, sequential processing pipeline.
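
One way to quantify the multi-scale claim in the Purpose bullet is to estimate the receptive field of the top layer along the straight vertical path through all 15 layers. The sketch below assumes stride 1, no dilation, and no pooling between layers, none of which the diagram specifies. Skip connections create shorter paths with smaller receptive fields, so this figure is an upper bound under those assumptions.

```python
# (FH, FW) for layers 1..15, bottom to top, from the layer list.
KERNELS = [
    (3, 3), (3, 3), (3, 3), (5, 5), (3, 7),
    (7, 7), (7, 7), (7, 3), (7, 1), (7, 7),
    (5, 7), (7, 7), (7, 5), (7, 5), (7, 5),
]


def receptive_field(kernel_sizes):
    """Receptive field along one axis for a chain of stride-1, undilated
    convolutions: each layer with kernel size k adds k - 1 pixels."""
    return 1 + sum(k - 1 for k in kernel_sizes)


rf_height = receptive_field([fh for fh, _ in KERNELS])
rf_width = receptive_field([fw for _, fw in KERNELS])

print(f"receptive field along the sequential path: {rf_height} x {rf_width}")
```

Because tall kernels outnumber wide ones in the list, the estimate comes out larger along the height axis than along the width axis.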