## Neural Network Architecture Diagram: Layer Configuration and Data Flow

### Overview

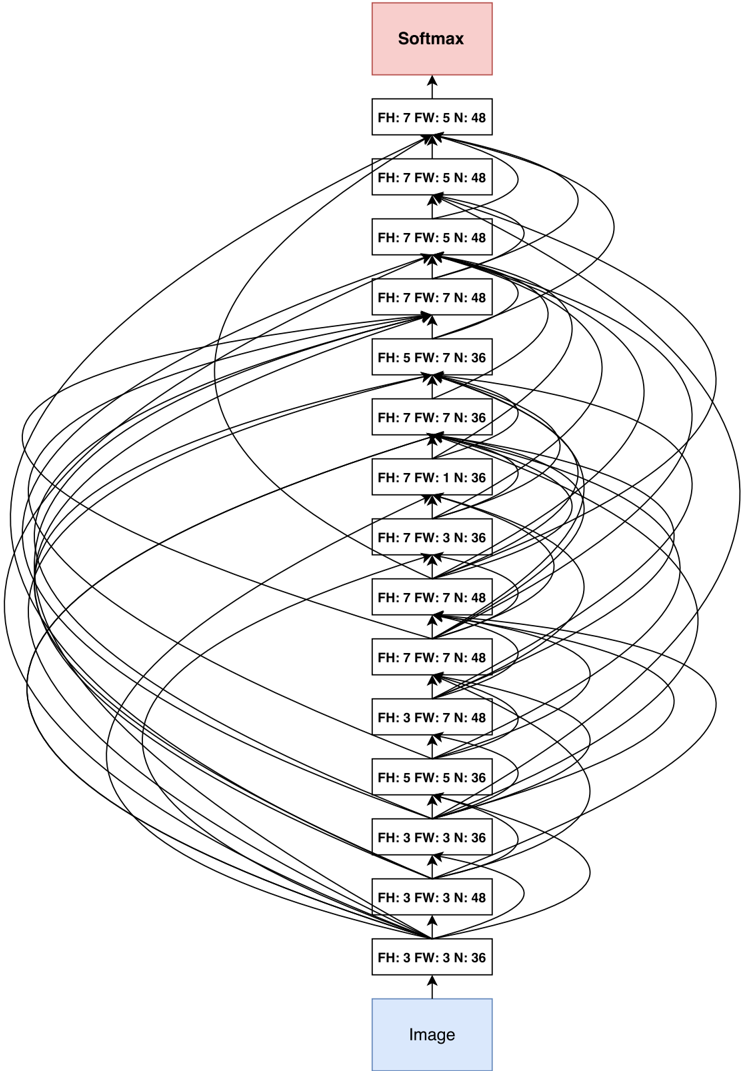

The image depicts a neural network architecture as a stack of layers, each labeled with a filter height (FH), a filter width (FW), and a count (N), culminating in a Softmax output layer. The diagram illustrates the flow of data from an input image through multiple processing layers to a classification output.

### Components/Axes

- **Input Layer**: Labeled "Image" at the bottom, serving as the data entry point.

- **Hidden Layers**: Each is labeled with a filter height (FH), a filter width (FW), and a count (N). The labels fall into two groups with identical parameter sets:

  - **First group**:

    - FH: 3 FW: 3 N: 36

    - FH: 3 FW: 3 N: 48

    - FH: 5 FW: 5 N: 36

    - FH: 5 FW: 5 N: 48

    - FH: 7 FW: 7 N: 36

    - FH: 7 FW: 7 N: 48

  - **Second group**:

    - FH: 3 FW: 3 N: 36

    - FH: 3 FW: 3 N: 48

    - FH: 5 FW: 5 N: 36

    - FH: 5 FW: 5 N: 48

    - FH: 7 FW: 7 N: 36

    - FH: 7 FW: 7 N: 48

- **Output Layer**: Softmax (pink box) at the top, indicating final classification probabilities.
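The layer labels listed above can be captured as plain data. The following is a minimal sketch, assuming each label is an (FH, FW, N) triple; the `LayerSpec` class and its field names are hypothetical and do not come from the diagram.

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class LayerSpec:
    """Hypothetical container for one layer label from the diagram."""
    fh: int  # filter height (FH)
    fw: int  # filter width (FW)
    n: int   # value labeled N


# The six distinct labels listed above: square filters of size 3, 5, and 7,
# each paired with N = 36 or N = 48.
LAYER_SPECS = [LayerSpec(k, k, n) for k, n in product((3, 5, 7), (36, 48))]

for spec in LAYER_SPECS:
    print(f"FH: {spec.fh} FW: {spec.fw} N: {spec.n}")
```

Generating the labels from the two value sets makes the pattern explicit: three filter sizes crossed with two values of N.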

### Detailed Analysis

1. **Layer Configuration**:

   - Every layer is labeled with a filter height (FH), a filter width (FW), and a count (N). For a convolutional layer, N most plausibly denotes the number of filters (output channels).

   - Filter sizes increase from bottom to top (e.g., FH: 3 → FH: 7).

   - FH and FW are two dimensions of the same filter, not two layer types, so the labels listed here do not by themselves distinguish convolutional from fully connected layers.

2. **Data Flow**:

   - Arrows indicate unidirectional flow from the input image upward through the network.

   - Each layer's output connects to the next layer's input, with no skip connections shown.

3. **Parameter Trends**:

   - Filter sizes (FH × FW) increase from 3×3 to 7×7 as data progresses upward.

   - N takes only two values, 36 and 48, alternating in the listed order, so the output dimensionality changes from one layer to the next.
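To make the filter sizes and N values described above concrete, the sketch below counts the learnable parameters of a sequential stack of 2D convolutions. It assumes that N is the number of output filters, that the input image has 3 channels, and that the layers are stacked in the listed order; none of these is stated in the diagram, and the function names are hypothetical.

```python
def conv_param_count(fh: int, fw: int, in_channels: int, n: int) -> int:
    """Weights plus biases of one 2D convolution with n filters."""
    return fh * fw * in_channels * n + n


def stack_param_counts(layers, in_channels=3):
    """Per-layer parameter counts for layers chained one after another."""
    counts = []
    for fh, fw, n in layers:
        counts.append(conv_param_count(fh, fw, in_channels, n))
        in_channels = n  # this layer's output feeds the next layer's input
    return counts


# (FH, FW, N) labels in listed order; the stacking order is an assumption.
LAYERS = [(3, 3, 36), (3, 3, 48), (5, 5, 36), (5, 5, 48), (7, 7, 36), (7, 7, 48)]

for label, count in zip(LAYERS, stack_param_counts(LAYERS)):
    print(label, count)
```

Under these assumptions the first layer has 1,008 parameters and the last has 84,720, which shows how quickly the cost grows with filter size.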

### Key Observations

- **Hierarchical Complexity**: The network grows in depth and filter size, typical of deep learning architectures for feature extraction.

- **Symmetry**: Each parameter configuration appears twice (e.g., FH: 3 FW: 3 N: 36), once in each group of layers.

- **Output Mechanism**: Softmax at the top implies multi-class classification, though class labels are not specified.
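For reference, a Softmax layer converts a vector of raw scores into probabilities that sum to 1. The following is a minimal, dependency-free sketch of that standard function; the input scores are made-up illustration values, not outputs of the network in the diagram.

```python
import math


def softmax(logits):
    """Map raw scores to probabilities that sum to 1."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical scores for three classes.
probs = softmax([2.0, 1.0, 0.1])
print(probs)
```

The class with the largest score receives the largest probability, which is why the output can be read as a multi-class prediction.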

### Interpretation

This architecture resembles a convolutional network built for hierarchical feature learning: every layer is described by a filter height and width, which are convolution parameters. The increasing filter sizes suggest that each layer examines a progressively larger spatial neighborhood as features become more abstract. The Softmax output indicates that the network's purpose is classification, though the specific task (e.g., image recognition) is not stated. Because the diagram shows no activation functions or regularization details, it says little about how the network is trained. The repeated parameter configurations give the diagram a symmetric structure, but this does not imply equal computational load across layers, since layers with larger filters require more computation per output value.