## [Table/Comparison Diagram]: Reasoning Chain Comparison for Question-Answering Tasks

### Overview

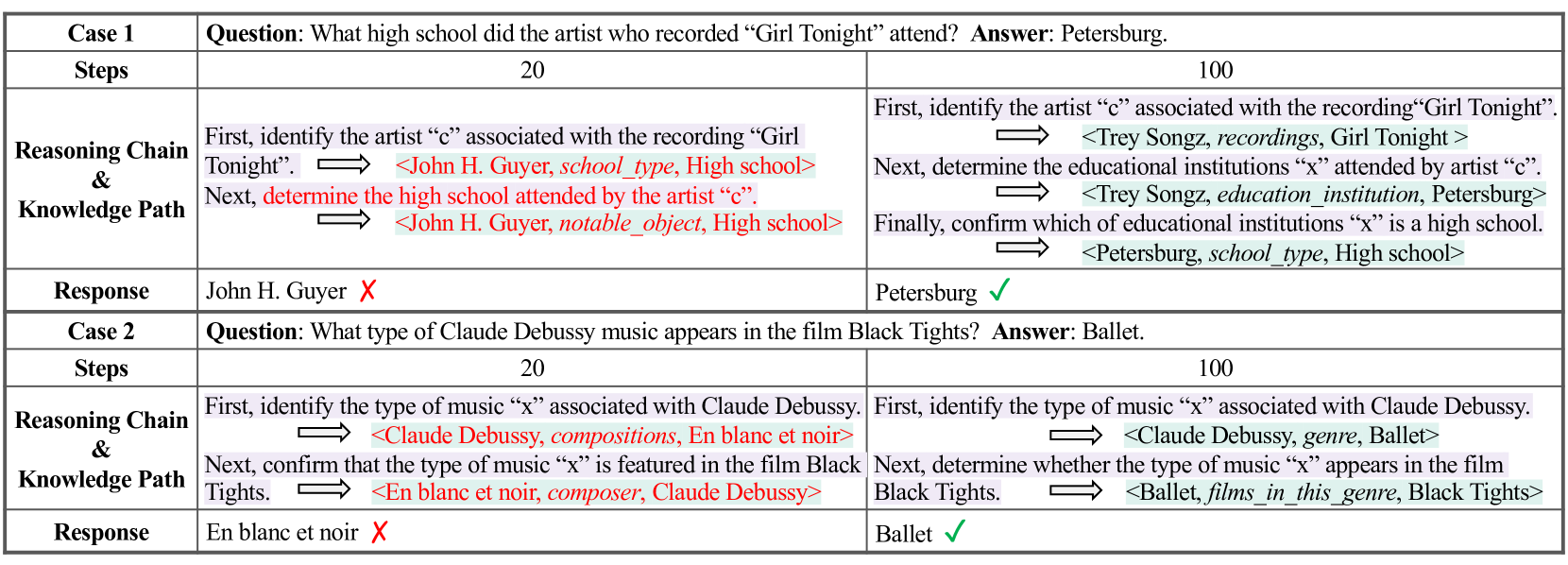

The image displays a structured comparison table analyzing two distinct question-answering cases (Case 1 and Case 2). Each case contrasts two different reasoning approaches, labeled "Steps 20" and "Steps 100," to solve a factual question. The table highlights the reasoning chain, knowledge path, and final response for each approach, using color-coding (red for incorrect paths/responses, green for correct ones) and symbols (✗ for incorrect, ✓ for correct) to indicate accuracy.

### Components/Axes

The table is organized into the following rows and columns:

* **Columns:** The table has three main columns. The first column lists the row labels (Case, Steps, Reasoning Chain & Knowledge Path, Response). The second and third columns present the two contrasting approaches for each case, labeled "20" and "100" respectively.

* **Rows (per case):**

    * **Case Header:** States the case number and the specific question being answered, along with the correct answer.

    * **Steps:** A numerical label ("20" or "100") for the reasoning approach.

    * **Reasoning Chain & Knowledge Path:** A textual description of the logical steps taken, interspersed with knowledge triplets formatted as `<Subject, Predicate, Object>`. Arrows (`⟹`) indicate the flow from one step to the next.

    * **Response:** The final answer generated by the reasoning chain.
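The `<Subject, Predicate, Object>` notation maps naturally onto a small record type. The sketch below parses the figure's triplet strings into a hypothetical `Triplet` named tuple. It assumes that subjects and predicates contain no commas, which holds for every triplet in this figure but not for knowledge graphs in general.

```python
import re
from typing import NamedTuple


class Triplet(NamedTuple):
    """One knowledge-graph edge in the figure's <Subject, Predicate, Object> notation."""
    subject: str
    predicate: str
    obj: str


# Assumption: subject and predicate contain no commas; the object takes the rest.
_TRIPLET_RE = re.compile(r"^<\s*([^,]+?)\s*,\s*([^,]+?)\s*,\s*(.+?)\s*>$")


def parse_triplet(text: str) -> Triplet:
    """Parse a string such as '<Trey Songz, recordings, Girl Tonight>'."""
    match = _TRIPLET_RE.match(text.strip())
    if match is None:
        raise ValueError(f"not a <Subject, Predicate, Object> triplet: {text!r}")
    return Triplet(*match.groups())


# Case 1, "100" column, transcribed from the figure.
chain = [
    "<Trey Songz, recordings, Girl Tonight>",
    "<Trey Songz, education_institution, Petersburg>",
    "<Petersburg, school_type, High school>",
]
for step, raw in enumerate(chain, start=1):
    t = parse_triplet(raw)
    print(step, t.subject, "--", t.predicate, "->", t.obj)
```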

### Detailed Analysis

**Case 1:**

* **Question:** What high school did the artist who recorded "Girl Tonight" attend?

* **Correct Answer:** Petersburg.

* **Approach "20" (Left Column):**

    * **Reasoning Chain:** "First, identify the artist 'c' associated with the recording 'Girl Tonight'." → Knowledge triplet: `<John H. Guyer, school_type, High school>` (highlighted in red). "Next, determine the high school attended by the artist 'c'." → Knowledge triplet: `<John H. Guyer, notable_object, High school>` (highlighted in red).

    * **Response:** John H. Guyer (marked with a red ✗).

* **Approach "100" (Right Column):**

    * **Reasoning Chain:** "First, identify the artist 'c' associated with the recording 'Girl Tonight'." → Knowledge triplet: `<Trey Songz, recordings, Girl Tonight>`. "Next, determine the educational institutions 'x' attended by artist 'c'." → Knowledge triplet: `<Trey Songz, education_institution, Petersburg>`. "Finally, confirm which of educational institutions 'x' is a high school." → Knowledge triplet: `<Petersburg, school_type, High school>`.

    * **Response:** Petersburg (marked with a green ✓).
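The three steps of the "100" chain in Case 1 can be read as a query over a knowledge graph. The following is a minimal sketch, assuming a toy in-memory graph that holds only the Case 1 triplets shown in the figure (both columns) and two hypothetical lookup helpers, `subjects` and `objects`. The figure does not say how the model actually retrieves triplets.

```python
# Toy KG (assumption): only the Case 1 triplets shown in the figure, both columns.
KG = {
    ("Trey Songz", "recordings", "Girl Tonight"),
    ("Trey Songz", "education_institution", "Petersburg"),
    ("Petersburg", "school_type", "High school"),
    ("John H. Guyer", "school_type", "High school"),
    ("John H. Guyer", "notable_object", "High school"),
}


def subjects(predicate: str, obj: str) -> set[str]:
    """All s such that (s, predicate, obj) is in the KG."""
    return {s for s, p, o in KG if p == predicate and o == obj}


def objects(subject: str, predicate: str) -> set[str]:
    """All o such that (subject, predicate, o) is in the KG."""
    return {o for s, p, o in KG if s == subject and p == predicate}


def answer_case1() -> set[str]:
    # Step 1: identify the artist(s) c who recorded "Girl Tonight".
    artists = subjects("recordings", "Girl Tonight")
    # Step 2: collect the educational institutions x attended by c.
    institutions = {x for c in artists for x in objects(c, "education_institution")}
    # Step 3: keep only institutions whose school_type is High school.
    return {x for x in institutions if "High school" in objects(x, "school_type")}


print(answer_case1())  # {'Petersburg'}
```

Because every hop starts from the question's entity or from the previous hop's result, the John H. Guyer triplets in the same graph are never visited.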

**Case 2:**

* **Question:** What type of Claude Debussy music appears in the film Black Tights?

* **Correct Answer:** Ballet.

* **Approach "20" (Left Column):**

    * **Reasoning Chain:** "First, identify the type of music 'x' associated with Claude Debussy." → Knowledge triplet: `<Claude Debussy, compositions, En blanc et noir>` (highlighted in red). "Next, confirm that the type of music 'x' is featured in the film Black Tights." → Knowledge triplet: `<En blanc et noir, composer, Claude Debussy>` (highlighted in red).

    * **Response:** En blanc et noir (marked with a red ✗).

* **Approach "100" (Right Column):**

    * **Reasoning Chain:** "First, identify the type of music 'x' associated with Claude Debussy." → Knowledge triplet: `<Claude Debussy, genre, Ballet>`. "Next, determine whether the type of music 'x' appears in the film Black Tights." → Knowledge triplet: `<Ballet, films_in_this_genre, Black Tights>`.

    * **Response:** Ballet (marked with a green ✓).
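Case 2 follows a two-step "retrieve a candidate, then verify" pattern. The sketch below assumes a toy graph containing only the Case 2 triplets from the figure and a hypothetical helper `features_black_tights` for the verification step. It also shows what would happen if the "20" chain's `compositions` result were put through the same check; the figure's "20" chain does not perform such a check.

```python
# Toy KG (assumption): only the Case 2 triplets shown in the figure, both columns.
KG = {
    ("Claude Debussy", "genre", "Ballet"),
    ("Ballet", "films_in_this_genre", "Black Tights"),
    ("Claude Debussy", "compositions", "En blanc et noir"),
    ("En blanc et noir", "composer", "Claude Debussy"),
}


def objects(subject: str, predicate: str) -> set[str]:
    """All o such that (subject, predicate, o) is in the KG."""
    return {o for s, p, o in KG if s == subject and p == predicate}


def features_black_tights(x: str) -> bool:
    # Verification step: is Black Tights listed as a film in category x?
    return "Black Tights" in objects(x, "films_in_this_genre")


# "100" chain: candidate music types via `genre`, then verify against the film.
answer = {x for x in objects("Claude Debussy", "genre") if features_black_tights(x)}
print(answer)  # {'Ballet'}

# "20" chain: `compositions` yields a specific work rather than a type,
# and that work would fail the same verification step.
work_candidates = objects("Claude Debussy", "compositions")
print({x: features_black_tights(x) for x in work_candidates})  # {'En blanc et noir': False}
```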

### Key Observations

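1. **Error Pattern in "20" Approach:** Both incorrect ("20") reasoning chains share a similar flaw: they retrieve a specific entity that is not grounded in the question's constraints. In Case 1, the chain never identifies the artist and instead retrieves a high school ("John H. Guyer") that is never linked to "Girl Tonight"; in Case 2, it retrieves a single composition ("En blanc et noir") instead of the requested music type. The second triplet then either restates a property of that entity (Case 1) or simply inverts the first triplet (Case 2), so the path is irrelevant or circular and never reaches the question's remaining constraint.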

2. **Correct Pattern in "100" Approach:** Both correct ("100") reasoning chains follow a more systematic, multi-step process in which every lookup starts from an entity named in the question or retrieved in the previous step. In Case 1, the chain identifies the intermediate entity (the artist Trey Songz), retrieves his educational institution (Petersburg), and then verifies that it satisfies the question's constraint (Petersburg is a high school). In Case 2, it retrieves a candidate genre (Ballet) directly from Claude Debussy and then verifies it against the film (Black Tights is listed among the films in the Ballet genre).

3. **Visual Coding:** Red text and the ✗ symbol are consistently used to denote errors in the reasoning path and final answer. Green text (in the knowledge triplets) and the ✓ symbol denote correct reasoning and answers. The arrows (`⟹`) clearly delineate the step-by-step flow of logic.
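The contrast between the two patterns can be made concrete with a simple grounding check. The sketch below is a hypothetical heuristic, not a method described in the figure: a path counts as grounded only if every named entity in the question appears in it and each consecutive pair of triplets shares an entity. The paths are transcribed from the figure; the choice of question entities per case is an assumption.

```python
# A triplet is (subject, predicate, object), as in the figure's <S, P, O> notation.
Triplet = tuple[str, str, str]


def is_grounded(path: list[Triplet], topic_entities: set[str]) -> bool:
    """Hypothetical heuristic: every question entity appears in the path,
    and consecutive triplets share at least one entity (no disconnected jumps)."""
    mentioned = {e for s, _, o in path for e in (s, o)}
    if not topic_entities <= mentioned:
        return False
    for prev, curr in zip(path, path[1:]):
        if not {prev[0], prev[2]} & {curr[0], curr[2]}:
            return False
    return True


# Paths from the figure, paired with the named entities in each question.
cases = {
    "Case 1 / 20": (
        [("John H. Guyer", "school_type", "High school"),
         ("John H. Guyer", "notable_object", "High school")],
        {"Girl Tonight"},
    ),
    "Case 1 / 100": (
        [("Trey Songz", "recordings", "Girl Tonight"),
         ("Trey Songz", "education_institution", "Petersburg"),
         ("Petersburg", "school_type", "High school")],
        {"Girl Tonight"},
    ),
    "Case 2 / 20": (
        [("Claude Debussy", "compositions", "En blanc et noir"),
         ("En blanc et noir", "composer", "Claude Debussy")],
        {"Claude Debussy", "Black Tights"},
    ),
    "Case 2 / 100": (
        [("Claude Debussy", "genre", "Ballet"),
         ("Ballet", "films_in_this_genre", "Black Tights")],
        {"Claude Debussy", "Black Tights"},
    ),
}

results = {name: is_grounded(path, topics) for name, (path, topics) in cases.items()}
for name, grounded in results.items():
    print(name, grounded)
# Case 1 / 20 False
# Case 1 / 100 True
# Case 2 / 20 False
# Case 2 / 100 True
```

Case 2's "20" path is connected, because its second triplet simply reverses the first, but it never mentions Black Tights. It therefore fails on coverage rather than on connectivity, matching the "circular" description above.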

### Interpretation

This diagram compares two reasoning behaviors in knowledge-grounded question answering. The row label "Steps" suggests that "20" and "100" are two checkpoints of the same model (for example, after 20 and 100 training steps). However, the figure does not state this, and the labels could instead denote another quantity such as chain-of-thought depth.

These two cases suggest that the "100" approach employs a more robust and verifiable reasoning strategy. It anchors each lookup on an entity from the question or from the previous step, targets the *type* of information needed (a high school, a music genre), and ends with a verification step. In contrast, the "20" approach appears to make an initial associative leap to a specific named entity that is not connected to the question (a high school unrelated to "Girl Tonight," a single Debussy composition) and then fails to correct course, producing a logically flawed and incorrect answer.

The two cases suggest that effective factual question answering requires not just retrieving related information but also understanding the *category* of the answer sought and verifying, over multiple steps, that the retrieved data satisfies every constraint of the original query. The "100" chains exemplify this, while the "20" chains illustrate a common failure mode: associative retrieval without verification.
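Case 1 shows why checking the category alone is not enough. According to the figure's own triplet `<John H. Guyer, school_type, High school>`, the "20" answer *is* a high school, so a pure category check would accept it. Only the relational constraint (attended by the artist who recorded "Girl Tonight") rejects it. The following is a minimal sketch, assuming a toy graph of the Case 1 triplets and hypothetical helpers `is_high_school` and `satisfies_all`.

```python
# Toy KG (assumption): only the Case 1 triplets shown in the figure, both columns.
KG = {
    ("Trey Songz", "recordings", "Girl Tonight"),
    ("Trey Songz", "education_institution", "Petersburg"),
    ("Petersburg", "school_type", "High school"),
    ("John H. Guyer", "school_type", "High school"),
    ("John H. Guyer", "notable_object", "High school"),
}


def holds(s: str, p: str, o: str) -> bool:
    """True if the triplet (s, p, o) is in the toy KG."""
    return (s, p, o) in KG


def is_high_school(x: str) -> bool:
    # Category constraint only: is x typed as a high school?
    return holds(x, "school_type", "High school")


def satisfies_all(x: str) -> bool:
    # Category constraint plus the relational one: x was attended by
    # an artist who recorded "Girl Tonight".
    artists = {s for s, p, o in KG if p == "recordings" and o == "Girl Tonight"}
    return is_high_school(x) and any(holds(c, "education_institution", x) for c in artists)


for candidate in ("John H. Guyer", "Petersburg"):
    print(candidate, is_high_school(candidate), satisfies_all(candidate))
# John H. Guyer True False
# Petersburg True True
```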