## Diagram: Reinforcement Learning Feedback Loop for Code Generation

### Overview

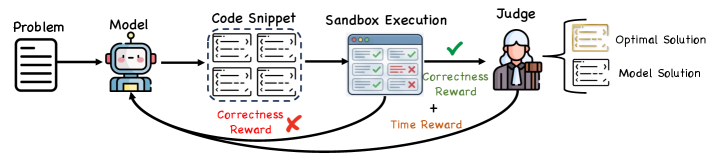

The image is a flowchart illustrating a reinforcement learning process for training a code-generation model. It depicts a cyclical workflow where a model generates code solutions to problems, which are then executed and evaluated, with feedback (rewards) sent back to the model to improve future performance.

### Components

The diagram is organized horizontally from left to right, representing a sequential process, with feedback loops returning to the model.

**Main Components (Left to Right):**

1. **Problem**: Represented by a document icon on the far left.

2. **Model**: Represented by a friendly robot icon.

3. **Code Snippet**: A dashed box containing four smaller rectangles, each representing a generated code solution.

4. **Sandbox Execution**: Represented by a browser window icon. Inside, there are two columns of results: three green checkmarks (✓) and two red X marks (✗).

5. **Judge**: Represented by a human figure icon.

6. **Output Solutions**: Two document icons on the far right:

   * **Optimal Solution** (top)

   * **Model Solution** (bottom)

**Feedback Loops & Labels:**

* **Correctness Reward (Red)**: A red arrow originates from the "Sandbox Execution" window and points back to the "Model." It is labeled "Correctness Reward" in red text and is accompanied by a large red "✗" symbol, indicating a negative reward or penalty for incorrect code.

* **Correctness Reward (Green)**: A green arrow originates from the "Judge" and points back to the "Model." It is labeled "Correctness Reward" in green text and is accompanied by a large green "✓" symbol, indicating a positive reward for correct code.

* **Time Reward**: An orange arrow originates from the "Sandbox Execution" window and points to the "Judge." It is labeled "Time Reward" in orange text.

### Detailed Analysis

The process flow is as follows:

1. A **Problem** is fed into the **Model**.

2. The **Model** generates multiple **Code Snippet** candidates.

3. These snippets are sent to the **Sandbox Execution** environment for testing. The results are mixed: some tests pass (green checkmarks) and others fail (red X marks).

4. The execution results provide two feedback signals:

   * A **Correctness Reward** (red, negative) is sent directly back to the model based on the pass/fail results.

   * A **Time Reward** (orange) is sent to the **Judge**, likely based on the execution speed or efficiency of the code.

5. The **Judge** evaluates the solutions, presumably considering both correctness and efficiency (the Time Reward). The Judge then produces a final evaluation.

6. The Judge's evaluation results in a second **Correctness Reward** (green, positive) being sent back to the model.

7. The final output of the process is a selection between an **Optimal Solution** and the **Model Solution**.
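
The first steps of this flow (generate candidates, execute them, derive a correctness signal) can be sketched in a few lines of Python. This is a minimal illustration and not the pipeline behind the diagram: `ExecutionResult`, `run_candidate`, and `correctness_reward` are hypothetical names, the squaring problem and its four candidates are invented, the ±1 reward scheme is an assumption, and calling the candidate in-process stands in for a real sandbox without providing any isolation.

```python
import time
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class ExecutionResult:
    passed: int             # number of test cases that passed
    failed: int             # number of test cases that failed or crashed
    elapsed_seconds: float  # wall-clock time, the raw input to a time reward


def run_candidate(candidate: Callable[[int], int],
                  tests: List[Tuple[int, int]]) -> ExecutionResult:
    """Stand-in for sandbox execution (no real isolation is provided here)."""
    passed = 0
    failed = 0
    start = time.perf_counter()
    for argument, expected in tests:
        try:
            ok = candidate(argument) == expected
        except Exception:
            ok = False  # a crash counts as a failed test
        if ok:
            passed += 1
        else:
            failed += 1
    return ExecutionResult(passed, failed, time.perf_counter() - start)


def correctness_reward(result: ExecutionResult) -> float:
    """Assumed scheme: +1.0 only if every test passes, otherwise -1.0."""
    return 1.0 if result.failed == 0 else -1.0


# Hypothetical problem: "return the square of x", with four sampled candidates.
tests = [(0, 0), (2, 4), (3, 9)]
candidates = [
    lambda x: x * x,   # correct
    lambda x: x + x,   # wrong for x = 3
    lambda x: x ** 2,  # correct
    lambda x: x // 0,  # crashes with ZeroDivisionError
]

results = [run_candidate(c, tests) for c in candidates]
rewards = [correctness_reward(r) for r in results]
print(rewards)  # [1.0, -1.0, 1.0, -1.0]
```

Each `ExecutionResult` also records `elapsed_seconds`, which is the kind of measurement a Time Reward could be derived from before it is handed to the Judge.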

### Key Observations

* **Dual Reward System**: The model receives feedback from two distinct sources: the raw execution results (sandbox) and a higher-level evaluator (judge). This suggests the model is optimized against more than one objective: pass/fail correctness from the sandbox and the judge's overall assessment.

* **Negative vs. Positive Feedback**: The diagram explicitly distinguishes between negative feedback (red, from failed execution) and positive feedback (green, from the judge's approval).

* **Efficiency Metric**: The inclusion of a "Time Reward" indicates that performance is judged not only on correctness but also on computational efficiency or speed.

* **Iterative Improvement**: The two feedback loops pointing back to the model clearly frame this as an iterative, learning-oriented process.
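
One way to read the observations above is that failing code is penalized directly, while passing code earns a positive reward that the Judge scales by speed. The sketch below makes that reading concrete. It is an assumption throughout: the diagram gives no formulas, and `time_reward`, `total_reward`, `time_weight`, and the idea of a reference runtime (for example, taken from the Optimal Solution) are all hypothetical.

```python
def time_reward(elapsed_seconds: float, reference_seconds: float) -> float:
    """Assumed scheme: 1.0 at or below the reference runtime, else reference/elapsed."""
    if elapsed_seconds <= 0 or reference_seconds <= 0:
        raise ValueError("runtimes must be positive")
    return min(1.0, reference_seconds / elapsed_seconds)


def total_reward(all_tests_passed: bool,
                 elapsed_seconds: float,
                 reference_seconds: float,
                 time_weight: float = 0.5) -> float:
    """Combine the sandbox penalty and the judge's reward into one scalar."""
    if not all_tests_passed:
        return -1.0  # red arrow: penalty straight from the sandbox
    speed = time_reward(elapsed_seconds, reference_seconds)
    # green arrow: the judge's positive reward, with the time reward folded in
    return (1.0 - time_weight) + time_weight * speed


print(total_reward(True, 1.0, 1.0))   # 1.0   (correct, as fast as the reference)
print(total_reward(True, 2.0, 1.0))   # 0.75  (correct, twice as slow)
print(total_reward(False, 1.0, 1.0))  # -1.0  (incorrect)
```

Keeping the penalty and the speed-scaled bonus in separate branches mirrors the two arrows in the diagram; a real system could just as well use a different weighting or a continuous pass rate.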

### Interpretation

This diagram illustrates a **Reinforcement Learning from Execution Feedback** paradigm for training code-generating AI. The core idea is to move beyond static datasets and treat the result of actually running the generated code as the source of truth.

* **What it demonstrates**: The system creates a closed loop where the model's outputs are dynamically tested, and the results are quantified into reward signals. This allows the model to learn from its mistakes (via the red correctness reward) and from the judge's evaluation (via the green correctness reward). The time reward is routed to the judge rather than to the model, so it reaches the model only indirectly, through the judge's reward.

* **Relationship between elements**: The "Judge" acts as a critic or reward model, potentially aligning the code's performance with broader goals like readability, efficiency, or adherence to best practices, which raw test execution might not capture. The "Sandbox" provides objective, ground-truth verification.

* **Notable implications**: This approach can lead to more robust and efficient code generation. The model is incentivized to produce not just functionally correct code, but also code that is performant (time reward) and meets a standard of quality endorsed by the judge. The separation of "Optimal Solution" and "Model Solution" suggests the process aims to bridge the gap between the model's current capability and an ideal target.
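
The diagram does not say which reinforcement learning algorithm consumes these rewards. As one common possibility, and purely as an assumption, the rewards for the candidates sampled for a single problem could be normalized against each other so that better-than-average candidates are reinforced and worse-than-average ones are discouraged. The helper name `advantages` and the example reward values are hypothetical.

```python
import math
from typing import List


def advantages(rewards: List[float]) -> List[float]:
    """Normalize rewards within one group of candidates (mean 0, unit std)."""
    if not rewards:
        return []
    mean = sum(rewards) / len(rewards)
    variance = sum((r - mean) ** 2 for r in rewards) / len(rewards)
    std = math.sqrt(variance)
    if std == 0.0:
        # All candidates scored the same, so none is preferred over another.
        return [0.0 for _ in rewards]
    return [(r - mean) / std for r in rewards]


# Hypothetical rewards for four candidates: two passed, two failed.
print(advantages([1.0, -1.0, 1.0, -1.0]))  # [1.0, -1.0, 1.0, -1.0]
```

When every candidate receives the same reward, the normalized values are all zero, which reflects that such a group carries no preference signal under this scheme.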