## Diagram: Computational Process Flow with Offload/Onload Stages

### Overview

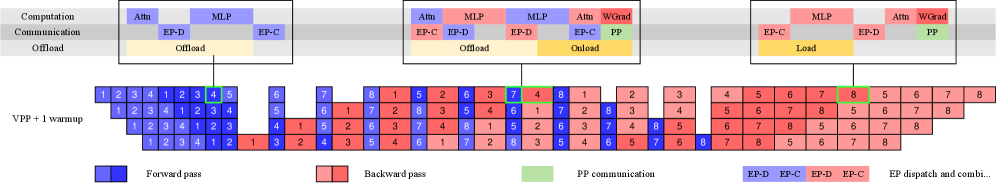

The diagram illustrates a multi-stage computational process that combines computation, communication, and offload/onload operations. It includes a grid of eight sequential steps (1-8) with color-coded operations (forward pass, backward pass, PP communication, EP dispatch/combine). Three sections at the top define the computation, communication, and offload/onload stages, while the grid below maps the flow of operations across steps.

---

### Components/Axes

1. **Top Sections**:
   - **Computation**: Contains blocks labeled `Attn` (Attention), `MLP` (Multi-Layer Perceptron), `EP-D` (expert-parallel dispatch), and `EP-C` (expert-parallel combine).
   - **Communication**: Includes `EP-D`, `EP-C`, and `PP` (pipeline-parallel communication).
   - **Offload/Onload**: Labeled `Offload` (yellow) and `Onload` (yellow), with `WGrad` (Weight Gradient) in red.

2. **Grid**:
   - **Rows**: Labeled `VPP + 1 warmup` (Virtual Pipeline Parallelism + 1 warmup step).
   - **Columns**: Numbered 1-8, representing sequential steps.
   - **Colors**: Blue, red, green, and yellow, as defined in the legend.

3. **Legend**:
   - Located at the bottom, mapping colors to operations:
     - Blue: Forward pass
     - Red: Backward pass
     - Green: PP communication
     - Yellow: EP dispatch/combine (EP-D, EP-C)

---

### Detailed Analysis

1. **Top Sections**:
   - **Computation**:
     - Leftmost section: `Attn` (blue), `MLP` (blue), `EP-D` (blue), `EP-C` (blue).
     - Middle section: `Attn` (red), `MLP` (red), `EP-D` (red), `EP-C` (red).
     - Rightmost section: `MLP` (red), `Attn` (red), `WGrad` (red), `EP-C` (red), `PP` (green).
   - **Communication**:
     - Repeats `EP-D` (blue), `EP-C` (blue), `PP` (green) across stages.
   - **Offload/Onload**:
     - Left: `Offload` (yellow).
     - Middle: `Offload` (yellow) and `Onload` (yellow).
     - Right: `Onload` (yellow).

2. **Grid**:
   - **Rows**: Rows 1-4 each carry the cell labels `1 2 3 4 1 2 3 4`.
   - **Columns**:
     - Columns 1-4: Blue (forward pass).
     - Columns 5-8: Red (backward pass), with green (PP communication) in column 7, row 4.

3. **Color Consistency**:
   - Blue blocks in the grid align with "Forward pass" in the legend.
   - Red blocks align with "Backward pass."
   - Green blocks (e.g., column 7, row 4) match "PP communication."
   - Yellow blocks in the top sections are the `Offload` and `Onload` blocks, whereas the legend entry for yellow reads "EP dispatch/combine."
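
The grid layout described above can be written down as a small data structure. The following is a minimal sketch in Python, assuming the grid has four rows and eight columns, that the labels 1-4 repeat once per phase, and that the single green cell sits at row 4, column 7. The names `build_grid`, `FORWARD`, `BACKWARD`, and `PP_COMM` are hypothetical and do not come from the diagram.

```python
# Hypothetical reconstruction of the grid as described in the text.
FORWARD, BACKWARD, PP_COMM = "forward", "backward", "pp_comm"


def build_grid(rows=4, labels=(1, 2, 3, 4), pp_cell=(4, 7)):
    """Return a list of rows; each cell is a (label, phase) pair.

    Columns 1-4 are forward cells and columns 5-8 are backward cells.
    `pp_cell` is the 1-indexed (row, column) of the PP-communication cell.
    """
    grid = []
    for _ in range(rows):
        row = [(label, FORWARD) for label in labels]
        row += [(label, BACKWARD) for label in labels]
        grid.append(row)

    # Recolor the one cell the description marks as PP communication.
    r, c = pp_cell[0] - 1, pp_cell[1] - 1
    label, _ = grid[r][c]
    grid[r][c] = (label, PP_COMM)
    return grid


grid = build_grid()
for row in grid:
    # Print each cell as label + phase initial, e.g. "1F" or "3B".
    print(" ".join(f"{label}{phase[0].upper()}" for label, phase in row))
```

Printing the grid this way makes it easy to compare the textual description against the figure cell by cell.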

---

### Key Observations

1. **Sequential Flow**:
   - The grid shows forward pass (blue) in steps 1-4, followed by backward pass (red) in steps 5-8, with the labels 1-4 repeating in each half.
   - PP communication (green) occurs in step 7, row 4, indicating a pipeline-parallel transfer in the middle of the backward phase.

2. **Offload/Onload Dynamics**:
   - Offload (yellow) appears in the left and middle sections, suggesting data transfer out of the computation stage.
   - Onload (yellow) in the middle and right sections indicates data retrieval into the computation stage.

3. **WGrad and PP**:
   - `WGrad` (red) in the rightmost computation section highlights weight gradient computation during the backward pass.
   - `PP` (green) in the communication section marks pipeline-parallel communication, which passes activations and gradients between pipeline stages.
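
The offload/onload pattern noted above can be illustrated with a toy model. This is only a sketch under stated assumptions: the diagram does not say what is offloaded, so the example assumes it is activations saved by the forward pass and reloaded before the matching backward pass. The class `ActivationOffloader` and the function `run_steps` are hypothetical, and plain dictionaries stand in for device and host memory.

```python
class ActivationOffloader:
    """Toy model of offload/onload; dicts stand in for device and host memory."""

    def __init__(self):
        self.device = {}  # assumed: accelerator memory
        self.host = {}    # assumed: host (CPU) memory

    def forward(self, step, activation):
        # The forward pass produces an activation on the device...
        self.device[step] = activation
        # ...which is then offloaded to the host to free device memory.
        self.host[step] = self.device.pop(step)

    def backward(self, step):
        # Onload: bring the saved activation back before the backward pass.
        self.device[step] = self.host.pop(step)
        # The backward pass consumes the activation, after which it is freed.
        return self.device.pop(step)


def run_steps(labels=(1, 2, 3, 4)):
    """Run all forward steps, then all backward steps, logging transfers."""
    offloader = ActivationOffloader()
    events = []
    for step in labels:
        offloader.forward(step, f"act{step}")
        events.append(("offload", step))
    for step in labels:
        offloader.backward(step)
        events.append(("onload", step))
    return events, offloader


events, offloader = run_steps()
print(events)
```

In this model, offloads cluster in the forward phase and onloads in the backward phase, which matches the left-to-right placement of the `Offload` and `Onload` blocks in the description.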

---

### Interpretation

The diagram models a distributed training workflow, likely for neural networks, with stages for computation, communication, and data transfer. The grid represents eight sequential steps (1-8) where:

- **Forward pass** (blue) occupies steps 1-4, executing attention and MLP layers.

- **Backward pass** (red) follows in steps 5-8, computing gradients, including the weight gradients marked `WGrad`.

- **PP communication** (green) occurs during the backward phase, passing activations or gradients between pipeline stages.

- **EP dispatch/combine** (`EP-D`, `EP-C`) routes tokens to experts and gathers the results, while the yellow `Offload` and `Onload` blocks move data out of and back into the computation stage.

The `VPP + 1 warmup` row label suggests a virtual (interleaved) pipeline-parallel schedule with one additional warmup step, that is, one more forward step issued before the first backward pass. The repetition of labels 1-4 in blue and then in red implies a two-phase workflow (forward, then backward), while the green and yellow blocks mark the communication and data-transfer points. This layout is consistent with schedules that overlap communication and offload traffic with computation, a common approach in large-scale machine learning systems.
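
To make the role of the warmup count concrete, here is a minimal sketch of a generic one-forward-one-backward (1F1B) ordering on a single pipeline stage. It is not taken from the diagram: the diagram does not state how the warmup count is computed, so `num_warmup` is left as a free parameter, and the function name `schedule_order` is hypothetical.

```python
def schedule_order(num_microbatches, num_warmup):
    """Return the order of forward ("F") and backward ("B") steps.

    Warmup: `num_warmup` forward steps run before any backward step.
    Steady state: alternate one forward and one backward step.
    Cooldown: run the remaining backward steps.
    """
    num_warmup = max(0, min(num_warmup, num_microbatches))
    order = [("F", i) for i in range(1, num_warmup + 1)]

    next_forward, next_backward = num_warmup + 1, 1
    while next_forward <= num_microbatches:
        order.append(("F", next_forward))
        next_forward += 1
        order.append(("B", next_backward))
        next_backward += 1

    while next_backward <= num_microbatches:
        order.append(("B", next_backward))
        next_backward += 1
    return order


# With four microbatches and four warmup steps, every forward step runs
# first: F1 F2 F3 F4 B1 B2 B3 B4, the same ordering the grid describes.
print(schedule_order(4, 4))

# With fewer warmup steps, forward and backward steps interleave:
# F1 F2 F3 B1 F4 B2 B3 B4.
print(schedule_order(4, 2))
```

Raising `num_warmup` by one moves one more forward step ahead of the first backward step, which is one plausible reading of the "+ 1 warmup" label.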