TECHNICAL ASSET FINGERPRINT

3d15844de2fae845b3ada39f



EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

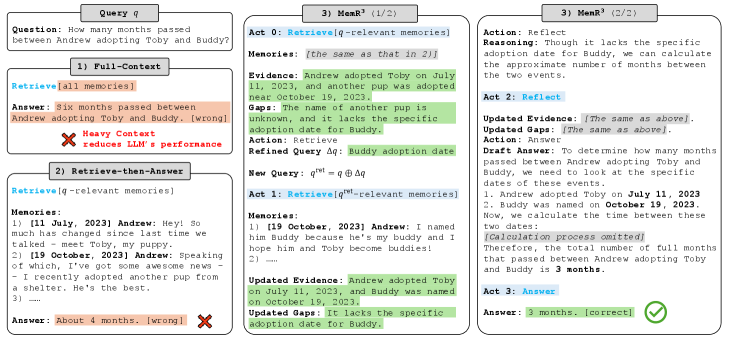

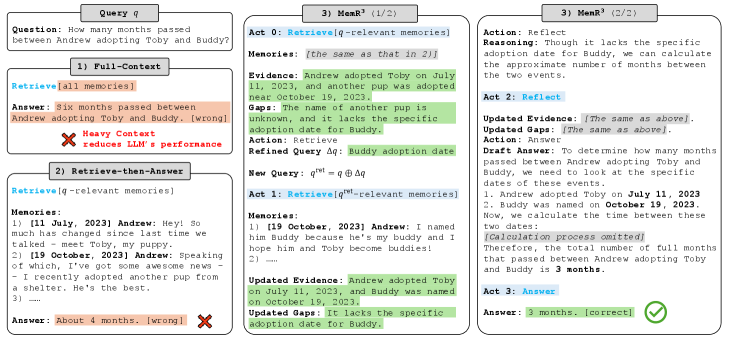

## Reasoning Process Diagram: Question Answering with Different Retrieval Strategies

### Overview

The image is a diagram comparing three approaches to answering a question with a large language model (LLM). The question is: "How many months passed between Andrew adopting Toby and Buddy?". The diagram shows the reasoning process and the final answer for each approach: Full-Context, Retrieve-then-Answer, and MemR^3. It contrasts the approaches by answer accuracy and by how much context each one passes to the model.

### Components/Axes

* **Query q:** The initial question: "How many months passed between Andrew adopting Toby and Buddy?"

* **1) Full-Context:** This approach retrieves all available memories and uses them to answer the question.

* `Retrieve[all memories]`

* Answer: "Six months passed between Andrew adopting Toby and Buddy. [wrong]"

* "Heavy Context reduces LLM's performance" (indicated by a red "X")

* **2) Retrieve-then-Answer:** This approach retrieves only the memories relevant to the question and then answers the question.

* `Retrieve[q-relevant memories]`

* Memories:

* "1) [11 July, 2023] Andrew: Hey! So much has changed since last time we talked - meet Toby, my puppy."

* "2) [19 October, 2023] Andrew: Speaking of which, I've got some awesome news - I recently adopted another pup from a shelter. He's the best."

* "3) ..."

* Answer: "About 4 months. [wrong]" (indicated by a red "X")

* **3) MemR^3 (1/2 & 2/2):** This approach uses a more refined retrieval and reasoning process, involving multiple acts.

* **Act 0:** `Retrieve[q-relevant memories]`

* Memories: "[the same as that in 2)]"

* Evidence: "Andrew adopted Toby on July 11, 2023, and another pup was adopted near October 19, 2023."

* Gaps: "The name of another pup is unknown, and it lacks the specific adoption date for Buddy."

* Action: Retrieve

* Refined Query Δq: "Buddy adoption date"

* New Query: q^{ret} = q ⊕ Δq

* **Act 1:** `Retrieve[q^{ret}-relevant memories]`

* Memories:

* "1) [19 October, 2023] Andrew: I named him Buddy because he's my buddy and I hope him and Toby become buddies!"

* "2) ----"

* Updated Evidence: "Andrew adopted Toby on July 11, 2023, and Buddy was named on October 19, 2023."

* Updated Gaps: "It lacks the specific adoption date for Buddy."

* **Act 2:** Reflect

* Action: Reflect

* Reasoning: "Though it lacks the specific adoption date for Buddy, we can calculate the approximate number of months between the two events."

* Updated Evidence: "[The same as above]."

* Updated Gaps: "[The same as above]."

* Action: Answer

* Draft Answer: "To determine how many months passed between Andrew adopting Toby and Buddy, we need to look at the specific dates of these events."

* "1. Andrew adopted Toby on July 11, 2023"

* "2. Buddy was named on October 19, 2023."

* "Now, we calculate the time between these two dates: [Calculation process omitted]"

* "Therefore, the total number of full months that passed between Andrew adopting Toby and Buddy is 3 months."

* **Act 3:** Answer

* Answer: "3 months. [correct]" (indicated by a green checkmark)
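The Act 0 to Act 3 sequence above can be read as a small control loop. The sketch below is one hypothetical way to express it in Python, not the method's actual implementation: `State`, `run_loop`, and the four callables are invented names, the callables stand in for retriever and LLM calls, plain string concatenation is an assumed stand-in for the ⊕ operator, and the two-retrieval budget is an assumption chosen so the trace matches the diagram.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


@dataclass
class State:
    """Working state carried across acts (hypothetical structure)."""
    query: str
    evidence: List[str] = field(default_factory=list)
    gaps: List[str] = field(default_factory=list)
    trace: List[str] = field(default_factory=list)  # actions taken, in order


def run_loop(
    query: str,
    retrieve: Callable[[str], List[str]],
    update: Callable[[List[str]], Tuple[List[str], List[str]]],
    refine: Callable[[State], str],
    answer: Callable[[State], str],
    max_retrievals: int = 2,  # assumed budget, not stated in the diagram
) -> Tuple[str, State]:
    state = State(query)
    retrieval_query = query
    retrievals = 0
    while True:
        memories = retrieve(retrieval_query)
        retrievals += 1
        state.trace.append("retrieve")
        state.evidence, state.gaps = update(memories)
        if not state.gaps:
            break  # nothing is missing, so answer directly
        if retrievals >= max_retrievals:
            state.trace.append("reflect")  # accept the remaining gap
            break
        # Assumed reading of q_ret = q (+) delta_q: append the refinement.
        retrieval_query = f"{query} {refine(state)}"
    state.trace.append("answer")
    return answer(state), state


# Stubs standing in for the retriever and the LLM, using text quoted above.
QUESTION = "How many months passed between Andrew adopting Toby and Buddy?"


def fake_retrieve(q: str) -> List[str]:
    if "Buddy adoption date" in q:
        return ["[19 October, 2023] Andrew: I named him Buddy because he's my buddy "
                "and I hope him and Toby become buddies!"]
    return [
        "[11 July, 2023] Andrew: Hey! So much has changed since last time we talked "
        "- meet Toby, my puppy.",
        "[19 October, 2023] Andrew: Speaking of which, I've got some awesome news - "
        "I recently adopted another pup from a shelter. He's the best.",
    ]


def fake_update(memories: List[str]) -> Tuple[List[str], List[str]]:
    if any("named him Buddy" in m for m in memories):
        return (["Toby adopted on July 11, 2023", "Buddy named on October 19, 2023"],
                ["specific adoption date for Buddy"])
    return (["Toby adopted on July 11, 2023", "another pup adopted near October 19, 2023"],
            ["name of the other pup", "specific adoption date for Buddy"])


result, final_state = run_loop(
    QUESTION,
    fake_retrieve,
    fake_update,
    refine=lambda s: "Buddy adoption date",
    answer=lambda s: "3 months",  # hard-coded stand-in for the LLM's final answer
)
print(final_state.trace)  # ['retrieve', 'retrieve', 'reflect', 'answer']
```

The second retrieval does not close the remaining gap, so the loop reflects and answers with the evidence it has, mirroring the diagram's decision to use the naming date.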

### Detailed Analysis

* **Full-Context:** This method uses all available information, leading to an incorrect answer, possibly due to irrelevant information diluting the relevant facts.

* **Retrieve-then-Answer:** This method retrieves relevant memories but still provides an incorrect answer, suggesting that the retrieved information is insufficient or misinterpreted.

* **MemR^3:** This method refines the query and iteratively retrieves and reflects on the information, leading to the correct answer. The process involves identifying gaps in the knowledge and specifically targeting those gaps with refined queries.

### Key Observations

* The Full-Context approach fails due to the inclusion of too much irrelevant information.

* The Retrieve-then-Answer approach fails despite retrieving relevant information, indicating a potential issue with reasoning or insufficient information.

* The MemR^3 approach succeeds by iteratively refining the query and focusing on filling knowledge gaps.

### Interpretation

The diagram demonstrates the importance of targeted information retrieval and iterative reasoning for accurate question answering. The MemR^3 approach highlights the effectiveness of refining queries and focusing on filling knowledge gaps, leading to a more accurate and reliable answer compared to simply using all available information or retrieving only initially relevant information. The failure of the Full-Context approach suggests that the presence of irrelevant information can negatively impact the performance of LLMs. The success of MemR^3 underscores the value of a more structured and iterative approach to knowledge retrieval and reasoning.


EXPERT: gemini-3-flash-free VERSION 2

RUNTIME: google-free/gemini-3-flash-preview

INTEL_VERIFIED

## Diagram Type: Comparative Workflow for Memory-Augmented LLMs

### Overview

This technical diagram compares three different approaches for a Large Language Model (LLM) to answer a specific temporal reasoning question based on a set of "memories" (stored conversational data). The diagram is organized into a top-left query box and four main panels: two left-side panels showing failed baseline methods, and two large panels on the right detailing the successful iterative process of a method called **MemR³**.

### Components/Axes

* **Query $q$ (Top-Left):** Defines the input task.

* **1) Full-Context (Middle-Left):** A baseline method that provides all available data to the model at once.

* **2) Retrieve-then-Answer (Bottom-Left):** A standard Retrieval-Augmented Generation (RAG) approach.

* **3) MemR³ (Center and Right):** A multi-step, iterative reasoning and retrieval framework, split into two parts (1/2 and 2/2).

* **Color Coding:**

* **Blue Text in Brackets:** Represents specific system actions or "Acts" (e.g., `Retrieve`, `Reflect`, `Answer`).

* **Orange Highlight:** Indicates incorrect answers or failed outputs.

* **Green Highlight:** Indicates successful evidence extraction, gap identification, query refinement, and the final correct answer.

* **Red 'X' Icon:** Denotes a failure in performance or accuracy.

* **Green Checkmark Icon:** Denotes a successful and correct output.

---

### Content Details

#### Header: Query $q$

* **Question:** "How many months passed between Andrew adopting Toby and Buddy?"

#### Panel 1: Full-Context

* **Action:** `Retrieve[all memories]`

* **Answer (Orange):** "Six months passed between Andrew adopting Toby and Buddy. [wrong]"

* **Annotation (Red):** "Heavy Context reduces LLM's performance" (accompanied by a red 'X').

#### Panel 2: Retrieve-then-Answer

* **Action:** `Retrieve[q-relevant memories]`

* **Memories Extracted:**

1. `[11 July, 2023] Andrew: Hey! So much has changed since last time we talked - meet Toby, my puppy.`

2. `[19 October, 2023] Andrew: Speaking of which, I've got some awesome news - I recently adopted another pup from a shelter. He's the best.`

3. `...`

* **Answer (Orange):** "About 4 months. [wrong]" (accompanied by a red 'X').
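Each retrieved memory carries a bracketed date and a speaker, which is what makes the later date arithmetic possible. Below is a minimal sketch of extracting those fields, assuming every memory follows the `[day Month, year] Speaker: text` layout shown above. `Memory` and `parse_memory` are hypothetical names, and the `%B` directive assumes an English locale.

```python
import re
from datetime import date, datetime
from typing import NamedTuple


class Memory(NamedTuple):
    when: date
    speaker: str
    text: str


# Assumed layout: "[11 July, 2023] Andrew: message text"
_PATTERN = re.compile(r"^\[(?P<when>[^\]]+)\]\s*(?P<speaker>[^:]+):\s*(?P<text>.*)$")


def parse_memory(raw: str) -> Memory:
    match = _PATTERN.match(raw)
    if match is None:
        raise ValueError(f"unrecognized memory format: {raw!r}")
    # "%d %B, %Y" matches strings such as "11 July, 2023".
    when = datetime.strptime(match["when"], "%d %B, %Y").date()
    return Memory(when, match["speaker"].strip(), match["text"])


m = parse_memory(
    "[11 July, 2023] Andrew: Hey! So much has changed since last time we talked "
    "- meet Toby, my puppy."
)
print(m.when, m.speaker)  # 2023-07-11 Andrew
```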

#### Panel 3: MemR³ (1/2)

* **Act 0:** `Retrieve[q-relevant memories]`

* **Memories:** `[the same as that in 2)]`

* **Evidence (Green):** "Andrew adopted Toby on July 11, 2023, and another pup was adopted near October 19, 2023."

* **Gaps (Green):** "The name of another pup is unknown, and it lacks the specific adoption date for Buddy."

* **Action:** `Retrieve`

* **Refined Query $\Delta q$ (Green):** "Buddy adoption date"

* **New Query Formula:** $q^{ret} = q \oplus \Delta q$

* **Act 1:** `Retrieve[q^{ret}-relevant memories]`

* **Memories Extracted:**

1. `[19 October, 2023] Andrew: I named him Buddy because he's my buddy and I hope him and Toby become buddies!`

2. `...`

* **Updated Evidence (Green):** "Andrew adopted Toby on July 11, 2023, and Buddy was named on October 19, 2023."

* **Updated Gaps (Green):** "It lacks the specific adoption date for Buddy."

#### Panel 4: MemR³ (2/2)

* **Action:** `Reflect`

* **Reasoning:** "Though it lacks the specific adoption date for Buddy, we can calculate the approximate number of months between the two events."

* **Act 2:** `Reflect`

* **Updated Evidence/Gaps:** `[The same as above].`

* **Action:** `Answer`

* **Draft Answer:** "To determine how many months passed between Andrew adopting Toby and Buddy, we need to look at the specific dates of these events. 1. Andrew adopted Toby on **July 11, 2023** 2. Buddy was named on **October 19, 2023**. Now, we calculate the time between these two dates: *[Calculation process omitted]* Therefore, the total number of full months that passed between Andrew adopting Toby and Buddy is **3 months**."

* **Act 3:** `Answer`

* **Final Answer (Green):** "3 months. [correct]" (accompanied by a green checkmark).

---

### Key Observations

* **Context Overload:** The "Full-Context" method fails significantly (stating 6 months), suggesting that providing too much irrelevant data confuses the model's reasoning capabilities.

* **Precision Errors:** The "Retrieve-then-Answer" method gets closer (4 months) but fails on the exact calculation, likely due to a lack of explicit reasoning steps.

* **Iterative Refinement:** MemR³ identifies that the initial retrieval didn't explicitly link the name "Buddy" to the second adoption event. It generates a refined query ($\Delta q$) to confirm the identity of the second dog.

* **Explicit Reasoning:** MemR³ includes a "Reflect" stage where it acknowledges missing data (exact adoption date for Buddy) but determines that the naming date is a sufficient proxy for calculation.

### Interpretation

The example illustrates that, for this temporal reasoning question, a **multi-hop, iterative retrieval and reflection process** (MemR³) succeeds where both single-pass methods fail.

1. **The "Heavy Context" Problem:** The diagram's annotation attributes the Full-Context failure to heavy context. In this example, the answer produced from all memories (6 months) is further from the correct value than the answer produced from targeted retrieval (4 months).

2. **Gap Analysis as a Catalyst:** The critical innovation in MemR³ shown here is the explicit identification of "Gaps." By articulating what it *doesn't* know, the system can perform a second, targeted retrieval to bridge the information void.

3. **Logical Proxying:** In the final stage, the model uses the naming date (Oct 19) as a proxy for the adoption date. The interval from July 11 to Oct 19 contains 3 full months (July 11 to Aug 11, Aug 11 to Sept 11, Sept 11 to Oct 11) plus 8 days. The "Retrieve-then-Answer" method likely rounded up to 4 months, whereas MemR³'s explicit "Draft Answer" process ensured a more precise calculation of "full months."
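The diagram omits the calculation itself, so the following is only one plausible reading of it. The sketch counts whole months between the two dates and, for contrast, the number of calendar months the interval touches. The second count is a guess at how a "4 months" answer could arise, not something the diagram states. The function names are hypothetical.

```python
from datetime import date


def full_months_between(start: date, end: date) -> int:
    """Whole months elapsed from start to end (assumes end >= start)."""
    months = (end.year - start.year) * 12 + (end.month - start.month)
    if end.day < start.day:
        months -= 1  # the last month is not yet complete
    return months


def calendar_months_touched(start: date, end: date) -> int:
    """Number of distinct calendar months the interval overlaps."""
    return (end.year - start.year) * 12 + (end.month - start.month) + 1


toby_adopted = date(2023, 7, 11)
buddy_named = date(2023, 10, 19)  # used as a proxy for Buddy's adoption date

print((buddy_named - toby_adopted).days)                   # 100
print(full_months_between(toby_adopted, buddy_named))      # 3
print(calendar_months_touched(toby_adopted, buddy_named))  # 4
```

Counting whole months gives 3, matching the answer marked correct, while counting July through October inclusively gives 4.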


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED


## Diagram: LLM Reasoning Process - Multi-Hop Question Answering

### Overview

This diagram illustrates a multi-hop question answering process using a Large Language Model (LLM). It depicts the LLM's reasoning steps to answer the question: "How many months passed between Andrew adopting Toby and Buddy?". The process is broken down into stages: Full-Context, Retrieve-then-Answer, and MemR (Memory Retrieval) with two sub-stages (1/2) and (2/2). Each stage includes a query, retrieval actions, memories, evidence, gaps, and an answer. The diagram highlights the LLM's attempts, including incorrect answers and the refinement of queries to achieve the correct result.

### Components/Axes

The diagram is structured into four main sections, arranged horizontally. Each section represents a stage in the reasoning process. Within each section, the following elements are present:

* **Query (Q):** The question or refined query being addressed.

* **Action:** The action the LLM takes (e.g., Retrieve, Reflect).

* **Memories:** Relevant memories retrieved by the LLM.

* **Evidence:** Facts extracted from the memories.

* **Gaps:** Missing information needed to answer the question.

* **Answer:** The LLM's attempt at answering the question, with an indication of correctness (correct/wrong).

* **Visual Indicators:** Red "X" marks incorrect answers, and a green checkmark indicates a correct answer.
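The elements listed above map naturally onto a per-act record. Here is a minimal sketch, assuming one record per act. `ActRecord` and its field names are hypothetical and simply mirror the list.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class ActRecord:
    query: str
    action: str  # e.g. "Retrieve", "Reflect", "Answer"
    memories: List[str] = field(default_factory=list)
    evidence: List[str] = field(default_factory=list)
    gaps: List[str] = field(default_factory=list)
    answer: Optional[str] = None
    correct: Optional[bool] = None  # None until an answer has been judged


# The final act, filled in with text quoted in the description.
final_act = ActRecord(
    query="How many months passed between Andrew adopting Toby and Buddy?",
    action="Answer",
    evidence=[
        "Andrew adopted Toby on July 11, 2023",
        "Buddy was named on October 19, 2023",
    ],
    gaps=["It lacks the specific adoption date for Buddy."],
    answer="3 months.",
    correct=True,
)
print(final_act.action, final_act.answer)  # Answer 3 months.
```

Keeping evidence and gaps as separate lists makes it easy to check whether another retrieval is warranted before answering.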

### Detailed Analysis

**1) Full-Context:**

* **Query:** How many months passed between Andrew adopting Toby and Buddy?

* **Action:** Retrieve [all memories]

* **Memories:** Not explicitly listed, but implied to be all available memories.

* **Evidence:** Andrew adopted Toby on July 11, 2023, and another pup was adopted near October 19, 2023.

* **Gaps:** The name of another pup is unknown, and lacks the specific adoption date for Buddy.

* **Answer:** Six months passed between Andrew adopting Toby and Buddy. [wrong]

* **Note:** A large red "X" is overlaid, indicating an incorrect answer. A text box states "Heavy context reduces LLM's performance".

**2) Retrieve-then-Answer:**

* **Query:** q → Δq

* **Action:** Retrieve [q-relevant memories]

* **Memories:**

1. (11 July, 2023) Andrew: Hey! So much has changed since last time we talked – meet Toby, my puppy.

2. (19 October, 2023) Andrew: Speaking of which, we’ve got some awesome news – I recently adopted another pup from a shelter. He’s the best.

* **Evidence:** Andrew adopted Toby on July 11, 2023, and another pup was adopted on October 19, 2023.

* **Gaps:** It lacks the specific adoption date for Buddy.

* **Answer:** About 4 months. [wrong]

**3) MemR (1/2):**

* **Query:** q → Δq

* **Action:** Retrieve [q-relevant memories]

* **Memories:** [the same as that in 2)]

* **Evidence:** Andrew adopted Toby on July 11, 2023, and another pup was adopted near October 19, 2023.

* **Gaps:** The name of another pup is unknown, and it lacks the specific adoption date for Buddy.

* **Action:** Retrieve

* **Refined Query:** Δq: Buddy adoption date

* **New Query:** q* = q + Δq

* **Action:** Retrieve [q*-relevant memories]

* **Memories:**

1. (19 October, 2023) Andrew: I named him Buddy because he’s my buddy and I hope him and Toby become buddies!

2. …

* **Evidence:** Andrew adopted Toby on July 11, 2023, and Buddy was named on October 19, 2023.

* **Gaps:** It lacks the specific adoption date for Buddy.

**4) MemR (2/2):**

* **Action:** Reflect

* **Reasoning:** Though it lacks the specific adoption date for Buddy, we can calculate the approximate number of months between the two events.

* **Action:** Reflect

* **Updated Evidence:** [The same as above].

* **Updated Gaps:** [The same as above].

* **Action:** Answer

* **Draft Answer:** To determine how many months passed between Andrew adopting Toby and Buddy, we need to look at the specific dates of the events.

1. Andrew adopted Toby on July 11, 2023

2. Buddy was named on October 19, 2023.

Now, we calculate the time between these two dates: [Calculation process omitted]

Therefore, the total number of full months that passed between Andrew adopting Toby and Buddy is 3 months.

* **Answer:** 3 months. [correct]

* **Note:** A green checkmark indicates a correct answer.

### Key Observations

* The LLM initially struggles with the question, providing incorrect answers in the Full-Context and Retrieve-then-Answer stages.

* The MemR stages demonstrate the LLM's ability to refine its query and retrieve more relevant information.

* The "Heavy context reduces LLM's performance" note suggests that providing too much initial information can hinder the LLM's reasoning.

* The final answer is achieved through a multi-step process of retrieval, reflection, and calculation.

### Interpretation

This diagram illustrates the iterative nature of LLM reasoning for complex questions. The LLM doesn't immediately arrive at the correct answer but refines its approach through multiple steps. The MemR stages highlight the importance of targeted memory retrieval and the ability to synthesize information from different sources. The diagram demonstrates that even with access to relevant information, LLMs may require explicit guidance and refinement of queries to achieve accurate results. The process shows how the LLM breaks down a complex question into smaller, manageable steps, ultimately leading to a correct answer. The diagram also suggests that LLM performance can be affected by the amount of initial context provided, emphasizing the need for efficient information retrieval strategies. The diagram is a visual representation of the LLM's internal thought process, providing insights into its strengths and limitations.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Diagram: Comparative Memory Retrieval Approaches for Temporal Question Answering

### Overview

The image is a technical flowchart comparing two different approaches for answering a temporal question: "How many months passed between Andrew adopting Toby and Buddy?" It contrasts a naive "Full-Context" method with a more sophisticated, multi-step "Mem²" (Memory²) method. The diagram illustrates the process, intermediate data (memories, evidence), actions taken, and the final answer for each approach, highlighting the superior accuracy of the Mem² method.

### Components/Axes

The diagram is organized into three vertical columns, each representing a distinct process flow:

1. **Left Column (Query q / Full-Context):** Shows the baseline approach of retrieving all memories at once.

2. **Middle Column (Mem² (1/2)):** Shows the first step of the Mem² approach, which retrieves only question-relevant memories.

3. **Right Column (Mem² (2/2)):** Shows the second, reflective step of the Mem² approach, which identifies and retrieves missing information to correct the answer.

**Key Textual Elements & Labels:**

* **Top Header:** "Query q", "Mem² (1/2)", "Mem² (2/2)"

* **Question (in all flows):** "How many months passed between Andrew adopting Toby and Buddy?"

* **Process Steps:** Labeled as "1) Full-Context", "2) Retrieve-then-Answer", "Act 0: Retrieve", "Act 1: Retrieve", "Act 2: Reflect", "Act 3: Answer".

* **Data Containers:** Boxes labeled "Memories:", "Evidence:", "Updated Evidence:", "Updated Gaps:", "New Query:".

* **Actions:** "Retrieve [all memories]", "Retrieve [q-relevant memories]", "Retrieve [qᴹ-relevant memories]".

* **Answers & Annotations:** Final answers are boxed, with annotations like "[wrong]", "[correct]", and explanatory notes in red and green text.

### Detailed Analysis

**1. Left Column: Full-Context / Retrieve-then-Answer**

* **Process:** Retrieves all memories in one go.

* **Memories Retrieved:**

* "[11 July, 2023] Andrew: Hey! So much has changed since last time we talked!"

* "[19 October, 2023] Andrew: Speaking of news, I've got some awesome news - I recently adopted another pup from a shelter. He's the best..."

* **Evidence Extracted:** "Andrew adopted Toby on July 11, 2023, and another pup was adopted near October 19, 2023."

* **Answer Given:** "Six months passed between Andrew adopting Toby and Buddy."

* **Outcome:** Marked with a red cross (❌) and the note: "Heavy Context reduces LLM's performance". The answer is labeled "[wrong]".

**2. Middle Column: Mem² (1/2) - Initial Retrieval**

* **Process:** Act 0 retrieves only memories relevant to the original query `q`.

* **Memories Retrieved:** "(The same as that in 2)" – referring to the two memories listed in the left column.

* **Evidence Extracted:** "Andrew adopted Toby on July 11, 2023, and another pup was adopted near October 19, 2023."

* **Gap Identified:** "The name of another pup is unknown, and it lacks the specific adoption date for Buddy."

* **Action Taken:** "Retrieve [Buddy adoption date]".

* **New Query Generated:** `qᴹ = q ⊕ Gap` (Query plus identified Gap).

* **Act 1 Retrieval:** Retrieves a new, specific memory: "[23 October, 2023] Andrew: I named him [Buddy] because he's my buddy and I hope him and Toby become buddies! :)"

* **Updated Evidence:** "Andrew adopted Toby on July 11, 2023, and Buddy was named on October 19, 2023." *(Note: The diagram shows "October 19" here, but the retrieved memory is dated "23 October". This is a potential inconsistency or approximation within the diagram's narrative.)*

* **Updated Gap:** "It lacks the specific adoption date for Buddy."

**3. Right Column: Mem² (2/2) - Reflection & Correction**

* **Process:** Act 2 is a "Reflect" step.

* **Reasoning:** "Though it lacks the specific adoption date for Buddy, we can calculate the approximate number of months between the two events."

* **Updated Evidence & Gaps:** "(The same as above)".

* **Final Answer Formulation:** The process lists the two key dates:

1. Andrew adopted Toby on **July 11, 2023**.

2. Buddy was named on **October 19, 2023**.

* **Calculation:** "Now, let's calculate the time between these two dates: [Calculation process omitted]".

* **Conclusion:** "Therefore, the total number of full months that have passed between Andrew adopting Toby and Buddy is **3 months**."

* **Outcome:** Marked with a green checkmark (✅) and the label "[correct]".

### Key Observations

1. **Performance Contrast:** The Full-Context method fails, providing an incorrect answer of "six months," while the Mem² method succeeds with "3 months."

2. **Error Source:** The Full-Context error likely stems from the LLM misinterpreting the vague phrase "near October 19, 2023" in the evidence, possibly rounding up or misaligning the timeline.

3. **Mem² Mechanism:** The success of Mem² is attributed to its iterative, reflective process. It explicitly identifies missing information (the specific date for Buddy), retrieves it, and then performs a reasoned calculation based on the concrete dates (July 11 to October 19).

4. **Data Discrepancy:** There is a minor inconsistency in the diagram's narrative. The memory retrieved in Act 1 is dated "23 October, 2023," but the evidence and final calculation use "October 19, 2023." This suggests the diagram may be simplifying or that the system interprets the naming event as the relevant temporal anchor for "Buddy."

5. **Spatial Layout:** The legend (color-coded annotations) is integrated directly into the flow. Red text (❌, "wrong", "Heavy Context...") indicates failure points in the left column. Green text (✅, "correct") indicates success in the right column. The flow is strictly top-to-bottom within each column, with arrows connecting the steps.

### Interpretation

This diagram serves as a technical demonstration of an advanced memory-augmented reasoning system (Mem²) designed to overcome the limitations of standard large language models (LLMs) when dealing with complex, multi-hop questions requiring precise temporal reasoning.

* **What it demonstrates:** It argues that simply feeding all available context to an LLM is insufficient and can lead to errors, especially when information is scattered or vague. The Mem² approach mimics human-like reasoning by:

1. **Initial Assessment:** Understanding what is known and, crucially, *what is not known* (identifying the "Gap").

2. **Targeted Information Retrieval:** Seeking only the specific missing data.

3. **Reflective Synthesis:** Integrating the new information with the old to perform a logical calculation, even if the perfect data point (exact adoption date) remains unavailable.

* **Underlying Principle:** The system prioritizes *reasoned approximation based on concrete data points* over *speculative interpretation of vague context*. The correct answer ("3 months") is derived from the known interval between July 11 and October 19, which is a more reliable approach than guessing from the phrase "near October 19."

* **Broader Implication:** The diagram advocates for AI architectures that incorporate explicit steps for gap analysis, targeted retrieval, and reflective reasoning, moving beyond monolithic context processing to achieve higher accuracy in knowledge-intensive tasks.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Screenshot: Comparative Analysis of Question-Answering Methods

### Overview

The image is a comparative analysis of three question-answering methods (Full-Context, Retrieve-then-Answer, MenR) applied to a temporal reasoning task. Each method is presented in a vertical panel with color-coded annotations indicating performance outcomes (red for errors, green for correct answers). The task involves calculating the number of months between two adoption events described in a conversation.

---

### Components/Axes

1. **Panels**: Three vertical sections labeled:

- **1) Full-Context** (left)

- **2) Retrieve-then-Answer** (center)

- **3) MenR** (right)

2. **Annotations**:

- Red crosses (❌) for incorrect answers

- Green checkmarks (✅) for correct answers

- Color highlights (orange, green) for key text

3. **Text Elements**:

- **Query**: Consistent across all panels: *"How many months passed between Andrew adopting Toby and Buddy?"*

- **Memories**: Contextual snippets from a conversation

- **Action**: Method-specific steps (e.g., "Retrieve," "Reflect")

- **Reasoning**: Explanations of gaps/errors

- **Answer**: Final output with correctness indicators

---

### Detailed Analysis

#### 1) Full-Context Panel

- **Query**: Same as others.

- **Memories**: Entire conversation history provided.

- **Action**: Direct answer extraction.

- **Reasoning**:

- Incorrect answer: *"Six months passed between Andrew adopting Toby and Buddy."*

- Error flagged: *"Heavy Context reduces LLM's performance"* (red annotation).

- **Answer**: ❌ Incorrect (6 months).

#### 2) Retrieve-then-Answer Panel

- **Query**: Refined to *"Buddy adoption date"*.

- **Memories**: Filtered to relevant dates:

- *"Andrew adopted Toby on July 11, 2023."*

- *"Andrew adopted Buddy on October 19, 2023."*

- **Action**:

- Retrieve relevant memories.

- Calculate time difference.

- **Reasoning**:

- Updated evidence: Adoption dates confirmed.

- Gaps: *"Calculation process omitted."*

- **Answer**: ❌ Incorrect (4 months).

#### 3) MenR Panel

- **Query**: Further refined to *"Buddy adoption date"*.

- **Memories**: Same as Retrieve-then-Answer.

- **Action**:

- Reflect on reasoning gaps.

- Draft answer using specific dates.

- **Reasoning**:

- Updated evidence: Adoption dates confirmed.

- Calculation: *"3 months passed between adoption dates."*

- **Answer**: ✅ Correct (3 months).

---

### Key Observations

1. **Full-Context** fails due to overwhelming context, leading to incorrect inference.

2. **Retrieve-then-Answer** improves by filtering memories but omits critical calculation steps.

3. **MenR** succeeds by iteratively refining queries, reflecting on gaps, and explicitly calculating the time difference using precise dates.

---

### Interpretation

The image demonstrates the importance of **memory retrieval precision** and **explicit reasoning** in temporal reasoning tasks. The MenR method outperforms others by:

- **Refining queries** to extract specific evidence (adoption dates).

- **Identifying and addressing gaps** in reasoning (e.g., omitted calculations).

- **Leveraging structured reflection** to correct errors.

The red/green annotations highlight how context management and iterative reasoning directly impact accuracy. The correct answer (3 months) relies on precise date extraction and arithmetic, which only MenR executes fully.
