## Reasoning Process Diagram: Question Answering with Different Retrieval Strategies

### Overview

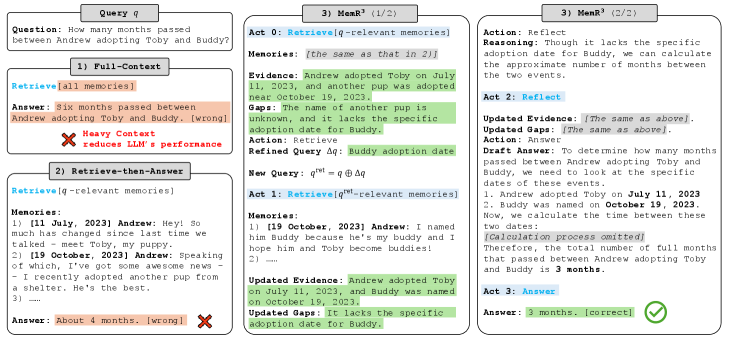

The image is a diagram comparing three approaches to answering a question with a large language model (LLM). The question is: "How many months passed between Andrew adopting Toby and Buddy?" For each approach (Full-Context, Retrieve-then-Answer, and MemR^3), the diagram shows the reasoning process and the final answer, contrasting their accuracy and the amount of context each one uses.

### Components/Axes

* **Query q:** The initial question: "How many months passed between Andrew adopting Toby and Buddy?"

* **1) Full-Context:** This approach retrieves all available memories and uses them to answer the question.

    * `Retrieve[all memories]`

    * Answer: "Six months passed between Andrew adopting Toby and Buddy. [wrong]"

    * "Heavy Context reduces LLM's performance" (indicated by a red "X")

* **2) Retrieve-then-Answer:** This approach retrieves only the memories relevant to the question and then answers the question.

    * `Retrieve[q-relevant memories]`

    * Memories:

        * "1) [11 July, 2023] Andrew: Hey! So much has changed since last time we talked - meet Toby, my puppy."

        * "2) [19 October, 2023] Andrew: Speaking of which, I've got some awesome news - I recently adopted another pup from a shelter. He's the best."

        * "3) ..."

    * Answer: "About 4 months. [wrong]" (indicated by a red "X")

* **3) MemR^3 (1/2 & 2/2):** This approach uses a more refined retrieval and reasoning process, involving multiple acts.

    * **Act 0:** `Retrieve[q-relevant memories]`

        * Memories: "[the same as that in 2)]"

        * Evidence: "Andrew adopted Toby on July 11, 2023, and another pup was adopted near October 19, 2023."

        * Gaps: "The name of another pup is unknown, and it lacks the specific adoption date for Buddy."

        * Action: Retrieve

        * Refined Query Δq: "Buddy adoption date"

        * New Query: q^{ret} = q ⊕ Δq

    * **Act 1:** `Retrieve[q^{ret}-relevant memories]`

        * Memories:

            * "1) [19 October, 2023] Andrew: I named him Buddy because he's my buddy and I hope him and Toby become buddies!"

            * "2) ----"

        * Updated Evidence: "Andrew adopted Toby on July 11, 2023, and Buddy was named on October 19, 2023."

        * Updated Gaps: "It lacks the specific adoption date for Buddy."

    * **Act 2:** Reflect

        * Action: Reflect

        * Reasoning: "Though it lacks the specific adoption date for Buddy, we can calculate the approximate number of months between the two events."

        * Updated Evidence: "[The same as above]."

        * Updated Gaps: "[The same as above]."

        * Action: Answer

        * Draft Answer: "To determine how many months passed between Andrew adopting Toby and Buddy, we need to look at the specific dates of these events."

            * "1. Andrew adopted Toby on July 11, 2023"

            * "2. Buddy was named on October 19, 2023."

            * "Now, we calculate the time between these two dates: [Calculation process omitted]"

            * "Therefore, the total number of full months that passed between Andrew adopting Toby and Buddy is 3 months."

    * **Act 3:** Answer

        * Answer: "3 months. [correct]" (indicated by a green checkmark)
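
The draft answer counts full months between the two dates but the figure omits the calculation. As an illustration only, and not the calculation from the figure, the sketch below shows one common way to count whole calendar months. The function name `full_months_between` is hypothetical, and treating the October 19 naming date as a proxy for Buddy's adoption date follows the reasoning in Act 2.

```python
from datetime import date


def full_months_between(start: date, end: date) -> int:
    """Count whole calendar months elapsed from start to end (start <= end)."""
    months = (end.year - start.year) * 12 + (end.month - start.month)
    # If the day-of-month of `end` is earlier than that of `start`,
    # the final month is incomplete and is not counted.
    if end.day < start.day:
        months -= 1
    return months


toby_adopted = date(2023, 7, 11)   # date of memory 1 in the figure
buddy_named = date(2023, 10, 19)   # assumed proxy for Buddy's adoption date

print(full_months_between(toby_adopted, buddy_named))  # 3
```

Under this convention the gap is three full months plus eight days, which agrees with the "3 months" answer marked correct and explains why "About 4 months" overshoots.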

### Detailed Analysis

* **Full-Context:** This method uses all available information, leading to an incorrect answer, possibly due to irrelevant information diluting the relevant facts.

* **Retrieve-then-Answer:** This method retrieves relevant memories but still provides an incorrect answer, suggesting that the retrieved information is insufficient or misinterpreted.

* **MemR^3:** This method refines the query and iteratively retrieves and reflects on the information, leading to the correct answer. The process involves identifying gaps in the knowledge and specifically targeting those gaps with refined queries.
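
The control flow described for MemR^3 (retrieve, track evidence and gaps, then choose to retrieve again, reflect, or answer) can be sketched roughly as follows. This is a minimal sketch under stated assumptions, not the method's actual implementation: `run_loop`, `Decision`, `toy_retrieve`, and `toy_decide` are hypothetical names, the two toy functions are scripted stand-ins for a real retriever and an LLM controller, the answer string is hard-coded from the figure, and ⊕ is assumed to mean string concatenation.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


@dataclass
class Decision:
    action: str              # "retrieve", "reflect", or "answer"
    refined_query: str = ""  # delta-q, used only when action == "retrieve"
    answer: str = ""         # used only when action == "answer"


def run_loop(
    question: str,
    retrieve: Callable[[str], List[str]],
    decide: Callable[[str, List[str], List[str]], Decision],
    max_acts: int = 6,
) -> Tuple[Optional[str], List[str]]:
    """Iterate retrieve / reflect / answer acts until an answer is produced."""
    evidence: List[str] = []
    trace: List[str] = []
    decision = Decision(action="retrieve")  # Act 0 retrieves with the plain question
    for _ in range(max_acts):
        trace.append(decision.action)
        if decision.action == "answer":
            return decision.answer, trace
        if decision.action == "retrieve":
            # q_ret = q (+) delta-q; concatenation is an assumption here.
            query = f"{question} {decision.refined_query}".strip()
            evidence += [m for m in retrieve(query) if m not in evidence]
        # A "reflect" act adds no evidence; the controller simply decides again.
        decision = decide(question, evidence, trace)
    return None, trace  # act budget exhausted without an answer


M1 = "[11 July, 2023] Andrew: ... meet Toby, my puppy."
M2 = "[19 October, 2023] Andrew: ... I recently adopted another pup from a shelter."
M3 = "[19 October, 2023] Andrew: I named him Buddy ..."


def toy_retrieve(query: str) -> List[str]:
    # Scripted stand-in for a retriever: the refined query surfaces M3.
    return [M3] if "Buddy adoption date" in query else [M1, M2]


def toy_decide(question: str, evidence: List[str], trace: List[str]) -> Decision:
    # Scripted stand-in for the LLM controller.
    if not any("Buddy" in m for m in evidence):
        return Decision("retrieve", refined_query="Buddy adoption date")
    if "reflect" not in trace:
        return Decision("reflect")
    return Decision("answer", answer="3 months")  # hard-coded from the figure


QUESTION = "How many months passed between Andrew adopting Toby and Buddy?"
answer, trace = run_loop(QUESTION, toy_retrieve, toy_decide)
print(answer, trace)
```

With these scripted stand-ins the trace is retrieve, retrieve, reflect, answer, mirroring Acts 0 through 3 in the diagram. The `max_acts` budget is a design choice of this sketch so that the loop terminates even if the controller never chooses to answer.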

### Key Observations

* The Full-Context approach answers "Six months", which is marked wrong; the diagram attributes this to heavy context reducing the LLM's performance.

* The Retrieve-then-Answer approach answers "About 4 months", which is also marked wrong even though it retrieves the relevant dated memories, indicating a reasoning error or insufficient information.

* The MemR^3 approach succeeds by iteratively refining the query and focusing on filling knowledge gaps.

### Interpretation

The diagram argues that targeted retrieval combined with iterative reasoning yields more accurate answers than either supplying all available memories or retrieving once and answering immediately. The failure of the Full-Context approach suggests that irrelevant information can degrade LLM performance. The failure of Retrieve-then-Answer suggests that a single retrieval pass can leave gaps, such as the unknown name of the second pup. MemR^3 succeeds because it tracks evidence and gaps explicitly, issues a refined query to close a gap, and answers only after reflecting on what remains unknown.