## Diagram Type: Comparative Workflow for Memory-Augmented LLMs

### Overview

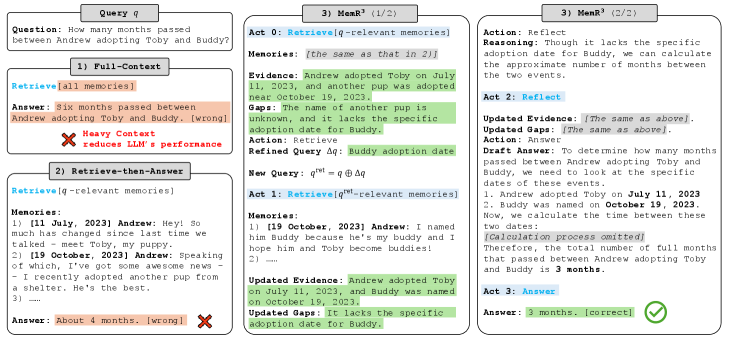

This technical diagram compares three approaches a Large Language Model (LLM) can take to answer a temporal reasoning question from a set of "memories" (stored conversational data). The diagram is organized into five regions: a top-left query box, two left-side panels showing failed baseline methods, and two larger panels to their right detailing the successful iterative process of a method called **MemR³**.

### Components/Axes

* **Query $q$ (Top-Left):** Defines the input task.

* **1) Full-Context (Middle-Left):** A baseline method that provides all available data to the model at once.

* **2) Retrieve-then-Answer (Bottom-Left):** A standard Retrieval-Augmented Generation (RAG) approach.

* **3) MemR³ (Center and Right):** A multi-step, iterative reasoning and retrieval framework, split into two parts (1/2 and 2/2).

* **Color Coding:**

    * **Blue Text in Brackets:** Represents specific system actions or "Acts" (e.g., `Retrieve`, `Reflect`, `Answer`).

    * **Orange Highlight:** Indicates incorrect answers or failed outputs.

    * **Green Highlight:** Indicates successful evidence extraction, gap identification, query refinement, and the final correct answer.

    * **Red 'X' Icon:** Denotes a failure in performance or accuracy.

    * **Green Checkmark Icon:** Denotes a successful and correct output.

---

### Content Details

#### Header: Query $q$

* **Question:** "How many months passed between Andrew adopting Toby and Buddy?"

#### Panel 1: Full-Context

* **Action:** `Retrieve[all memories]`

* **Answer (Orange):** "Six months passed between Andrew adopting Toby and Buddy. [wrong]"

* **Annotation (Red):** "Heavy Context reduces LLM's performance" (accompanied by a red 'X').

#### Panel 2: Retrieve-then-Answer

* **Action:** `Retrieve[q-relevant memories]`

* **Memories Extracted:**

    1. `[11 July, 2023] Andrew: Hey! So much has changed since last time we talked - meet Toby, my puppy.`

    2. `[19 October, 2023] Andrew: Speaking of which, I've got some awesome news - I recently adopted another pup from a shelter. He's the best.`

    3. `...`

* **Answer (Orange):** "About 4 months. [wrong]" (accompanied by a red 'X').

#### Panel 3: MemR³ (1/2)

* **Act 0:** `Retrieve[q-relevant memories]`

* **Memories:** `[the same as that in 2)]`

* **Evidence (Green):** "Andrew adopted Toby on July 11, 2023, and another pup was adopted near October 19, 2023."

* **Gaps (Green):** "The name of another pup is unknown, and it lacks the specific adoption date for Buddy."

* **Action:** `Retrieve`

* **Refined Query $\Delta q$ (Green):** "Buddy adoption date"

* **New Query Formula:** $q^{ret} = q \oplus \Delta q$

* **Act 1:** `Retrieve[q^{ret}-relevant memories]`

* **Memories Extracted:**

    1. `[19 October, 2023] Andrew: I named him Buddy because he's my buddy and I hope him and Toby become buddies!`

    2. `...`

* **Updated Evidence (Green):** "Andrew adopted Toby on July 11, 2023, and Buddy was named on October 19, 2023."

* **Updated Gaps (Green):** "It lacks the specific adoption date for Buddy."

#### Panel 4: MemR³ (2/2)

* **Action:** `Reflect`

* **Reasoning:** "Though it lacks the specific adoption date for Buddy, we can calculate the approximate number of months between the two events."

* **Act 2:** `Reflect`

* **Updated Evidence/Gaps:** `[The same as above].`

* **Action:** `Answer`

* **Draft Answer:** "To determine how many months passed between Andrew adopting Toby and Buddy, we need to look at the specific dates of these events. 1. Andrew adopted Toby on **July 11, 2023** 2. Buddy was named on **October 19, 2023**. Now, we calculate the time between these two dates: *[Calculation process omitted]* Therefore, the total number of full months that passed between Andrew adopting Toby and Buddy is **3 months**."

* **Act 3:** `Answer`

* **Final Answer (Green):** "3 months. [correct]" (accompanied by a green checkmark).
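
The act sequence shown in the two MemR³ panels can be sketched as a small control loop. This is only an illustration under stated assumptions, not the method's actual implementation: the retriever is a stub that replays the memories from the diagram, `update` and `next_action` are hypothetical rule-based stand-ins for decisions the diagram attributes to the LLM, the refined query $\Delta q$ is passed in rather than generated, and $q \oplus \Delta q$ is modeled as plain string concatenation because the diagram does not define the operator.

```python
from dataclasses import dataclass, field

Q = "How many months passed between Andrew adopting Toby and Buddy?"

# Memory texts copied from the diagram.
MEM_TOBY = ("[11 July, 2023] Andrew: Hey! So much has changed since last "
            "time we talked - meet Toby, my puppy.")
MEM_PUP = ("[19 October, 2023] Andrew: Speaking of which, I've got some "
           "awesome news - I recently adopted another pup from a shelter. "
           "He's the best.")
MEM_NAMED = ("[19 October, 2023] Andrew: I named him Buddy because he's my "
             "buddy and I hope him and Toby become buddies!")


@dataclass
class State:
    evidence: list = field(default_factory=list)
    gaps: list = field(default_factory=list)
    trace: list = field(default_factory=list)


def retrieve(query: str) -> list[str]:
    """Stub retriever (assumption): replays the results shown in the diagram."""
    if "Buddy adoption date" in query:
        return [MEM_NAMED]
    return [MEM_TOBY, MEM_PUP]


def update(state: State, memories: list[str]) -> None:
    """Hypothetical stand-in for the LLM's evidence and gap tracking."""
    for memory in memories:
        if memory not in state.evidence:
            state.evidence.append(memory)
    state.gaps = ["specific adoption date for Buddy"]
    if not any("named him Buddy" in m for m in state.evidence):
        state.gaps.insert(0, "name of the other pup")


def next_action(state: State, last_act: str, refined: bool) -> str:
    """Hypothetical policy: refine once, then reflect, then answer."""
    if last_act == "Retrieve" and state.gaps and not refined:
        return "Retrieve"
    if last_act == "Retrieve" and state.gaps:
        return "Reflect"
    return "Answer"


def run(q: str, delta_q: str = "Buddy adoption date", max_acts: int = 4) -> State:
    state, query, act, refined = State(), q, "Retrieve", False
    for _ in range(max_acts):
        state.trace.append(act)
        if act == "Answer":
            break
        if act == "Retrieve":
            update(state, retrieve(query))
        # A Reflect act changes nothing here; in the diagram it is where the
        # model reasons that the remaining gap does not block an answer.
        act = next_action(state, act, refined)
        if act == "Retrieve":
            query = f"{q} {delta_q}"  # assumption: q ⊕ Δq as concatenation
            refined = True
    return state


state = run(Q)
print(state.trace)
print(state.gaps)
```

With the stubbed retriever, the recorded trace follows Acts 0 to 3 from the diagram (`Retrieve`, `Retrieve`, `Reflect`, `Answer`), and the only gap left at answer time is the missing adoption date for Buddy.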

---

### Key Observations

* **Context Overload:** The "Full-Context" method is furthest from the correct answer (6 months instead of 3), which the diagram attributes to heavy context degrading the model's performance.

* **Precision Errors:** The "Retrieve-then-Answer" method gets closer (4 months) but fails on the exact calculation, likely due to a lack of explicit reasoning steps.

* **Iterative Refinement:** MemR³ identifies that the initial retrieval did not explicitly link the name "Buddy" to the second adoption event. It generates a refined query ($\Delta q$ = "Buddy adoption date"), and the follow-up retrieval confirms that the second dog is Buddy.

* **Explicit Reasoning:** MemR³ includes a "Reflect" stage where it acknowledges missing data (exact adoption date for Buddy) but determines that the naming date is a sufficient proxy for calculation.

### Interpretation

This single worked example illustrates how, for a temporal reasoning task that spans several memories, a **multi-hop, iterative retrieval and reflection process** (MemR³) can succeed where single-pass methods fail.

1. **The "Heavy Context" Problem:** The diagram suggests that LLMs can be distracted by irrelevant context. In this example, the full-context answer (6 months) is further from the correct value than the targeted-retrieval answer (4 months).

2. **Gap Analysis as a Catalyst:** The critical innovation in MemR³ shown here is the explicit identification of "Gaps." By articulating what it *doesn't* know, the system can perform a second, targeted retrieval to bridge the information void.

3. **Logical Proxying:** In the final stage, the model uses the naming date (Oct 19) as a proxy for the adoption date. The span from July 11 to Oct 19 contains 3 full months (July 11 to Aug 11, Aug 11 to Sept 11, Sept 11 to Oct 11), with 8 days left over. The "Retrieve-then-Answer" method likely rounded up to 4 months, whereas MemR³'s explicit "Draft Answer" step produced a count of full months.
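
The month arithmetic can be checked with a short script. This is a minimal sketch using Python's standard `datetime` module; the helper name `full_months_between` is hypothetical, the October 19 date is the naming date used as a proxy (as in the diagram), and the "rounded up" figure only illustrates one way a 4-month answer could arise, not a confirmed account of the baseline's reasoning.

```python
from datetime import date


def full_months_between(start: date, end: date) -> int:
    """Count completed months from start to end (assumes end >= start)."""
    months = (end.year - start.year) * 12 + (end.month - start.month)
    if end.day < start.day:
        months -= 1  # the final month has not been completed yet
    return months


toby_adopted = date(2023, 7, 11)
buddy_proxy = date(2023, 10, 19)  # naming date, standing in for adoption date

full = full_months_between(toby_adopted, buddy_proxy)

# Rounding any partial month up. The day-of-month comparison is enough for
# these two dates but ignores end-of-month edge cases (e.g. Jan 31 to Feb 28).
rounded_up = full + int(buddy_proxy.day > toby_adopted.day)

print(full)        # 3
print(rounded_up)  # 4
```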