
## Diagram: LLM Reasoning Process - Multi-Hop Question Answering

### Overview

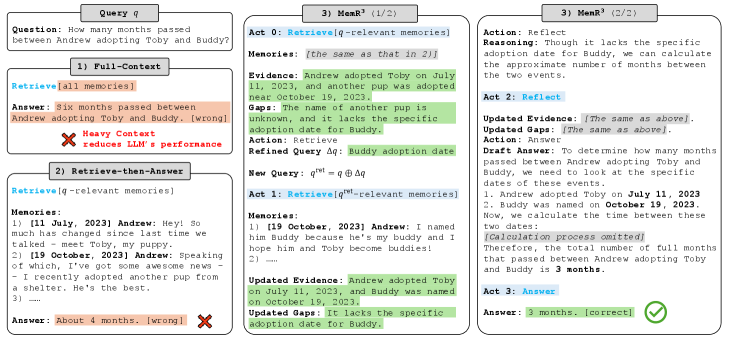

This diagram illustrates a multi-hop question answering process using a Large Language Model (LLM). It depicts the LLM's reasoning steps to answer the question: "How many months passed between Andrew adopting Toby and Buddy?". The process is broken down into stages: Full-Context, Retrieve-then-Answer, and MemR (Memory Retrieval) with two sub-stages (1/2) and (2/2). Each stage includes a query, retrieval actions, memories, evidence, gaps, and an answer. The diagram highlights the LLM's attempts, including incorrect answers and the refinement of queries to achieve the correct result.

### Components

The diagram is structured into four main sections, arranged horizontally. Each section represents a stage in the reasoning process. Within each section, the following elements are present:

* **Query (Q):** The question or refined query being addressed.

* **Action:** The action the LLM takes (e.g., Retrieve, Reflect).

* **Memories:** Relevant memories retrieved by the LLM.

* **Evidence:** Facts extracted from the memories.

* **Gaps:** Missing information needed to answer the question.

* **Answer:** The LLM's attempt at answering the question, with an indication of correctness (correct/wrong).

* **Visual Indicators:** Red "X" marks incorrect answers, and a green checkmark indicates a correct answer.
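The per-stage elements listed above can be pictured as one record per panel. The following is a minimal sketch, assuming a hypothetical `StageState` container whose field names are chosen for illustration only; the diagram does not specify any data structure. The example instance is filled with the Full-Context values transcribed later in this description.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class StageState:
    """Hypothetical record of what one panel of the diagram shows."""
    query: str
    action: str  # e.g. "Retrieve", "Reflect", "Answer"
    memories: List[str] = field(default_factory=list)
    evidence: List[str] = field(default_factory=list)
    gaps: List[str] = field(default_factory=list)
    answer: Optional[str] = None
    is_correct: Optional[bool] = None  # red "X" -> False, green checkmark -> True


# Values transcribed from the Full-Context panel; memories are not listed there.
full_context = StageState(
    query="How many months passed between Andrew adopting Toby and Buddy?",
    action="Retrieve",
    evidence=[
        "Andrew adopted Toby on July 11, 2023",
        "Another pup was adopted near October 19, 2023",
    ],
    gaps=[
        "The name of another pup is unknown",
        "It lacks the specific adoption date for Buddy",
    ],
    answer="Six months passed between Andrew adopting Toby and Buddy.",
    is_correct=False,
)
```

Keeping `answer` and `is_correct` optional reflects that a panel such as MemR (1/2) ends without an answer.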

### Detailed Analysis

**1) Full-Context:**

* **Query:** How many months passed between Andrew adopting Toby and Buddy?

* **Action:** Retrieve [all memories]

* **Memories:** Not explicitly listed, but implied to be all available memories.

* **Evidence:** Andrew adopted Toby on July 11, 2023, and another pup was adopted near October 19, 2023.

* **Gaps:** The name of another pup is unknown, and it lacks the specific adoption date for Buddy.

* **Answer:** Six months passed between Andrew adopting Toby and Buddy. [wrong]

* **Note:** A large red "X" is overlaid, indicating an incorrect answer. A text box states "Heavy context reduces LLM's performance".

**2) Retrieve-then-Answer:**

* **Query:** q → Δq

* **Action:** Retrieve [q-relevant memories]

* **Memories:**

1. (11 July, 2023) Andrew: Hey! So much has changed since last time we talked – meet Toby, my puppy.

2. (19 October, 2023) Andrew: Speaking of which, we’ve got some awesome news – I recently adopted another pup from a shelter. He’s the best.

* **Evidence:** Andrew adopted Toby on July 11, 2023, and another pup was adopted on October 19, 2023.

* **Gaps:** It lacks the specific adoption date for Buddy.

* **Answer:** About 4 months. [wrong]

**3) MemR (1/2):**

* **Query:** q → Δq

* **Action:** Retrieve [q-relevant memories]

* **Memories:** [the same as that in 2)]

* **Evidence:** Andrew adopted Toby on July 11, 2023, and another pup was adopted near October 19, 2023.

* **Gaps:** The name of another pup is unknown, and it lacks the specific adoption date for Buddy.

* **Action:** Retrieve

* **Refined Query:** Δq: Buddy adoption date

* **New Query:** q* = q + Δq

* **Action:** Retrieve [q*-relevant memories]

* **Memories:**

1. (19 October, 2023) Andrew: I named him Buddy because he’s my buddy and I hope him and Toby become buddies!

2. …

* **Evidence:** Andrew adopted Toby on July 11, 2023, and Buddy was named on October 19, 2023.

* **Gaps:** It lacks the specific adoption date for Buddy.
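The retrieve, reflect, and refine cycle in this panel can be sketched as a small control loop. This is an illustrative sketch only, not the diagram's implementation: `memr_loop`, `stub_retrieve`, and `stub_reflect` are hypothetical names, and both stubs simply replay the memories, evidence, and gaps transcribed above instead of calling a real retriever or LLM. The only relationship taken directly from the diagram is `q* = q + Δq`.

```python
from typing import Callable, List, Tuple

Q = "How many months passed between Andrew adopting Toby and Buddy?"
GAP_DATE = "It lacks the specific adoption date for Buddy."


def stub_retrieve(query: str) -> List[str]:
    """Stand-in retriever that replays the memories listed in the diagram."""
    if "Buddy adoption date" in query:
        return ["(19 October, 2023) Andrew: I named him Buddy because he's my "
                "buddy and I hope him and Toby become buddies!"]
    return [
        "(11 July, 2023) Andrew: Hey! So much has changed since last time "
        "we talked - meet Toby, my puppy.",
        "(19 October, 2023) Andrew: Speaking of which, we've got some awesome "
        "news - I recently adopted another pup from a shelter. He's the best.",
    ]


def stub_reflect(memories: List[str]) -> Tuple[List[str], List[str], str]:
    """Stand-in reflection step returning (evidence, gaps, delta_q)."""
    if any("named him Buddy" in m for m in memories):
        evidence = ["Andrew adopted Toby on July 11, 2023",
                    "Buddy was named on October 19, 2023"]
        return evidence, [GAP_DATE], ""  # nothing further to ask for
    evidence = ["Andrew adopted Toby on July 11, 2023",
                "Another pup was adopted near October 19, 2023"]
    gaps = ["The name of another pup is unknown.", GAP_DATE]
    return evidence, gaps, "Buddy adoption date"


def memr_loop(q: str,
              retrieve: Callable[[str], List[str]],
              reflect: Callable[[List[str]], Tuple[List[str], List[str], str]],
              max_rounds: int = 2) -> Tuple[str, List[str], List[str]]:
    """Retrieve, reflect, and refine the query until no refinement is proposed."""
    query, evidence, gaps = q, [], []
    for _ in range(max_rounds):
        memories = retrieve(query)
        evidence, gaps, delta_q = reflect(memories)
        if not delta_q:
            break
        query = f"{q} {delta_q}"  # q* = q + delta_q
    return query, evidence, gaps


final_query, evidence, gaps = memr_loop(Q, stub_retrieve, stub_reflect)
```

With these stubs the loop runs two rounds: the first proposes the refinement "Buddy adoption date", and the second retrieves the naming memory and stops with one remaining gap.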

**4) MemR (2/2):**

* **Action:** Reflect

* **Reasoning:** Though it lacks the specific adoption date for Buddy, we can calculate the approximate number of months between the two events.

* **Action:** Reflect

* **Updated Evidence:** [The same as above].

* **Updated Gaps:** [The same as above].

* **Action:** Answer

* **Draft Answer:** To determine how many months passed between Andrew adopting Toby and Buddy, we need to look at the specific dates of the events.

1. Andrew adopted Toby on July 11, 2023

2. Buddy was named on October 19, 2023.

Now, we calculate the time between these two dates: [Calculation process omitted]

Therefore, the total number of full months that passed between Andrew adopting Toby and Buddy is 3 months.

* **Answer:** 3 months. [correct]

* **Note:** A green checkmark indicates a correct answer.
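The diagram omits its calculation, so the following is only one plausible way to count full months between the two dates it cites. The helper name `full_months_between` is an assumption made for this sketch, and the function assumes the end date is not earlier than the start date.

```python
from datetime import date


def full_months_between(start: date, end: date) -> int:
    """Count whole calendar months elapsed from start to end (end >= start)."""
    months = (end.year - start.year) * 12 + (end.month - start.month)
    # If the end day-of-month has not yet reached the start day-of-month,
    # the last month is incomplete and is not counted.
    if end.day < start.day:
        months -= 1
    return months


toby_adopted = date(2023, 7, 11)   # from the evidence in the diagram
buddy_named = date(2023, 10, 19)   # naming date, used as a proxy for adoption

print(full_months_between(toby_adopted, buddy_named))  # 3
```

Under this counting rule, July 11 to October 11 is three full months, and the remaining eight days do not make a fourth, which matches the answer marked correct.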

### Key Observations

* The LLM initially struggles with the question, providing incorrect answers in the Full-Context and Retrieve-then-Answer stages.

* The MemR stages demonstrate the LLM's ability to refine its query and retrieve more relevant information.

* The "Heavy context reduces LLM's performance" note suggests that providing too much initial information can hinder the LLM's reasoning.

* The final answer is achieved through a multi-step process of retrieval, reflection, and calculation.

### Interpretation

This diagram illustrates the iterative nature of LLM reasoning on multi-hop questions. The LLM does not arrive at the correct answer immediately; it gets there by refining its query over several steps. The MemR stages highlight the value of targeted memory retrieval: the refined query Δq surfaces the memory in which Andrew names the second pup Buddy, which ties the October 19, 2023 date to the dog named in the question.

The diagram also shows that access to relevant information is not sufficient on its own. The Retrieve-then-Answer stage holds both dated memories yet still answers incorrectly, whereas MemR reaches the correct result after explicit query refinement and reflection. Finally, the "Heavy context reduces LLM's performance" note suggests that the amount of initial context affects performance, which argues for efficient retrieval strategies over passing in every memory.