## Process Flow Diagram: Expert Selection Mechanism

### Overview

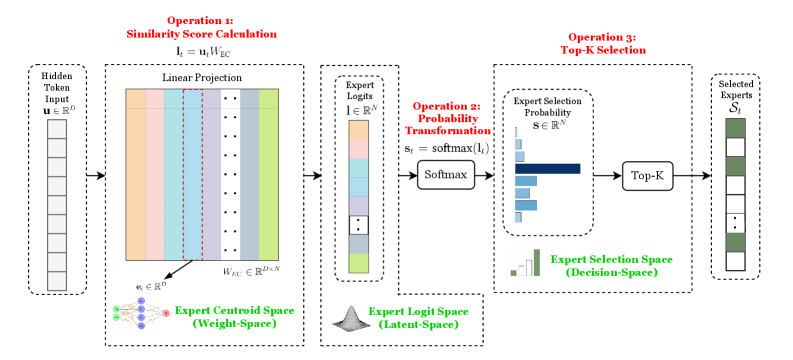

This image is a technical process flow diagram illustrating a three-step mechanism for selecting "experts" (likely in a machine learning or neural network context, such as a Mixture-of-Experts model). The flow proceeds from left to right, starting with a "Hidden Token Input" and culminating in "Selected Experts." The diagram uses mathematical notation, color-coded blocks, and labeled spaces to explain the transformation of data.

### Components/Axes

The diagram is segmented into four main vertical sections, connected by arrows indicating data flow. Each major operation is enclosed in a dashed box and labeled in red text.

**1. Input (Far Left):**

* **Label:** `Hidden Token Input`

* **Mathematical Notation:** `u ∈ R^d` (indicating a vector `u` in a d-dimensional real space).

* **Visual:** A vertical column of 8 empty white rectangles, representing a sequence of token vectors.

**2. Operation 1: Similarity Score Calculation (Left-Center):**

* **Title (Red):** `Operation 1: Similarity Score Calculation`

* **Equation:** `l_i = u_i W_EC`

* **Process Label:** `Linear Projection`

* **Visual:** A large block composed of 8 vertical colored bars (from left to right: orange, light blue, purple, grey, light green, dark blue, yellow, dark green). These represent the projection of the input `u` onto expert centroids.

* **Key Components:**

    * `W_EC ∈ R^(d×N)`: The weight matrix for the Expert Centroid Space.

    * `e_i ∈ R^d`: A single expert centroid vector, pointed to by an arrow from the colored bars.

* **Space Label (Green, Bottom):** `Expert Centroid Space (Weight-Space)`. This is accompanied by a small diagram of interconnected nodes (green and blue dots).
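
The linear projection in Operation 1 can be sketched in a few lines of plain Python. This is a minimal illustration, not the diagram's implementation: it assumes that `W_EC` is stored as `d` rows of `N` entries and that column `j` plays the role of the centroid of expert `j`. The function name `expert_logits` and the toy values are hypothetical.

```python
from typing import List


def expert_logits(u: List[float], w_ec: List[List[float]]) -> List[float]:
    """Compute the logit vector l = u @ W_EC for a single token.

    u    : hidden token vector of length d
    w_ec : d x N matrix stored as d rows of N entries (assumed layout)
    """
    d = len(u)
    if len(w_ec) != d:
        raise ValueError("W_EC must have one row per input dimension")
    n_experts = len(w_ec[0])
    # Logit j is the dot product of u with column j of W_EC,
    # i.e. with the (assumed) centroid of expert j.
    return [sum(u[r] * w_ec[r][j] for r in range(d)) for j in range(n_experts)]


# Toy example with d = 2 and N = 3 (hypothetical values, not from the diagram).
u = [1.0, 2.0]
w_ec = [
    [1.0, 0.0, -1.0],
    [0.0, 1.0, 0.5],
]
logits = expert_logits(u, w_ec)
print(logits)  # [1.0, 2.0, 0.0]
```

Under this reading, a larger logit means the token points more strongly in the direction of that expert's centroid.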

**3. Intermediate Output & Operation 2: Probability Transformation (Center):**

* **Output Label:** `Expert Logits`

* **Mathematical Notation:** `l ∈ R^N` (a vector of N logits).

* **Visual:** A vertical column of 8 colored rectangles (matching the colors from the Linear Projection block), representing the raw similarity scores (logits) for each expert.

* **Title (Red):** `Operation 2: Probability Transformation`

* **Equation:** `s_i = softmax(l_i)`

* **Process Label:** `Softmax`

* **Space Label (Green, Bottom):** `Expert Logit Space (Latent-Space)`. This is accompanied by a small bell curve icon.
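
Operation 2 can be illustrated with a small softmax sketch. It reads the diagram's equation as a softmax taken over the whole logit vector `l`, which is an interpretation rather than something the figure states outright. Subtracting the maximum logit before exponentiating is a common numerical-stability step added here; it does not appear in the diagram.

```python
import math
from typing import List


def softmax(logits: List[float]) -> List[float]:
    """Turn a vector of logits into probabilities that sum to 1."""
    m = max(logits)  # shift by the max so exp() cannot overflow
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical logits for three experts.
probs = softmax([1.0, 2.0, 0.0])
print(probs)

# The shift keeps large logits from overflowing.
large = softmax([1000.0, 1001.0])
```

Because the exponential is increasing, the ranking of the experts is the same before and after the softmax; only the scale changes.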

**4. Operation 3: Top-K Selection & Output (Right):**

* **Title (Red):** `Operation 3: Top-K Selection`

* **Process Label:** `Top-K`

* **Visual (Expert Selection Probability):** A horizontal bar chart labeled `Expert Selection Probability` with notation `s ∈ R^N`. It shows 8 bars of varying lengths. The 4th bar (dark blue) is the longest, followed by the 1st (orange) and 6th (dark green). The others are shorter.

* **Space Label (Green, Bottom):** `Expert Selection Space (Decision-Space)`. This is accompanied by a small bar chart icon.

* **Final Output Label:** `Selected Experts`

* **Mathematical Notation:** `S_k`

* **Visual:** A vertical column of 8 rectangles. The 1st (orange), 4th (dark blue), and 6th (dark green) are filled with a solid dark green color, indicating they are the "selected" experts from the Top-K operation. The other five are empty white rectangles.
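
The Top-K step and the filled/empty rectangles of the output column can be sketched as follows. The probability values are hypothetical: they are chosen only so that the 4th expert ranks first, followed by the 1st and the 6th, as described for the bar chart. The helper name `top_k_experts` is likewise an assumption.

```python
from typing import List


def top_k_experts(probs: List[float], k: int) -> List[int]:
    """Return the indices of the k largest probabilities, in ascending index order."""
    if not 0 < k <= len(probs):
        raise ValueError("k must be between 1 and the number of experts")
    # Rank expert indices by probability, highest first.
    ranked = sorted(range(len(probs)), key=lambda j: probs[j], reverse=True)
    return sorted(ranked[:k])


# Hypothetical selection probabilities for 8 experts (0-based indices).
probs = [0.20, 0.05, 0.06, 0.35, 0.04, 0.18, 0.07, 0.05]
selected = top_k_experts(probs, k=3)
# 1 marks a filled (selected) rectangle, 0 an empty one.
mask = [1 if j in selected else 0 for j in range(len(probs))]
print(selected)  # [0, 3, 5]
print(mask)      # [1, 0, 0, 1, 0, 1, 0, 0]
```

Indices here are 0-based, so `[0, 3, 5]` corresponds to the 1st, 4th, and 6th experts in the description.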

### Detailed Analysis

The diagram details a precise mathematical pipeline:

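1. **Input Transformation:** A hidden token vector `u` is linearly projected using a weight matrix `W_EC` to produce a set of similarity scores or "logits" (`l`). Each logit corresponds to an expert, represented by a centroid `e_i` in the "Weight-Space." Since `W_EC ∈ R^(d×N)` and `e_i ∈ R^d`, the centroids are presumably the columns of `W_EC`, in which case each logit is the dot product of `u` with one centroid.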

2. **Probability Conversion:** The logits `l` are passed through a softmax function to convert them into a probability distribution `s`. This transforms the data from the "Latent-Space" (logits) to the "Decision-Space" (selection probabilities).

3. **Expert Selection:** A Top-K operation is applied to the probability distribution `s`. This selects the `k` experts with the highest selection probabilities. In the visual example, `k` appears to be 3, as three experts (1st, 4th, and 6th) are highlighted in the final "Selected Experts" block.
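
Written out for a single token, the three steps above can be summarized as follows. This is a reading of the diagram, not a transcription: the expert index `j`, the summation index `m`, and the identification of `e_j` with column `j` of `W_EC` are assumptions introduced for clarity.

$$
\begin{aligned}
l_j &= u \cdot e_j, \qquad j = 1, \dots, N \qquad (\text{equivalently } l = u\,W_{EC}) \\
s_j &= \frac{\exp(l_j)}{\sum_{m=1}^{N} \exp(l_m)} \\
S_k &= \{\, j : s_j \text{ is among the } k \text{ largest entries of } s \,\}
\end{aligned}
$$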

### Key Observations

* **Color Consistency:** The color coding is consistent throughout the flow. The 4th expert (dark blue) has the highest selection probability and is selected; because softmax preserves the ordering of its inputs, it must also have the highest logit. The 1st (orange) and 6th (dark green) experts are also selected, corresponding to the next highest probabilities.

* **Spatial Organization:** The diagram clearly segregates conceptual spaces: Weight-Space (where expert definitions live), Latent-Space (raw model outputs), and Decision-Space (final routing choices).

* **Mathematical Rigor:** Every step is accompanied by its formal mathematical operation (`l_i = u_i W_EC`, `softmax`, `Top-K`), making the process unambiguous for a technical audience.

* **Visual Example:** The bar chart for "Expert Selection Probability" provides a concrete example of the softmax output, and the final "Selected Experts" block shows the discrete outcome of the Top-K selection.

### Interpretation

This diagram explains the **routing mechanism** in a Mixture-of-Experts (MoE) neural network layer. It answers the question: "Given an input token, how does the model decide which specialized sub-networks (experts) should process it?"

* **What it demonstrates:** The process is a learned, dynamic routing system. Instead of sending every input to every expert (computationally expensive), the model uses a lightweight "gating network" (the operations shown) to compute a similarity score between the input and each expert's prototype (centroid). It then keeps only the `k` highest-scoring experts (Top-K) for that specific input. Given the probabilities, this selection is deterministic rather than sampled.

* **Relationships:** The "Expert Centroid Space" (`W_EC`) contains the learned knowledge of what each expert specializes in. The input `u` is compared against these specializations. The softmax normalizes the logits into probabilities that sum to 1, so a larger share for one expert means a smaller share for the others, and the Top-K enforces sparsity for efficiency.

* **Significance:** This mechanism allows models to have a very large total number of parameters (many experts) while only activating a small subset for any given input, enabling scaling without a proportional increase in computational cost. The diagram meticulously breaks down the core computation that makes this efficient scaling possible.
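
The scaling argument can be made concrete with a little arithmetic. The sketch below assumes all experts are the same size and ignores the router and any non-expert layers, neither of which the diagram specifies; the function name is hypothetical. With the 8 experts and `k = 3` shown in the figure, 3/8 of the expert parameters are active per token.

```python
def active_expert_fraction(num_experts: int, k: int) -> float:
    """Fraction of expert parameters used per token, assuming equal-sized experts."""
    if not 0 < k <= num_experts:
        raise ValueError("k must be between 1 and num_experts")
    return k / num_experts


# The configuration shown in the diagram: 8 experts, 3 selected.
print(active_expert_fraction(8, 3))  # 0.375

# Holding k fixed while adding experts shrinks the active fraction.
fractions = [active_expert_fraction(n, 3) for n in (8, 16, 64)]
print(fractions)  # [0.375, 0.1875, 0.046875]
```

Total expert capacity grows with the number of experts, while the per-token expert compute stays tied to `k`.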