## Line Chart: Validation Accuracy Comparison

### Overview

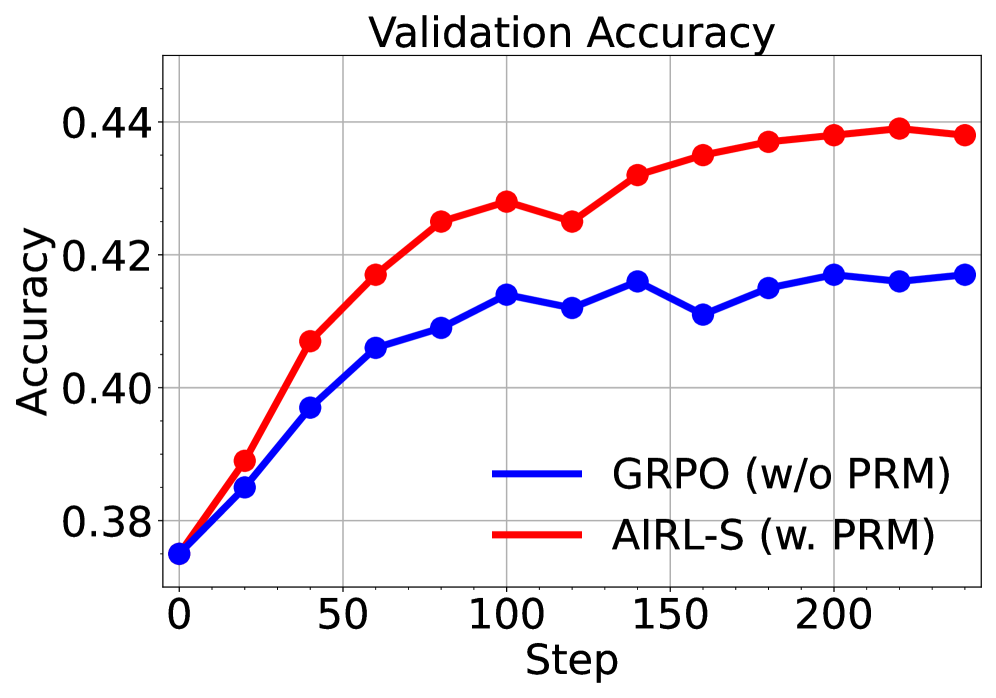

The image is a line chart titled "Validation Accuracy" that compares two training methods, GRPO (w/o PRM) and AIRL-S (w. PRM). It plots validation accuracy (y-axis) against training step (x-axis), showing how each method's accuracy evolves during training.

### Components/Axes

* **Chart Title:** "Validation Accuracy" (centered at the top).

* **Y-Axis:** Labeled "Accuracy", with major tick marks at 0.38, 0.40, 0.42, and 0.44. The plotted range extends slightly below 0.38, since both series start near 0.375.

* **X-Axis:** Labeled "Step", with major tick marks at 0, 50, 100, 150, and 200. The data table and observations below refer to steps up to 250, beyond the last labeled tick.

* **Legend:** Located in the bottom-right quadrant of the chart area.
  * A blue line segment is labeled **"GRPO (w/o PRM)"**.
  * A red line segment is labeled **"AIRL-S (w. PRM)"**.

* **Data Series:** Two lines, each with circular markers at the data points.
  * **Blue line:** GRPO (w/o PRM).
  * **Red line:** AIRL-S (w. PRM).

### Detailed Analysis

**Data Series 1: GRPO (w/o PRM) - Blue Line**

* **Trend:** The line rises overall, from ~0.375 at step 0 to ~0.414 at step 100. The increase is steepest over this interval; afterwards the line plateaus, fluctuating between ~0.411 and ~0.417.

* **Approximate Data Points (both series):**

| Step | GRPO (w/o PRM) Accuracy | AIRL-S (w. PRM) Accuracy |
| :--- | :--- | :--- |
| 0 | ~0.375 | ~0.375 |
| 25 | ~0.385 | ~0.389 |
| 50 | ~0.397 | ~0.407 |
| 75 | ~0.406 | ~0.417 |
| 100 | ~0.414 | ~0.425 |
| 125 | ~0.412 | ~0.428 |
| 150 | ~0.416 | ~0.425 |
| 175 | ~0.411 | ~0.432 |
| 200 | ~0.415 | ~0.435 |
| 225 | ~0.417 | ~0.437 |
| 250 | ~0.416 | ~0.438 |
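Because the table lists both series side by side, the per-step accuracy gap can be computed directly. The sketch below assumes the table values are visual estimates read off the chart, not logged experiment data; the names `STEPS`, `GRPO`, `AIRL_S`, and `accuracy_gap` are hypothetical.

```python
# Approximate values transcribed from the table above (visual estimates only).
STEPS = [0, 25, 50, 75, 100, 125, 150, 175, 200, 225, 250]
GRPO = [0.375, 0.385, 0.397, 0.406, 0.414, 0.412, 0.416, 0.411, 0.415, 0.417, 0.416]
AIRL_S = [0.375, 0.389, 0.407, 0.417, 0.425, 0.428, 0.425, 0.432, 0.435, 0.437, 0.438]


def accuracy_gap(baseline, candidate):
    """Return candidate - baseline at each step, rounded to hide float noise."""
    return [round(c - b, 3) for b, c in zip(baseline, candidate)]


gaps = accuracy_gap(GRPO, AIRL_S)
for step, gap in zip(STEPS, gaps):
    print(f"step {step:>3}: AIRL-S minus GRPO = {gap:+.3f}")
```

With these estimates, the gap is zero at step 0, between 0.009 and 0.016 for steps 50 to 150, and between 0.020 and 0.022 from step 175 onward.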

**Data Series 2: AIRL-S (w. PRM) - Red Line**

* **Trend:** This line also rises strongly and lies above the blue line at every measured step after step 0. It climbs most steeply up to about step 100 (from ~0.375 to ~0.425), after which the rate of increase slows and the line approaches a plateau near 0.44 (~0.438 at step 250).
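The "steep, then flattening" shape of both curves can be quantified by comparing the accuracy gained in an early phase with the gain in a late phase. This is a minimal sketch on the approximate table values; the split at step 100 is an assumption chosen to match the trends described above, and `phase_gain` is a hypothetical helper.

```python
# Approximate values transcribed from the table above (visual estimates only).
STEPS = [0, 25, 50, 75, 100, 125, 150, 175, 200, 225, 250]
SERIES = {
    "GRPO (w/o PRM)": [0.375, 0.385, 0.397, 0.406, 0.414, 0.412,
                       0.416, 0.411, 0.415, 0.417, 0.416],
    "AIRL-S (w. PRM)": [0.375, 0.389, 0.407, 0.417, 0.425, 0.428,
                        0.425, 0.432, 0.435, 0.437, 0.438],
}


def phase_gain(steps, values, start, end):
    """Accuracy change from step `start` to step `end` (both must be in `steps`)."""
    return round(values[steps.index(end)] - values[steps.index(start)], 3)


for name, values in SERIES.items():
    early = phase_gain(STEPS, values, 0, 100)   # assumed steep phase
    late = phase_gain(STEPS, values, 100, 250)  # assumed flattening phase
    print(f"{name}: steps 0-100 {early:+.3f}, steps 100-250 {late:+.3f}")
```

On these estimates, GRPO gains about 0.039 in the first 100 steps and only about 0.002 over the next 150, while AIRL-S gains about 0.050 and then about 0.013, so the red line keeps improving slightly longer.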

### Key Observations

1. **Performance Gap:** The red line (AIRL-S w. PRM) stays above the blue line (GRPO w/o PRM) from approximately step 25 onward. The gap is roughly 0.01 to 0.016 between steps 50 and 150 and widens to about 0.02 from step 175 onward.

2. **Convergence:** Both methods flatten in the later steps (150-250), although the red line still gains about 0.013 over that span. The red line levels off at a higher accuracy (~0.438) than the blue line (~0.416).

3. **Initial Similarity:** Both methods start at nearly the same accuracy (~0.375) at step 0.

4. **Volatility:** The blue line dips more often and more deeply (for example, at steps 125 and 175, and slightly at 250), whereas the red line shows only one small dip, at step 150, and otherwise rises smoothly.
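The volatility comparison can be checked by listing every step where accuracy falls below the previous point. This sketch uses the approximate table values and a hypothetical `find_dips` helper; a single-step drop is an assumed, deliberately simple definition of a "dip", not a statistical measure of noise.

```python
# Approximate values transcribed from the table above (visual estimates only).
STEPS = [0, 25, 50, 75, 100, 125, 150, 175, 200, 225, 250]
GRPO = [0.375, 0.385, 0.397, 0.406, 0.414, 0.412, 0.416, 0.411, 0.415, 0.417, 0.416]
AIRL_S = [0.375, 0.389, 0.407, 0.417, 0.425, 0.428, 0.425, 0.432, 0.435, 0.437, 0.438]


def find_dips(steps, values):
    """Return (step, drop) pairs where accuracy is lower than at the previous step."""
    dips = []
    for i in range(1, len(values)):
        drop = round(values[i - 1] - values[i], 3)  # positive means accuracy fell
        if drop > 0:
            dips.append((steps[i], drop))
    return dips


print("GRPO dips:", find_dips(STEPS, GRPO))
print("AIRL-S dips:", find_dips(STEPS, AIRL_S))
```

With these estimates, GRPO has three dips (steps 125, 175, and 250, the largest about 0.005) and AIRL-S has one (step 150, about 0.003).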

### Interpretation

This chart shows a comparative experiment between two training methods, likely in a reinforcement learning context (GRPO commonly refers to Group Relative Policy Optimization). The key finding is that **AIRL-S (w. PRM)** achieves higher validation accuracy than **GRPO (w/o PRM)** at every measured step after step 0, finishing about 0.022 higher at step 250 (~0.438 vs. ~0.416).
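The final-step difference can be expressed both as an absolute gain and relative to the baseline. This is a small worked calculation on the approximate step-250 values from the table; `improvement` is a hypothetical helper, and the inputs are visual estimates rather than reported results.

```python
def improvement(baseline, candidate):
    """Return (absolute, relative) improvement of `candidate` over `baseline`."""
    absolute = candidate - baseline
    return absolute, absolute / baseline


# Approximate step-250 accuracies from the table (visual estimates only).
abs_gain, rel_gain = improvement(baseline=0.416, candidate=0.438)
print(f"absolute: {abs_gain:+.3f} ({abs_gain * 100:.1f} percentage points)")
print(f"relative: {rel_gain:+.1%}")
```

On these estimates the gap is about 2.2 percentage points, or roughly a 5.3% relative improvement over GRPO's final accuracy.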

The chart does not expand "PRM"; in reinforcement learning for language models it commonly denotes a "Process Reward Model", though other readings such as "Preference Reward Model" are possible. Because the two curves differ in both the algorithm (AIRL-S vs. GRPO) and the use of a PRM, the chart alone cannot attribute the gain to the PRM specifically. The data do suggest that AIRL-S with PRM learns faster (steeper initial slope) and reaches a higher final accuracy. The flattening of both curves suggests that training beyond step 250 may yield diminishing returns for both methods under the current conditions. Overall, the chart supports the effectiveness of AIRL-S with the PRM component for this task, although the description records no error bars or run-to-run variance.