\n

## Diagram: Error Analysis of Mathematical Reasoning

### Overview

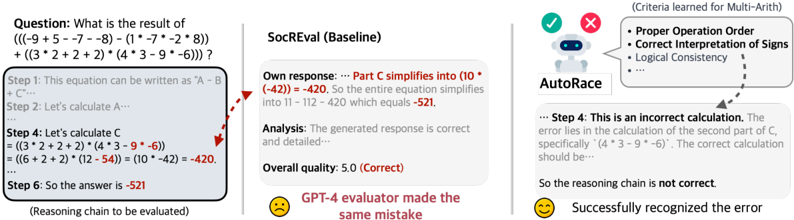

This diagram illustrates an error analysis scenario in mathematical reasoning, comparing the output of a baseline model (SocREval) with an automated error recognition system (AutoRace). The diagram focuses on a specific arithmetic problem and highlights where the baseline model fails, and how AutoRace successfully identifies the error.

### Components/Axes

The diagram is divided into three main sections:

1. **Question & Reasoning Chain (Left):** Presents the mathematical problem and the step-by-step reasoning provided by the baseline model.

2. **Model Comparison (Center):** Shows the baseline model's response, analysis, and overall quality score, alongside the AutoRace system's evaluation.

3. **Evaluation Criteria & Error Identification (Right):** Lists the criteria used for evaluation and visually indicates the error detection process.

The diagram also includes visual cues like red arrows indicating the error location and smiley/frowning faces to represent evaluation outcomes.

### Detailed Analysis or Content Details

**1. Question & Reasoning Chain (Left)**

* **Question:** "What is the result of ((−9 + 5 − 7 − 8) − (1 − 7 − 2 * 8)) + (3 * 2 + 2 * 2) * (4 * 3 − 9 − 6))?"

* **Step 1:** "This equation can be written as 'A - B + C'"

* **Step 2:** "Let's calculate A…"

* **Step 4:** "Let's calculate C ( = (3 * 2 + 2 * 2) * (4 * 3 - 9 - 6) = (6 + 4) * (12 - 15) = (10 * -3) = -30)"

* **Step 6:** "So the answer is -521"

**2. Model Comparison (Center)**

* **Model:** SocREval (Baseline)

* **Own response:** "...Part C simplifies into (10 * (-42)) = -420. So the entire equation simplifies into 11 - 112 - 420 which equals -521."

* **Analysis:** "The generated response is correct and detailed…"

* **Overall quality:** 5.0 (Correct)

* **GPT-4 evaluator made the same mistake** (Text in red)

**3. Evaluation Criteria & Error Identification (Right)**

* **Criteria learned for Multi-Arith:**

* Proper Operation Order

* Correct Interpretation of Signs

* Logical Consistency

* **AutoRace:** (Robot icon with a red 'X' over it initially, then a green checkmark)

* **Text:** "...Step 4: This is an incorrect calculation. The error lies in the calculation of the second part of C, specifically (4 * 3 − 9 − 6). The correct calculation should be…"

* **Text:** "So the reasoning chain is not correct."

* **Text:** "Successfully recognized the error" (with a smiley face)

**Red Arrow:** Points from Step 4 in the Reasoning Chain to the error identified by AutoRace.

### Key Observations

* Both the baseline model (SocREval) and the GPT-4 evaluator incorrectly assessed the calculation in Step 4 as correct, resulting in a final answer of -521.

* AutoRace successfully identified the error in Step 4, specifically in the calculation of `(4 * 3 − 9 − 6)`. The correct calculation is not fully shown, but the error is pinpointed.

* The diagram highlights a failure case where a complex reasoning chain leads to an incorrect result, and an automated system can detect the error where human evaluation (GPT-4) fails.

* The visual cues (red arrow, smiley/frowning faces) effectively communicate the error detection process.

### Interpretation

This diagram demonstrates the importance of automated error detection in complex reasoning tasks. While the baseline model and even a powerful language model like GPT-4 can be misled by a subtle error in a multi-step calculation, AutoRace is able to pinpoint the mistake based on predefined criteria (operation order, sign interpretation, logical consistency). This suggests that AutoRace employs a different evaluation strategy, likely focusing on the correctness of individual calculations rather than the overall reasoning chain. The diagram underscores the potential for automated systems to improve the reliability of mathematical reasoning by providing a more rigorous and objective evaluation process. The fact that the GPT-4 evaluator made the same mistake as the baseline model is a notable outlier, suggesting that even advanced language models are not immune to errors in arithmetic reasoning. This highlights the need for specialized tools like AutoRace to complement human evaluation and ensure the accuracy of complex calculations.