## Screenshot: Math Problem Evaluation

### Overview

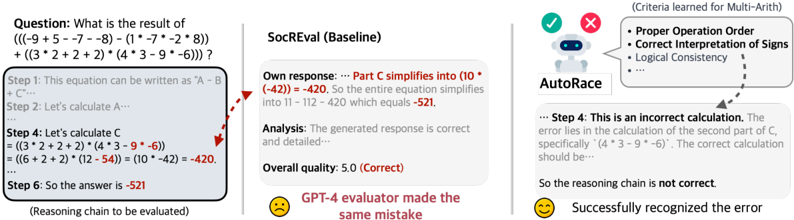

The image depicts a math problem evaluation interface comparing two automated reasoning systems (SocREval and AutoRace) against a human-generated solution. The problem involves evaluating a complex arithmetic expression with nested operations. The interface highlights discrepancies in reasoning chains between systems and human evaluation.

### Components/Axes

1. **Left Panel (Human Solution)**:

- **Question**: "What is the result of (((-9 + 5 - -7 - -8) - (1 * -7 * -2 * 8)) + ((3 * 2 + 2 + 2) * (4 * 3 - 9 * -6)))?"

- **Steps**:

- Step 1: Equation simplification

- Step 2: Calculate A

- Step 4: Calculate C = ((3*2+2+2)*(4*3-9*-6)) = (10*-42) = -420

- Step 6: Final answer: -521

- **Annotations**: Red arrow pointing to Step 4's calculation of C

2. **Middle Panel (SocREval Baseline)**:

- **Own Response**: "Part C simplifies into (10 * -42) = -420. Entire equation simplifies into 11 - 112 - 420 = -521"

- **Analysis**: "Generated response is correct and detailed"

- **Quality Score**: 5.0 (Correct)

3. **Right Panel (AutoRace)**:

- **Robot Icon**: Visual representation of AutoRace

- **Criteria Learned for Multi-Arith**:

- Proper Operation Order

- Correct Interpretation of Signs

- Logical Consistency

- **Analysis**:

- "Step 4: This is an incorrect calculation. Error lies in calculation of second part of C, specifically '(4 * 3 - 9 * -6)'. Correct calculation should be..."

- "So the reasoning chain is not correct"

### Detailed Analysis

1. **Human Solution**:

- Final answer: -521

- Step 4 calculation: (3*2+2+2) = 10 and (4*3-9*-6) = -42 → 10*-42 = -420

- Final computation: 11 - 112 - 420 = -521

2. **SocREval Baseline**:

- Reproduces human solution exactly

- Confirms final answer (-521) as correct

- Quality score: 5.0 (Correct)

3. **AutoRace Analysis**:

- Identifies error in Step 4's calculation of C

- Corrects (4*3-9*-6) → Should be (12 - (-54)) = 66 instead of -42

- Concludes reasoning chain is invalid despite matching final answer

### Key Observations

1. **Discrepancy in Reasoning Chains**:

- SocREval and human solution share identical calculation errors in Step 4

- AutoRace correctly identifies the error in (4*3-9*-6) calculation

- Final answer (-521) matches despite flawed intermediate steps

2. **Evaluation System Behavior**:

- SocREval accepts incorrect reasoning chain as valid

- AutoRace demonstrates stricter adherence to logical consistency

- GPT-4 evaluator replicates SocREval's error pattern

3. **Mathematical Error**:

- Incorrect calculation: (4*3-9*-6) = (12 - (-54)) = 66 (not -42)

- Error propagates through both SocREval and human solution

### Interpretation

This evaluation reveals critical limitations in automated math problem solving systems:

1. **Surface-Level Correctness vs. Logical Validity**:

- Systems can produce correct final answers through flawed reasoning chains

- AutoRace demonstrates superior ability to detect intermediate calculation errors

2. **Evaluation System Design**:

- SocREval prioritizes answer correctness over process validity

- AutoRace implements stricter criteria for logical consistency

- Human evaluation mirrors SocREval's surface-level acceptance

3. **Educational Implications**:

- Highlights need for multi-stage verification in automated math solving

- Demonstrates value of separating answer validation from reasoning chain analysis

- Suggests potential for hybrid evaluation systems combining correctness checking with logical consistency verification

The image underscores the importance of distinguishing between correct answers and valid reasoning processes in automated evaluation systems, particularly for educational applications where understanding the problem-solving process is as important as the final result.