TECHNICAL ASSET FINGERPRINT

3ed2209c8cdaa2389b47b1dd


EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

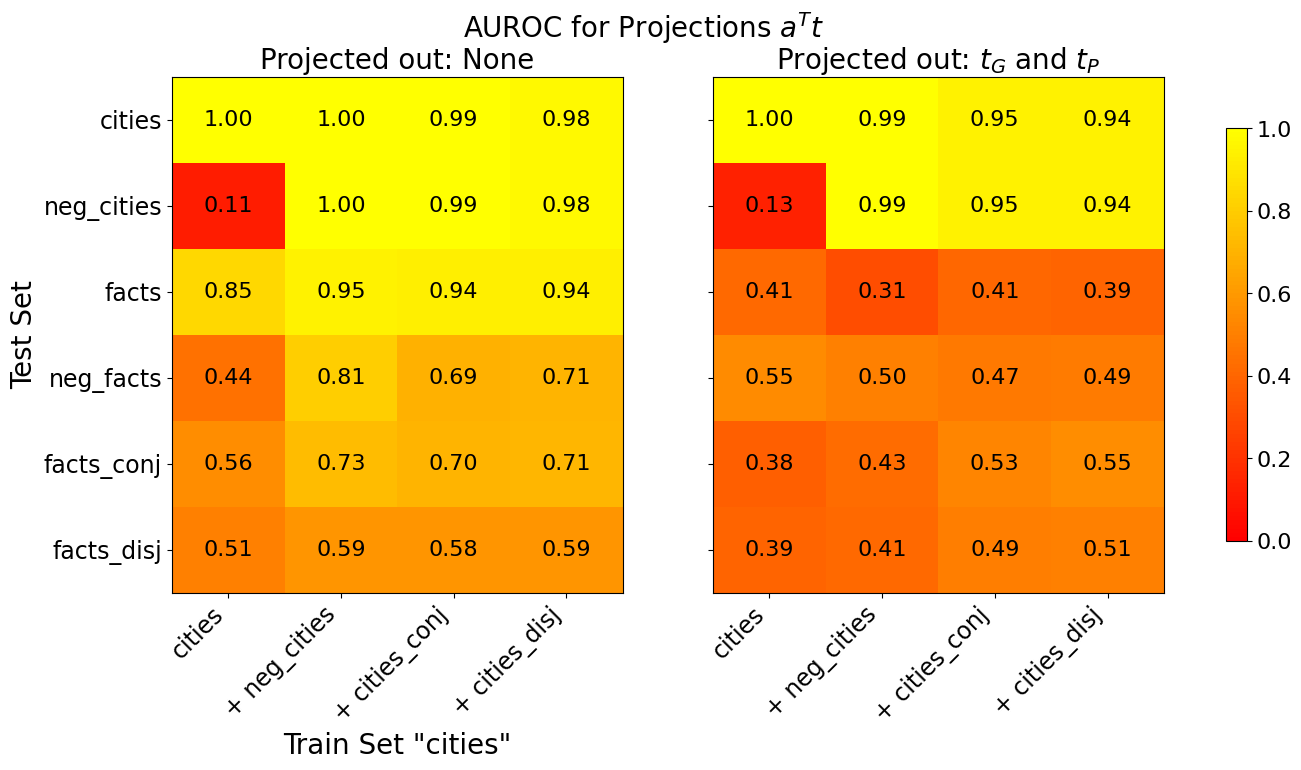

## Heatmap: AUROC for Projections a^Tt

### Overview

The image presents two heatmaps of the Area Under the Receiver Operating Characteristic curve (AUROC) for projections a^Tt. The left heatmap shows results when nothing is projected out ("Projected out: None"); the right heatmap shows results after tG and tP are projected out ("Projected out: tG and tP"). Rows are test sets and columns are training sets. Every training set starts from "cities", and the "+" prefix on the remaining columns indicates data added to it. Cell color encodes the AUROC score, from 0.0 (red) to 1.0 (yellow).
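AUROC can be read as the probability that a randomly chosen positive example receives a higher score than a randomly chosen negative one. The sketch below computes it by direct pairwise comparison. The function name `auroc` and the toy scores are illustrative assumptions and are not taken from the figure.

```python
def auroc(scores, labels):
    """AUROC as the fraction of (positive, negative) pairs ranked correctly.

    Ties count as half a correct pair. `labels` holds 1 for positive
    and 0 for negative examples.
    """
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")
    correct = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                correct += 1.0
            elif p == n:
                correct += 0.5
    return correct / (len(pos) * len(neg))


# Toy data (hypothetical): a higher score should mean "positive".
labels = [1, 1, 1, 0, 0, 0]
perfect = auroc([0.9, 0.8, 0.7, 0.3, 0.2, 0.1], labels)  # every pair ordered correctly
mixed = auroc([0.9, 0.2, 0.7, 0.3, 0.8, 0.1], labels)    # 6 of 9 pairs ordered correctly
```

A value of 1.0 means perfect separation, 0.5 corresponds to random ranking, and values below 0.5 mean the scores tend to rank the classes in the wrong order.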

### Components/Axes

* **Title:** AUROC for Projections a^Tt

* **Y-axis Label (Test Set):**
  * cities
  * neg\_cities
  * facts
  * neg\_facts
  * facts\_conj
  * facts\_disj

* **X-axis Label (Train Set "cities"):**
  * cities
  * \+ neg\_cities
  * \+ cities\_conj
  * \+ cities\_disj

* **Heatmap 1 Title:** Projected out: None

* **Heatmap 2 Title:** Projected out: tG and tP

* **Colorbar:** Vertical colorbar on the right side of the image, ranging from 0.0 (red) to 1.0 (yellow) in increments of 0.2.

### Detailed Analysis

**Heatmap 1: Projected out: None**

| Test Set | cities | + neg\_cities | + cities\_conj | + cities\_disj |
| :---------- | :----- | :------------ | :------------- | :------------- |
| cities | 1.00 | 1.00 | 0.99 | 0.98 |
| neg\_cities | 0.11 | 1.00 | 0.99 | 0.98 |
| facts | 0.85 | 0.95 | 0.94 | 0.94 |
| neg\_facts | 0.44 | 0.81 | 0.69 | 0.71 |
| facts\_conj | 0.56 | 0.73 | 0.70 | 0.71 |
| facts\_disj | 0.51 | 0.59 | 0.58 | 0.59 |

**Heatmap 2: Projected out: tG and tP**

| Test Set | cities | + neg\_cities | + cities\_conj | + cities\_disj |
| :---------- | :----- | :------------ | :------------- | :------------- |
| cities | 1.00 | 0.99 | 0.95 | 0.94 |
| neg\_cities | 0.13 | 0.99 | 0.95 | 0.94 |
| facts | 0.41 | 0.31 | 0.41 | 0.39 |
| neg\_facts | 0.55 | 0.50 | 0.47 | 0.49 |
| facts\_conj | 0.38 | 0.43 | 0.53 | 0.55 |
| facts\_disj | 0.39 | 0.41 | 0.49 | 0.51 |
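To compare the two heatmaps cell by cell, the values from the two tables above can be encoded and subtracted. This is a small sketch; the variable and function names are illustrative, and the numbers are transcribed from the tables.

```python
TRAIN_SETS = ["cities", "+ neg_cities", "+ cities_conj", "+ cities_disj"]

# AUROC values transcribed from the tables (one row per test set).
NO_PROJECTION = {
    "cities":     [1.00, 1.00, 0.99, 0.98],
    "neg_cities": [0.11, 1.00, 0.99, 0.98],
    "facts":      [0.85, 0.95, 0.94, 0.94],
    "neg_facts":  [0.44, 0.81, 0.69, 0.71],
    "facts_conj": [0.56, 0.73, 0.70, 0.71],
    "facts_disj": [0.51, 0.59, 0.58, 0.59],
}
PROJECTED_OUT = {
    "cities":     [1.00, 0.99, 0.95, 0.94],
    "neg_cities": [0.13, 0.99, 0.95, 0.94],
    "facts":      [0.41, 0.31, 0.41, 0.39],
    "neg_facts":  [0.55, 0.50, 0.47, 0.49],
    "facts_conj": [0.38, 0.43, 0.53, 0.55],
    "facts_disj": [0.39, 0.41, 0.49, 0.51],
}


def auroc_changes(before, after):
    """Return {(test_set, train_set): after - before}, rounded to 2 decimals."""
    return {
        (test, train): round(after[test][i] - before[test][i], 2)
        for test in before
        for i, train in enumerate(TRAIN_SETS)
    }


changes = auroc_changes(NO_PROJECTION, PROJECTED_OUT)
largest_drop = min(changes, key=changes.get)
increases = sorted(cell for cell, delta in changes.items() if delta > 0)

print(largest_drop, changes[largest_drop])
print(increases)
```

On these values the largest drop is in the "facts" row under the "+ neg_cities" training set, and only two cells increase, both in the "cities" training column.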

### Key Observations

* When no projection is applied, the "cities" test set scores 0.98 to 1.00 for every training set, and the "neg\_cities" test set scores 0.98 to 1.00 once "neg\_cities" is included in training.

* Training on "cities" alone gives an AUROC of only 0.11 on the "neg\_cities" test set with no projection (0.13 with tG and tP projected out), far below the chance level of 0.5.

* Projecting out tG and tP sharply reduces the AUROC scores for the "facts", "neg\_facts", "facts\_conj", and "facts\_disj" test sets, to between 0.31 and 0.55. The one exception is "neg\_facts" with the "cities" training set, which rises from 0.44 to 0.55.

* Projecting out tG and tP changes the AUROC scores for the "cities" and "neg\_cities" test sets by at most 0.04.

### Interpretation

The heatmaps show how projecting out tG and tP changes AUROC on each test set for the four variations of the "cities" training set. Projecting them out pushes the "facts", "neg\_facts", "facts\_conj", and "facts\_disj" scores down to between 0.31 and 0.55, at or below the chance level of 0.5, which suggests that tG and tP carry the information relevant to these test sets. The "cities" and "neg\_cities" test sets change by at most 0.04, suggesting that performance on the city-related test sets depends much less on tG and tP. The AUROC of 0.11 for "neg\_cities" when training on "cities" alone shows that "neg\_cities" data must be included in training for that test set to be handled correctly.
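The figure does not define how tG and tP are "projected out". A common reading, assumed here, is that they are direction vectors and that each data vector has its component in their span removed by orthogonal projection. The sketch below implements that assumption in plain Python; `project_out` and the example vectors are hypothetical.

```python
def dot(u, v):
    """Inner product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


def project_out(x, directions, tol=1e-12):
    """Remove from x its component in the span of `directions`.

    The directions are first orthonormalized (Gram-Schmidt), so they
    need not be orthogonal or unit length. This is an assumed reading
    of "projected out", not a method confirmed by the figure.
    """
    basis = []
    for d in directions:
        r = list(d)
        for b in basis:
            c = dot(r, b)
            r = [ri - c * bi for ri, bi in zip(r, b)]
        norm = dot(r, r) ** 0.5
        if norm > tol:  # skip directions already covered by the basis
            basis.append([ri / norm for ri in r])
    out = list(x)
    for b in basis:
        c = dot(out, b)
        out = [oi - c * bi for oi, bi in zip(out, b)]
    return out


# Hypothetical stand-ins for tG and tP in a 3-dimensional space.
t_g = [1.0, 0.0, 0.0]
t_p = [1.0, 1.0, 0.0]
x = [1.0, 2.0, 3.0]
residual = project_out(x, [t_g, t_p])
```

After this operation the residual has zero inner product with both directions, so any score that depends only on those directions can no longer separate the classes.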


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

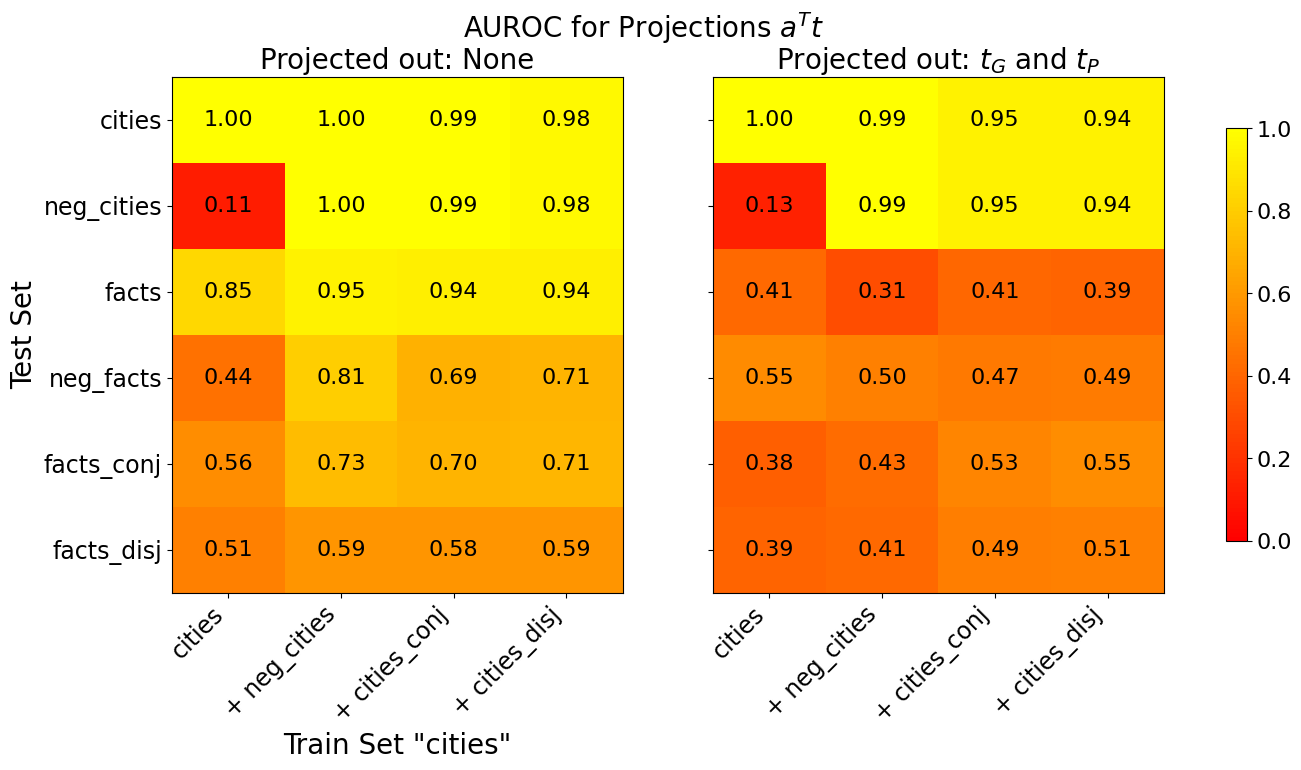

## Heatmap: AUROC for Projections aᵀt

### Overview

The image presents two heatmaps displaying Area Under the Receiver Operating Characteristic curve (AUROC) values for projections aᵀt. The heatmaps compare performance across different train and test sets under two projection conditions. The first heatmap shows results when nothing is projected out ("Projected out: None"), while the second shows results when τG and τP are projected out ("Projected out: τG and τP").

### Components/Axes

* **Title:** "AUROC for Projections aᵀt" (centered at the top)

* **Subtitles:** "Projected out: None" (top-left) and "Projected out: τG and τP" (top-right)

* **X-axis Label:** "Train Set 'cities'" (bottom, shared by both heatmaps)

* **Y-axis Label:** "Test Set" (left, shared by both heatmaps)

* **X-axis Categories:** "cities", "+ neg\_cities", "+ cities\_conj", "+ cities\_disj"

* **Y-axis Categories:** "cities", "neg\_cities", "facts", "neg\_facts", "facts\_conj", "facts\_disj"

* **Color Scale/Legend:** Located on the right side of the image. Ranges from dark red (approximately 0.0) to yellow (approximately 1.0). The scale is linear.

* **Data Values:** Numerical values displayed within each cell of the heatmaps, representing AUROC scores.
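The aᵀt in the title is an inner product of two vectors, which yields one scalar score per example. The figure does not define either symbol, so the sketch below assumes `a` is a per-example feature vector and `t` is a fixed direction. The name `projection_scores` and all numbers are hypothetical.

```python
def projection_scores(examples, t):
    """Return the scalar a^T t for every vector a in `examples`."""
    scores = []
    for a in examples:
        if len(a) != len(t):
            raise ValueError("each example must have the same length as t")
        scores.append(sum(ai * ti for ai, ti in zip(a, t)))
    return scores


# Hypothetical direction and two hypothetical feature vectors.
t = [1.0, -1.0, 0.0]
examples = [
    [2.0, 0.5, 3.0],
    [0.0, 1.0, -4.0],
]
scores = projection_scores(examples, t)
```

Scores of this kind, paired with binary labels, are what an AUROC is computed from.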

### Detailed Analysis or Content Details

**Heatmap 1: Projected out: None**

The heatmap shows AUROC values when no projections are applied. The color intensity indicates the AUROC score, with darker red representing lower scores and yellow representing higher scores.

* **cities vs. cities:** 1.00

* **cities vs. + neg\_cities:** 1.00

* **cities vs. + cities\_conj:** 0.99

* **cities vs. + cities\_disj:** 0.98

* **neg\_cities vs. cities:** 0.11

* **neg\_cities vs. + neg\_cities:** 1.00

* **neg\_cities vs. + cities\_conj:** 0.99

* **neg\_cities vs. + cities\_disj:** 0.98

* **facts vs. cities:** 0.85

* **facts vs. + neg\_cities:** 0.95

* **facts vs. + cities\_conj:** 0.94

* **facts vs. + cities\_disj:** 0.94

* **neg\_facts vs. cities:** 0.44

* **neg\_facts vs. + neg\_cities:** 0.81

* **neg\_facts vs. + cities\_conj:** 0.69

* **neg\_facts vs. + cities\_disj:** 0.71

* **facts\_conj vs. cities:** 0.56

* **facts\_conj vs. + neg\_cities:** 0.73

* **facts\_conj vs. + cities\_conj:** 0.70

* **facts\_conj vs. + cities\_disj:** 0.71

* **facts\_disj vs. cities:** 0.51

* **facts\_disj vs. + neg\_cities:** 0.59

* **facts\_disj vs. + cities\_conj:** 0.58

* **facts\_disj vs. + cities\_disj:** 0.59

**Heatmap 2: Projected out: τG and τP**

The heatmap shows AUROC values when τG and τP are projected out.

* **cities vs. cities:** 1.00

* **cities vs. + neg\_cities:** 0.99

* **cities vs. + cities\_conj:** 0.95

* **cities vs. + cities\_disj:** 0.94

* **neg\_cities vs. cities:** 0.13

* **neg\_cities vs. + neg\_cities:** 0.99

* **neg\_cities vs. + cities\_conj:** 0.95

* **neg\_cities vs. + cities\_disj:** 0.94

* **facts vs. cities:** 0.41

* **facts vs. + neg\_cities:** 0.31

* **facts vs. + cities\_conj:** 0.41

* **facts vs. + cities\_disj:** 0.39

* **neg\_facts vs. cities:** 0.55

* **neg\_facts vs. + neg\_cities:** 0.50

* **neg\_facts vs. + cities\_conj:** 0.47

* **neg\_facts vs. + cities\_disj:** 0.49

* **facts\_conj vs. cities:** 0.38

* **facts\_conj vs. + neg\_cities:** 0.43

* **facts\_conj vs. + cities\_conj:** 0.53

* **facts\_conj vs. + cities\_disj:** 0.55

* **facts\_disj vs. cities:** 0.39

* **facts\_disj vs. + neg\_cities:** 0.41

* **facts\_disj vs. + cities\_conj:** 0.49

* **facts\_disj vs. + cities\_disj:** 0.51

### Key Observations

* In the first heatmap ("Projected out: None"), the AUROC scores are generally higher. The "cities" and "neg\_cities" test sets reach 0.98 to 1.00, except "neg\_cities" with the "cities"-only training set (0.11), and "facts" reaches 0.85 to 0.95.

* The second heatmap ("Projected out: τG and τP") shows much lower AUROC scores for all four fact test sets (0.31 to 0.55), while "cities" and "neg\_cities" stay at 0.94 or above apart from the same "cities"-only exception (0.13). This suggests that projecting out τG and τP negatively impacts performance on the fact data.

* The grid has six rows and four columns, so it has no true diagonal. The only cells where the test set is part of the training data lie in the "cities" and "neg\_cities" rows, and these have the highest AUROC scores in both heatmaps.

* Adding "neg\_cities" to the training set gives the best scores on "facts" (0.95) and "neg\_facts" (0.81) in the first heatmap. In the second heatmap the same column scores only 0.31 and 0.50 on those test sets.

### Interpretation

The data suggests that the projections aᵀt separate the classes almost perfectly on "cities" and "neg\_cities" (once "neg\_cities" is in the training set) and well on "facts" (0.85 to 0.95), but less well on "neg\_facts", "facts\_conj", and "facts\_disj". Projecting out τG and τP degrades performance on all four fact test sets. This could indicate that τG and τP contain information that is important for accurately classifying facts, and removing this information leads to a loss of discriminative power.

The high AUROC scores in the "cities" and "neg\_cities" rows indicate strong performance when the test set matches the training data. The lower scores in the remaining rows suggest weaker generalization to test sets that were not part of training, especially the conjunction and disjunction sets.

The difference in performance between the two heatmaps highlights the importance of feature selection and projection techniques in machine learning. Choosing the right projections can significantly impact the accuracy and robustness of a model. The fact that projecting out τG and τP *decreases* performance suggests these projections are not simply noise, but contain useful signal for the task. Further investigation into the nature of τG and τP and their relationship to the "facts" categories could be beneficial.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Heatmap Pair: AUROC for Projections a^T t

### Overview

The image displays two side-by-side heatmaps comparing the Area Under the Receiver Operating Characteristic curve (AUROC) for different combinations of training and test sets. The overall title is "AUROC for Projections a^T t". The left heatmap shows results when nothing is "Projected out," while the right heatmap shows results when "t_G and t_P" are projected out. A shared color bar on the far right indicates the AUROC scale, ranging from 0.0 (dark red) to 1.0 (bright yellow).

### Components/Axes

* **Main Title:** "AUROC for Projections a^T t"

* **Subplot Titles:**
  * Left: "Projected out: None"
  * Right: "Projected out: t_G and t_P"

* **Y-Axis (Both Heatmaps):** Labeled "Test Set". Categories from top to bottom:
  1. `cities`
  2. `neg_cities`
  3. `facts`
  4. `neg_facts`
  5. `facts_conj`
  6. `facts_disj`

* **X-Axis (Both Heatmaps):** Labeled "Train Set 'cities'". Categories from left to right:
  1. `cities`
  2. `+ neg_cities`
  3. `+ cities_conj`
  4. `+ cities_disj`

* **Color Bar (Legend):** Positioned vertically on the right edge of the image. Scale from 0.0 (bottom, dark red) to 1.0 (top, bright yellow). Ticks at 0.0, 0.2, 0.4, 0.6, 0.8, 1.0.

### Detailed Analysis

**Left Heatmap: Projected out: None**

This matrix shows generally high AUROC scores, especially for the `cities` and `neg_cities` test sets when trained on related sets.

| Test Set \ Train Set | `cities` | `+ neg_cities` | `+ cities_conj` | `+ cities_disj` |
| :--- | :--- | :--- | :--- | :--- |
| **`cities`** | 1.00 | 1.00 | 0.99 | 0.98 |
| **`neg_cities`** | 0.11 | 1.00 | 0.99 | 0.98 |
| **`facts`** | 0.85 | 0.95 | 0.94 | 0.94 |
| **`neg_facts`** | 0.44 | 0.81 | 0.69 | 0.71 |
| **`facts_conj`** | 0.56 | 0.73 | 0.70 | 0.71 |
| **`facts_disj`** | 0.51 | 0.59 | 0.58 | 0.59 |

**Right Heatmap: Projected out: t_G and t_P**

This matrix shows a large reduction in AUROC for the fact-related test sets (`facts`, `neg_facts`, `facts_conj`, `facts_disj`), which now show values between 0.31 and 0.55 (orange/red). The `cities` and `neg_cities` test sets retain high scores, apart from `neg_cities` with the `cities`-only training set (0.13).

| Test Set \ Train Set | `cities` | `+ neg_cities` | `+ cities_conj` | `+ cities_disj` |
| :--- | :--- | :--- | :--- | :--- |
| **`cities`** | 1.00 | 0.99 | 0.95 | 0.94 |
| **`neg_cities`** | 0.13 | 0.99 | 0.95 | 0.94 |
| **`facts`** | 0.41 | 0.31 | 0.41 | 0.39 |
| **`neg_facts`** | 0.55 | 0.50 | 0.47 | 0.49 |
| **`facts_conj`** | 0.38 | 0.43 | 0.53 | 0.55 |
| **`facts_disj`** | 0.39 | 0.41 | 0.49 | 0.51 |

### Key Observations

1. **Projection Impact:** The most striking observation is the dramatic decrease in AUROC for the test sets involving "facts" (`facts`, `neg_facts`, `facts_conj`, `facts_disj`) when `t_G` and `t_P` are projected out. Their scores drop from the 0.44-0.95 range to the 0.31-0.55 range. The only cell in these rows that rises is `neg_facts` with the `cities` training set (0.44 to 0.55).

2. **Robustness of `cities`/`neg_cities`:** The `cities` and `neg_cities` test sets maintain very high AUROC (≥0.94) in both conditions, except for the specific case where `neg_cities` is tested against a model trained only on `cities` (AUROC ~0.11-0.13). This indicates the model's core ability to distinguish city-related concepts is largely unaffected by projecting out `t_G` and `t_P`.

3. **Training Set Augmentation:** In the left heatmap, adding `neg_cities` to the training set improves or maintains the score on every test set, most dramatically on `neg_cities` itself (0.11 to 1.00). Further adding `cities_conj` and `cities_disj` changes most scores by 0.03 or less, apart from a drop on `neg_facts` (0.81 to 0.69 and 0.71).

4. **Color Gradient Confirmation:** The visual color gradient aligns perfectly with the numerical values. Bright yellow cells correspond to values near 1.0, orange to mid-range values (~0.5-0.7), and dark red to low values (<0.2).

### Interpretation

This analysis investigates the role of specific projection vectors (`t_G` and `t_P`) in a model's ability to classify different types of data. The data suggests that `t_G` and `t_P` are **critical features for distinguishing fact-based information** (`facts`, `neg_facts`, etc.). When these features are removed (projected out), the model's performance on fact-related tasks falls to chance level or below (0.31 to 0.55, where an AUROC of 0.5 corresponds to random guessing).

Conversely, the model's ability to classify city-related data (`cities`, `neg_cities`) appears to rely on different, more robust features that are not captured by `t_G` and `t_P`. The consistently high scores for these sets imply the model has learned a strong, separate representation for geographical or entity-based concepts.

The outlier is the very low AUROC for the `neg_cities` test set with `cities`-only training (0.11 and 0.13). A value this far below the 0.5 chance level means the scores rank `neg_cities` examples almost in reverse order rather than randomly. The gap closes immediately when `neg_cities` is added to the training set (`+ neg_cities`), where the AUROC is 0.99 to 1.00.
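An AUROC well below 0.5 signals a reversed ranking, not a random one: negating every score turns an AUROC of x into 1 - x. The sketch below checks this on toy data. The scores are hypothetical and are chosen only so that one of nine pairs is ordered correctly, close to the 0.11 in the heatmap.

```python
def auroc(scores, labels):
    """Fraction of (positive, negative) pairs ranked correctly; ties count half."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Hypothetical scores: only one positive outranks one negative.
labels = [1, 1, 1, 0, 0, 0]
scores = [0.20, 0.10, 0.75, 0.70, 0.90, 0.80]

original = auroc(scores, labels)               # 1 of 9 pairs correct
flipped = auroc([-s for s in scores], labels)  # 8 of 9 pairs correct
```

Under this reading, a cell near 0.11 carries about as much ranking information as a cell near 0.89, but with the sign of the score reversed.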

In essence, the experiment is consistent with a **functional separation in the model's learned representations**: one set of features (`t_G`, `t_P`) supports performance on the fact test sets, while other, more persistent features handle the city test sets. Removing the former cripples performance on facts but leaves performance on cities largely intact.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Heatmap: AUROC for Projections a^Tt

### Overview

The image presents two side-by-side heatmaps comparing Area Under the Receiver Operating Characteristic curve (AUROC) values for model projections under two scenarios:

1. **Left**: No variables projected out (`Projected out: None`).

2. **Right**: Variables `t_G` and `t_P` projected out (`Projected out: t_G and t_P`).

The heatmaps evaluate model performance across combinations of **test sets** (rows) and **train sets** (columns), with values ranging from 0.0 (red) to 1.0 (yellow).

---

### Components/Axes

- **X-axis (Train Set "cities")**, subcategories:
  - `cities`
  - `+ neg_cities`
  - `+ cities_conj`
  - `+ cities_disj`

- **Y-axis (Test Set)**, subcategories:
  - `cities`
  - `neg_cities`
  - `facts`
  - `neg_facts`
  - `facts_conj`
  - `facts_disj`

- **Legend**: A continuous color bar on the right maps AUROC values from 0.0 (red) through orange to 1.0 (yellow), with ticks every 0.2.

---

### Detailed Analysis

#### Left Heatmap (`Projected out: None`)

- **Key Values** (test set vs. train set):
  - `cities` vs `cities`: 1.00 (bright yellow)
  - `cities` vs `+ neg_cities`: 1.00 (bright yellow)
  - `neg_cities` vs `cities`: 0.11 (red)
  - `facts` vs `cities`: 0.85 (light yellow)
  - `facts_conj` vs `+ cities_disj`: 0.71 (yellow)

- **Trends**:
  - The highest AUROC values (1.00) occur in the `cities` and `neg_cities` rows, where the test set is part of the training data (`cities` vs `cities`, `cities` vs `+ neg_cities`, `neg_cities` vs `+ neg_cities`).
  - Values drop sharply when the test set is not covered by the training data (e.g., `neg_cities` vs `cities`: 0.11).
  - `facts` scores well (0.85–0.95), while `neg_facts`, `facts_conj`, and `facts_disj` show moderate performance (0.44–0.81).

#### Right Heatmap (`Projected out: t_G and t_P`)

- **Key Values** (test set vs. train set):
  - `cities` vs `cities`: 1.00 (bright yellow)
  - `cities` vs `+ neg_cities`: 0.99 (light yellow)
  - `neg_cities` vs `cities`: 0.13 (red)
  - `facts` vs `cities`: 0.41 (orange)
  - `facts_conj` vs `+ cities_disj`: 0.55 (orange)

- **Trends**:
  - Projection reduces AUROC for most combinations (e.g., `facts` vs `cities` drops from 0.85 to 0.41).
  - `neg_facts` vs `cities` rises (0.44 → 0.55).
  - `facts_disj` vs `+ cities_disj` drops modestly (0.59 → 0.51).

---

### Key Observations

1. **Projection Impact**:
   - Projecting out `t_G` and `t_P` generally **reduces AUROC** across most test-train pairs. The exceptions are `neg_facts` vs `cities` (0.44 → 0.55) and `neg_cities` vs `cities` (0.11 → 0.13).
   - The largest drops occur in the `facts` row (e.g., `facts` vs `+ neg_cities`: 0.95 → 0.31).

2. **Consistency**:
   - `cities` vs `cities` remains perfect (1.00) in both scenarios.
   - `neg_cities` vs `cities` stays far below chance in both scenarios (0.11 → 0.13).

3. **Color Consistency**:
   - Red/orange dominates the four fact rows of the right heatmap, confirming reduced performance post-projection, while the `cities` and `neg_cities` rows remain mostly yellow.

---

### Interpretation

- **Model Sensitivity**:
  Projecting out `t_G` and `t_P` weakens performance on the fact test sets (`facts`, `neg_facts`, `facts_conj`, `facts_disj`), likely due to loss of critical features.

- **Robustness**:
  The model retains high performance when the test set matches the training data (`cities` vs `cities`). This reflects in-distribution performance and does not by itself show overfitting.

- **Anomalies**:
  The rise in `neg_facts` vs `cities` (0.44 → 0.55) moves the score from slightly below chance to slightly above it, so it may not reflect a meaningful improvement.

- **Practical Implications**:
  Projecting out `t_G` and `t_P` degrades generalization, particularly for fact-based test sets. Retaining these variables preserves discriminative power across diverse scenarios.
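One way to summarize generalization to the fact-based test sets is to average each training column over the four fact rows in both scenarios. The sketch below does this with the cell values reported for the two heatmaps; the helper name `mean_by_train_set` is illustrative.

```python
TRAIN_SETS = ["cities", "+ neg_cities", "+ cities_conj", "+ cities_disj"]

# Fact-row AUROC values as reported for each heatmap.
FACT_ROWS_NONE = {
    "facts":      [0.85, 0.95, 0.94, 0.94],
    "neg_facts":  [0.44, 0.81, 0.69, 0.71],
    "facts_conj": [0.56, 0.73, 0.70, 0.71],
    "facts_disj": [0.51, 0.59, 0.58, 0.59],
}
FACT_ROWS_PROJECTED = {
    "facts":      [0.41, 0.31, 0.41, 0.39],
    "neg_facts":  [0.55, 0.50, 0.47, 0.49],
    "facts_conj": [0.38, 0.43, 0.53, 0.55],
    "facts_disj": [0.39, 0.41, 0.49, 0.51],
}


def mean_by_train_set(rows):
    """Average AUROC over the given test-set rows for each training set."""
    n = len(rows)
    return {
        train: sum(values[i] for values in rows.values()) / n
        for i, train in enumerate(TRAIN_SETS)
    }


means_none = mean_by_train_set(FACT_ROWS_NONE)
means_projected = mean_by_train_set(FACT_ROWS_PROJECTED)
best_none = max(means_none, key=means_none.get)
best_projected = max(means_projected, key=means_projected.get)
```

Without projection, the "+ neg_cities" column has the highest mean over the fact rows (about 0.77). After projecting out `t_G` and `t_P`, every column mean falls below 0.5.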
