## Diagram: Three-Step Process for Training Language Models with RLHF

### Overview

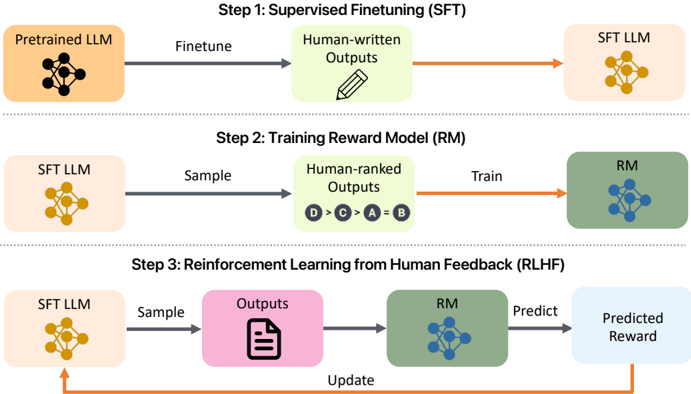

This image is a technical flowchart illustrating the three primary stages of the Reinforcement Learning from Human Feedback (RLHF) process for training large language models (LLMs). The diagram is divided into three horizontal sections, each representing a sequential step. The flow of data and model updates is indicated by directional arrows.

### Components/Axes

Each step is drawn as a left-to-right chain of components connected by arrows.

**Step 1: Supervised Finetuning (SFT)**

* **Input Component (Left):** An orange box labeled "Pretrained LLM" with a black brain/network icon.

* **Process Arrow:** A dark blue arrow labeled "Finetune" points to the right.

* **Data Component (Center):** A light green box labeled "Human-written Outputs" with a pencil icon.

* **Output Arrow:** An orange arrow points to the right.

* **Output Component (Right):** A light orange box labeled "SFT LLM" with a gold brain/network icon.

**Step 2: Training Reward Model (RM)**

* **Input Component (Left):** A light orange box labeled "SFT LLM" with a gold brain/network icon.

* **Process Arrow:** A dark blue arrow labeled "Sample" points to the right.

* **Data Component (Center):** A light green box labeled "Human-ranked Outputs". Inside this box is a ranking example: "D > C > A = B".

* **Process Arrow:** An orange arrow labeled "Train" points to the right.

* **Output Component (Right):** A dark green box labeled "RM" with a blue brain/network icon.

**Step 3: Reinforcement Learning from Human Feedback (RLHF)**

* **Input Component (Left):** A light orange box labeled "SFT LLM" with a gold brain/network icon.

* **Process Arrow:** A dark blue arrow labeled "Sample" points to the right.

* **Data Component (Center-Left):** A pink box labeled "Outputs" with a document icon.

* **Process Arrow:** A dark blue arrow points to the right.

* **Model Component (Center-Right):** A dark green box labeled "RM" with a blue brain/network icon.

* **Process Arrow:** A dark blue arrow labeled "Predict" points to the right.

* **Output Component (Right):** A light blue box labeled "Predicted Reward".

* **Feedback Loop:** An orange arrow labeled "Update" originates from the "Predicted Reward" box, curves downward and left, and points back to the initial "SFT LLM" component, indicating a cyclical update process.

### Detailed Analysis

The diagram explicitly details a sequential, three-stage pipeline:

1. **Step 1 (SFT):** A base "Pretrained LLM" is finetuned on a dataset of "Human-written Outputs" to create a supervised finetuned model ("SFT LLM").

2. **Step 2 (RM Training):** The "SFT LLM" from Step 1 is used to generate sample outputs, which humans then rank. In the example ranking "D > C > A = B", D is preferred over C, C is preferred over both A and B, and A and B are tied. This ranked data is used to train a separate "Reward Model" ("RM").

3. **Step 3 (RLHF):** This step forms a closed loop. The "SFT LLM" generates new "Outputs". The trained "RM" evaluates these outputs and produces a "Predicted Reward". This reward signal is then used to "Update" the parameters of the "SFT LLM", so that it produces outputs that score higher under the RM and therefore better match the human preferences the RM has learned.
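The diagram does not say how the human ranking in Step 2 is turned into training examples. One common approach, assumed here purely for illustration, is to expand each ranking into (preferred, rejected) pairs and skip tied outputs. The function name `ranking_to_pairs` and the tiered-list input format are hypothetical.

```python
from itertools import combinations

def ranking_to_pairs(tiers):
    """Expand a ranking into (preferred, rejected) pairs.

    `tiers` lists groups of outputs from best to worst. Outputs in the
    same group are tied and produce no pair between them.
    """
    pairs = []
    # combinations() preserves input order, so `better` always outranks `worse`.
    for better, worse in combinations(tiers, 2):
        for b in better:
            for w in worse:
                pairs.append((b, w))
    return pairs

# The diagram's example ranking: D > C > A = B
tiers = [["D"], ["C"], ["A", "B"]]
pairs = ranking_to_pairs(tiers)
print(pairs)
```

Under this assumption the tie between A and B contributes no training pair, while every strictly ordered pair of outputs contributes exactly one.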

### Key Observations

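* **Model Evolution:** The diagram tracks the evolution of the primary LLM from "Pretrained LLM" (black icon) to "SFT LLM" (gold icon). Step 3 keeps the "SFT LLM" label and gold icon even though the model is being updated there; the diagram gives the RLHF-tuned model no separate name. The "RM" (blue icon) is a separate, auxiliary model.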

* **Data Flow:** Human input is critical in the first two steps (providing written outputs and rankings). The third step automates the feedback using the trained RM.

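* **Visual Coding:** Colors and icons are used consistently: orange/gold boxes for the primary LLM lineage, light green boxes for human-provided data, a dark green box with a blue icon for the RM, pink for generated outputs in the final step, and light blue for the predicted reward. Most process arrows are dark blue; the orange arrows (the Step 1 output arrow, "Train", and "Update") all lead into a model that is being created or updated.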

* **Cyclical Nature:** The "Update" arrow in Step 3 is the only feedback loop shown, emphasizing that RLHF is an iterative optimization process.
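The Sample, Predict, Update cycle described above can be sketched as a toy loop. This is a minimal illustration under loud assumptions, not the method behind the diagram: the "LLM" is reduced to a softmax over four fixed candidate outputs, the "RM" is a hard-coded list of made-up reward values, and the update rule is plain REINFORCE with an expected-reward baseline. Production RLHF systems commonly use PPO with a KL penalty instead; the diagram names no specific algorithm.

```python
import math
import random

def softmax(logits):
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def rlhf_loop(rewards, steps=2000, lr=0.1, seed=0):
    """Toy Sample -> Predict -> Update loop over a fixed set of outputs.

    `rewards[i]` stands in for the RM's predicted reward for output i
    (hypothetical values; a real RM is a learned network).
    """
    rng = random.Random(seed)
    logits = [0.0] * len(rewards)  # stand-in for the SFT LLM's parameters
    for _ in range(steps):
        probs = softmax(logits)
        # Sample: the policy generates one output.
        i = rng.choices(range(len(rewards)), weights=probs)[0]
        # Predict: the reward model scores it.
        r = rewards[i]
        # Update: REINFORCE step, using the expected reward as a baseline.
        baseline = sum(p * rw for p, rw in zip(probs, rewards))
        advantage = r - baseline
        for j in range(len(logits)):
            grad_logp = (1.0 if j == i else 0.0) - probs[j]
            logits[j] += lr * advantage * grad_logp
    return softmax(logits)

# Hypothetical rewards for outputs A, B, C, D, loosely mirroring D > C > A = B.
final_probs = rlhf_loop([0.0, 0.0, 1.0, 2.0])
print([round(p, 3) for p in final_probs])
```

Each pass through the loop shifts probability toward outputs the reward model scores highly, which is the iterative behavior the "Update" arrow represents.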

### Interpretation

This diagram provides a clear, high-level schematic of the RLHF alignment technique. It shows how human feedback is systematically integrated into model training. The process moves from general language capability (Pretrained LLM) to basic instruction following (SFT), then learns a model of human preference (RM), and finally uses that preference model to optimize the LLM's behavior through reinforcement learning. The key insight is that the "SFT LLM" in Step 3 is not static; it is continuously refined by the reward signal from the RM, which itself was trained on human judgments. Because the RM can score far more outputs than human annotators could label directly, a limited set of human judgments can shape the model's behavior at scale. The ranking example "D > C > A = B" is particularly important, as it indicates that the RM is trained on comparative judgments rather than absolute scores.
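To make the point about comparative judgments concrete, here is a small sketch of a pairwise preference loss. The diagram does not name a loss function; the Bradley-Terry style objective below, `-log(sigmoid(r_preferred - r_rejected))`, is a widely used choice and is assumed here for illustration only. The function name and the reward values are hypothetical.

```python
import math

def pairwise_preference_loss(reward_preferred, reward_rejected):
    """-log(sigmoid(r_preferred - r_rejected)), computed stably as softplus(-diff)."""
    diff = reward_preferred - reward_rejected
    return max(-diff, 0.0) + math.log1p(math.exp(-abs(diff)))

# Hypothetical RM scores for two outputs where humans ranked D above C.
loss_agrees = pairwise_preference_loss(2.0, 0.5)     # RM scores D higher: small loss
loss_disagrees = pairwise_preference_loss(0.5, 2.0)  # RM scores C higher: large loss
print(loss_agrees, loss_disagrees)
```

The loss depends only on the difference between the two scores, so adding the same constant to both rewards leaves it unchanged. That is one sense in which training on rankings constrains relative preferences rather than absolute scores.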