## Block Diagram: Neural Network Architecture with Input Processing

### Overview

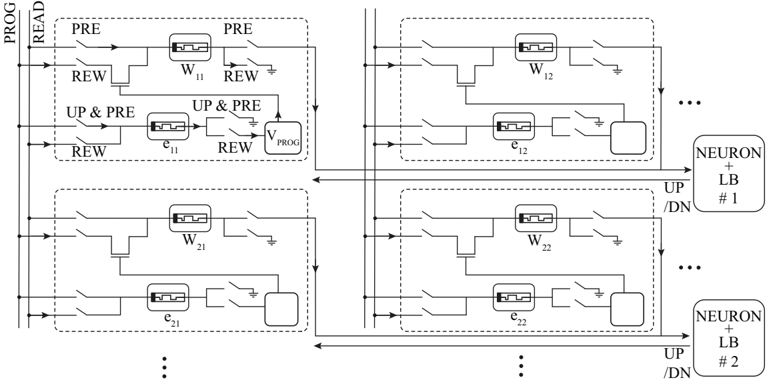

The diagram illustrates a multi-layered neural network architecture with integrated input processing components. It features parallel processing blocks connected to neuron layers, with explicit control signals for pre-processing, reward mechanisms, and voltage regulation.

### Components

- **Left Side**:

  - "PROG" (Program) and "READ" (Read) signals

- **Right Side**:

  - "NEURON + LB /DN #1" and "#2" (Neuron layers with layer boost and downsampling)

- **Key Components**:

  - **Input Processing Blocks**:

    - `PRE` (Pre-processing)

    - `REW` (Reward mechanism)

    - `UP & PRE` (Update + Pre-processing)

    - `V_PROG` (Program voltage)

  - **Processing Units**:

    - `W11`, `W12`, `W21`, `W22` (Weights)

    - `e11`, `e12`, `e21`, `e22` (Error terms)

  - **Output Path**:

    - Arrows indicate signal flow from input processing to neuron layers

### Detailed Analysis

1. **Input Processing Path**:

   - `PROG` and `READ` signals feed into pre-processing (`PRE`) and reward (`REW`) blocks.

   - `UP & PRE` combines update operations with pre-processing, outputting to `V_PROG` (program voltage).

   - Error terms (`e11`, `e12`, `e21`, `e22`) are generated within each processing block.

2. **Neuron Layer Connections**:

   - Right-side blocks labeled `NEURON + LB /DN #1` and `#2` receive inputs from processing units.

   - Layer boost (`LB`) and downsampling (`/DN`) operations are applied to neuron outputs.

3. **Signal Flow**:

   - Arrows show bidirectional control between input processing and neuron layers.

   - Feedback loops exist between `REW` and `UP & PRE` blocks.
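The read path from the processing units to the two neuron blocks can be pictured as a weighted sum over a 2×2 array. The following is only a minimal sketch under stated assumptions: it treats `W11` to `W22` as scalar weights indexed as row = input line, column = neuron, which the diagram does not confirm. The function name `read_pass` and all numeric values are hypothetical.

```python
# Hypothetical sketch: assumes weights[i][j] connects input line i to neuron j.
# Nothing here is taken from the diagram beyond the W11..W22 labels.

def read_pass(weights, inputs):
    """Return the summed, weighted input seen by each neuron column."""
    n_neurons = len(weights[0])
    return [
        sum(weights[i][j] * inputs[i] for i in range(len(inputs)))
        for j in range(n_neurons)
    ]

W = [[0.5, 0.25],   # W11, W12 (made-up values)
     [1.0, 0.75]]   # W21, W22 (made-up values)
x = [1.0, 2.0]      # assumed READ-phase input levels

neuron_inputs = read_pass(W, x)  # one value per neuron block (#1, #2)
print(neuron_inputs)
```

Under this assumed indexing, each neuron block receives one column sum; a different wiring in the actual figure would change only the index order.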

### Key Observations

- **Repetitive Structure**: Identical processing blocks (`W11/e11`, `W12/e12`, etc.) suggest parallel or stacked layers.

- **Control Signals**: `PRE`, `REW`, and `UP & PRE` indicate dynamic adjustment mechanisms.

- **Voltage Regulation**: `V_PROG` implies hardware-level control of the programming (write) voltage applied to the processing units.

### Interpretation

This diagram represents a hybrid neural network architecture combining:

1. **Input Processing**: Pre-processing, reward-based optimization, and voltage-controlled programming.

2. **Neuron Layers**: Standard feedforward connections with layer-specific boost/downsampling.

3. **Error Feedback**: Error terms (`e11`, `e12`, etc.) likely propagate back to adjust weights (`W11`, `W12`, etc.).

The architecture suggests a neuromorphic computing system where input signals are dynamically modulated before neuron activation, with explicit hardware support for reward-driven learning and voltage optimization. The parallel processing blocks imply scalability for multi-input or multi-output tasks.
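One way to read the interplay of error terms, weights, and the reward block is as a reward-gated update applied during the programming phase. The sketch below is an assumption made for illustration, not a rule shown in the diagram: the update `w_ij - rate * reward * e_ij`, the function name `program_step`, the `rate` parameter, and all values are hypothetical.

```python
# Hypothetical sketch: a reward-gated, error-driven update of a 2x2 weight array.
# The update rule is an assumed stand-in; the diagram does not specify one.

def program_step(weights, errors, reward, rate=0.5):
    """Return new weights, each computed as w_ij - rate * reward * e_ij."""
    return [
        [w - rate * reward * e for w, e in zip(w_row, e_row)]
        for w_row, e_row in zip(weights, errors)
    ]

W = [[0.5, 0.25],    # W11, W12 (made-up values)
     [1.0, 0.75]]    # W21, W22 (made-up values)
E = [[0.5, 0.0],     # e11, e12 (made-up values)
     [-0.5, 0.25]]   # e21, e22 (made-up values)

W_new = program_step(W, E, reward=1.0)      # reward present: weights move
W_gated = program_step(W, E, reward=0.0)    # no reward: weights unchanged
print(W_new, W_gated)
```

With the reward set to zero the weights stay as they were, which is the gating behavior one would expect if `REW` controls whether an update is applied at all.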