## Flowchart Diagram: Neural Network Architecture Comparison

### Overview

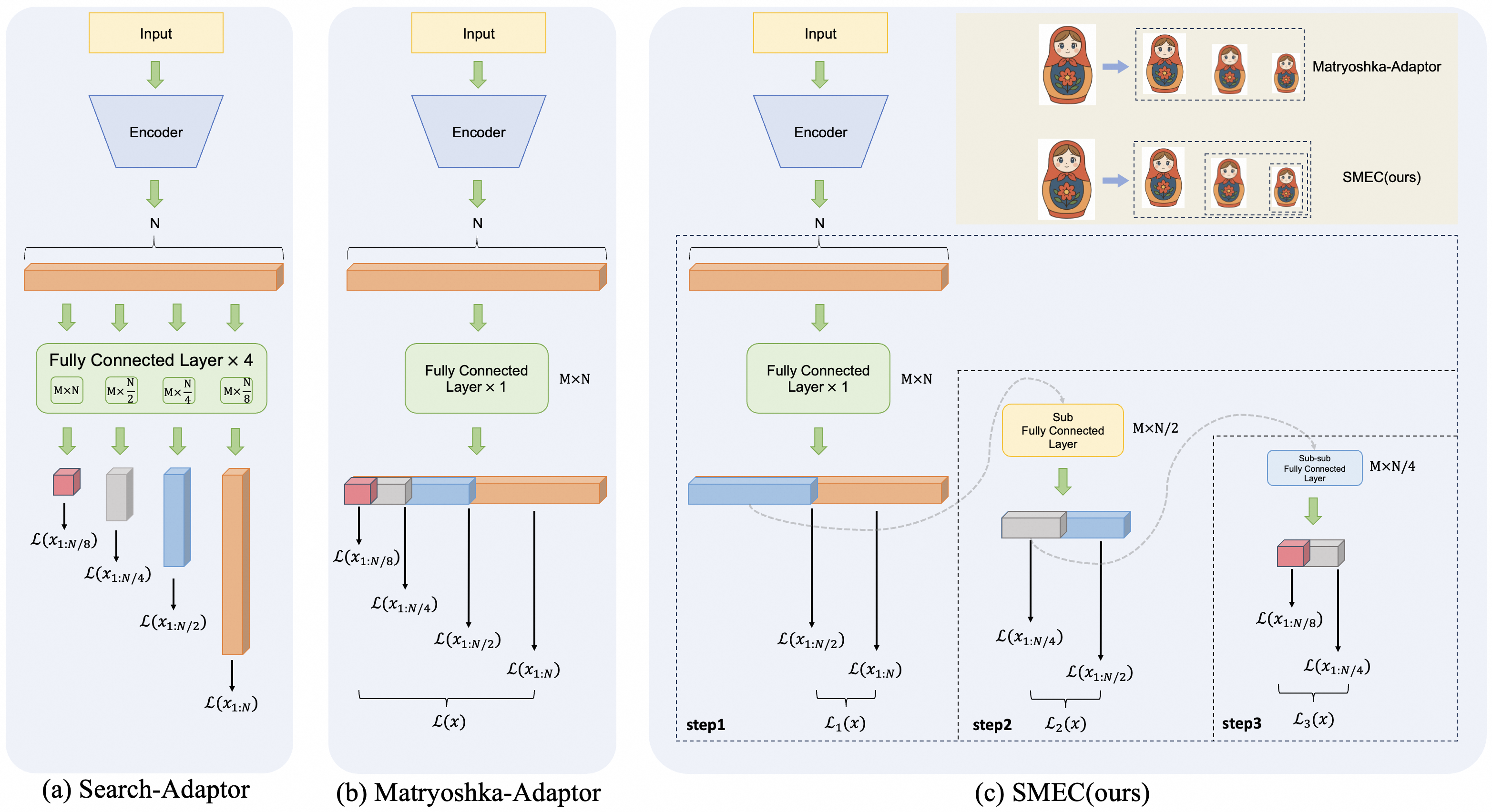

The diagram compares three neural network architectures (Search-Adaptor, Matryoshka-Adaptor, SMEC) through visual workflows. Each architecture processes input through an encoder, followed by distinct layer configurations and loss functions. The right side includes visual metaphors (Matryoshka dolls, SMEC logo) to represent the methods.

### Components/Axes

- **Input**: Top box labeled "Input" for all architectures.

- **Encoder**: Blue trapezoid labeled "Encoder" feeding into each architecture.

- **Layers**:

  - **Search-Adaptor (a)**: Four fully connected layers (M×N, M×N/2, M×N/4, M×N/8) with loss functions L(x₁₈), L(x₁₄), L(x₁₂), L(x₁₁).

  - **Matryoshka-Adaptor (b)**: Single fully connected layer (M×N) with loss functions L(x₁₈), L(x₁₄), L(x₁₂), L(x₁₁).

  - **SMEC (c)**: Three-step process with sub-layers (M×N/2, M×N/4, M×N/8) and loss functions L₁(x), L₂(x), L₃(x).

- **Legend**: Right side shows Matryoshka-Adaptor (dolls) and SMEC (logo).

### Detailed Analysis

1. **Search-Adaptor (a)**:

   - Input → Encoder → Four fully connected layers with progressively smaller dimensions.

   - Loss functions applied at each layer (L(x₁₈) to L(x₁₁)).

2. **Matryoshka-Adaptor (b)**:

   - Input → Encoder → Single fully connected layer (M×N).

   - Loss functions applied at four stages (L(x₁₈) to L(x₁₁)), even though there is only one layer.

3. **SMEC (c)**:

   - Input → Encoder → Three-step sub-layer process:

     - **Step 1**: M×N layer with loss L₁(x).

     - **Step 2**: M×N/2 layer with loss L₂(x).

     - **Step 3**: M×N/4 layer with loss L₃(x).
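Item 2 attaches four loss terms to a single layer. One way to read this is that each term is computed on a nested prefix of the same output vector (the first N/8, N/4, N/2, and N entries). The sketch below illustrates that reading only. The prefix interpretation of the subscripts, the `1 - cosine` placeholder loss, and the function names `prefix_widths` and `nested_losses` are all assumptions, not details taken from the diagram.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm


def prefix_widths(n, levels=4):
    """Nested widths, smallest first: n/8, n/4, n/2, n when levels=4."""
    return [n // (2 ** k) for k in reversed(range(levels))]


def nested_losses(query, doc):
    """One placeholder loss (1 - cosine) per truncated prefix.

    Assumption: the diagram's four loss terms act on prefixes of one
    shared embedding; the real loss form is not given in the diagram.
    """
    n = len(query)
    return {w: 1.0 - cosine(query[:w], doc[:w]) for w in prefix_widths(n)}


# Hypothetical 16-dimensional embeddings that disagree only in the first two entries.
query = [1.0, 0.0] + [1.0] * 14
doc = [0.0, 1.0] + [1.0] * 14
for width, loss in nested_losses(query, doc).items():
    print(width, round(loss, 3))
```

Under this reading, the shortest prefix is penalized most when the leading entries disagree, which is why a single layer can still receive four distinct training signals.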

### Key Observations

- **Complexity**: Search-Adaptor has the most layers (4), Matryoshka-Adaptor the fewest (1), and SMEC a moderate three-step process.

- **Loss Functions**: Search-Adaptor and Matryoshka-Adaptor share the same four loss terms (L(x₁₈), L(x₁₄), L(x₁₂), L(x₁₁)), whereas SMEC uses stepwise losses (L₁, L₂, L₃), one per step.

- **Visual Metaphors**: Matryoshka dolls represent Matryoshka-Adaptor’s nested structure; the SMEC logo emphasizes its stepwise approach.

### Interpretation

The diagram highlights trade-offs between architectural complexity and adaptability:

- **Search-Adaptor** prioritizes granularity with multiple layers but may increase computational cost.

- **Matryoshka-Adaptor** simplifies the process to a single layer, which may risk underfitting.

- **SMEC** balances complexity and efficiency through stepwise sub-layers, potentially offering better generalization.
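The cost side of these trade-offs can be made concrete with a rough weight count. The sketch below assumes each box in the diagram is a dense weight matrix with M rows and the listed number of columns, ignores biases, and uses the layer widths given under Components/Axes. The sizes `M = N = 1024` and the helper `weight_count` are hypothetical and chosen only for illustration.

```python
def weight_count(m, widths):
    """Total weights across dense layers of shape m x w (biases ignored)."""
    return sum(m * w for w in widths)


M, N = 1024, 1024  # hypothetical sizes, not taken from the diagram

# Output widths per architecture, as listed under Components/Axes.
layouts = {
    "Search-Adaptor": [N, N // 2, N // 4, N // 8],
    "Matryoshka-Adaptor": [N],
    "SMEC": [N // 2, N // 4, N // 8],
}

counts = {name: weight_count(M, widths) for name, widths in layouts.items()}
for name, total in counts.items():
    print(f"{name}: {total:,} weights")
```

Layer count and weight count need not rank the architectures the same way, so a count like this is only a first-order proxy for computational cost.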

The Matryoshka dolls and SMEC logo visually reinforce the conceptual differences between nested and stepwise designs, suggesting, though not demonstrating, that SMEC’s stepwise method is the more optimized of the three.