TECHNICAL ASSET FINGERPRINT

3fd11468c15fcfaa7d057324

FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

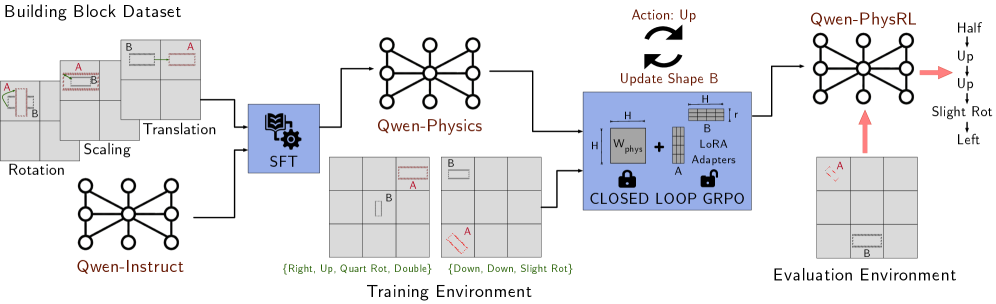

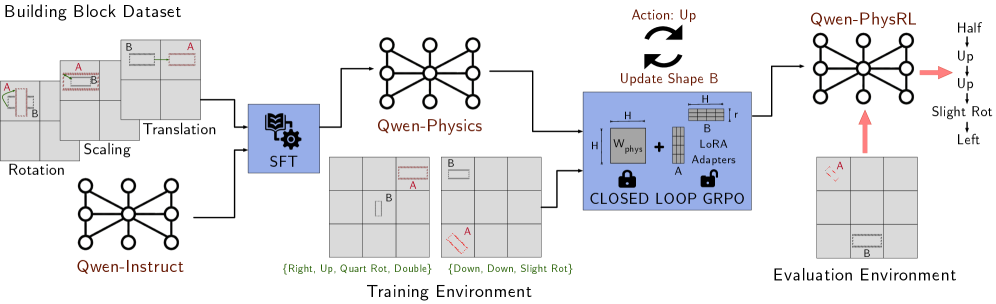

## Diagram: Building Block Dataset Workflow

### Overview

The image is a diagram illustrating a workflow for a building block dataset, likely used in a machine learning or AI context. It shows the process from initial data transformations to training and evaluation environments, incorporating components like Qwen-Instruct, Qwen-Physics, and a closed-loop GRPO.

### Components/Axes

* **Title:** Building Block Dataset

* **Initial Transformations (Left):**

    * Rotation: Shows a 3x3 grid with blocks A and B undergoing rotation.

    * Scaling: Shows a 3x3 grid with blocks A and B undergoing scaling.

    * Translation: Shows a 3x3 grid with blocks A and B undergoing translation.

* **Qwen-Instruct:** A network diagram labeled "Qwen-Instruct" below the initial transformations.

* **SFT:** A blue box containing a book and gear icon, labeled "SFT".

* **Qwen-Physics:** A network diagram labeled "Qwen-Physics".

* **Training Environment:**

    * Two 3x3 grids showing different block configurations.

    * Text below the grids: "{Right, Up, Quart Rot, Double} {Down, Down, Slight Rot}"

* **Closed Loop GRPO:** A blue box labeled "CLOSED LOOP GRPO" containing:

    * Wphys: A square labeled "Wphys" with height and width indicated by "H".

    * LoRA Adapters: A stack of blocks labeled "LoRA Adapters" with height "H" and width "r".

    * "+" symbol between Wphys and LoRA Adapters.

    * Padlock icons above "CLOSED LOOP GRPO"

    * "Update Shape B" above the box, with a circular arrow indicating feedback.

    * "Action: Up" above the circular arrow.

* **Qwen-PhysRL:** A network diagram labeled "Qwen-PhysRL".

* **Evaluation Environment:** A 3x3 grid showing blocks A and B.

* **Action Labels (Right of Qwen-PhysRL):**

    * Half

    * Up

    * Up

    * Slight Rot

    * Left

### Detailed Analysis

1. **Data Flow:** The workflow starts with initial transformations (Rotation, Scaling, Translation) applied to building blocks.

2. **Qwen-Instruct:** This component feeds into the SFT (likely Supervised Fine-Tuning) module.

3. **SFT and Qwen-Physics:** The output of SFT is processed by Qwen-Physics.

4. **Training Environment:** The training environment provides data for the Closed Loop GRPO.

5. **Closed Loop GRPO:** This module updates the shape B based on actions, creating a feedback loop. It combines Wphys and LoRA Adapters.

6. **Qwen-PhysRL and Evaluation:** The output of the GRPO is fed into Qwen-PhysRL, which interacts with the Evaluation Environment.

7. **Action Labels:** The actions "Half", "Up", "Up", "Slight Rot", and "Left" are associated with the Qwen-PhysRL component, indicating possible actions or outputs.

### Key Observations

* The diagram illustrates a closed-loop system for training and evaluating building block manipulations.

* The use of Qwen-Instruct, Qwen-Physics, and Qwen-PhysRL suggests a specific architecture or framework.

* The Closed Loop GRPO is a key component, incorporating both physical properties (Wphys) and learned adaptations (LoRA Adapters).

* The training environment provides specific actions like "Right, Up, Quart Rot, Double" and "Down, Down, Slight Rot".
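The "Wphys + LoRA Adapters" element is consistent with the standard LoRA formulation, in which a frozen weight matrix is augmented by a trainable low-rank product. The following is a minimal sketch under stated assumptions, not the diagram authors' implementation: it assumes `W_phys` is an H x H matrix and that the adapters are a pair `B` (H x r) and `A` (r x H), matching the "H" and "r" labels. All values and function names are illustrative.

```python
# Illustrative LoRA weight composition in plain Python (no external libraries).
# Assumption: W_phys is a frozen H x H matrix; B is H x r and A is r x H.

def matmul(X, Y):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*Y)] for row in X]

def add(X, Y):
    """Element-wise sum of two matrices of the same shape."""
    return [[a + b for a, b in zip(rx, ry)] for rx, ry in zip(X, Y)]

def lora_effective_weight(W, B, A, scale=1.0):
    """Return W + scale * (B @ A); W itself is never modified."""
    delta = matmul(B, A)
    return add(W, [[scale * v for v in row] for row in delta])

H, r = 3, 1
W_phys = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
B = [[0.0], [0.0], [0.0]]   # LoRA conventionally initialises B to zero
A = [[0.5, -0.5, 0.25]]

# With B at zero the adapted weight equals the frozen weight.
W_eff = lora_effective_weight(W_phys, B, A)

# Only the adapters would be trained: 2 * H * r values instead of H * H.
trainable = 2 * H * r
```

In this toy setting the adapters hold 6 trainable values against 9 in the frozen matrix; the saving grows as H increases relative to r.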

### Interpretation

The diagram outlines a system for training an AI agent to manipulate building blocks. The agent appears to be built in stages: supervised fine-tuning (SFT) of Qwen-Instruct, a physics-tuned model (Qwen-Physics), and a reinforcement learning stage that yields Qwen-PhysRL. The Closed Loop GRPO likely represents the mechanism that refines the agent's actions based on feedback from the environment. The "Action: Up" and "Update Shape B" elements suggest an iterative process in which the agent takes an action, shape B is updated accordingly, and the result is evaluated. The LoRA adapters likely allow the model to be adapted efficiently without retraining all of its weights. Taken together, the workflow covers dataset construction, supervised fine-tuning, closed-loop reinforcement learning, and evaluation on block-manipulation tasks.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Diagram: Qwen-Physics Training and Evaluation Pipeline

### Overview

This diagram illustrates the training and evaluation pipeline for the Qwen-Physics model. It depicts the flow of data from a "Building Block Dataset" through a "Training Environment" to an "Evaluation Environment". The pipeline involves stages like Translation, Scaling, Rotation, Supervised Fine-Tuning (SFT), and Closed Loop GRPO, culminating in the Qwen-PhysRL model.

### Components/Axes

The diagram is structured into three main sections: "Building Block Dataset" (left), "Training Environment" (center), and "Evaluation Environment" (right). Within these sections, key components are:

* **Building Block Dataset:** Contains images of block arrangements labeled 'A' and 'B', undergoing transformations like Translation, Scaling, and Rotation.

* **Qwen-Physics:** A graph-based representation of the physics engine.

* **Qwen-Instruct:** Another graph-based representation, likely used for instruction following.

* **SFT (Supervised Fine-Tuning):** Represented by a gear icon within a blue box.

* **LoRA Adapters:** A component within the Closed Loop GRPO stage, represented by a blue box with 'H' and 'W' inside, and 'A' and 'B' connected by a plus sign.

* **Closed Loop GRPO:** Represented by a locked padlock icon.

* **Qwen-PhysRL:** The final model, also a graph-based representation.

* **Evaluation Environment:** Contains images of block arrangements labeled 'A' and 'B'.

* **Action:** "Up" with a circular arrow indicating an update.

* **Update Shape B:** Text indicating the action affects shape B.

* **Training Environment Labels:** "(Right, Up, Quart Rot, Double)" and "(Down, Down, Slight Rot)".

* **Evaluation Environment Labels:** "Half", "Up", "Slight Rot", "Left".

### Detailed Analysis / Content Details

The diagram shows a sequential flow of information.

1. **Building Block Dataset:** The initial stage presents images of block arrangements. The blocks are labeled 'A' and 'B'. These images are subjected to transformations: Translation, Scaling, and Rotation. Below these transformations is a graph representation of Qwen-Instruct.

2. **Training Environment:** The transformed data then flows into the Training Environment. Here, the data is processed through SFT, which then feeds into a component involving LoRA Adapters and Closed Loop GRPO. The training environment also shows two sets of block arrangements with labels indicating the training conditions: "(Right, Up, Quart Rot, Double)" and "(Down, Down, Slight Rot)".

3. **Evaluation Environment:** The output of the training process (Qwen-PhysRL) is then used in the Evaluation Environment. The evaluation environment shows block arrangements with labels indicating the evaluation conditions: "Half", "Up", "Slight Rot", "Left". An arrow points from Qwen-PhysRL to the evaluation environment.

4. **Qwen-Physics:** A graph representation is shown between the SFT and the LoRA Adapters/Closed Loop GRPO.

5. **Action/Update:** An "Action: Up" with a circular arrow indicates an iterative update process, specifically affecting "Update Shape B".
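The stage ordering described above can be written down as a small data structure. This is a sketch only: the `Stage` class and `lineage` helper are hypothetical names introduced for illustration, and the ordering (Qwen-Instruct to Qwen-Physics via SFT, then to Qwen-PhysRL via Closed Loop GRPO) is inferred from the description rather than confirmed by the source.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Stage:
    """One training stage: a named process that turns one model into another."""
    name: str
    input_model: str
    output_model: str

# Ordering inferred from the diagram description (an assumption).
PIPELINE = [
    Stage("SFT", "Qwen-Instruct", "Qwen-Physics"),
    Stage("Closed Loop GRPO", "Qwen-Physics", "Qwen-PhysRL"),
]

def lineage(pipeline):
    """Return the chain of models, checking that each stage consumes the previous output."""
    models = [pipeline[0].input_model]
    for stage in pipeline:
        if stage.input_model != models[-1]:
            raise ValueError(f"{stage.name} expects {stage.input_model}, got {models[-1]}")
        models.append(stage.output_model)
    return models

print(" -> ".join(lineage(PIPELINE)))
```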

### Key Observations

* The pipeline is designed for iterative refinement, as indicated by the "Closed Loop GRPO" and the "Action/Update" components.

* The use of LoRA Adapters suggests a parameter-efficient fine-tuning approach.

* The diagram highlights the importance of both supervised learning (SFT) and reinforcement learning (RL) in the training process.

* The training and evaluation environments have specific conditions defined by the labels.

* The graph representations of Qwen-Instruct, Qwen-Physics, and Qwen-PhysRL suggest a focus on relational reasoning and physics understanding.

### Interpretation

The diagram illustrates a sophisticated training pipeline for a physics-based AI model (Qwen-Physics). The pipeline begins with a dataset of building block arrangements and uses transformations to create a diverse training set. Supervised fine-tuning (SFT) is used to initialize the model, followed by a closed-loop reinforcement learning process (GRPO) with LoRA adapters for efficient adaptation. The evaluation environment tests the model's ability to generalize to new scenarios. The labels associated with the training and evaluation environments suggest that the model is being trained and tested on a variety of conditions, including position, rotation, and scale. The use of graph representations suggests that the model is designed to reason about the relationships between objects in the environment. The iterative nature of the closed-loop GRPO indicates a focus on continuous improvement and refinement of the model's performance. The diagram suggests a system designed to learn and apply physics principles to solve problems involving block arrangements.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Diagram: Qwen-Physics Reinforcement Learning Training Pipeline

### Overview

The image is a technical flowchart illustrating a machine learning training pipeline for a physics-aware model named "Qwen-Physics" and its reinforcement learning variant "Qwen-PhysRL." The diagram details the flow from a dataset, through supervised fine-tuning (SFT), into a training environment with a closed-loop reinforcement learning algorithm (GRPO), and finally to an evaluation environment. The process involves manipulating building blocks (labeled A and B) within a grid world.

### Components/Axes

The diagram is organized into several distinct regions and components, flowing generally from left to right.

**1. Header (Top-Left):**

* **Title:** "Building Block Dataset"

* **Content:** A series of grid-based examples showing transformations applied to two objects, labeled **A** (red outline) and **B** (blue outline).

* **Transformation Labels:** "Rotation", "Scaling", "Translation". These are positioned near the corresponding example grids.
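The three transformation types can be illustrated on a grid shape stored as a set of occupied cells. This is a hedged sketch: the cell-set representation, the function names, and the choice of integer scale factors and quarter-turn rotations are assumptions made for illustration, since the diagram does not specify how the dataset encodes shapes.

```python
# Shapes are sets of occupied (x, y) grid cells (an assumed representation).

def translate(cells, dx, dy):
    """Shift every cell by (dx, dy)."""
    return {(x + dx, y + dy) for x, y in cells}

def rotate_quarter(cells):
    """Rotate 90 degrees counter-clockwise about the origin: (x, y) -> (-y, x)."""
    return {(-y, x) for x, y in cells}

def scale(cells, k):
    """Scale by a positive integer factor k: each cell becomes a k x k block."""
    return {(x * k + i, y * k + j) for x, y in cells for i in range(k) for j in range(k)}

shape_b = {(0, 0), (1, 0)}           # a horizontal two-cell bar

moved = translate(shape_b, 1, 2)     # bar shifted right by 1 and up by 2
turned = rotate_quarter(shape_b)     # bar now vertical
doubled = scale(shape_b, 2)          # bar is now 4 cells wide and 2 tall
```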

**2. Initial Model & Training Start (Left):**

* **Model Icon:** A neural network diagram labeled **"Qwen-Instruct"**.

* **Process Block:** A blue box with a gear icon labeled **"SFT"** (Supervised Fine-Tuning). Arrows connect the "Building Block Dataset" and "Qwen-Instruct" to this block.

**3. Core Model (Center-Top):**

* **Model Icon:** A larger neural network diagram labeled **"Qwen-Physics"**. An arrow flows from the "SFT" block to this model.

**4. Training Environment (Center-Bottom):**

* **Title:** **"Training Environment"** (positioned at the bottom center).

* **Content:** Two example grid states.

    * **Left Grid:** Shows object **A** (red) and object **B** (blue). Below it, a text string in green: **`{Right, Up, Quart Rot, Double}`**.

    * **Right Grid:** Shows object **A** (red, rotated) and object **B** (blue). Below it, a text string in green: **`{Down, Down, Slight Rot}`**.

* **Flow:** An arrow points from "Qwen-Physics" to this environment.

**5. Learning Algorithm Block (Center-Right):**

* **Title:** **"CLOSED LOOP GRPO"** (inside a large blue box).

* **Internal Components:**

    * A weight matrix labeled **`W_physics`** with a lock icon.

    * A plus sign (`+`).

    * A diagram labeled **"LoRA Adapters"** showing matrices **H**, **B**, **r**, and **A**.

    * A lock icon next to the LoRA Adapters.

* **External Annotations:**

    * Above the box: Two curved arrows forming a loop. Labels: **"Action: Up"** and **"Update Shape B"**.

    * An arrow flows from the "Training Environment" into this block.

**6. Evaluation Environment & Final Model (Right):**

* **Model Icon:** A neural network diagram labeled **"Qwen-PhysRL"**. An arrow flows from the "CLOSED LOOP GRPO" block to this model.

* **Title:** **"Evaluation Environment"** (positioned at the bottom right).

* **Content:** A single grid state showing object **A** (red) and object **B** (blue). A red arrow points from this grid up to the "Qwen-PhysRL" model.

* **Output Action Sequence:** To the right of the "Qwen-PhysRL" model, a vertical list of actions in black text:

    * **Half**

    * **Up**

    * **Up**

    * **Slight Rot**

    * **Left**

    * A pink arrow points from the model to this list.

### Detailed Analysis

The diagram depicts a multi-stage training and evaluation process:

1. **Data & Initialization:** A "Building Block Dataset" containing examples of object transformations (Rotation, Scaling, Translation) is used. An initial model, "Qwen-Instruct," is the starting point.

2. **Supervised Fine-Tuning (SFT):** The dataset and the initial model are used to perform SFT, resulting in the "Qwen-Physics" model.

3. **Reinforcement Learning Loop:**

    * The "Qwen-Physics" model interacts with a "Training Environment" consisting of grid worlds with objects A and B.

    * The model's actions (e.g., `{Right, Up, Quart Rot, Double}`) are fed into a "CLOSED LOOP GRPO" (Group Relative Policy Optimization) algorithm.

    * This algorithm contains a frozen physics weight matrix (`W_physics`) and trainable LoRA adapters, indicating parameter-efficient fine-tuning.

    * The loop annotation ("Action: Up" / "Update Shape B") suggests the policy is updated based on the outcome of actions taken in the environment.

4. **Final Model & Evaluation:** The output of the GRPO training is the "Qwen-PhysRL" model. This model is then tested in a separate "Evaluation Environment." The diagram shows it generating a specific sequence of actions ("Half, Up, Up, Slight Rot, Left") in response to a given grid state.
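The core idea of Group Relative Policy Optimization is that several candidate outputs are sampled for the same prompt and each is scored relative to its group. The sketch below shows only that normalisation step. The reward values are made up, the use of population standard deviation is an assumption, and nothing here reflects how the diagram's authors compute rewards for block arrangements.

```python
from statistics import mean, pstdev

def group_relative_advantages(rewards, eps=1e-8):
    """Normalise rewards within one sampled group: (r - mean) / (std + eps)."""
    mu = mean(rewards)
    sigma = pstdev(rewards)  # population std; an assumption for this sketch
    return [(r - mu) / (sigma + eps) for r in rewards]

# Hypothetical rewards for four action sequences sampled for the same grid state.
rewards = [1.0, 0.0, 0.0, 1.0]
advantages = group_relative_advantages(rewards)

# Sequences scoring above the group mean get a positive advantage,
# those below get a negative one; a uniform group yields all zeros.
flat = group_relative_advantages([2.0, 2.0, 2.0])
```

Because the baseline is the group mean, this step needs no separate value network, which is one reason GRPO pairs naturally with lightweight adapter training.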

### Key Observations

* **Two-Phase Training:** The pipeline clearly separates initial supervised learning (SFT) from subsequent reinforcement learning (GRPO).

* **Parameter Efficiency:** The use of LoRA Adapters within the GRPO block highlights a focus on efficient adaptation of the large "Qwen-Physics" model.

* **Environment Abstraction:** The "Training" and "Evaluation" environments are represented as grid worlds with simple objects (A, B) and discrete action spaces (movement, rotation).

* **Action Representation:** Actions are represented as sequences of commands (e.g., "Quart Rot" for quarter rotation, "Slight Rot").

* **Visual Coding:** Objects are consistently color-coded (A=red, B=blue) across all grids. The learning algorithm components are highlighted in a distinct blue box.
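To make the discrete action vocabulary concrete, the sketch below applies an action sequence to a simple shape state. Every numeric meaning is an assumption: moves are taken to be one grid unit, "Quart Rot" to be 90 degrees, "Double" and "Half" to be scale factors of 2 and 0.5, and `SLIGHT_ROT_DEG` is a hypothetical placeholder because the diagram does not say how large a slight rotation is. The `ShapeState` class and function names are likewise illustrative.

```python
from dataclasses import dataclass, replace

SLIGHT_ROT_DEG = 15.0  # hypothetical value; not given in the diagram

@dataclass(frozen=True)
class ShapeState:
    x: int = 0
    y: int = 0
    angle: float = 0.0   # degrees
    scale: float = 1.0

def apply_action(s, action):
    """Return the state after one action (assumed semantics, see lead-in)."""
    if action == "Right":
        return replace(s, x=s.x + 1)
    if action == "Left":
        return replace(s, x=s.x - 1)
    if action == "Up":
        return replace(s, y=s.y + 1)
    if action == "Down":
        return replace(s, y=s.y - 1)
    if action == "Quart Rot":
        return replace(s, angle=(s.angle + 90.0) % 360.0)
    if action == "Slight Rot":
        return replace(s, angle=(s.angle + SLIGHT_ROT_DEG) % 360.0)
    if action == "Double":
        return replace(s, scale=s.scale * 2.0)
    if action == "Half":
        return replace(s, scale=s.scale * 0.5)
    raise ValueError(f"unknown action: {action}")

def rollout(state, actions):
    """Apply actions in order, mirroring the 'Action' then 'Update Shape B' loop."""
    for action in actions:
        state = apply_action(state, action)
    return state

train_example = rollout(ShapeState(), ["Right", "Up", "Quart Rot", "Double"])
eval_example = rollout(ShapeState(), ["Half", "Up", "Up", "Slight Rot", "Left"])
```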

### Interpretation

This diagram outlines a methodology for imbuing a large language model (Qwen) with physics-based reasoning capabilities through a structured, two-stage training regimen.

* **Purpose:** The goal is to create a model ("Qwen-PhysRL") that can understand and predict the outcomes of physical interactions (like moving and rotating objects in a grid) and generate appropriate action sequences to achieve a desired state.

* **Relationships:** The "Building Block Dataset" provides the foundational knowledge of object transformations. SFT injects this knowledge into the base model. The RL loop (GRPO) then refines this knowledge by having the model actively experiment in an environment, learning from trial and error to optimize its policy, with the frozen `W_physics` likely preserving core physical understanding while the LoRA adapters learn task-specific strategies.

* **Notable Design Choice:** The "CLOSED LOOP" nature of the GRPO is critical. It implies the model's own actions and their results in the environment are used to continuously update its policy, creating a self-improving system for physical reasoning tasks. The separation of training and evaluation environments tests the model's ability to generalize its learned physics policies to new, unseen configurations.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Diagram: Physics Simulation Training and Evaluation Pipeline

### Overview

The diagram illustrates a closed-loop physics simulation pipeline for training and evaluating a reinforcement learning (RL) agent. It integrates dataset generation, physics modeling, training with gradient-based optimization, and evaluation in a block-stacking environment. Key components include data augmentation, physics simulation, and action-space exploration.

### Components/Axes

1. **Building Block Dataset**

- Contains blocks labeled A and B with transformations:

- Rotation (e.g., 90°, 180°)

- Scaling (e.g., 0.5x, 2x)

- Translation (e.g., +1 unit, -2 units)

- Visualized as grid-aligned blocks with positional annotations.

2. **Qwen-Instruct**

- A graph-based node (central hub) connected to the Building Block Dataset.

- Represents instruction processing or policy initialization.

3. **Qwen-Physics**

- A graph-based node connected to Qwen-Instruct.

- Models physical interactions (e.g., gravity, friction) for block stacking.

4. **Training Environment**

- Contains:

- **SFT (Supervised Fine-Tuning)**: A blue box with a gear icon, processing transformed blocks.

- **Closed-Loop GRPO**: A locked box with "W_phys" (physics weight) and "LoRA Adapters" for parameter-efficient tuning.

- Actions include directional movements (Right, Up, Down) and rotations (Quart Rot, Slight Rot).

5. **Evaluation Environment**

- Labeled "Qwen-PhysRL" with a graph-based node.

- Actions: Half, Up, Up, Slight Rot, Left.

- Visualized with arrows indicating action outcomes (e.g., "Up" moves block A upward).

6. **Action Feedback Loop**

- Arrows indicate iterative updates:

- "Action: Up" → "Update Shape B"

- Closed-loop GRPO adjusts physics weights (W_phys) based on evaluation results.

### Detailed Analysis

- **Dataset Generation**: Blocks A and B undergo randomized transformations (rotation, scaling, translation) to create diverse training scenarios.

- **Physics Modeling**: Qwen-Physics simulates real-world constraints (e.g., block stability, collision detection).

- **Training**:

- SFT initializes the policy using supervised examples.

- Closed-loop GRPO refines the policy using LoRA adapters to optimize physics-aware actions.

- **Evaluation**: Qwen-PhysRL tests the agent’s ability to execute precise actions (e.g., "Slight Rot" rotates block A 45°).

### Key Observations

- **Action-Space Complexity**: The evaluation environment includes both discrete (Up/Down) and continuous (Slight Rot) actions, suggesting hybrid control strategies.

- **Physics Integration**: LoRA adapters in the closed-loop GRPO indicate dynamic adjustment of physics parameters during training.

- **Block Interactions**: Block B is frequently updated ("Update Shape B"), implying it acts as a movable target or obstacle.

### Interpretation

This pipeline demonstrates a physics-informed RL framework for robotic manipulation tasks. The closed-loop GRPO with Lo
