
## Line Chart: Training Accuracy Comparison

### Overview

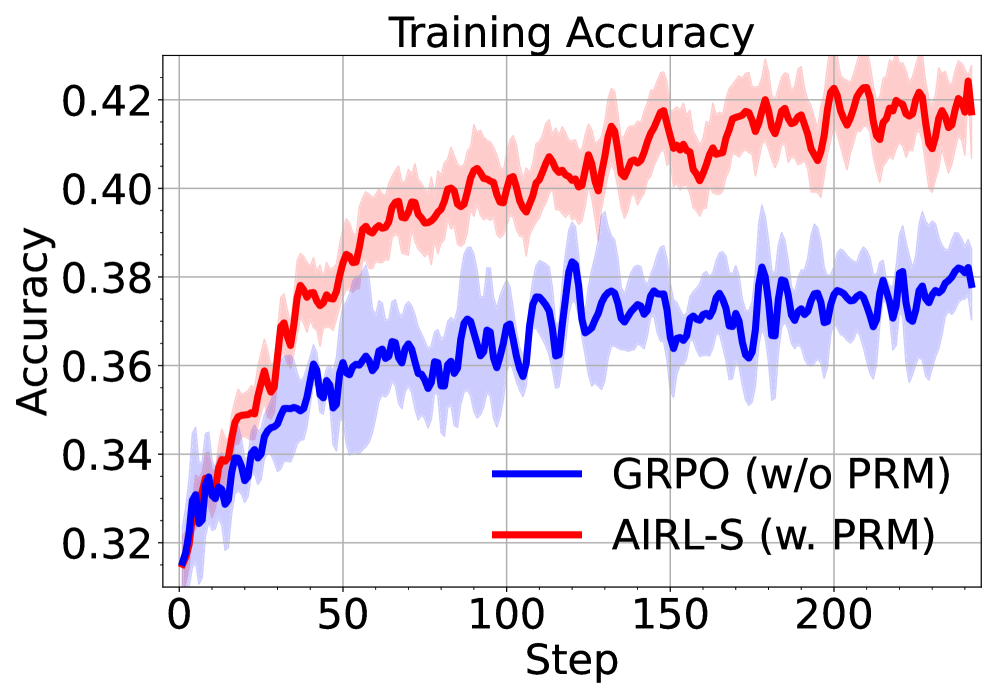

The image displays a line chart titled "Training Accuracy," comparing the performance of two machine learning training methods over 200+ steps. The chart plots accuracy values on the y-axis against training steps on the x-axis. Each method is represented by a solid line (mean performance) and a semi-transparent shaded region (likely representing variance or confidence intervals).

### Components/Axes

* **Chart Title:** "Training Accuracy" (centered at the top).
* **Y-Axis:**
    * **Label:** "Accuracy" (rotated vertically on the left side).
    * **Scale:** Linear scale ranging from 0.32 to 0.42.
    * **Tick Marks:** Major ticks at 0.32, 0.34, 0.36, 0.38, 0.40, 0.42.
* **X-Axis:**
    * **Label:** "Step" (centered at the bottom).
    * **Scale:** Linear scale from 0 to just beyond 200.
    * **Tick Marks:** Major ticks at 0, 50, 100, 150, 200.
* **Legend:** Located in the bottom-right quadrant of the chart area.
    * **Blue Line:** Labeled "GRPO (w/o PRM)".
    * **Red Line:** Labeled "AIRL-S (w. PRM)".
* **Data Series:**
    1. **Blue Line (GRPO w/o PRM):** A solid blue line with a light blue shaded region around it.
    2. **Red Line (AIRL-S w. PRM):** A solid red line with a light red shaded region around it.

### Detailed Analysis

**Trend Verification & Data Points:**

* **GRPO (w/o PRM) - Blue Line:**
    * **Visual Trend:** The line shows a rapid initial increase from step 0 to approximately step 50, after which the rate of improvement slows significantly, entering a noisy plateau phase from step 100 onward.
    * **Approximate Data Points:**
        * Step 0: ~0.32
        * Step 50: ~0.36
        * Step 100: ~0.37
        * Step 150: ~0.375
        * Step 200: ~0.38
    * **Uncertainty (Shaded Region):** The blue shaded region is relatively narrow initially but widens considerably after step 50, indicating increased variability in performance. Its width suggests that accuracy for this method fluctuates within a band of approximately ±0.01 to ±0.015 around the mean line during the plateau phase.
* **AIRL-S (w. PRM) - Red Line:**
    * **Visual Trend:** The line exhibits a strong, sustained upward trend throughout the entire training period shown. The slope is steepest initially and remains positive, though less steep, after step 100. It consistently stays above the blue line after the first ~20 steps.
    * **Approximate Data Points:**
        * Step 0: ~0.32 (similar starting point to blue line)
        * Step 50: ~0.385
        * Step 100: ~0.405
        * Step 150: ~0.415
        * Step 200: ~0.42
    * **Uncertainty (Shaded Region):** The red shaded region also widens as training progresses, similar to the blue region. Its width suggests a spread of approximately ±0.01 to ±0.02 around the mean red line, particularly in the later steps.
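As a rough check on the trend descriptions above, the approximate readings can be turned into per-interval gains. The following Python sketch is illustrative only: the values are the approximate readings listed above, not data extracted from the figure, and the helper name `interval_gains` is hypothetical.

```python
# Approximate mean accuracies read off the chart at each labeled step.
# These are the rough readings from the lists above, not exact data.
steps = [0, 50, 100, 150, 200]
grpo = [0.32, 0.36, 0.37, 0.375, 0.38]       # GRPO (w/o PRM), blue line
airl_s = [0.32, 0.385, 0.405, 0.415, 0.42]   # AIRL-S (w. PRM), red line


def interval_gains(values):
    """Return the accuracy gain over each consecutive interval."""
    # Rounding to 3 decimals hides floating-point noise in the differences.
    return [round(b - a, 3) for a, b in zip(values, values[1:])]


grpo_gains = interval_gains(grpo)
airl_s_gains = interval_gains(airl_s)

# Print one row per 50-step interval for a side-by-side comparison.
for start, end, g, a in zip(steps, steps[1:], grpo_gains, airl_s_gains):
    print(f"steps {start:>3}-{end:<3}  GRPO: {g:+.3f}  AIRL-S: {a:+.3f}")
```

Under these readings, GRPO gains about 0.04 in the first 50 steps and about 0.005 in each of the last two intervals, while AIRL-S gains about 0.065, 0.02, 0.01, and 0.005 over the four intervals.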

**Spatial Grounding:** The legend is positioned in the bottom-right, clearly associating the blue color with "GRPO (w/o PRM)" and the red color with "AIRL-S (w. PRM)". The lines and their corresponding shaded regions maintain these color assignments throughout the chart.

### Key Observations

1. **Performance Gap:** A clear and growing performance gap emerges early in training. By step 50, AIRL-S (w. PRM) is already about 0.025 accuracy points higher than GRPO (w/o PRM). This gap widens to approximately 0.04 points by step 200.

2. **Learning Dynamics:** GRPO (w/o PRM) appears to plateau at an accuracy of roughly 0.37-0.38 after step 100. In contrast, AIRL-S (w. PRM) continues to improve through step 200, although the approximate readings suggest that its gains also shrink over time (about +0.02 from step 50 to 100 versus about +0.005 from step 150 to 200).

3. **Initial Conditions:** Both methods start at nearly identical accuracy (~0.32) at step 0.

4. **Noise/Variance:** Both training processes exhibit significant step-to-step noise, as evidenced by the jaggedness of the mean lines and the width of the shaded bands. The spread appears comparable between the two methods, with the red band slightly wider in the later steps.
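The gap figures in the first observation follow from simple subtraction of the approximate readings. A minimal sketch of that arithmetic, assuming the readings listed under Detailed Analysis (the function name `accuracy_gaps` is hypothetical):

```python
# Approximate readings per step: (GRPO w/o PRM, AIRL-S w. PRM).
# Values are the rough chart readings from Detailed Analysis, not exact data.
readings = {
    0: (0.32, 0.32),
    50: (0.36, 0.385),
    100: (0.37, 0.405),
    150: (0.375, 0.415),
    200: (0.38, 0.42),
}


def accuracy_gaps(readings):
    """Return AIRL-S minus GRPO accuracy at each step, rounded to 3 decimals."""
    return {step: round(airl_s - grpo, 3) for step, (grpo, airl_s) in readings.items()}


gaps = accuracy_gaps(readings)
for step, gap in gaps.items():
    print(f"step {step:>3}: gap = {gap:+.3f}")
```

With these readings the gap is about 0.025 at step 50, 0.035 at step 100, and 0.04 at both step 150 and step 200.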

### Interpretation

The chart presents a comparative analysis of two training algorithms, likely in the domain of reinforcement learning or iterative model optimization, given the "Step" axis and the acronyms (GRPO, AIRL-S, PRM).

* **What the data suggests:** The method "AIRL-S (w. PRM)" learns faster and reaches higher accuracy by step 200 than "GRPO (w/o PRM)" on this specific task, as measured by training accuracy. The inclusion of "PRM" (the chart does not define the term) may be a contributing factor in the sustained improvement, but the chart alone cannot confirm this.

* **Relationship between elements:** Plotting both methods on the same axes holds the task and evaluation metric fixed, so the curves are directly comparable. However, the two configurations differ in both the algorithm (AIRL-S vs. GRPO) and the presence or absence of PRM, so the chart shows their combined effect and cannot separate the contribution of each. The shared starting point suggests that the divergence arises during training rather than from different initial model states.

* **Notable patterns/anomalies:** The most significant pattern is the divergence in learning trajectories. The plateau of the blue line suggests that GRPO without PRM may have reached a local optimum or a limit imposed by its algorithmic structure. The continued rise of the red line suggests that AIRL-S with PRM benefits from a more favorable optimization landscape, avoids premature convergence, or incorporates a mechanism (possibly the PRM) that supports ongoing improvement. The high variability in both curves is typical of stochastic training processes and does not obscure the clear difference in trend.