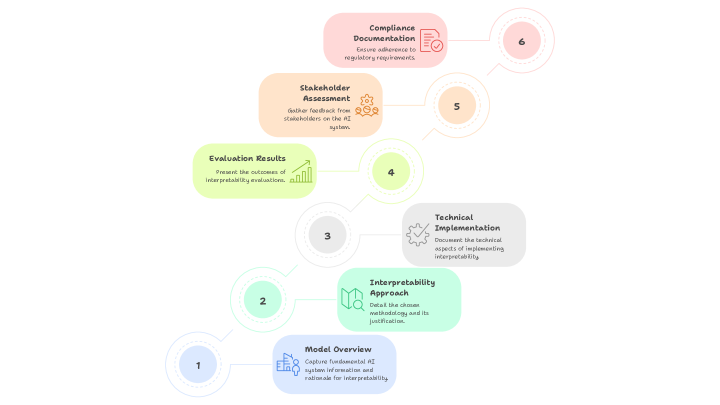

## Process Diagram: AI Interpretability Documentation Workflow

### Overview

The image displays a six-step sequential process diagram illustrating the workflow for documenting AI system interpretability. The steps are arranged in a zigzag pattern, ascending from the bottom-left to the top-right of the frame. Each step sits in a rounded rectangular box of a distinct pastel color and is connected by a thin, curved line to a numbered circle that indicates its place in the sequence. The overall aesthetic is clean and modern, with a soft color palette.

### Components

The diagram consists of six primary components (steps), each with a title, a descriptive text block, and an associated icon. The steps are connected by lines to numbered circles (1 through 6) which establish the order of operations.

**Numbered Sequence Circles:**

* **1:** Light blue circle, bottom-left.

* **2:** Light green circle, positioned above and to the right of step 1.

* **3:** Light grey circle, positioned above and to the right of step 2.

* **4:** Light yellow-green circle, positioned above and to the right of step 3.

* **5:** Light orange circle, positioned above and to the right of step 4.

* **6:** Light pink circle, top-right.

**Process Steps (in sequential order):**

1. **Step 1 (Bottom-Left):**

* **Color:** Light blue box.

* **Title:** `Model Overview`

* **Description:** `Capture fundamental AI model characteristics and rationale for interpretability.`

* **Icon:** A simple line drawing of a brain or neural network.

2. **Step 2:**

* **Color:** Light green box.

* **Title:** `Interpretability Approach`

* **Description:** `Detail the chosen interpretability method and its justification.`

* **Icon:** A magnifying glass over a document or chart.

3. **Step 3:**

* **Color:** Light grey box.

* **Title:** `Technical Implementation`

* **Description:** `Document the technical aspects of implementing interpretability.`

* **Icon:** A gear or cogwheel.

4. **Step 4:**

* **Color:** Light yellow-green box.

* **Title:** `Evaluation Results`

* **Description:** `Present the outcomes of interpretability evaluations.`

* **Icon:** A bar chart with an upward trend.

5. **Step 5:**

* **Color:** Light orange box.

* **Title:** `Stakeholder Assessment`

* **Description:** `Gather feedback from relevant stakeholders on the AI system.`

* **Icon:** A group of three stylized people figures.

6. **Step 6 (Top-Right):**

* **Color:** Light pink box.

* **Title:** `Compliance Documentation`

* **Description:** `Ensure adherence to regulatory requirements.`

* **Icon:** A document with a checkmark seal.

### Detailed Analysis

The diagram outlines a linear, six-step documentation process for AI interpretability. The flow is directional, moving from foundational understanding (Model Overview) through method selection, technical implementation, evaluation of results, and stakeholder feedback to compliance documentation.

* **Spatial Flow:** The process begins at the bottom-left (Step 1) and progresses diagonally upward to the top-right (Step 6), suggesting a building or ascending workflow.

* **Visual Grouping:** Each step is visually distinct due to its unique color, but the consistent shape and connecting lines unify them as parts of a single process.

* **Text Transcription:** All text within the six boxes has been transcribed verbatim above. The language is technical and action-oriented (e.g., "Capture," "Detail," "Document," "Present," "Gather," "Ensure").

### Key Observations

1. **Logical Progression:** The sequence follows a logical project lifecycle: define the subject (1), choose a method (2), implement it (3), evaluate it (4), gather stakeholder feedback (5), and finalize for compliance (6).

2. **Stakeholder Inclusion:** Step 5 explicitly introduces human elements ("Stakeholder Assessment") after the technical work is evaluated, indicating that interpretability is not just a technical exercise but also a communicative and social one.

3. **Regulatory Endpoint:** The final step is "Compliance Documentation," highlighting that the ultimate goal of this interpretability process may be to meet external legal or ethical standards.

4. **Iconography:** Each step has a simple, relevant icon that reinforces its title (e.g., a gear for "Technical Implementation," people for "Stakeholder Assessment").

### Interpretation

This diagram represents a **structured framework for creating comprehensive documentation for an AI system's interpretability features.** It is not a generic software development lifecycle but a specialized process focused on making an AI model's decisions understandable and accountable.

The workflow suggests that true AI interpretability requires more than just technical implementation (Step 3). It necessitates:

* **A clear starting rationale** (Why do we need to interpret this model? - Step 1).

* **A justified methodology** (How will we interpret it? - Step 2).

* **Validation through evaluation** (Did our method work? - Step 4).

* **Social validation** (Do stakeholders understand and trust the explanations? - Step 5).

* **Formalization for governance** (Can we prove compliance? - Step 6).

The placement of "Compliance Documentation" as the final output implies that the entire process is geared towards producing an auditable artifact. This positions AI interpretability as a critical component of responsible AI governance, risk management, and regulatory adherence. The diagram serves as a checklist or roadmap that teams can use to confirm they have addressed every necessary dimension (technical, evaluative, social, and legal) when documenting how and why their AI models make decisions.
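
One way to make the checklist reading concrete is to treat the six step titles as an ordered list and track which ones already have written content. The sketch below is illustrative only: the step titles are taken from the diagram, while `DocumentationChecklist`, its methods, and the sample text are hypothetical names and placeholders, not part of the diagram or of any existing tool.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional

# Step titles transcribed from the diagram, in the order given by the numbered circles.
STEP_TITLES = (
    "Model Overview",
    "Interpretability Approach",
    "Technical Implementation",
    "Evaluation Results",
    "Stakeholder Assessment",
    "Compliance Documentation",
)


@dataclass
class DocumentationChecklist:
    """Hypothetical tracker mapping each diagram step to the text written for it."""

    sections: Dict[str, str] = field(default_factory=dict)

    def record(self, title: str, content: str) -> None:
        """Store content for a step; reject titles that are not in the diagram."""
        if title not in STEP_TITLES:
            raise ValueError(f"unknown step: {title!r}")
        self.sections[title] = content

    def missing(self) -> List[str]:
        """Return steps that have no non-empty content, in diagram order."""
        return [t for t in STEP_TITLES if not self.sections.get(t, "").strip()]

    def next_step(self) -> Optional[str]:
        """Return the first undocumented step, or None when all six are covered."""
        remaining = self.missing()
        return remaining[0] if remaining else None


# Placeholder content, for illustration only.
checklist = DocumentationChecklist()
checklist.record("Model Overview", "Placeholder summary of the model and its rationale.")
checklist.record("Evaluation Results", "Placeholder summary of evaluation outcomes.")

print(checklist.next_step())  # first step, in diagram order, that still lacks content
print(checklist.missing())    # every step that still lacks content
```

Keeping the titles in a fixed tuple preserves the diagram's ordering, so `next_step` always points at the earliest gap even when later steps were filled in first.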