## Process Diagram: AI System Interpretability

### Overview

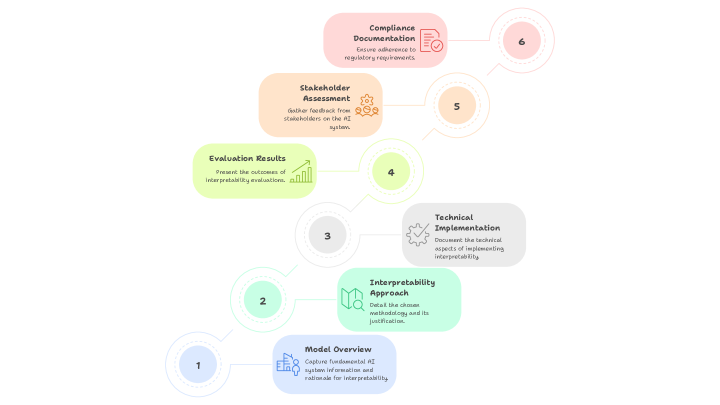

The image is a process diagram outlining the steps for ensuring interpretability in an AI system. It presents a sequential workflow, starting with a model overview and ending with compliance documentation. Each step is represented by a numbered circle connected to a text box describing the action.

### Components

The diagram consists of the following components:

* **Numbered Circles:** Representing the sequential steps in the process (1 through 6).

* **Text Boxes:** Adjacent to each numbered circle, providing a description of the step.

* **Icons:** Each text box contains an icon representing the step.

* **Connecting Lines:** Indicate the flow of the process from one step to the next.

The steps are as follows:

1. **Model Overview:** Capture fundamental AI system information and rationale for interpretability. Icon: Building icon with figures.

2. **Interpretability Approach:** Detail the chosen methodology and its justification. Icon: Map icon.

3. **Technical Implementation:** Document the technical aspects of implementing interpretability. Icon: Gear icon with a checkmark.

4. **Evaluation Results:** Present the outcomes of interpretability evaluations. Icon: Bar graph icon with an upward trend.

5. **Stakeholder Assessment:** Gather feedback from stakeholders on the AI system. Icon: Gear icon with three person icons.

6. **Compliance Documentation:** Ensure adherence to regulatory requirements. Icon: Document icon with a checkmark.
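
The six steps can also be read as an ordered checklist. The following is a minimal sketch of that reading, not something the diagram itself specifies: the `Step` class, the `done` flag, and the `build_workflow` and `next_pending` helpers are hypothetical names introduced for illustration. Only the step titles and descriptions come from the diagram.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Step:
    number: int
    title: str
    description: str
    done: bool = False  # hypothetical completion flag, not shown in the diagram


def build_workflow() -> List[Step]:
    """Return the six steps in the order the diagram presents them."""
    entries = [
        ("Model Overview",
         "Capture fundamental AI system information and rationale for interpretability."),
        ("Interpretability Approach",
         "Detail the chosen methodology and its justification."),
        ("Technical Implementation",
         "Document the technical aspects of implementing interpretability."),
        ("Evaluation Results",
         "Present the outcomes of interpretability evaluations."),
        ("Stakeholder Assessment",
         "Gather feedback from stakeholders on the AI system."),
        ("Compliance Documentation",
         "Ensure adherence to regulatory requirements."),
    ]
    return [Step(i, title, text) for i, (title, text) in enumerate(entries, start=1)]


def next_pending(steps: List[Step]) -> Optional[Step]:
    """Return the lowest-numbered step not yet marked done, or None if all are done."""
    for step in steps:
        if not step.done:
            return step
    return None
```

Calling `next_pending(build_workflow())` on a fresh workflow returns the Model Overview step. Marking steps as done in order advances the result through the sequence, which mirrors the linear flow indicated by the connecting lines.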

### Detailed Analysis

* **Step 1: Model Overview**
    * Text: "Model Overview. Capture fundamental AI system information and rationale for interpretability."
    * Position: Bottom-left.
    * Icon: Building icon with figures.

* **Step 2: Interpretability Approach**
    * Text: "Interpretability Approach. Detail the chosen methodology and its justification."
    * Position: Lower-left, slightly above Step 1.
    * Icon: Map icon.

* **Step 3: Technical Implementation**
    * Text: "Technical Implementation. Document the technical aspects of implementing interpretability."
    * Position: Center-right, slightly above Step 2.
    * Icon: Gear icon with a checkmark.

* **Step 4: Evaluation Results**
    * Text: "Evaluation Results. Present the outcomes of interpretability evaluations."
    * Position: Center-left, slightly above Step 3.
    * Icon: Bar graph icon with an upward trend.

* **Step 5: Stakeholder Assessment**
    * Text: "Stakeholder Assessment. Gather feedback from stakeholders on the AI system."
    * Position: Upper-right, slightly above Step 4.
    * Icon: Gear icon with three person icons.

* **Step 6: Compliance Documentation**
    * Text: "Compliance Documentation. Ensure adherence to regulatory requirements."
    * Position: Top-right.
    * Icon: Document icon with a checkmark.

### Key Observations

* The diagram presents a linear, sequential process.

* Each step builds upon the previous one, starting with foundational information and culminating in compliance.

* The icons provide a visual representation of each step, aiding in quick comprehension.

### Interpretation

The diagram illustrates a structured approach to incorporating interpretability into an AI system. It covers six activities in order: understanding the model, defining a clear methodology, implementing technical solutions, evaluating results, gathering stakeholder feedback, and ensuring compliance. The flow is drawn as strictly linear, with no arrows returning to earlier steps, so the diagram does not itself depict iteration. Evaluation results and stakeholder feedback could plausibly inform revisions to earlier steps in practice, but that reading goes beyond what the image shows.