## Diagram: AI Interpretability Lifecycle

### Overview

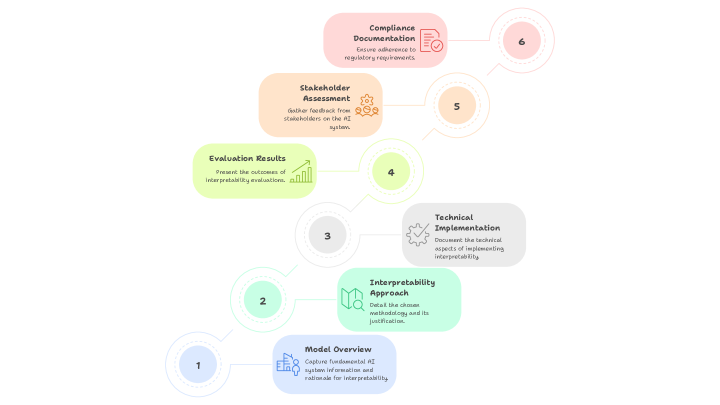

The image depicts a circular diagram illustrating a six-stage lifecycle for AI interpretability. The stages are arranged clockwise, starting at the bottom and proceeding up the left side, across the top, and down to the right. Each stage is represented by a colored circle containing a number, accompanied by a descriptive text box. Curved arrows indicate the flow between stages.

### Components/Axes

The diagram consists of six numbered stages, each with a corresponding text description. There are no explicit axes, but the circular arrangement implies a cyclical process. The stages are:

1. Model Overview

2. Interpretability Approach

3. Technical Implementation

4. Evaluation Results

5. Stakeholder Assessment

6. Compliance Documentation

### Detailed Analysis

The diagram details a process flow with the following stages:

* **Stage 1 (Bottom):** "Model Overview" - Capture fundamental AI system information and rationale for interpretability. Circle color: Light Blue.

* **Stage 2 (Lower-Left):** "Interpretability Approach" - Detail the chosen methodology and its justification. Circle color: Light Green.

* **Stage 3 (Left):** "Technical Implementation" - Document the technical aspects of implementing interpretability. Circle color: Yellow.

* **Stage 4 (Upper-Left):** "Evaluation Results" - Present the outcomes of interpretability evaluation. Circle color: Orange.

* **Stage 5 (Upper-Right):** "Stakeholder Assessment" - Gather feedback from stakeholders on the AI system. Circle color: Peach.

* **Stage 6 (Right):** "Compliance Documentation" - Ensure adherence to regulatory requirements. Circle color: Light Red.

The connecting lines are curved arrows indicating the sequential flow from Stage 1 to Stage 6, and implicitly back to Stage 1, suggesting a continuous improvement cycle. Each stage also has a small icon associated with it.
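The stage order and the implied return to Stage 1 can be sketched as a small data structure. This is a minimal illustration, not something shown in the diagram: the names `Stage`, `STAGES`, and `next_stage` are hypothetical, and the wrap-around from Stage 6 to Stage 1 encodes the implicit loop described above rather than an arrow explicitly drawn in the image.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    """One stage of the lifecycle as described in the diagram (hypothetical type)."""
    number: int
    title: str
    position: str
    color: str


# Titles, positions, and colors are taken from the stage list above.
STAGES = [
    Stage(1, "Model Overview", "Bottom", "Light Blue"),
    Stage(2, "Interpretability Approach", "Lower-Left", "Light Green"),
    Stage(3, "Technical Implementation", "Left", "Yellow"),
    Stage(4, "Evaluation Results", "Upper-Left", "Orange"),
    Stage(5, "Stakeholder Assessment", "Upper-Right", "Peach"),
    Stage(6, "Compliance Documentation", "Right", "Light Red"),
]


def next_stage(current: Stage) -> Stage:
    """Return the stage that follows `current`, wrapping from Stage 6 to Stage 1.

    The wrap-around is an assumption based on the implied cycle.
    """
    index = STAGES.index(current)
    return STAGES[(index + 1) % len(STAGES)]


if __name__ == "__main__":
    stage = STAGES[0]
    # Walk one full cycle, ending back at Stage 1.
    for _ in range(len(STAGES) + 1):
        print(f"{stage.number}. {stage.title} ({stage.position}, {stage.color})")
        stage = next_stage(stage)
```

Calling `next_stage(STAGES[5])` returns the "Model Overview" stage, which mirrors the continuous cycle the arrows suggest.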

### Key Observations

The diagram emphasizes a cyclical process, highlighting the importance of continuous evaluation and improvement in AI interpretability. The inclusion of "Stakeholder Assessment" and "Compliance Documentation" indicates a focus on both user acceptance and regulatory adherence. The diagram does not contain any numerical data or specific metrics.

### Interpretation

This diagram appears to present a framework for documenting interpretability when developing and deploying AI systems. It suggests that interpretability is not a one-time task but an ongoing process integrated throughout the AI lifecycle. The stages are logically ordered: understanding the model itself, defining an interpretability approach, implementing it technically, evaluating its effectiveness, gathering stakeholder feedback, and finally ensuring compliance. The cyclical layout implies that the process should be revisited and refined as the AI system evolves and new insights are gained.

The inclusion of stakeholder assessment matters because the usefulness of an explanation depends on the needs and understanding of the people receiving it. The emphasis on compliance documentation reflects growing regulatory scrutiny of AI systems and the need for transparency and accountability. The diagram is a high-level overview and gives no detail on the methods or tools to be used in each stage.