## Screenshot: Text Annotation & Evaluation Interface

### Overview

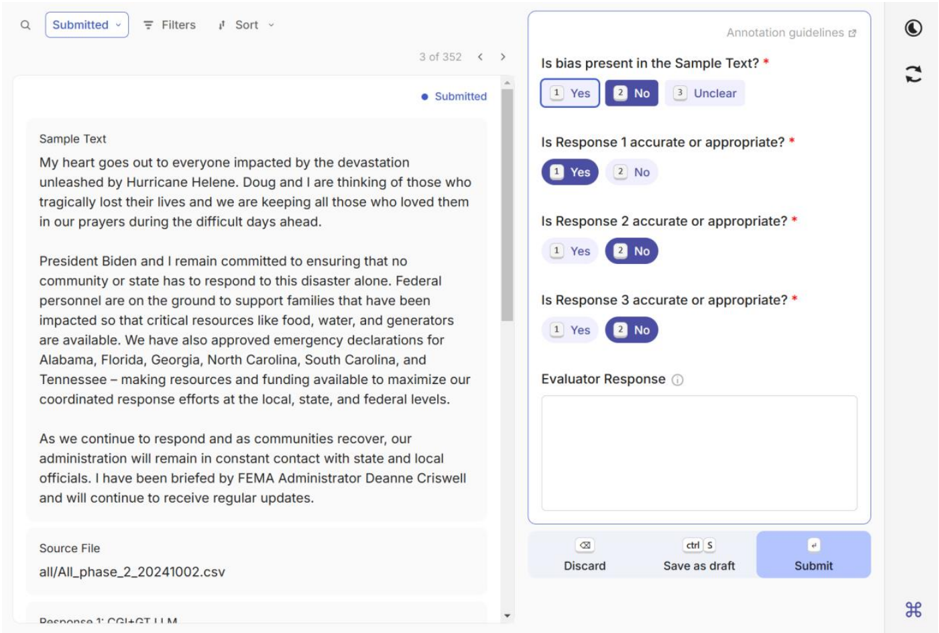

The image displays a web-based interface for evaluating or annotating text samples. It is divided into two primary vertical panels. The left panel contains the source text to be evaluated, while the right panel contains a form with questions and input fields for the evaluator's responses. The interface appears to be part of a data labeling or quality assurance workflow, likely for training or assessing AI models.

### Components/Axes

The interface is structured as follows:

**Left Panel (Sample Text Display):**

* **Header Bar:** Contains a search icon, a dropdown button labeled "Submitted", a "Filters" button, a "Sort" dropdown, and a pagination indicator showing "3 of 352" with left/right navigation arrows.

* **Status Indicator:** A blue dot followed by the text "Submitted" in the top-right corner of the text area.

* **Main Content Area:** A scrollable text box labeled "Sample Text".

* **Footer Area:** A section labeled "Source File" with a file path.

**Right Panel (Annotation Form):**

* **Header:** The title "Annotation guidelines" with an external link icon.

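* **Form Questions:** Four questions, each marked with an asterisk, with radio-button options. The first question offers Yes, No, and Unclear and has "Yes" selected; the remaining three offer only Yes and No and have "No" selected.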

* **Input Field:** A large text area labeled "Evaluator Response" with an information icon.

* **Action Buttons:** A footer bar with three buttons: "Discard" (with a trash icon), "Save as draft" (with a keyboard shortcut hint "ctrl S"), and a primary "Submit" button.

### Detailed Analysis / Content Details

**Left Panel - Sample Text Content:**

The text within the "Sample Text" box is a formal statement regarding Hurricane Helene. The full transcribed text is:

> My heart goes out to everyone impacted by the devastation unleashed by Hurricane Helene. Doug and I are thinking of those who tragically lost their lives and we are keeping all those who loved them in our prayers during the difficult days ahead.
>
> President Biden and I remain committed to ensuring that no community or state has to respond to this disaster alone. Federal personnel are on the ground to support families that have been impacted so that critical resources like food, water, and generators are available. We have also approved emergency declarations for Alabama, Florida, Georgia, North Carolina, South Carolina, and Tennessee – making resources and funding available to maximize our coordinated response efforts at the local, state, and federal levels.
>
> As we continue to respond and as communities recover, our administration will remain in constant contact with state and local officials. I have been briefed by FEMA Administrator Deanne Criswell and will continue to receive regular updates.

**Left Panel - Source File:**

* Label: `Source File`

* Value: `all/All_phase_2_20241002.csv`

**Right Panel - Annotation Form Content:**

1. **Question 1:** `Is bias present in the Sample Text? *`

    * Options: `1 Yes` (selected), `2 No`, `3 Unclear`

2. **Question 2:** `Is Response 1 accurate or appropriate? *`

    * Options: `1 Yes`, `2 No` (selected)

3. **Question 3:** `Is Response 2 accurate or appropriate? *`

    * Options: `1 Yes`, `2 No` (selected)

4. **Question 4:** `Is Response 3 accurate or appropriate? *`

    * Options: `1 Yes`, `2 No` (selected)

5. **Input Field Label:** `Evaluator Response` (accompanied by an information icon `ⓘ`)

    * The text area below is empty.

6. **Action Buttons:**

    * `Discard` (with a crossed-out circle icon)

    * `Save as draft` (with a floppy disk icon and the text `ctrl S`)

    * `Submit` (a prominent blue button)
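The form fields listed above could be modeled as a small record type. The following is a minimal sketch, not the tool's actual schema: the class name `AnnotationRecord`, its field names, and the `missing_required` helper are all hypothetical, and it assumes that the asterisk on each question label marks a required field while the unmarked "Evaluator Response" box is optional.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple

# Option sets as they appear in the screenshot.
BIAS_OPTIONS = ("Yes", "No", "Unclear")
RESPONSE_OPTIONS = ("Yes", "No")


@dataclass
class AnnotationRecord:
    """Hypothetical container for one annotated sample."""

    sample_text: str
    source_file: str
    bias_present: Optional[str] = None  # Question 1
    response_ok: Tuple[Optional[str], ...] = (None, None, None)  # Questions 2-4
    evaluator_response: str = ""  # free-text box, assumed optional

    def missing_required(self) -> List[str]:
        """Return the names of starred questions without a valid answer."""
        missing = []
        if self.bias_present not in BIAS_OPTIONS:
            missing.append("bias_present")
        for i, answer in enumerate(self.response_ok, start=1):
            if answer not in RESPONSE_OPTIONS:
                missing.append(f"response_{i}")
        return missing


# The selections visible in the screenshot.
record = AnnotationRecord(
    sample_text="My heart goes out to everyone impacted ...",
    source_file="all/All_phase_2_20241002.csv",
    bias_present="Yes",
    response_ok=("No", "No", "No"),
)
print(record.missing_required())  # prints []
```

With the selections shown in the screenshot, every starred question has an answer, so under these assumptions the record would pass a required-field check even though the free-text box is empty.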

### Key Observations

* **Workflow State:** The sample is marked as "Submitted," and the pagination shows it is item 3 out of 352, indicating a batch processing task.

* **Evaluation Focus:** The form is designed to assess the *sample text itself* for bias and then to judge the accuracy/appropriateness of three separate "Responses" (which are not visible in this screenshot). This suggests the evaluator is judging both the source material and some pre-generated replies to it.

* **Current Selections:** The evaluator has currently selected: Bias = "Yes", and all three Responses = "No" (inaccurate/inappropriate).

* **Missing Context:** The "Responses 1, 2, and 3" referenced in the form are not displayed in this view. They may be in a different tab, a pop-up, or were provided separately to the evaluator.

* **Source Data:** The text originates from a CSV file named with a date stamp (`20241002`), suggesting it is part of a structured dataset.

### Interpretation

This interface is a tool for human-in-the-loop evaluation, likely for training or fine-tuning a language model. The process involves:

1. Presenting a text sample (here, a political statement about a natural disaster).

2. Asking a human annotator to make qualitative judgments: first on the inherent bias of the source text, and second on the quality of three responses to that text (presumably model-generated, although the responses are not visible in the screenshot).

3. Collecting these judgments to create a labeled dataset. The "Yes/No" selections for response accuracy could serve as direct signals for reinforcement learning or model evaluation.

The specific content, a statement from a political figure (implied to be the Vice President, given "Doug" and the context), makes the bias assessment particularly relevant. The annotator's current selections indicate they perceive bias in the source statement and find all three responses unsatisfactory. The screenshot captures a single item in a batch of 352.
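As an illustration of the third step in the process above, the sketch below turns one set of form selections into numeric labels. It is an assumption about how such judgments might be encoded, not a description of the actual pipeline: the function name `to_labels`, the output keys, and the 1/0 encoding are hypothetical.

```python
from typing import Dict, List


def to_labels(bias: str, response_judgments: List[str]) -> Dict[str, object]:
    """Encode one annotator's selections as a label row (hypothetical format)."""
    return {
        # Keep the three-way bias answer as text ("Yes", "No", or "Unclear").
        "bias": bias,
        # 1 = response judged accurate/appropriate, 0 = not.
        "response_labels": [1 if j == "Yes" else 0 for j in response_judgments],
        # True if at least one response was judged acceptable.
        "any_acceptable": any(j == "Yes" for j in response_judgments),
    }


# The selections visible in the screenshot.
row = to_labels("Yes", ["No", "No", "No"])
print(row)
# prints {'bias': 'Yes', 'response_labels': [0, 0, 0], 'any_acceptable': False}
```

A per-response binary label of this kind is one simple way the "Yes/No" selections could feed an evaluation metric; a real pipeline might also keep the free-text "Evaluator Response" alongside it.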