TECHNICAL ASSET FINGERPRINT

4045e07b61b5746ee194c3a1

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Game Evaluation: Mastermind with Agentic and Deductive Reasoning

### Overview

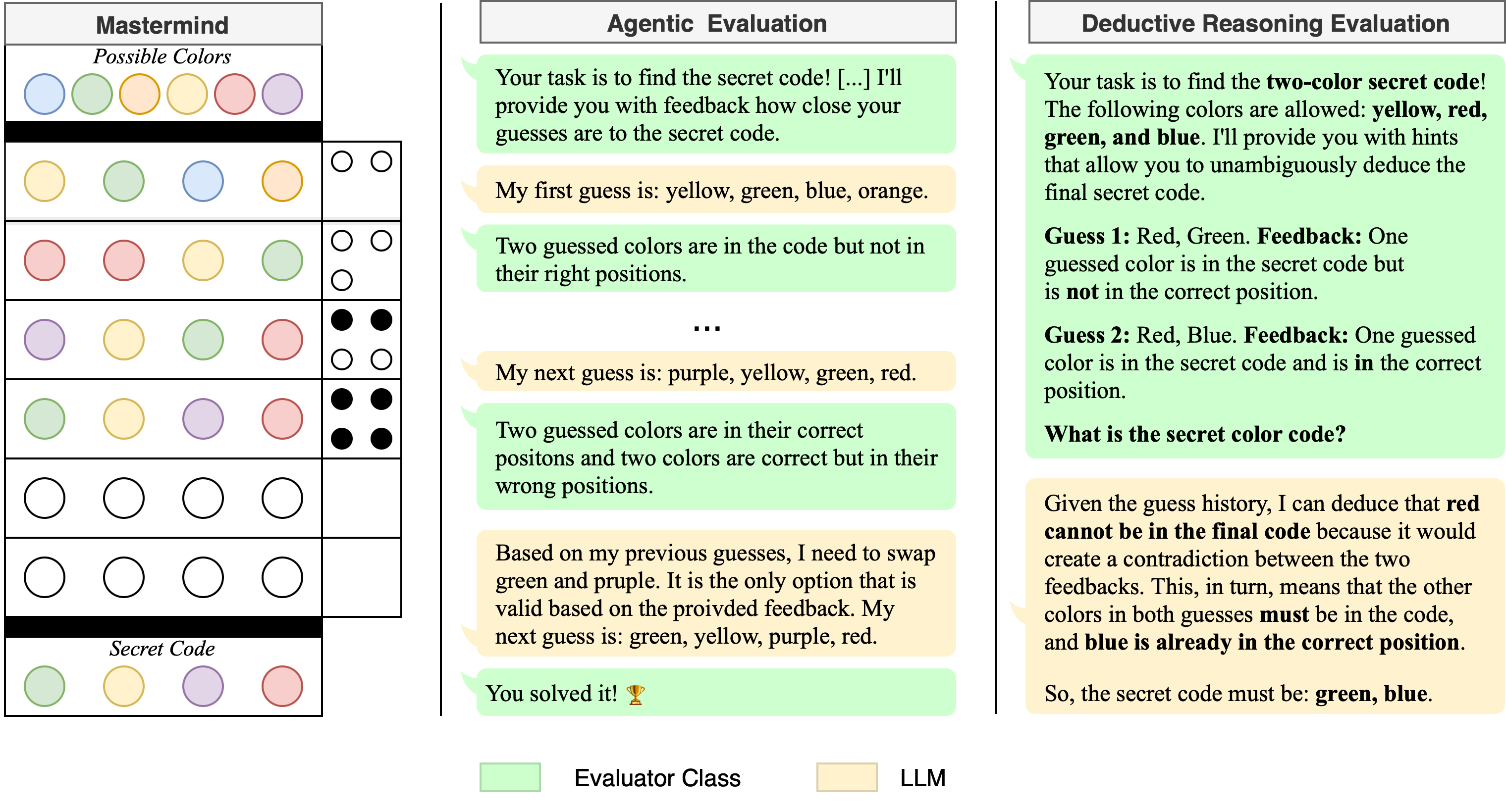

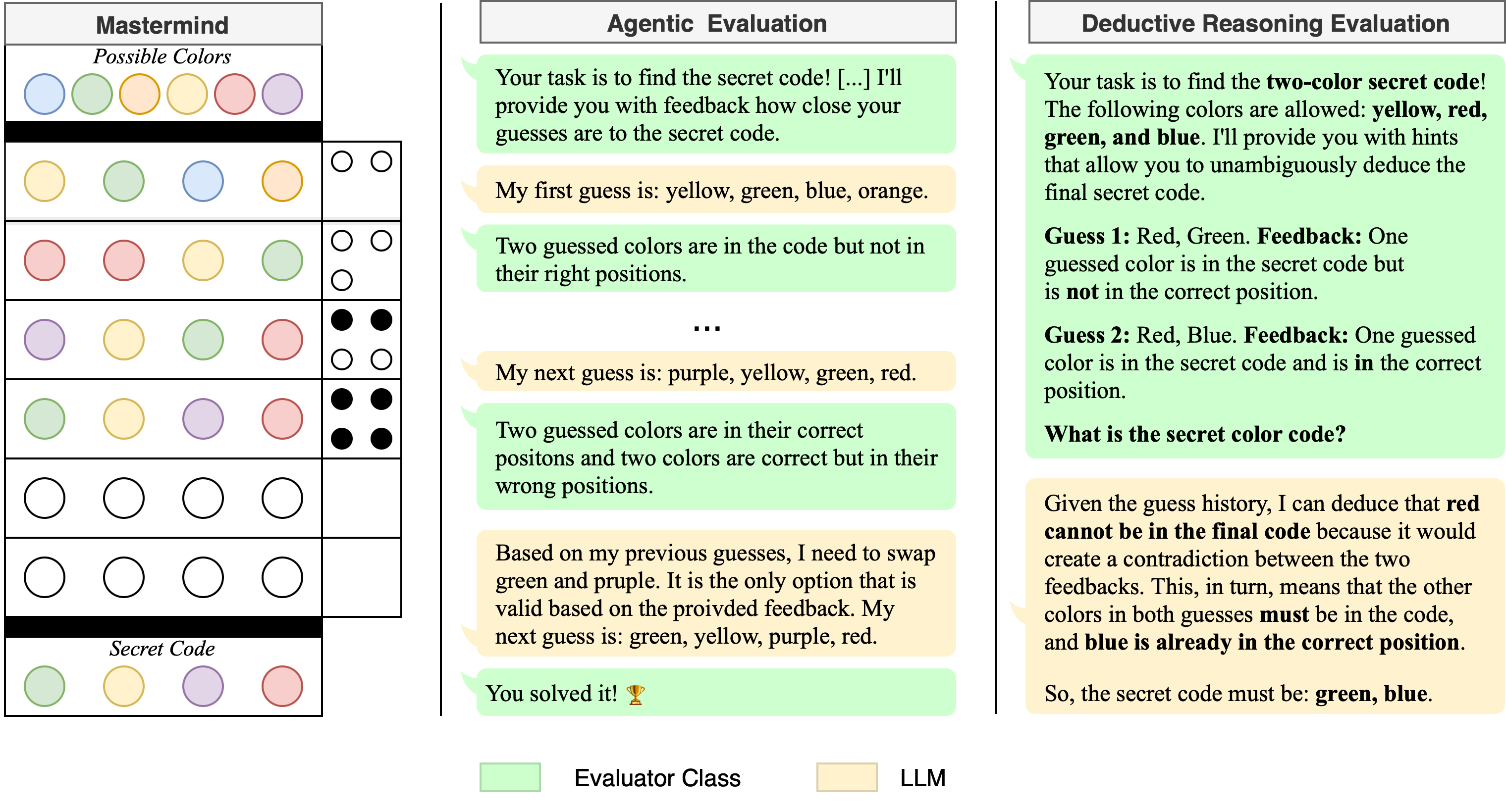

The image presents three distinct sections: a Mastermind game board, an "Agentic Evaluation" in which an LLM plays the game turn by turn, and a "Deductive Reasoning Evaluation" in which an LLM solves a simplified puzzle from given hints. The Mastermind section shows a board with four guesses, their feedback, and the secret code. The Agentic Evaluation shows the LLM guessing and receiving feedback until it solves the game. The Deductive Reasoning Evaluation shows the LLM deducing a two-color code from two guesses and their feedback.

### Components/Axes

**Mastermind Section (Left)**

* **Title:** Mastermind

* **Subtitle:** Possible Colors

* Colors: Blue, Green, Yellow, Orange, Red, Purple

* **Game Board:**

* Rows 1-4: Guesses with feedback.

* Rows 5-6: Empty guesses.

* Row 7: Secret Code.

* **Feedback Mechanism:** Black and white dots. A black dot marks a correct color in the correct position; a white dot marks a correct color in the wrong position.

* **Secret Code:** Green, Yellow, Purple, Red

**Agentic Evaluation Section (Center)**

* **Title:** Agentic Evaluation

* **Content:** A series of text blocks representing the AI's thought process and guesses.

* **Color Coding:** Green for "Evaluator Class" and Yellow for "LLM" (Large Language Model).

**Deductive Reasoning Evaluation Section (Right)**

* **Title:** Deductive Reasoning Evaluation

* **Content:** A series of text blocks representing the AI's deductive reasoning process.

* **Color Coding:** Green for "Evaluator Class" and Yellow for "LLM" (Large Language Model).

**Legend (Bottom)**

* Evaluator Class: Green

* LLM: Yellow

### Detailed Analysis

**Mastermind Game Board**

* **Possible Colors:** The top row displays the possible colors for the game: blue, green, yellow, orange, red, and purple.

* **Guess 1:** Yellow, Green, Blue, Orange. Feedback: Two white dots, indicating two colors are correct but in the wrong position.

* **Guess 2:** Red, Red, Red, Red. Feedback: As described, this guess would score one black dot against the secret code (red in position 4); four black dots would mean the code was already solved.

* **Guess 3:** Purple, Yellow, Green, Red. Feedback: Two black dots and two white dots, indicating two colors in the correct position and two correct colors in the wrong position.

* **Guess 4:** Green, Yellow, Purple, Red. Feedback: Four black dots, indicating four colors are correct and in the correct position.

* **Secret Code:** The secret code is revealed to be Green, Yellow, Purple, Red.
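The black/white feedback rule can be checked mechanically. Below is a minimal sketch, assuming standard Mastermind scoring; the function name `score` and the tuple representation are illustrative choices, not taken from the image.

```python
from collections import Counter

def score(secret, guess):
    """Return (black, white) peg counts for a guess against a secret code."""
    # Black: same color in the same position.
    black = sum(s == g for s, g in zip(secret, guess))
    # Total color matches regardless of position (multiset intersection).
    total = sum((Counter(secret) & Counter(guess)).values())
    # White: correct colors that are not already counted as black.
    return black, total - black

secret = ("green", "yellow", "purple", "red")
print(score(secret, ("yellow", "green", "blue", "orange")))  # (0, 2)
print(score(secret, ("purple", "yellow", "green", "red")))   # (2, 2)
print(score(secret, secret))                                 # (4, 0)
```

Only a guess identical to the secret code can earn four black pegs, which is a quick sanity check on any row of the board.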

**Agentic Evaluation**

The AI's thought process is presented as a series of statements:

* **Initial Task:** The AI is tasked with finding the secret code and will receive feedback on its guesses.

* **First Guess:** Yellow, Green, Blue, Orange.

* **Feedback:** Two guessed colors are in the code but not in their right positions.

* **Next Guess:** Purple, Yellow, Green, Red.

* **Feedback:** Two guessed colors are in their correct positions and two colors are correct but in their wrong positions.

* **Deduction:** Based on previous guesses, the AI needs to swap green and purple.

* **Next Guess:** Green, Yellow, Purple, Red.

* **Result:** The AI solves the game.
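The feedback-driven narrowing in this dialogue can be sketched as a filter over all possible codes. This is an illustrative sketch, not the evaluated model's method: it assumes standard scoring, assumes repeated colors are allowed, and uses only the two feedback lines listed above (`COLORS`, `history`, and `score` are hypothetical names).

```python
from collections import Counter
from itertools import product

COLORS = ("blue", "green", "yellow", "orange", "red", "purple")

def score(secret, guess):
    """Return (black, white) peg counts for a guess against a secret code."""
    black = sum(s == g for s, g in zip(secret, guess))
    total = sum((Counter(secret) & Counter(guess)).values())
    return black, total - black

# (guess, (black, white)) pairs taken from the two feedback lines above.
history = [
    (("yellow", "green", "blue", "orange"), (0, 2)),
    (("purple", "yellow", "green", "red"), (2, 2)),
]

# Keep every code that would have produced exactly the observed feedback.
candidates = [
    code for code in product(COLORS, repeat=4)
    if all(score(code, guess) == fb for guess, fb in history)
]
print(len(candidates))  # 4
print(("green", "yellow", "purple", "red") in candidates)  # True
```

Under these assumptions, four codes remain consistent with the two listed feedback lines, and the winning guess is one of them. Any turns not listed here would have to supply the extra constraints that make swapping green and purple the only valid move.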

**Deductive Reasoning Evaluation**

The AI's deductive reasoning process is presented as a series of statements:

* **Initial Task:** The AI is tasked with finding the two-color secret code, with allowed colors being yellow, red, green, and blue.

* **Guess 1:** Red, Green. Feedback: One guessed color is in the secret code but is not in the correct position.

* **Guess 2:** Red, Blue. Feedback: One guessed color is in the secret code and is in the correct position.

* **Deduction:** Red cannot be in the final code. The other colors in both guesses (green and blue) must be in the code, and blue is already in the correct position.

* **Solution:** The secret code is green, blue.

### Key Observations

* The Mastermind game is successfully solved in four guesses.

* The Agentic Evaluation demonstrates an AI's ability to solve the Mastermind game through iterative guessing and feedback analysis.

* The Deductive Reasoning Evaluation showcases an AI's ability to solve a simplified Mastermind game using logical deduction.

### Interpretation

The image illustrates two ways of evaluating an LLM on Mastermind. The game board is a visual record of guesses and feedback, and its first, third, and fourth rows match the guesses in the Agentic Evaluation dialogue. The Agentic Evaluation highlights the use of feedback to refine guesses over several turns, while the Deductive Reasoning Evaluation emphasizes logical deduction from fixed hints to arrive at the solution.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED


## Diagram: Mastermind Evaluation Comparison

### Overview

This diagram presents a comparison of two evaluation methods – Agentic Evaluation and Deductive Reasoning Evaluation – in the context of the Mastermind game. The Mastermind section on the left displays the possible colors and the secret code. The diagram illustrates the process of guessing the secret code and the feedback provided, comparing how an agentic approach and a deductive reasoning approach perform.

### Components/Axes

The diagram is divided into three main columns:

1. **Mastermind Possible Colors:** Displays the possible colors for the Mastermind game (yellow, red, green, blue, purple, orange). The bottom row shows the "Secret Code".

2. **Agentic Evaluation:** Describes the process of an agent attempting to solve the code through iterative guesses and feedback.

3. **Deductive Reasoning Evaluation:** Demonstrates how deductive reasoning can be used to solve the code with minimal guesses, given feedback.

A legend at the bottom indicates the color coding:

* **Green:** Evaluator Class

* **Yellow:** LLM (Large Language Model)

### Content Details

**Mastermind Possible Colors:**

* Six colors are available: yellow, red, green, blue, purple, and orange.

* The "Secret Code" consists of four colors: green, yellow, purple, and red.

**Agentic Evaluation:**

* **Task:** "Your task is to find the secret code! [...] I’ll provide you with feedback how close your guesses are to the secret code."

* **First Guess:** yellow, green, blue, orange.

* **Feedback 1:** "Two guessed colors are in the code but not in their right positions."

* **Second Guess:** purple, yellow, green, red.

* **Feedback 2:** "Two guessed colors are in their correct positions and two colors are correct but in their wrong positions."

* **Next Guess:** green, yellow, purple, red.

* **Outcome:** "You solved it!"

**Deductive Reasoning Evaluation:**

* **Task:** "Your task is to find the two-color secret code! The following colors are allowed: yellow, red, green, and blue. I’ll provide you with hints that allow you to unambiguously deduce the final secret code."

* **Guess 1:** Red, Green.

* **Feedback 1:** "One guessed color is in the secret code but is not in the correct position."

* **Guess 2:** Red, Blue.

* **Feedback 2:** "One guessed color is in the secret code and is in the correct position."

* **Question:** "What is the secret color code?"

* **Deduction:** "Given the guess history, I can deduce that red cannot be in the final code because it would create a contradiction between the two feedbacks. This, in turn, means that the other colors in both guesses must be in the code, and blue is already in the correct position."

* **Solution:** "So, the secret code must be: green, blue."
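The deduction can be confirmed by brute force over the 16 possible two-color codes. This is a sketch under two assumptions: each hint is read as a complete peg count (the first as 0 black and 1 white, the second as 1 black and 0 white), and repeated colors are allowed. The names `ALLOWED`, `hints`, and `score` are illustrative.

```python
from collections import Counter
from itertools import product

ALLOWED = ("yellow", "red", "green", "blue")

def score(secret, guess):
    """Return (black, white) peg counts for a guess against a secret code."""
    black = sum(s == g for s, g in zip(secret, guess))
    total = sum((Counter(secret) & Counter(guess)).values())
    return black, total - black

# Hints from the dialogue, encoded as (guess, (black, white)).
hints = [
    (("red", "green"), (0, 1)),  # one color present, wrong position
    (("red", "blue"), (1, 0)),   # one color present, correct position
]

solutions = [
    code for code in product(ALLOWED, repeat=2)
    if all(score(code, guess) == fb for guess, fb in hints)
]
print(solutions)  # [('green', 'blue')]
```

Every code with red in the first position earns a black peg on the first guess, and red in the second position leaves the second hint without a black peg, so only green, blue survives.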

### Key Observations

* The Agentic Evaluation demonstrates an iterative process of guessing and refining based on feedback. It requires multiple guesses to arrive at the solution.

* The Deductive Reasoning Evaluation showcases a more constrained setup: the model is given two guesses with their feedback as hints and derives the code in a single answer by logically eliminating possibilities.

* The Deductive Reasoning example simplifies the color palette to yellow, red, green, and blue.

* The Agentic Evaluation uses all six colors.

### Interpretation

The diagram highlights the difference between two problem-solving approaches. The Agentic Evaluation represents a trial-and-error method, akin to how a reinforcement learning agent might explore a solution space. It relies on repeated attempts and learning from feedback. The Deductive Reasoning Evaluation, on the other hand, demonstrates the power of logical deduction. By carefully analyzing the feedback, it can systematically eliminate incorrect possibilities and arrive at the correct solution with minimal effort.

The contrast between the two evaluations suggests that deductive reasoning is more effective for solving the Mastermind game, particularly when the number of possible colors is limited. The Agentic Evaluation, while eventually successful, requires more steps and is less efficient. The diagram effectively illustrates the benefits of a structured, logical approach versus a more exploratory one. The simplification of the color palette in the Deductive Reasoning example likely contributes to its efficiency, reducing the complexity of the search space.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Diagram: Comparative Evaluation of Mastermind Game Solving Approaches

### Overview

The image is a three-panel diagram comparing two methods for solving a Mastermind-style code-breaking game. The left panel shows a visual game board, the middle panel shows an "Agentic Evaluation" dialogue, and the right panel shows a "Deductive Reasoning Evaluation" dialogue. The diagram illustrates how a Large Language Model (LLM) interacts with an evaluator to solve the puzzle using different reasoning strategies.

### Components/Axes

The image is divided into three vertical panels of roughly equal width.

**1. Left Panel: Mastermind Game Board**

* **Title:** "Mastermind" (top center).

* **Sub-section 1: "Possible Colors"** (top). Displays six colored circles in a row: light blue, light green, yellow, orange, red, and purple.

* **Sub-section 2: Guess Grid.** A grid with 4 columns (for code positions) and 6 rows (for guesses). The first four rows contain guesses. The last two rows are empty (white circles).

* **Row 1 (Guess 1):** Yellow, Green, Blue, Orange. Feedback (right side): Two white circles (indicating correct color, wrong position).

* **Row 2 (Guess 2):** Red, Red, Yellow, Green. Feedback: Two white circles, one black circle (indicating correct color and position).

* **Row 3 (Guess 3):** Purple, Yellow, Green, Red. Feedback: Two black circles, two white circles.

* **Row 4 (Guess 4):** Green, Yellow, Purple, Red. Feedback: Four black circles (indicating the code is solved).

* **Sub-section 3: "Secret Code"** (bottom). Displays the solution: Green, Yellow, Purple, Red.

* **Legend (Bottom Center):** A light green rectangle labeled "Evaluator Class" and a light yellow rectangle labeled "LLM".

**2. Middle Panel: Agentic Evaluation**

* **Title:** "Agentic Evaluation" (top center).

* **Content:** A simulated chat dialogue between the "Evaluator Class" (green speech bubbles) and the "LLM" (yellow speech bubbles).

* **Evaluator (Green):** "Your task is to find the secret code! [...] I'll provide you with feedback how close your guesses are to the secret code."

* **LLM (Yellow):** "My first guess is: yellow, green, blue, orange."

* **Evaluator (Green):** "Two guessed colors are in the code but not in their right positions."

* **[Ellipsis "..." indicating omitted steps]**

* **LLM (Yellow):** "My next guess is: purple, yellow, green, red."

* **Evaluator (Green):** "Two guessed colors are in their correct positons and two colors are correct but in their wrong positions." (Note: "positons" is a typo in the image).

* **LLM (Yellow):** "Based on my previous guesses, I need to swap green and prupule. It is the only option that is valid based on the proivded feedback. My next guess is: green, yellow, purple, red." (Note: "prupule" and "proivded" are typos in the image).

* **Evaluator (Green):** "You solved it! 🏆"
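The evaluator side of this dialogue can be sketched as a small class that holds the secret code and turns peg counts into text. The class name `Evaluator`, its method, and the numeric sentence template are hypothetical; the image does not show how the actual evaluator is implemented or how it phrases every case.

```python
from collections import Counter

class Evaluator:
    """Hypothetical evaluator: holds the secret and phrases peg counts as text."""

    def __init__(self, secret):
        self.secret = tuple(secret)

    def feedback(self, guess):
        guess = tuple(guess)
        # Correct color in the correct position.
        black = sum(s == g for s, g in zip(self.secret, guess))
        # Correct colors overall, minus those already in position.
        total = sum((Counter(self.secret) & Counter(guess)).values())
        white = total - black
        if black == len(self.secret):
            return "You solved it!"
        return (f"{black} guessed colors are in their correct positions and "
                f"{white} colors are correct but in their wrong positions.")

evaluator = Evaluator(["green", "yellow", "purple", "red"])
for guess in (
    ("yellow", "green", "blue", "orange"),
    ("purple", "yellow", "green", "red"),
    ("green", "yellow", "purple", "red"),
):
    print(evaluator.feedback(guess))
```

Keeping the secret inside the evaluator mirrors the dialogue, where the LLM only ever sees the textual feedback and never the code itself.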

**3. Right Panel: Deductive Reasoning Evaluation**

* **Title:** "Deductive Reasoning Evaluation" (top center).

* **Content:** A simulated chat dialogue with a more constrained, logic-puzzle format.

* **Evaluator (Green):** "Your task is to find the **two-color secret code!** The following colors are allowed: **yellow, red, green, and blue.** I'll provide you with hints that allow you to unambiguously deduce the final secret code."

* **Evaluator (Green):** "**Guess 1: Red, Green. Feedback:** One guessed color is in the secret code but is **not** in the correct position."

* **Evaluator (Green):** "**Guess 2: Red, Blue. Feedback:** One guessed color is in the secret code and is **in** the correct position."

* **Evaluator (Green):** "**What is the secret color code?**"

* **LLM (Yellow):** "Given the guess history, I can deduce that **red cannot be in the final code** because it would create a contradiction between the two feedbacks. This, in turn, means that the other colors in both guesses **must** be in the code, and **blue is already in the correct position.** So, the secret code must be: **green, blue.**"

### Detailed Analysis

* **Game Logic (Left Panel):** The Mastermind board uses a standard feedback system: a black circle means a correct color in the correct position, a white circle means a correct color in the wrong position. The secret code is four colors long. The LLM successfully solves it in four guesses.

* **Agentic Evaluation Process (Middle Panel):** This shows an interactive, trial-and-error approach. The LLM makes guesses, receives descriptive feedback about color presence and position, and iteratively refines its next guess based on that feedback. The process includes a logical deduction step ("swap green and purple") before the final correct guess.

* **Deductive Reasoning Process (Right Panel):** This presents a more formal logic puzzle. The constraints are tighter (two-color code, four allowed colors). The feedback is phrased to enable pure deduction. The LLM's response demonstrates deductive reasoning: it identifies a contradiction if Red were in the code, thereby eliminating it and logically concluding the code must be Green and Blue, with Blue in the correct (second) position.

### Key Observations

1. **Two Distinct Evaluation Paradigms:** The diagram contrasts an open-ended, agentic interaction (middle) with a closed, deductive logic puzzle (right).

2. **Feedback Complexity:** The Agentic Evaluation provides richer, more natural language feedback ("in the code but not in their right positions"). The Deductive Reasoning Evaluation provides minimal, binary-style feedback ("in the correct position" / "not in the correct position") designed for unambiguous logic.

3. **LLM Reasoning Styles:** The LLM adapts its reasoning to the task. In the agentic task, it uses iterative refinement. In the deductive task, it uses contradiction and elimination.

4. **Visual vs. Textual Representation:** The left panel provides a complete visual record of the game state, while the middle and right panels provide a textual transcript of the reasoning process. The visual board in the left panel corresponds to the Agentic Evaluation dialogue.

### Interpretation

This diagram serves as a technical illustration for evaluating AI reasoning capabilities. It demonstrates how the same underlying concept (solving a color code game) can be framed as two different types of cognitive tasks:

1. **Agentic Problem-Solving:** This tests an AI's ability to engage in a multi-turn dialogue, interpret nuanced feedback, maintain context over a long interaction, and perform iterative hypothesis testing. It mimics a more human-like, exploratory problem-solving approach.

2. **Deductive Reasoning:** This tests an AI's ability to perform formal logic, handle constraints, and derive a unique solution from a limited set of premises. It evaluates pure logical deduction without the noise of iterative guessing.

The inclusion of the visual Mastermind board grounds the abstract dialogues in a concrete, familiar game. The typos in the Agentic Evaluation panel ("positons," "prupule," "proivded") may be intentional to show the model working with imperfect input or could be artifacts of the diagram's creation. Overall, the image argues that comprehensive AI evaluation requires testing across a spectrum of reasoning styles, from flexible agentic interaction to rigid deductive logic.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Screenshot: Mastermind Game Interface with Evaluation Scenarios

### Overview

The image depicts a digital Mastermind game interface divided into three sections:

1. **Mastermind** (left): Game board with color circles and secret code

2. **Agentic Evaluation** (center): Dialogue showing iterative guessing and feedback

3. **Deductive Reasoning Evaluation** (right): Logical deduction process with color elimination

### Components/Axes

#### Mastermind Section

- **Possible Colors Legend**:

- Blue (circle)

- Green (circle)

- Orange (circle)

- Yellow (circle)

- Red (circle)

- Purple (circle)

- **Game Board**:

- 6 rows of 4 circles each (guesses)

- Secret code row at bottom: Green, Yellow, Purple, Red

#### Agentic Evaluation Section

- **Dialogue Text**:

- "Your task is to find the secret code! [...] I'll provide feedback..."

- Guess 1: Yellow, Green, Blue, Orange → Feedback: 2 correct colors (not in position)

- Guess 2: Purple, Yellow, Green, Red → Feedback: 2 correct positions, 2 correct colors (wrong positions)

- Final guess: Green, Yellow, Purple, Red → "You solved it!"

#### Deductive Reasoning Evaluation Section

- **Dialogue Text**:

- "Your task is to find the two-color secret code! Allowed colors: Yellow, Red, Green, Blue"

- Guess 1: Red, Green → Feedback: 1 correct color (wrong position)

- Guess 2: Red, Blue → Feedback: 1 correct color (correct position)

- Final deduction: "Secret code must be: Green, Blue"

### Detailed Analysis

#### Mastermind Section

- Secret code: Green (position 1), Yellow (position 2), Purple (position 3), Red (position 4)

- Guess rows show iterative attempts with feedback circles (black = correct color/position, white = correct color/wrong position, gray = incorrect color)

#### Agentic Evaluation

- **Guess 1**: Yellow, Green, Blue, Orange

- Feedback: 2 correct colors (not in position)

- **Guess 2**: Purple, Yellow, Green, Red

- Feedback: 2 correct positions (positions 2 and 4), 2 correct colors (positions 1 and 3)

- **Resolution**: Final guess matches secret code

#### Deductive Reasoning Evaluation

- **Color Elimination**:

- Red excluded from final code due to contradiction between feedbacks

- Green and Blue confirmed through logical deduction

### Key Observations

1. **Color Positioning**:

- Green appears in position 1 in both secret code and deductive conclusion

- Blue confirmed in position 2 of the two-color code: the second hint marks one color as correctly placed, and red is ruled out

2. **Feedback Patterns**:

- Agentic Evaluation uses positional feedback to refine guesses

- Deductive section employs logical contradiction to eliminate possibilities

3. **Color Distribution**:

- Green, Yellow, Purple, and Red all appear in the four-color secret code; Blue and Orange from the first guess do not

- Red is eliminated only in the two-color deductive puzzle, where keeping it would contradict the two hints

### Interpretation

This interface demonstrates two AI evaluation approaches:

1. **Agentic Evaluation**:

- Shows iterative guessing with explicit feedback loops

- Highlights the importance of positional accuracy in code-breaking

2. **Deductive Reasoning**:

- Emphasizes logical elimination over trial-and-error

- Reveals how contradictions in feedback can invalidate color candidates

The secret code (Green, Yellow, Purple, Red) is the ground truth for the Mastermind board and the Agentic Evaluation; the Deductive Reasoning puzzle has its own two-color code (Green, Blue). The Agentic dialogue shows three guesses, while the Deductive puzzle supplies two guesses as hints and asks for a single logical inference. The interface contrasts interactive, feedback-driven guessing with reasoning from fixed hints.
