## Horizontal Bar Chart: Model Performance on VCR Tasks

### Overview

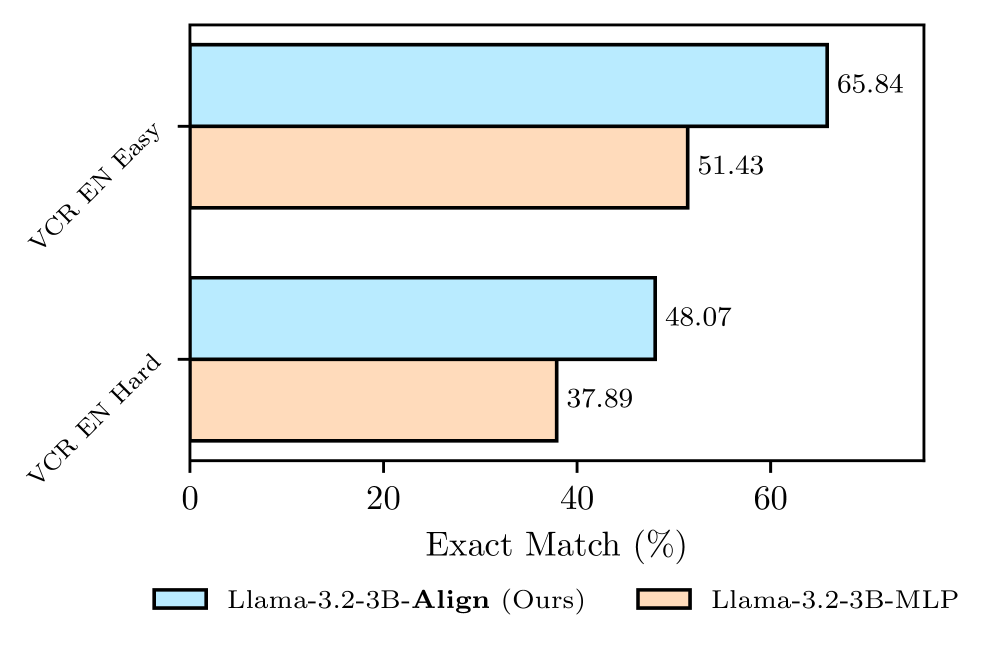

The image displays a horizontal bar chart comparing two models on two variants of a Visual Commonsense Reasoning (VCR) task in English. The performance metric is "Exact Match (%)". The model labeled "Ours" scores higher than the other on both task difficulties.

### Components/Axes

* **Chart Type:** Horizontal grouped bar chart.

* **Y-Axis (Vertical):** Lists the two task categories.

    * Top category: `VCR EN Easy`

    * Bottom category: `VCR EN Hard`

* **X-Axis (Horizontal):** Represents the performance metric.

    * **Label:** `Exact Match (%)`

    * **Scale:** Linear scale from 0 to approximately 70, with major tick marks at 0, 20, 40, and 60.

* **Legend:** Positioned at the bottom center of the chart.

    * **Light Blue Bar:** `Llama-3.2-3B-Align (Ours)`

    * **Light Orange Bar:** `Llama-3.2-3B-MLP`

* **Data Labels:** Numerical values are printed at the end of each bar, indicating the exact percentage.

### Detailed Analysis

The chart presents the following specific data points:

**1. VCR EN Easy Task:**

* **Llama-3.2-3B-Align (Ours) [Light Blue Bar]:** The bar extends to the right, ending at a data label of **65.84%**. This is the highest value on the chart.

* **Llama-3.2-3B-MLP [Light Orange Bar]:** The bar is shorter, ending at a data label of **51.43%**.

**2. VCR EN Hard Task:**

* **Llama-3.2-3B-Align (Ours) [Light Blue Bar]:** The bar extends to a data label of **48.07%**.

* **Llama-3.2-3B-MLP [Light Orange Bar]:** This is the shortest bar on the chart, ending at a data label of **37.89%**.

**Trend Verification:**

* For both models, performance is higher on the "Easy" task compared to the "Hard" task. The blue bar for "Easy" is longer than the blue bar for "Hard," and the same relationship holds for the orange bars.

* For both task difficulties, the "Llama-3.2-3B-Align (Ours)" model (blue) achieves a higher score than the "Llama-3.2-3B-MLP" model (orange). The blue bar is consistently longer than the orange bar within each task group.
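The two trends above can be checked mechanically. The following is a minimal sketch that hard-codes the four data labels read from the chart; the dictionary layout and variable names are illustrative assumptions, not part of the original figure.

```python
# Exact Match (%) values transcribed from the chart's data labels.
scores = {
    "Llama-3.2-3B-Align (Ours)": {"VCR EN Easy": 65.84, "VCR EN Hard": 48.07},
    "Llama-3.2-3B-MLP": {"VCR EN Easy": 51.43, "VCR EN Hard": 37.89},
}

# Trend 1: every model scores higher on Easy than on Hard.
easy_beats_hard = all(
    s["VCR EN Easy"] > s["VCR EN Hard"] for s in scores.values()
)

# Trend 2: Align scores higher than MLP on every task.
align = scores["Llama-3.2-3B-Align (Ours)"]
mlp = scores["Llama-3.2-3B-MLP"]
align_beats_mlp = all(align[task] > mlp[task] for task in align)

print(easy_beats_hard, align_beats_mlp)  # True True
```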

### Key Observations

1. **Consistent Performance Hierarchy:** The "Align" model demonstrates a clear and consistent performance advantage over the "MLP" model across both evaluated task difficulties.

2. **Task Difficulty Impact:** Both models score substantially lower on the "Hard" variant of the VCR EN task than on the "Easy" variant. The "Align" model's score drops by 17.77 percentage points (65.84% to 48.07%), while the "MLP" model's score drops by 13.54 percentage points (51.43% to 37.89%).

3. **Performance Gap:** The absolute performance gap between the two models is larger on the "Easy" task (14.41 percentage points) than on the "Hard" task (10.18 percentage points).
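The percentage-point figures in these observations follow directly from the four data labels. As a rough sketch of the arithmetic (the short keys `"Align"` and `"MLP"` are shorthand introduced here for illustration):

```python
# Exact Match (%) values transcribed from the chart's data labels.
easy = {"Align": 65.84, "MLP": 51.43}
hard = {"Align": 48.07, "MLP": 37.89}

# Easy-to-Hard drop per model, in percentage points.
# Rounding to 2 places removes floating-point noise from the subtraction.
drop = {model: round(easy[model] - hard[model], 2) for model in easy}

# Align-minus-MLP gap per task, in percentage points.
gap_easy = round(easy["Align"] - easy["MLP"], 2)
gap_hard = round(hard["Align"] - hard["MLP"], 2)

print(drop)                # {'Align': 17.77, 'MLP': 13.54}
print(gap_easy, gap_hard)  # 14.41 10.18
```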

### Interpretation

This chart provides a direct, quantitative comparison of two model variants on a visual reasoning benchmark. The data suggests that the architectural or training modification designated as "Align" in "Llama-3.2-3B-Align" yields a substantial improvement in exact match accuracy over the "MLP" variant for this specific task.

The drop in scores from "Easy" to "Hard" for both models is consistent with the "Hard" subset being the more challenging one. The "Align" model keeps its lead on the harder task, which suggests that its gains are not limited to simpler examples, although the absolute margin narrows from 14.41 to 10.18 percentage points.
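Absolute differences are only one way to read these numbers. The sketch below recomputes them in relative terms, using only the four data labels from the chart; the choice of "retention" (Hard score as a share of Easy score) and "relative gain" (Align's margin as a share of the MLP score) is an assumption made here for illustration, not a metric reported in the figure.

```python
# Exact Match (%) values transcribed from the chart's data labels.
easy = {"Align": 65.84, "MLP": 51.43}
hard = {"Align": 48.07, "MLP": 37.89}

# Share of the Easy score that each model retains on Hard, in percent.
retention = {m: round(100 * hard[m] / easy[m], 1) for m in easy}

# Align's margin over MLP relative to the MLP score, in percent.
relative_gain = {
    "Easy": round(100 * (easy["Align"] - easy["MLP"]) / easy["MLP"], 1),
    "Hard": round(100 * (hard["Align"] - hard["MLP"]) / hard["MLP"], 1),
}

print(retention)
print(relative_gain)
```

Under these definitions, both models retain roughly 73 to 74% of their Easy score on Hard, and Align's relative gain over MLP is about 28% on Easy and about 27% on Hard, so the relative advantage is nearly the same across the two difficulties.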

From a research perspective, the visualization presents the "Align" method favorably. The visual separation of the bars, together with the numerical labels, makes the relative ranking of the two approaches on the VCR EN benchmark easy to read. The chart is designed to highlight the authors' proposed model ("Ours"); it reports a single metric on two task variants and shows no variance or confidence intervals.