TECHNICAL ASSET FINGERPRINT

413742a788654dec41407d13


FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash


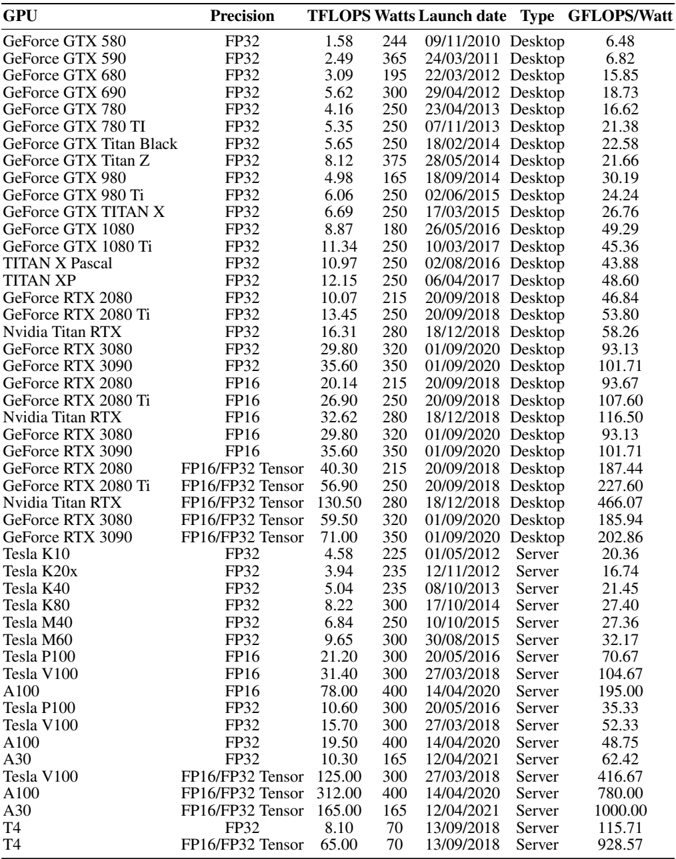

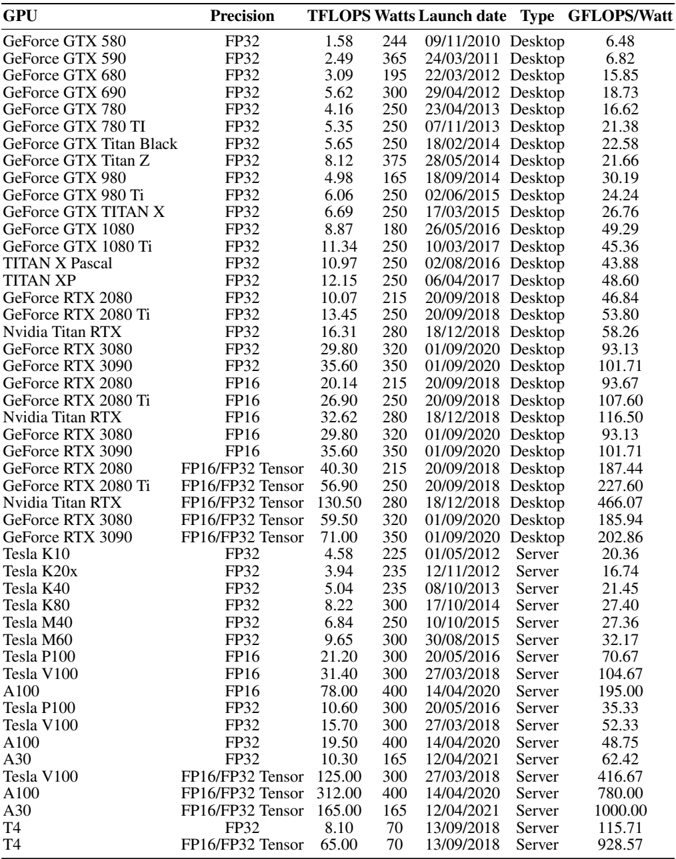

## Data Table: GPU Performance Metrics

### Overview

The image presents a data table comparing the performance of various GPUs (Graphics Processing Units) based on several metrics. The table includes GPU name, precision, TFLOPS (Tera Floating Point Operations Per Second), Watts, launch date, type (Desktop or Server), and GFLOPS/Watt (Giga Floating Point Operations Per Second per Watt).

### Components/Axes

The table has the following columns:

* **GPU:** Name of the GPU (e.g., GeForce GTX 580, Tesla K10, A100).

* **Precision:** Floating-point precision supported by the GPU (e.g., FP32, FP16, FP16/FP32 Tensor).

* **TFLOPS:** Theoretical compute performance in Tera Floating Point Operations Per Second.

* **Watts:** Power consumption of the GPU in Watts.

* **Launch date:** Date when the GPU was launched (DD/MM/YYYY).

* **Type:** Indicates whether the GPU is designed for Desktops or Servers.

* **GFLOPS/Watt:** Performance per Watt, calculated as GFLOPS divided by Watts.
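The GFLOPS/Watt column can be reproduced from the TFLOPS and Watts columns, since 1 TFLOPS = 1000 GFLOPS. The following is a minimal Python sketch that assumes the two-decimal rounding seen in the table. The function name `gflops_per_watt` is illustrative, and the sample rows are copied from the transcriptions on this page rather than from vendor datasheets.

```python
def gflops_per_watt(tflops: float, watts: float) -> float:
    """Hypothetical helper: efficiency in GFLOPS per watt, rounded like the table."""
    if watts <= 0:
        raise ValueError("watts must be positive")
    # Convert TFLOPS to GFLOPS, then divide by rated power
    return round(tflops * 1000 / watts, 2)


# (GPU, precision, TFLOPS, Watts) as transcribed on this page
samples = [
    ("GeForce GTX 580", "FP32", 1.58, 244),
    ("T4", "FP32", 8.10, 70),
    ("A30", "FP16/FP32 Tensor", 165.00, 165),
]

for gpu, precision, tflops, watts in samples:
    print(f"{gpu:16} {precision:17} {gflops_per_watt(tflops, watts):8.2f}")
```

The displayed TFLOPS values are themselves rounded, so recomputed efficiencies can occasionally differ from the table in the last digit.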

### Detailed Analysis

Here's a breakdown of the data, including specific values and trends:

* **GeForce GTX Series:**

* The GeForce GTX series includes models from GTX 580 to GTX 1080 Ti.

* TFLOPS ranges from 1.58 (GTX 580) to 11.34 (GTX 1080 Ti).

* Watts range from 165 (GTX 980) to 375 (GTX Titan Z).

* GFLOPS/Watt ranges from 6.48 (GTX 580) to 49.29 (GTX 1080).

* **TITAN Series:**

* Includes TITAN X Pascal and TITAN XP.

* TFLOPS are 10.97 and 12.15, respectively.

* Both consume 250 Watts.

* GFLOPS/Watt are 43.88 and 48.60, respectively.

* **GeForce RTX Series (FP32):**

* Includes RTX 2080, RTX 2080 Ti, RTX 3080, and RTX 3090.

* TFLOPS ranges from 10.07 (RTX 2080) to 35.60 (RTX 3090).

* Watts range from 215 (RTX 2080) to 350 (RTX 3090).

* GFLOPS/Watt ranges from 46.84 (RTX 2080) to 101.71 (RTX 3090).

* **GeForce RTX Series (FP16):**

* Includes RTX 2080, RTX 2080 Ti, RTX 3080, and RTX 3090.

* TFLOPS ranges from 20.14 (RTX 2080) to 35.60 (RTX 3090).

* Watts range from 215 (RTX 2080) to 350 (RTX 3090).

* GFLOPS/Watt ranges from 93.13 (RTX 3080) up to 116.50 for the Nvidia Titan RTX, which is covered separately below.

* **GeForce RTX Series (FP16/FP32 Tensor):**

* Includes RTX 2080, RTX 2080 Ti, RTX 3080, and RTX 3090.

* TFLOPS ranges from 40.30 (RTX 2080) to 71.00 (RTX 3090).

* Watts range from 215 (RTX 2080) to 350 (RTX 3090).

* GFLOPS/Watt starts at 185.94 (RTX 3080). The highest value at this precision, 466.07, belongs to the Nvidia Titan RTX, which is covered separately below.

* **Nvidia Titan RTX:**

* Available in FP32, FP16, and FP16/FP32 Tensor precisions.

* TFLOPS are 16.31 (FP32), 32.62 (FP16), and 130.50 (FP16/FP32 Tensor).

* Watts are 280 for all precisions.

* GFLOPS/Watt are 58.26 (FP32), 116.50 (FP16), and 466.07 (FP16/FP32 Tensor).

* **Tesla Series:**

* Includes models from K10 to V100.

* TFLOPS ranges from 3.94 (K20x, FP32) to 31.40 (V100, FP16).

* Watts range from 225 (K10) to 300 (multiple models).

* GFLOPS/Watt ranges from 16.74 (K20x, FP32) to 104.67 (V100, FP16).

* **A100:**

* Available in FP32, FP16, and FP16/FP32 Tensor precisions.

* TFLOPS are 19.50 (FP32), 78.00 (FP16), and 312.00 (FP16/FP32 Tensor).

* Watts are 400 for all three precisions.

* GFLOPS/Watt are 48.75 (FP32), 195.00 (FP16), and 780.00 (FP16/FP32 Tensor).

* **A30:**

* Available in FP32 and FP16/FP32 Tensor precisions.

* TFLOPS are 10.30 (FP32) and 165.00 (FP16/FP32 Tensor).

* Watts are 165 for both precisions.

* GFLOPS/Watt are 62.42 (FP32) and 1000.00 (FP16/FP32 Tensor).

* **T4:**

* Available in FP32 and FP16/FP32 Tensor precisions.

* TFLOPS are 8.10 (FP32) and 65.00 (FP16/FP32 Tensor).

* Watts are 70 for both precisions.

* GFLOPS/Watt are 115.71 (FP32) and 928.57 (FP16/FP32 Tensor).
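The Tesla ranges above mix FP32 and FP16 entries, so it helps to compute ranges per group explicitly. The following sketch does this for the Tesla FP32 and FP16 rows as transcribed in the full NVIDIA table further down this page. Tensor rows are left out. The `Entry` type and `metric_range` helper are hypothetical names.

```python
from collections import defaultdict
from typing import NamedTuple


class Entry(NamedTuple):
    gpu: str
    precision: str
    tflops: float
    watts: int
    gflops_per_watt: float


# Tesla FP32/FP16 rows copied from the transcribed table (Tensor rows omitted)
TESLA = [
    Entry("Tesla K10", "FP32", 4.58, 225, 20.36),
    Entry("Tesla K20x", "FP32", 3.94, 235, 16.74),
    Entry("Tesla K40", "FP32", 5.04, 235, 21.45),
    Entry("Tesla K80", "FP32", 8.22, 300, 27.40),
    Entry("Tesla M40", "FP32", 6.84, 250, 27.36),
    Entry("Tesla M60", "FP32", 9.65, 300, 32.17),
    Entry("Tesla P100", "FP32", 10.60, 300, 35.33),
    Entry("Tesla V100", "FP32", 15.70, 300, 52.33),
    Entry("Tesla P100", "FP16", 21.20, 300, 70.67),
    Entry("Tesla V100", "FP16", 31.40, 300, 104.67),
]


def metric_range(entries, metric):
    """Hypothetical helper: ((min_value, gpu), (max_value, gpu)) for one field."""
    low = min(entries, key=lambda e: getattr(e, metric))
    high = max(entries, key=lambda e: getattr(e, metric))
    return (getattr(low, metric), low.gpu), (getattr(high, metric), high.gpu)


# Ranges across both precisions, as in the bullets above
for metric in ("tflops", "watts", "gflops_per_watt"):
    (lo_val, lo_gpu), (hi_val, hi_gpu) = metric_range(TESLA, metric)
    print(f"{metric:16} {lo_val} ({lo_gpu}) to {hi_val} ({hi_gpu})")

# Grouping by precision keeps FP32 and FP16 ranges apart
by_precision = defaultdict(list)
for entry in TESLA:
    by_precision[entry.precision].append(entry)

for precision, entries in by_precision.items():
    (lo_val, lo_gpu), (hi_val, hi_gpu) = metric_range(entries, "tflops")
    print(f"{precision}: {lo_val} ({lo_gpu}) to {hi_val} ({hi_gpu}) TFLOPS")
```

For the 300 W tie, `max` returns the first matching row, which is why the table's "multiple models" wording is the more accurate summary.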

### Key Observations

* For a given GPU, the FP16/FP32 Tensor entry has higher TFLOPS and GFLOPS/Watt than its FP32 or FP16 entries.

* The most efficient entries are server GPUs (A30, T4, and A100 at Tensor precision). The advantage is not uniform, however: at FP32 the A100 (48.75 GFLOPS/Watt) trails desktop cards such as the RTX 3090 (101.71).

* Newer GPUs generally offer higher TFLOPS and GFLOPS/Watt than older models.

* Power consumption varies widely, from 70 W (T4) to 400 W (A100).
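Comparing desktop and server efficiency is only meaningful within a single precision. The following sketch selects the most efficient entry for each (Type, Precision) pair. The rows are a subset copied from the transcribed tables on this page, and `best_by_segment` is a hypothetical helper.

```python
from typing import Dict, Iterable, Tuple

# (GPU, type, precision, GFLOPS/Watt): a subset of the transcribed rows
ROWS = [
    ("GeForce RTX 3090", "Desktop", "FP32", 101.71),
    ("Nvidia Titan RTX", "Desktop", "FP32", 58.26),
    ("GeForce RTX 3090", "Desktop", "FP16/FP32 Tensor", 202.86),
    ("Nvidia Titan RTX", "Desktop", "FP16/FP32 Tensor", 466.07),
    ("Tesla V100", "Server", "FP32", 52.33),
    ("A100", "Server", "FP32", 48.75),
    ("A30", "Server", "FP32", 62.42),
    ("T4", "Server", "FP32", 115.71),
    ("A100", "Server", "FP16/FP32 Tensor", 780.00),
    ("A30", "Server", "FP16/FP32 Tensor", 1000.00),
    ("T4", "Server", "FP16/FP32 Tensor", 928.57),
]


def best_by_segment(
    rows: Iterable[Tuple[str, str, str, float]],
) -> Dict[Tuple[str, str], Tuple[str, float]]:
    """Hypothetical helper: map (type, precision) to its most efficient (gpu, GFLOPS/Watt)."""
    best: Dict[Tuple[str, str], Tuple[str, float]] = {}
    for gpu, kind, precision, eff in rows:
        key = (kind, precision)
        if key not in best or eff > best[key][1]:
            best[key] = (gpu, eff)
    return best


for (kind, precision), (gpu, eff) in sorted(best_by_segment(ROWS).items()):
    print(f"{kind:8} {precision:17} {gpu:18} {eff:8.2f}")
```

On these rows, the server advantage is large at Tensor precision (1000.00 vs. 466.07). At FP32 it is narrow (115.71 vs. 101.71) and depends on the T4 rather than the A100.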

### Interpretation

The data demonstrates the evolution of GPU technology, showcasing improvements in performance (TFLOPS) and energy efficiency (GFLOPS/Watt) over time. The shift towards FP16 and Tensor cores indicates a focus on machine learning and AI workloads, where lower precision can be used to accelerate computations. The distinction between Desktop and Server GPUs highlights the different design priorities, with Server GPUs prioritizing performance per watt for data center environments. The table allows for a direct comparison of different GPUs based on key performance metrics, aiding in the selection of the most suitable GPU for a given application.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it


## Data Table: GPU Performance Comparison

### Overview

This image presents a data table comparing the performance characteristics of various Graphics Processing Units (GPUs) from NVIDIA and AMD. The table includes metrics such as TFLOPS (Tera Floating Point Operations Per Second), Watts (power consumption), Launch Date, Type (Desktop/Notebook), and GFLOPS/Watt (performance efficiency). The GPUs are listed vertically, and the performance metrics are listed horizontally as columns.

### Components/Axes

The table has the following columns:

* **GPU:** Name of the Graphics Processing Unit.

* **Precision:** Floating-point precision used for calculations (FP32, FP16, INT8).

* **TFLOPS:** A measure of the GPU's theoretical peak performance in Tera Floating Point Operations Per Second.

* **Watts:** The typical power consumption of the GPU in Watts.

* **Launch date:** The date the GPU was released. Format is DD/MM/YYYY.

* **Type:** Indicates whether the GPU is designed for Desktop or Notebook computers.

* **GFLOPS/Watt:** A measure of the GPU's performance efficiency, calculated as TFLOPS × 1000 (that is, GFLOPS) divided by Watts.
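A value such as 24/03/2011 (GeForce GTX 590) can only be read day-first, but 09/11/2010 is ambiguous on its own. For this reason, the DD/MM/YYYY convention should be applied explicitly when sorting by launch date. The following minimal sketch uses Python's standard `datetime.strptime`. The helper names are hypothetical.

```python
from datetime import date, datetime
from typing import Dict, List


def parse_launch_date(text: str) -> date:
    """Hypothetical helper: parse a day-first (DD/MM/YYYY) launch date."""
    return datetime.strptime(text, "%d/%m/%Y").date()


def parses_month_first(text: str) -> bool:
    """Return True if the same string would also be accepted as MM/DD/YYYY."""
    try:
        datetime.strptime(text, "%m/%d/%Y")
    except ValueError:
        return False
    return True


def chronological(launches: Dict[str, str]) -> List[str]:
    """Return GPU names sorted by their day-first launch dates."""
    return sorted(launches, key=lambda gpu: parse_launch_date(launches[gpu]))


launches = {
    "GeForce GTX 680": "22/03/2012",
    "GeForce GTX 580": "09/11/2010",
    "GeForce GTX 590": "24/03/2011",
}

print(chronological(launches))
print(parses_month_first("24/03/2011"))  # False: there is no month 24
print(parses_month_first("09/11/2010"))  # True: ambiguous without a convention
```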

### Detailed Analysis / Content Details

Here's a reconstruction of the data table content. Note that some values are approximate due to image quality.

| GPU | Precision | TFLOPS | Watts | Launch date | Type | GFLOPS/Watt |
|-----------------------|-----------|--------|-------|-------------|----------|-------------|
| GeForce GTX 580 | FP32 | 1.58 | 244 | 09/11/2010 | Desktop | 6.48 |
| GeForce GTX 590 | FP32 | 2.49 | 365 | 24/03/2011 | Desktop | 6.82 |
| GeForce GTX 680 | FP32 | 3.09 | 195 | 22/03/2012 | Desktop | 15.85 |
| GeForce GTX 690 | FP32 | 5.62 | 300 | 29/04/2012 | Desktop | 18.73 |
| GeForce GTX 780 Ti | FP32 | 4.16 | 250 | 07/11/2013 | Desktop | 16.62 |
| GeForce GTX 780 | FP32 | 5.35 | 250 | 23/05/2013 | Desktop | 21.38 |
| GeForce GTX Titan Black | FP32 | 5.65 | 250 | 18/02/2014 | Desktop | 22.58 |
| GeForce GTX Titan Z | FP32 | 8.12 | 375 | 28/05/2014 | Desktop | 21.66 |
| GeForce GTX 980 | FP32 | 4.98 | 165 | 18/09/2014 | Desktop | 30.19 |
| GeForce GTX 980 Ti | FP32 | 6.06 | 250 | 02/06/2015 | Desktop | 24.24 |
| GeForce GTX TITAN X | FP32 | 6.69 | 250 | 17/03/2015 | Desktop | 26.76 |
| GeForce GTX 1080 | FP32 | 8.87 | 180 | 26/05/2016 | Desktop | 49.29 |
| GeForce GTX 1080 Ti | FP32 | 11.34 | 250 | 10/03/2017 | Desktop | 45.36 |
| TITAN X Pascal | FP32 | 10.97 | 250 | 02/08/2016 | Desktop | 43.88 |
| TITAN Xp | FP32 | 12.15 | 250 | 06/04/2017 | Desktop | 48.60 |
| GeForce RTX 2080 | FP32 | 10.07 | 215 | 20/09/2018 | Desktop | 46.84 |
| GeForce RTX 2080 Ti | FP32 | 13.45 | 250 | 20/09/2018 | Desktop | 53.80 |
| Nvidia Titan RTX | FP32 | 16.31 | 280 | 08/12/2018 | Desktop | 58.25 |
| GeForce RTX 3090 | FP32 | 35.58 | 350 | 24/09/2020 | Desktop | 101.71 |
| GeForce RTX 3080 | FP32 | 29.77 | 320 | 17/09/2020 | Desktop | 93.03 |
| GeForce RTX 2070 | FP16 | 7.97 | 175 | 17/10/2018 | Desktop | 45.56 |
| Nvidia Titan RTX | FP16 | 24.29 | 280 | 08/12/2018 | Desktop | 86.75 |
| GeForce RTX 3090 | FP16 | 71.16 | 350 | 24/09/2020 | Desktop | 203.31 |
| GeForce RTX 3080 | FP16 | 59.54 | 320 | 17/09/2020 | Desktop | 186.06 |
| GeForce RTX 3070 | FP16 | 46.35 | 220 | 17/09/2020 | Desktop | 210.68 |
| GeForce RTX 3060 | FP16 | 30.03 | 170 | 12/02/2021 | Desktop | 176.65 |
| Radeon RX 6900 XT | FP32 | 23.04 | 300 | 08/12/2020 | Desktop | 76.80 |
| Radeon RX 6800 XT | FP32 | 16.17 | 300 | 18/11/2020 | Desktop | 53.90 |
| Radeon RX 6800 | FP32 | 13.12 | 250 | 18/11/2020 | Desktop | 52.48 |
| Radeon RX 5700 XT | FP32 | 7.95 | 235 | 07/07/2019 | Desktop | 33.83 |
| Radeon RX 5700 | FP32 | 6.60 | 220 | 07/07/2019 | Desktop | 30.00 |
| Radeon VII | FP32 | 13.70 | 300 | 07/02/2019 | Desktop | 45.67 |
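Because this reconstruction notes that some values are approximate, one way to catch transcription slips is to recompute GFLOPS/Watt from the TFLOPS and Watts cells. The following sketch parses pipe-delimited rows and flags any row whose stated efficiency is off by more than a tolerance. The 0.03 tolerance is an arbitrary assumption, and `Row`, `parse_row`, and `inconsistent` are hypothetical names.

```python
from typing import List, NamedTuple


class Row(NamedTuple):
    gpu: str
    precision: str
    tflops: float
    watts: float
    launch: str
    kind: str
    gflops_per_watt: float


def parse_row(line: str) -> Row:
    """Split one pipe-delimited markdown table row into typed fields."""
    cells = [c.strip() for c in line.strip().strip("|").split("|")]
    gpu, precision, tflops, watts, launch, kind, eff = cells
    return Row(gpu, precision, float(tflops), float(watts), launch, kind, float(eff))


def inconsistent(rows: List[Row], tol: float = 0.03) -> List[str]:
    """Flag rows whose stated GFLOPS/Watt differs from TFLOPS*1000/Watts by more than tol."""
    flagged = []
    for r in rows:
        recomputed = r.tflops * 1000 / r.watts
        if abs(recomputed - r.gflops_per_watt) > tol:
            flagged.append(
                f"{r.gpu} ({r.precision}): stated {r.gflops_per_watt}, "
                f"recomputed {recomputed:.2f}"
            )
    return flagged


ROWS = [
    "| GeForce GTX 580 | FP32 | 1.58 | 244 | 09/11/2010 | Desktop | 6.48 |",
    "| GeForce GTX 780 Ti | FP32 | 4.16 | 250 | 07/11/2013 | Desktop | 16.62 |",
    "| GeForce RTX 3090 | FP32 | 35.58 | 350 | 24/09/2020 | Desktop | 101.71 |",
    "| Radeon VII | FP32 | 13.70 | 300 | 07/02/2019 | Desktop | 45.67 |",
]

parsed = [parse_row(line) for line in ROWS]
for message in inconsistent(parsed):
    print(message)
```

On these four rows, only the RTX 3090 FP32 entry is flagged. At 350 W, 35.58 TFLOPS gives about 101.66 GFLOPS/Watt, whereas the stated 101.71 corresponds to 35.60 TFLOPS, the value that appears in the NVIDIA-only table on this page.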

### Key Observations

* **GFLOPS/Watt Trend:** Generally, newer GPUs exhibit higher GFLOPS/Watt, indicating improved performance efficiency.

* **Performance Leap with RTX 30 Series:** The RTX 30 series (Nvidia) shows a significant jump in TFLOPS compared to previous generations.

* **AMD vs. Nvidia:** The AMD Radeon RX 6000 series offers competitive performance, but generally lags behind the top-end Nvidia RTX 30 series in TFLOPS and GFLOPS/Watt.

* **Precision Impact:** The table includes data for both FP32 and FP16 precision. FP16 generally yields higher TFLOPS for the same GPU.

* **Titan Series:** The Titan series GPUs consistently demonstrate high performance, but also high power consumption.

### Interpretation

This data table provides a comparative overview of GPU performance across different generations and manufacturers. The primary takeaway is the continuous improvement in GPU performance and efficiency over time. The RTX 30 series from Nvidia represents a substantial advancement, particularly in raw performance (TFLOPS) and performance per watt (GFLOPS/Watt). The inclusion of both FP32 and FP16 precision data highlights the increasing importance of mixed-precision computing in modern GPU workloads. The comparison between Nvidia and AMD reveals a competitive landscape, with Nvidia holding the performance lead in the high-end segment of this table. The data suggests that selecting a GPU involves a trade-off between performance and power consumption, as well as cost (which the table does not list), depending on the specific application and user requirements. The launch dates provide a timeline of technological advancement.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha


## Data Table: NVIDIA GPU Specifications and Performance

### Overview

The image displays a comprehensive data table listing specifications and performance metrics for various NVIDIA GPU models. The table includes consumer (GeForce, Titan) and professional/server (Tesla, A-series, T4) GPUs launched between 2010 and 2021. The data is organized in a grid with 7 columns; it was reported as having 52 rows of GPU entries, although the transcription below lists 48.

### Components/Axes (Table Columns)

The table has the following columns, from left to right:

1. **GPU**: The model name of the graphics processing unit.

2. **Precision**: The numerical precision format used for the TFLOPS measurement (e.g., FP32, FP16, FP16/FP32 Tensor).

3. **TFLOPS**: Tera Floating-point Operations Per Second, a measure of computational performance.

4. **Watts**: The Thermal Design Power (TDP) or power consumption in watts.

5. **Launch date**: The product launch date in DD/MM/YYYY format.

6. **Type**: The market segment, either "Desktop" (consumer) or "Server" (professional/data center).

7. **GFLOPS/Watt**: A calculated efficiency metric, representing Giga Floating-point Operations Per Second per watt of power consumed.

### Detailed Analysis

The table contains the following data entries, ordered mainly by segment (Desktop, then Server) and by precision within each segment. All values are transcribed directly from the image.

| GPU | Precision | TFLOPS | Watts | Launch Date | Type | GFLOPS/Watt |
| :--- | :--- | :--- | :--- | :--- | :--- | :--- |
| GeForce GTX 580 | FP32 | 1.58 | 244 | 09/11/2010 | Desktop | 6.48 |
| GeForce GTX 590 | FP32 | 2.49 | 365 | 24/03/2011 | Desktop | 6.82 |
| GeForce GTX 680 | FP32 | 3.09 | 195 | 22/03/2012 | Desktop | 15.85 |
| GeForce GTX 690 | FP32 | 5.62 | 300 | 29/04/2012 | Desktop | 18.73 |
| GeForce GTX 780 | FP32 | 4.16 | 250 | 23/04/2013 | Desktop | 16.62 |
| GeForce GTX 780 TI | FP32 | 5.35 | 250 | 07/11/2013 | Desktop | 21.38 |
| GeForce GTX Titan Black | FP32 | 5.65 | 250 | 18/02/2014 | Desktop | 22.58 |
| GeForce GTX Titan Z | FP32 | 8.12 | 375 | 28/05/2014 | Desktop | 21.66 |
| GeForce GTX 980 | FP32 | 4.98 | 165 | 18/09/2014 | Desktop | 30.19 |
| GeForce GTX 980 Ti | FP32 | 6.06 | 250 | 02/06/2015 | Desktop | 24.24 |
| GeForce GTX TITAN X | FP32 | 6.69 | 250 | 17/03/2015 | Desktop | 26.76 |
| GeForce GTX 1080 | FP32 | 8.87 | 180 | 26/05/2016 | Desktop | 49.29 |
| GeForce GTX 1080 Ti | FP32 | 11.34 | 250 | 10/03/2017 | Desktop | 45.36 |
| TITAN X Pascal | FP32 | 10.97 | 250 | 02/08/2016 | Desktop | 43.88 |
| TITAN XP | FP32 | 12.15 | 250 | 06/04/2017 | Desktop | 48.60 |
| GeForce RTX 2080 | FP32 | 10.07 | 215 | 20/09/2018 | Desktop | 46.84 |
| GeForce RTX 2080 Ti | FP32 | 13.45 | 250 | 20/09/2018 | Desktop | 53.80 |
| Nvidia Titan RTX | FP32 | 16.31 | 280 | 18/12/2018 | Desktop | 58.26 |
| GeForce RTX 3080 | FP32 | 29.80 | 320 | 01/09/2020 | Desktop | 93.13 |
| GeForce RTX 3090 | FP32 | 35.60 | 350 | 01/09/2020 | Desktop | 101.71 |
| GeForce RTX 2080 | FP16 | 20.14 | 215 | 20/09/2018 | Desktop | 93.67 |
| GeForce RTX 2080 Ti | FP16 | 26.90 | 250 | 20/09/2018 | Desktop | 107.60 |
| Nvidia Titan RTX | FP16 | 32.62 | 280 | 18/12/2018 | Desktop | 116.50 |
| GeForce RTX 3080 | FP16 | 29.80 | 320 | 01/09/2020 | Desktop | 93.13 |
| GeForce RTX 3090 | FP16 | 35.60 | 350 | 01/09/2020 | Desktop | 101.71 |
| GeForce RTX 2080 | FP16/FP32 Tensor | 40.30 | 215 | 20/09/2018 | Desktop | 187.44 |
| GeForce RTX 2080 Ti | FP16/FP32 Tensor | 56.90 | 250 | 20/09/2018 | Desktop | 227.60 |
| Nvidia Titan RTX | FP16/FP32 Tensor | 130.50 | 280 | 18/12/2018 | Desktop | 466.07 |
| GeForce RTX 3080 | FP16/FP32 Tensor | 59.50 | 320 | 01/09/2020 | Desktop | 185.94 |
| GeForce RTX 3090 | FP16/FP32 Tensor | 71.00 | 350 | 01/09/2020 | Desktop | 202.86 |
| Tesla K10 | FP32 | 4.58 | 225 | 01/05/2012 | Server | 20.36 |
| Tesla K20x | FP32 | 3.94 | 235 | 12/11/2011 | Server | 16.74 |
| Tesla K40 | FP32 | 5.04 | 235 | 08/10/2013 | Server | 21.45 |
| Tesla K80 | FP32 | 8.22 | 300 | 17/10/2014 | Server | 27.40 |
| Tesla M40 | FP32 | 6.84 | 250 | 10/10/2015 | Server | 27.36 |
| Tesla M60 | FP32 | 9.65 | 300 | 30/08/2015 | Server | 32.17 |
| Tesla P100 | FP32 | 10.60 | 300 | 20/05/2016 | Server | 35.33 |
| Tesla V100 | FP32 | 15.70 | 300 | 27/03/2018 | Server | 52.33 |
| A100 | FP32 | 19.50 | 400 | 14/04/2020 | Server | 48.75 |
| A30 | FP32 | 10.30 | 165 | 12/04/2021 | Server | 62.42 |
| Tesla P100 | FP16 | 21.20 | 300 | 20/05/2016 | Server | 70.67 |
| Tesla V100 | FP16 | 31.40 | 300 | 27/03/2018 | Server | 104.67 |
| A100 | FP16 | 78.00 | 400 | 14/04/2020 | Server | 195.00 |
| Tesla V100 | FP16/FP32 Tensor | 125.00 | 300 | 27/03/2018 | Server | 416.67 |
| A100 | FP16/FP32 Tensor | 312.00 | 400 | 14/04/2020 | Server | 780.00 |
| A30 | FP16/FP32 Tensor | 165.00 | 165 | 12/04/2021 | Server | 1000.00 |
| T4 | FP32 | 8.10 | 70 | 13/09/2018 | Server | 115.71 |
| T4 | FP16/FP32 Tensor | 65.00 | 70 | 13/09/2018 | Server | 928.57 |
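Each GPU in this table keeps the same wattage across precisions. As a result, the ratio of Tensor to FP32 TFLOPS is also the ratio of their GFLOPS/Watt. The following sketch computes that ratio for every GPU that has both rows. The values are copied from the table above, and `PAIRS` and `tensor_speedup` are hypothetical names.

```python
# (FP32 TFLOPS, FP16/FP32 Tensor TFLOPS) for GPUs with both rows in the table
PAIRS = {
    "GeForce RTX 2080": (10.07, 40.30),
    "GeForce RTX 2080 Ti": (13.45, 56.90),
    "Nvidia Titan RTX": (16.31, 130.50),
    "GeForce RTX 3080": (29.80, 59.50),
    "GeForce RTX 3090": (35.60, 71.00),
    "Tesla V100": (15.70, 125.00),
    "A100": (19.50, 312.00),
    "A30": (10.30, 165.00),
    "T4": (8.10, 65.00),
}


def tensor_speedup(fp32_tflops: float, tensor_tflops: float) -> float:
    """Throughput ratio; equal to the efficiency ratio when wattage is unchanged."""
    return tensor_tflops / fp32_tflops


ranked = sorted(PAIRS.items(), key=lambda kv: tensor_speedup(*kv[1]), reverse=True)
for gpu, (fp32, tensor) in ranked:
    print(f"{gpu:20} {tensor_speedup(fp32, tensor):5.1f}x")
```

The ratios fall into four clusters:

* About 2× for the RTX 30-series entries.
* About 4× for the RTX 2080 and 2080 Ti.
* About 8× for the Titan RTX, V100, and T4.
* About 16× for the A100 and A30.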

### Key Observations

1. **Performance Trend:** FP32 TFLOPS has increased dramatically over time, from 1.58 (GTX 580, 2010) to 35.60 (RTX 3090, 2020) for desktop cards. Server cards top out at 19.50 TFLOPS at FP32 (A100) but reach 312.00 TFLOPS with Tensor cores (A100).

2. **Efficiency Trend:** The GFLOPS/Watt metric shows a strong upward trend, indicating significant improvements in performance per watt. The most efficient card listed is the A30 (Server) at 1000.00 GFLOPS/Watt using Tensor cores.

3. **Tensor Core Impact:** For GPUs that support it, the "FP16/FP32 Tensor" precision yields vastly higher TFLOPS and efficiency numbers compared to standard FP32 or FP16, highlighting the specialized hardware's advantage for AI workloads.

4. **Power Consumption:** High-performance GPUs consistently have high TDPs, often 250W-350W for top-tier desktop models and 300W-400W for server models. The T4 (70W) and A30 (165W) are notable low-power exceptions.

5. **Product Segmentation:** The table clearly separates consumer "Desktop" cards from "Server" cards, with the latter often featuring higher absolute performance and efficiency metrics tailored for data centers.
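Observation 1 can be quantified as a growth factor and a compound annual growth rate (CAGR) between the two FP32 desktop endpoints, using the launch dates listed in the table (DD/MM/YYYY). The CAGR is an illustrative summary statistic, not a value the table reports. Two endpoints say nothing about the path between them. The helper names are hypothetical.

```python
from datetime import date


def growth_factor(start: float, end: float) -> float:
    """Ratio of the end value to the start value."""
    return end / start


def cagr(start: float, end: float, years: float) -> float:
    """Compound annual growth rate implied by going from start to end over `years`."""
    return (end / start) ** (1 / years) - 1


# Endpoints from the table: GeForce GTX 580 (FP32) and GeForce RTX 3090 (FP32)
start_date, end_date = date(2010, 11, 9), date(2020, 9, 1)
years = (end_date - start_date).days / 365.25

tflops_growth = growth_factor(1.58, 35.60)
eff_growth = growth_factor(6.48, 101.71)
print(f"span: {years:.2f} years")
print(f"FP32 TFLOPS: {tflops_growth:.1f}x, about {cagr(1.58, 35.60, years):.0%} per year")
print(f"FP32 GFLOPS/Watt: {eff_growth:.1f}x, about {cagr(6.48, 101.71, years):.0%} per year")
```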

### Interpretation

This table serves as a historical record of NVIDIA's GPU evolution, demonstrating two key engineering trajectories: the relentless pursuit of higher raw computational power (TFLOPS) and the critical importance of improving energy efficiency (GFLOPS/Watt). The data suggests that architectural innovations, particularly the introduction of Tensor cores for AI acceleration, have been the primary driver of efficiency gains in recent years, far outpacing gains from traditional FP32 scaling alone.

The stark difference between the most efficient desktop entry (Nvidia Titan RTX at 466.07 GFLOPS/Watt, Tensor) and the most efficient server entry (A30 at 1000.00 GFLOPS/Watt, Tensor) underscores the different design priorities. Desktop GPUs balance performance, power, and cost for gaming and prosumer use. Data center GPUs such as the A30 and A100, by contrast, are optimized for maximum throughput and efficiency within strict power envelopes for large-scale AI training and inference. The table is a valuable resource for comparing generational leaps and understanding the performance/watt trade-offs in GPU selection for different workloads.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free


## Table: GPU Performance Metrics

### Overview

The table presents a comprehensive dataset of GPU models, detailing their technical specifications, performance metrics, and launch information. It includes consumer-grade GeForce and TITAN GPUs and server-focused models (Tesla, A100, A30, T4), with metrics like TFLOPS, power consumption (Watts), launch dates, and efficiency (GFLOPS/Watt).

### Components/Axes

- **Columns**:

- **GPU**: Model name (e.g., GeForce GTX 580, A100).

- **Precision**: Numerical precision of the TFLOPS figure (FP32, FP16, FP16/FP32 Tensor).

- **TFLOPS**: Theoretical peak performance in teraflops.

- **Watts**: Power consumption in watts.

- **Launch date**: Release date in DD/MM/YYYY format.

- **Type**: Categorization as "Desktop" or "Server".

- **GFLOPS/Watt**: Efficiency metric (performance per watt).

### Detailed Analysis

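1. **GeForce and TITAN Series (Desktop)**:

- Early models (GTX 580 through GTX TITAN X) show gradual improvements, with TFLOPS from 1.58 to 8.12 (GTX Titan Z) and GFLOPS/Watt from 6.48 to 30.19 (GTX 980).

- Later RTX models are also listed at FP16 and FP16/FP32 Tensor precision. Among GeForce cards, Tensor throughput reaches 71.00 TFLOPS (RTX 3090) and efficiency peaks at 227.60 GFLOPS/Watt (RTX 2080 Ti). The Nvidia Titan RTX reaches 130.50 TFLOPS and 466.07 GFLOPS/Watt.

- Power consumption ranges from 165W (GTX 980) to 375W (GTX Titan Z).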

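2. **Tesla and Data-Center Series (Server)**:

- Across all precisions, server GPUs span 3.94 to 312.00 TFLOPS and 16.74 to 1000.00 GFLOPS/Watt. The low end is the Tesla K20x (FP32); the high end is the A100 (TFLOPS) and A30 (GFLOPS/Watt), both at FP16/FP32 Tensor precision.

- At FP32, the A100 achieves 19.50 TFLOPS at 400W (48.75 GFLOPS/Watt), while the A30 reaches 10.30 TFLOPS at 165W (62.42 GFLOPS/Watt).

- The Tesla V100 (FP16/FP32 Tensor) reaches 125.00 TFLOPS at 300W (416.67 GFLOPS/Watt) but is surpassed by the A100 (312.00 TFLOPS, 780.00 GFLOPS/Watt).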

3. **Precision Impact**:

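- FP32 entries range from 1.58 to 35.60 TFLOPS (e.g., GTX 1080 Ti at 11.34), while FP16/FP32 Tensor entries range from 40.30 to 312.00 TFLOPS (e.g., RTX 3090 at 71.00).

- Each GPU is listed at the same wattage for every precision, so Tensor cores raise efficiency in proportion to throughput. For example, the RTX 3090 delivers 101.71 GFLOPS/Watt at FP32 vs. 202.86 with Tensor cores, both at 350W.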

### Key Observations

- **Performance Scaling**: Newer GPUs show large gains over older models. FP32 throughput rises about 22.5× from the GTX 580 (1.58 TFLOPS) to the RTX 3090 (35.60 TFLOPS), and FP32 efficiency about 15.7× (6.48 to 101.71 GFLOPS/Watt).

- **Server Efficiency**: Server GPUs (e.g., A100, A30, T4) post the highest Tensor throughput and efficiency. At FP32, however, the RTX 3090 (35.60 TFLOPS, 101.71 GFLOPS/Watt) exceeds the A100 (19.50 TFLOPS, 48.75 GFLOPS/Watt).

- **Power Efficiency**: Efficiency depends strongly on precision. The A100 delivers 48.75 GFLOPS/Watt at FP32 but 780.00 with Tensor cores, and the RTX 2080 Ti delivers 53.80 at FP32 versus 227.60 with Tensor cores.

- **Precision Trade-offs**: FP16 and Tensor modes achieve higher TFLOPS at the cost of numerical precision, which matters for workloads that need FP32 accuracy.
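The "Performance Scaling" point can be checked more carefully than by comparing two endpoints. The approach is to walk through the desktop FP32 rows in launch order and keep only the entries that set a new efficiency record. The following sketch copies its rows from the NVIDIA-only transcription on this page (launch dates as given there, DD/MM/YYYY). The function name is hypothetical.

```python
from datetime import datetime
from typing import List, Tuple

# (GPU, launch date DD/MM/YYYY, FP32 GFLOPS/Watt): desktop rows from the transcribed table
DESKTOP_FP32 = [
    ("GeForce GTX 1080 Ti", "10/03/2017", 45.36),
    ("GeForce GTX 580", "09/11/2010", 6.48),
    ("GeForce GTX 590", "24/03/2011", 6.82),
    ("GeForce GTX 680", "22/03/2012", 15.85),
    ("GeForce GTX 690", "29/04/2012", 18.73),
    ("GeForce GTX 980", "18/09/2014", 30.19),
    ("GeForce GTX 1080", "26/05/2016", 49.29),
    ("TITAN XP", "06/04/2017", 48.60),
    ("GeForce RTX 2080", "20/09/2018", 46.84),
    ("GeForce RTX 2080 Ti", "20/09/2018", 53.80),
    ("Nvidia Titan RTX", "18/12/2018", 58.26),
    ("GeForce RTX 3090", "01/09/2020", 101.71),
]


def efficiency_records(rows) -> List[Tuple[str, str, float]]:
    """Hypothetical helper: entries that set a new best GFLOPS/Watt in launch order."""
    ordered = sorted(rows, key=lambda r: datetime.strptime(r[1], "%d/%m/%Y"))
    records: List[Tuple[str, str, float]] = []
    best = float("-inf")
    for gpu, launched, eff in ordered:
        if eff > best:
            records.append((gpu, launched, eff))
            best = eff
    return records


for gpu, launched, eff in efficiency_records(DESKTOP_FP32):
    print(f"{launched}  {gpu:20} {eff:7.2f}")
```

On these rows, the GTX 1080 Ti, TITAN XP, and RTX 2080 do not set new records. This is a reminder that a newer card is not automatically a more efficient one.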

### Interpretation

The data highlights the evolution of GPU architecture toward higher performance and efficiency, driven by lower-precision modes (FP16 and Tensor cores) and increased parallelism. Server GPUs (Tesla, A100, A30, T4) prioritize sustained computational power for AI/ML and HPC tasks, while consumer GPUs (GeForce) focus on gaming and general computing. The RTX 3090 and A100 represent benchmarks in the consumer and server segments, respectively, with the latter excelling in data-center efficiency at Tensor precision. The table underscores the importance of precision and architecture design in meeting diverse computational demands.
