## Line Chart: Scaling Laws for Language Model Loss vs. Computational Cost

### Overview

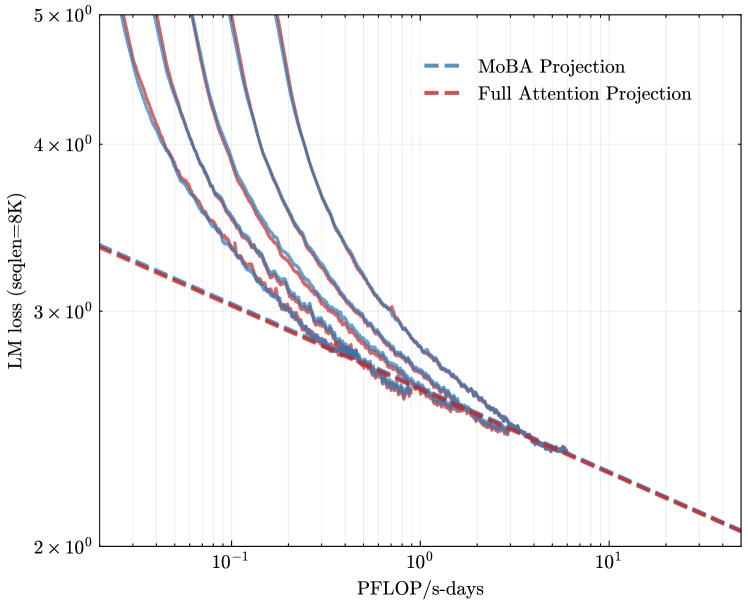

The image is a log-log plot of language model (LM) loss against computational cost, measured in PFLOP/s-days. It compares empirical scaling curves for several model configurations against two dashed projection lines: "MoBA Projection" and "Full Attention Projection." The near-straight lines on log-log axes reflect the power-law scaling behavior typical of neural language models.

### Components/Axes

* **Chart Type:** Line chart with logarithmic scales on both axes.

* **X-Axis:**

    * **Label:** `PFLOP/s-days`

    * **Scale:** Logarithmic, ranging from approximately `10^-1` (0.1) to `10^1` (10).

    * **Major Ticks:** `10^-1`, `10^0`, `10^1`.

* **Y-Axis:**

    * **Label:** `LM loss (seqlen=8K)`

    * **Scale:** Logarithmic, ranging from `2 x 10^0` (2) to `5 x 10^0` (5).

    * **Major Ticks:** `2 x 10^0`, `3 x 10^0`, `4 x 10^0`, `5 x 10^0`.

* **Legend:**

    * **Position:** Top-right corner of the plot area.

    * **Entries:**

        1. `MoBA Projection` - Represented by a blue dashed line (`--`).

        2. `Full Attention Projection` - Represented by a red dashed line (`--`).

* **Data Series:** Multiple solid lines in various colors (blue, red, purple, grey) representing empirical data from different model runs or configurations. These lines are not individually labeled in the legend.

### Detailed Analysis

* **Trend Verification:** All data series (both solid lines and dashed projections) slope downward from left to right: as computational cost (PFLOP/s-days) increases, the language model loss decreases.

* **Projection Lines:**

    * The **red dashed "Full Attention Projection"** line starts at a loss of approximately `3.3` at `0.1` PFLOP/s-days and slopes downward to a loss of approximately `2.1` at `10` PFLOP/s-days.

    * The **blue dashed "MoBA Projection"** line starts at a higher loss (off the top of the chart at `0.1` PFLOP/s-days) but has a steeper downward slope. It crosses the Full Attention projection at approximately `1.5` PFLOP/s-days and stays below it from there on, suggesting MoBA becomes more efficient at higher compute budgets.

* **Empirical Data Curves:**

    * The solid lines represent empirical training runs. They generally follow the power-law trend of the projections.

    * At lower compute values (`0.1 - 1` PFLOP/s-days), the data curves are more spread out vertically, indicating a wider range of loss values at similar compute.

    * As compute increases (`>1` PFLOP/s-days), the data curves converge and cluster tightly around the projection lines, particularly the MoBA projection.

    * The curves are not smooth; they exhibit small-scale fluctuations or "jitter," which is typical of training loss curves.
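Because a power law `loss = a * compute**b` is a straight line on log-log axes, the two endpoint readings of the Full Attention projection are enough to estimate its exponent. The sketch below does that arithmetic; the function name `power_law_from_two_points` is illustrative, and the inputs are the approximate values read off the chart above, so the result is only as accurate as those readings.

```python
import math

def power_law_from_two_points(c1, l1, c2, l2):
    """Return (a, b) such that loss = a * compute**b passes through both points."""
    # On log-log axes the exponent b is the slope between the two points.
    b = math.log(l2 / l1) / math.log(c2 / c1)
    # The prefactor a is the loss the line predicts at compute = 1.
    a = l1 / (c1 ** b)
    return a, b

# Approximate readings of the red dashed line (assumed, read by eye from the chart):
# loss ~3.3 at 0.1 PFLOP/s-days and loss ~2.1 at 10 PFLOP/s-days.
a_full, b_full = power_law_from_two_points(0.1, 3.3, 10.0, 2.1)
print(f"a = {a_full:.3f}, b = {b_full:.4f}")
```

With these readings the exponent comes out at roughly `-0.098`, i.e. each 10x increase in compute lowers the projected loss by about 20%.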

### Key Observations

1. **Power-Law Scaling:** The approximately linear relationship on a log-log plot is consistent with LM loss scaling as a power law in compute.

2. **Projection Divergence:** The MoBA and Full Attention projections diverge significantly at both low and high compute ends, with MoBA predicting worse performance at low compute but better performance at high compute.

3. **Data Convergence:** The empirical curves converge towards the projection lines as compute increases, suggesting that loss becomes more predictable from compute at larger scales.

4. **Efficiency Crossover:** The two projections cross at around `1.5` PFLOP/s-days. Beyond that point the MoBA projection predicts lower loss than the Full Attention projection for the same compute.
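The crossover between two power-law projections has a closed form: setting `a1 * C**b1 == a2 * C**b2` gives `C = (a2 / a1) ** (1 / (b1 - b2))`. The sketch below illustrates this under loud assumptions. The Full Attention parameters are derived from the approximate endpoint readings above, while the MoBA exponent is a purely hypothetical value (the chart does not state it), and the MoBA prefactor is constructed so that the lines cross at the visually estimated `1.5` PFLOP/s-days.

```python
import math

def crossover_compute(a1, b1, a2, b2):
    """Compute C where a1 * C**b1 equals a2 * C**b2."""
    if b1 == b2:
        raise ValueError("parallel power laws never cross")
    return (a2 / a1) ** (1.0 / (b1 - b2))

# Full Attention line from approximate chart readings (3.3 at 0.1, 2.1 at 10).
b_full = math.log(2.1 / 3.3) / math.log(10.0 / 0.1)
a_full = 3.3 / (0.1 ** b_full)

# HYPOTHETICAL MoBA exponent: only "steeper than Full Attention" is known.
b_moba = -0.11
# Prefactor chosen so the two lines meet at the estimated crossover of 1.5.
a_moba = a_full * 1.5 ** (b_full - b_moba)

c_star = crossover_compute(a_full, b_full, a_moba, b_moba)

def predicted_loss(a, b, compute):
    """Loss predicted by the power law at a given compute budget."""
    return a * compute ** b

# Below the crossover Full Attention is projected lower; above it, MoBA is.
low, high = 0.5, 5.0
full_better_at_low = predicted_loss(a_full, b_full, low) < predicted_loss(a_moba, b_moba, low)
moba_better_at_high = predicted_loss(a_moba, b_moba, high) < predicted_loss(a_full, b_full, high)
```

The example is circular by design, since the MoBA line is built to cross at `1.5`; its purpose is to show how the crossover follows from the two exponents and prefactors, not to recover the chart's actual fit.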

### Interpretation

This chart is a technical comparison of scaling efficiency between two attention mechanisms for language models: likely "Mixture of Block Attention" (MoBA) and standard "Full Attention".

* **What the data suggests:** The empirical curves are consistent with the projected scaling trends for both attention types. The steeper slope of the MoBA projection indicates a larger-magnitude scaling exponent: it achieves a greater relative reduction in loss per multiplicative increase in compute. However, its higher intercept suggests it may be less efficient at very small scales.

* **How elements relate:** The solid data lines serve as an empirical check on the dashed projections. Their convergence at high compute values lends credibility to the projections' predictive power for large-scale model training.

* **Notable implications:** The chart suggests that for very large-scale training runs (high PFLOP/s-days), the MoBA architecture could be more advantageous, yielding lower loss for the same computational budget compared to Full Attention. The crossover point is a useful reference for practitioners deciding which architecture to invest in for a given compute budget. The tight clustering of data at the high-compute end suggests that scaling behavior is robust and predictable in this regime, although the advantage beyond the plotted range remains an extrapolation.
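One way to check how well a set of (compute, loss) measurements follows a power law is an ordinary least-squares line fit in log-log space. The sketch below uses only the standard library; the function name `fit_power_law` is illustrative, and the input points are synthetic, generated from a known power law rather than read from the chart.

```python
import math

def fit_power_law(compute, loss):
    """Least-squares fit of loss = a * compute**b in log-log space."""
    xs = [math.log(c) for c in compute]
    ys = [math.log(l) for l in loss]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Slope of the regression line is the power-law exponent b.
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    b = sxy / sxx
    # Intercept of the regression line is log(a).
    a = math.exp(mean_y - b * mean_x)
    return a, b

# SYNTHETIC, noise-free data from an assumed power law (not chart data).
true_a, true_b = 2.6, -0.1
compute = [0.1, 0.3, 1.0, 3.0, 10.0]
loss = [true_a * c ** true_b for c in compute]

a_hat, b_hat = fit_power_law(compute, loss)
print(f"fitted a = {a_hat:.4f}, fitted b = {b_hat:.4f}")
```

On real training curves the fit would normally be restricted to the final or envelope points of each run, since the jitter and early-training portions of a loss curve do not lie on the scaling line.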