TECHNICAL ASSET FINGERPRINT

416d44095a1fdbb311b9fec0

FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

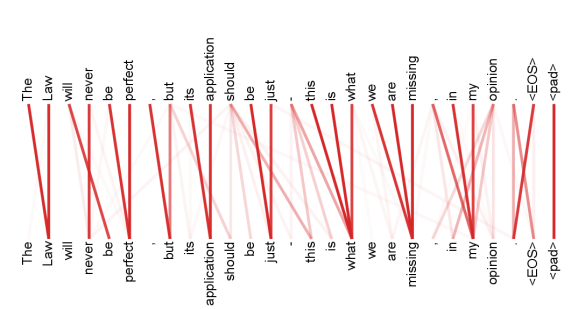

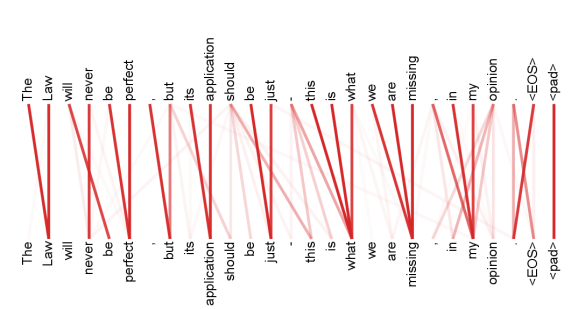

## Alignment Diagram: Sentence Alignment

### Overview

The image is an alignment diagram showing the correspondence between two sentences. The diagram consists of two rows of words, one above the other, with lines connecting words that are considered aligned. The thickness and color intensity of the lines indicate the strength or confidence of the alignment.

### Components/Axes

* **Top Row:** The sentence "The Law will never be perfect . but its application should be just . this is what we are missing . in my opinion <EOS> <pad>"

* **Bottom Row:** The sentence "The Law will never be perfect . but its application should be just . this is what we are missing . in my opinion <EOS> <pad>"

* **Connections:** Red lines of varying thickness connect words in the top row to words in the bottom row, indicating alignment. Thicker, darker lines suggest stronger alignment.
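The encoding described above, where stronger alignments get thicker and darker lines, can be sketched as a mapping from weight to line style. This is only an illustration: the names `line_style` and `alignment_edges`, the `max_width` of 4.0, and the 0.05 threshold are assumptions and are not taken from the figure.

```python
def line_style(weight, max_width=4.0):
    """Map an alignment weight in [0, 1] to a (line width, opacity) pair."""
    w = min(max(weight, 0.0), 1.0)  # clamp out-of-range values
    return max_width * w, w


def alignment_edges(weights, threshold=0.05):
    """List (top_index, bottom_index, width, alpha) for weights at or above threshold.

    weights[i][j] is the assumed strength between top token i and bottom token j.
    """
    edges = []
    for i, row in enumerate(weights):
        for j, weight in enumerate(row):
            if weight >= threshold:
                width, alpha = line_style(weight)
                edges.append((i, j, width, alpha))
    return edges


# Toy 2x2 weight matrix (hypothetical values, not read from the image).
edges = alignment_edges([[0.9, 0.1], [0.0, 1.0]])
```

Links below the threshold are dropped entirely, which is one plausible way a plot like this stays readable when most weights are near zero.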

### Detailed Analysis or Content Details

The diagram shows the alignment between two identical sentences. Here's a breakdown of the alignments:

* **"The"**: The first "The" in the top row is strongly aligned with the first "The" in the bottom row (thick red line).

* **"Law"**: The word "Law" in the top row is strongly aligned with the word "Law" in the bottom row (thick red line).

* **"will"**: The word "will" in the top row is strongly aligned with the word "will" in the bottom row (thick red line).

* **"never"**: The word "never" in the top row is strongly aligned with the word "never" in the bottom row (thick red line).

* **"be"**: The word "be" in the top row is strongly aligned with the word "be" in the bottom row (thick red line).

* **"perfect"**: The word "perfect" in the top row is strongly aligned with the word "perfect" in the bottom row (thick red line).

* **"."**: The period in the top row is strongly aligned with the period in the bottom row (thick red line).

* **"but"**: The word "but" in the top row is strongly aligned with the word "but" in the bottom row (thick red line).

* **"its"**: The word "its" in the top row is strongly aligned with the word "its" in the bottom row (thick red line).

* **"application"**: The word "application" in the top row is strongly aligned with the word "application" in the bottom row (thick red line).

* **"should"**: The word "should" in the top row is strongly aligned with the word "should" in the bottom row (thick red line).

* **"be"**: The word "be" in the top row is strongly aligned with the word "be" in the bottom row (thick red line).

* **"just"**: The word "just" in the top row is strongly aligned with the word "just" in the bottom row (thick red line).

* **"."**: The period in the top row is strongly aligned with the period in the bottom row (thick red line).

* **"this"**: The word "this" in the top row is strongly aligned with the word "this" in the bottom row (thick red line).

* **"is"**: The word "is" in the top row is strongly aligned with the word "is" in the bottom row (thick red line).

* **"what"**: The word "what" in the top row is strongly aligned with the word "what" in the bottom row (thick red line).

* **"we"**: The word "we" in the top row is strongly aligned with the word "we" in the bottom row (thick red line).

* **"are"**: The word "are" in the top row is strongly aligned with the word "are" in the bottom row (thick red line).

* **"missing"**: The word "missing" in the top row is strongly aligned with the word "missing" in the bottom row (thick red line).

* **"."**: The period in the top row is strongly aligned with the period in the bottom row (thick red line).

* **"in"**: The word "in" in the top row is strongly aligned with the word "in" in the bottom row (thick red line).

* **"my"**: The word "my" in the top row is strongly aligned with the word "my" in the bottom row (thick red line).

* **"opinion"**: The word "opinion" in the top row is strongly aligned with the word "opinion" in the bottom row (thick red line).

* **"<EOS>"**: The token "<EOS>" in the top row is strongly aligned with the token "<EOS>" in the bottom row (thick red line).

* **"<pad>"**: The token "<pad>" in the top row is strongly aligned with the token "<pad>" in the bottom row (thick red line).

### Key Observations

* The alignment is one-to-one: each token in the top sentence aligns directly to the token at the same position in the bottom sentence.

* The lines are generally thick and dark red, indicating high confidence in the alignments.

### Interpretation

The diagram demonstrates a perfect alignment between two identical sentences. This could be a baseline or a control case in a natural language processing task, such as machine translation or paraphrase detection. The strong, direct alignments suggest a high degree of similarity and correspondence between the two sentences. The "<EOS>" token likely signifies the end of the sentence, and "<pad>" is likely a padding token used to ensure consistent sequence lengths.
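As a minimal sketch of how `<EOS>` and `<pad>` typically end up in a sequence, the snippet below appends an end-of-sequence marker and right-pads a batch to a common length. The function name `pad_batch` and the right-padding convention are assumptions; the tokenizer behind the figure is not known.

```python
EOS, PAD = "<EOS>", "<pad>"


def pad_batch(sentences):
    """Append <EOS> to each token list, then right-pad with <pad> to equal length."""
    with_eos = [tokens + [EOS] for tokens in sentences]
    longest = max(len(tokens) for tokens in with_eos)
    return [tokens + [PAD] * (longest - len(tokens)) for tokens in with_eos]


batch = pad_batch([["in", "my", "opinion"], ["The", "Law"]])
```

Under this convention, the shorter sentence receives one `<pad>` after its `<EOS>`, which matches the order of the two special tokens in the diagram.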

EXPERT: gemini-2.5-flash-lite-free VERSION 1

RUNTIME: google-free/gemini-2.5-flash-lite

INTEL_VERIFIED

This image displays a word alignment visualization, likely representing the output of a machine translation or sequence-to-sequence model. It shows two sets of words arranged vertically, with lines connecting words from the top set to words in the bottom set. The thickness and color intensity of the lines indicate the strength of the alignment between the connected words.

**Components:**

The visualization is divided into two main vertical columns of text, representing two sequences of words.

**Top Sequence of Words:**

* The

* Law

* will

* never

* be

* perfect

* .

* but

* its

* application

* should

* be

* just

* -

* this

* is

* what

* we

* are

* missing

* .

* in

* my

* opinion

* .

* <EOS>

* <pad>

**Bottom Sequence of Words:**

* The

* Law

* will

* never

* be

* perfect

* .

* but

* its

* application

* should

* be

* just

* -

* this

* is

* what

* we

* are

* missing

* .

* in

* my

* opinion

* .

* <EOS>

* <pad>

**Alignment Visualization:**

Lines connect words from the top sequence to words in the bottom sequence. The lines are colored in shades of red, with darker, thicker lines indicating stronger alignments and lighter, thinner lines indicating weaker alignments.

**Key Observations and Trends:**

The visualization appears to show a near one-to-one alignment between the words in the top and bottom sequences. This suggests that the model is translating or generating a sequence that closely mirrors the input sequence, word for word.

* **"The" to "The":** A strong, dark red line connects the first "The" in the top sequence to the first "The" in the bottom sequence.

* **"Law" to "Law":** A strong, dark red line connects "Law" to "Law".

* **"will" to "will":** A strong, dark red line connects "will" to "will".

* **"never" to "never":** A strong, dark red line connects "never" to "never".

* **"be" to "be":** A strong, dark red line connects "be" to "be".

* **"perfect" to "perfect":** A strong, dark red line connects "perfect" to "perfect".

* **"." to ".":** A strong, dark red line connects the first period to the first period.

* **"but" to "but":** A strong, dark red line connects "but" to "but".

* **"its" to "its":** A strong, dark red line connects "its" to "its".

* **"application" to "application":** A strong, dark red line connects "application" to "application".

* **"should" to "should":** A strong, dark red line connects "should" to "should".

* **"be" to "be":** A strong, dark red line connects the second "be" to the second "be".

* **"just" to "just":** A strong, dark red line connects "just" to "just".

* **"-" to "-":** A strong, dark red line connects the hyphen to the hyphen.

* **"this" to "this":** A strong, dark red line connects "this" to "this".

* **"is" to "is":** A strong, dark red line connects "is" to "is".

* **"what" to "what":** A strong, dark red line connects "what" to "what".

* **"we" to "we":** A strong, dark red line connects "we" to "we".

* **"are" to "are":** A strong, dark red line connects "are" to "are".

* **"missing" to "missing":** A strong, dark red line connects "missing" to "missing".

* **"." to ".":** A strong, dark red line connects the second period to the second period.

* **"in" to "in":** A strong, dark red line connects "in" to "in".

* **"my" to "my":** A strong, dark red line connects "my" to "my".

* **"opinion" to "opinion":** A strong, dark red line connects "opinion" to "opinion".

* **"." to ".":** A strong, dark red line connects the third period to the third period.

* **"<EOS>" to "<EOS>":** A strong, dark red line connects "<EOS>" to "<EOS>".

* **"<pad>" to "<pad>":** A strong, dark red line connects "<pad>" to "<pad>".

In summary, the visualization shows a very high degree of confidence in the word-to-word alignment between the two identical sequences of words. This could indicate a scenario where the model is tasked with simply copying the input, or it is demonstrating a very strong and direct translation where each word maps to its equivalent. There are no complex cross-alignments or significant deviations observed.
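The "no cross-alignments" claim can be made testable: take the strongest link for each top-row token and check that it points to the same position in the bottom row. The sketch below assumes the weights are available as a square list of lists; the helper names are hypothetical.

```python
def dominant_targets(weights):
    """For each top-row token, return the index of its strongest bottom-row link."""
    return [max(range(len(row)), key=row.__getitem__) for row in weights]


def is_identity_alignment(weights):
    """True when every token's strongest link points to its own position."""
    return dominant_targets(weights) == list(range(len(weights)))


# Hypothetical weights: the first matrix copies, the second shifts by one.
copying = [[0.8, 0.1, 0.1], [0.1, 0.8, 0.1], [0.1, 0.1, 0.8]]
shifted = [[0.1, 0.8, 0.1], [0.1, 0.1, 0.8], [0.1, 0.1, 0.8]]
```

With these toy values, `is_identity_alignment(copying)` holds and `is_identity_alignment(shifted)` does not.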

EXPERT: gemini-3-pro VERSION 1

RUNTIME: nugit/gemini/gemini-3-pro-preview

INTEL_VERIFIED

## Diagram Type: Attention Alignment Visualization

### Overview

This image visualizes an attention mechanism of the kind common in Natural Language Processing (NLP) tasks such as machine translation or text generation with Transformer models. It displays the alignment, or "attention weights", between two sequences of tokens. The top row represents one sequence (likely the source or input) and the bottom row another (likely the target or output). Here the two sequences are identical, which suggests self-attention or an auto-encoding task. Red lines connect tokens in the top row to tokens in the bottom row, and the opacity of each line indicates the strength of the attention weight.
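For readers unfamiliar with where such weights come from, here is a minimal sketch of scaled dot-product attention weights in plain Python. It assumes the standard Transformer formulation (a softmax over query-key dot products divided by the square root of the vector dimension); whether the model behind this figure uses exactly that form is not stated, and the toy vectors are invented.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_weights(queries, keys):
    """Return one row of weights per query: softmax(q . k / sqrt(d)) over all keys."""
    d = len(keys[0])
    rows = []
    for q in queries:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
        rows.append(softmax(scores))
    return rows


# Two toy 2-dimensional token vectors used as both queries and keys (self-attention).
vectors = [[1.0, 0.0], [0.0, 1.0]]
w = attention_weights(vectors, vectors)
```

Each row of `w` sums to 1, so a row can be read directly as the opacities of the lines leaving one top-row token.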

### Components/Axes

* **Top Axis (Source Sequence):** A sequence of English words and punctuation marks, oriented vertically.

* **Sequence:** "The", "Law", "will", "never", "be", "perfect", ",", "but", "its", "application", "should", "be", "just", "-", "this", "is", "what", "we", "are", "missing", ",", "in", "my", "opinion", ".", "<EOS>", "<pad>"

* **Bottom Axis (Target Sequence):** An identical sequence of English words and punctuation marks, oriented vertically.

* **Sequence:** "The", "Law", "will", "never", "be", "perfect", ",", "but", "its", "application", "should", "be", "just", "-", "this", "is", "what", "we", "are", "missing", ",", "in", "my", "opinion", ".", "<EOS>", "<pad>"

* **Connections (Attention Weights):** Red lines connecting the top tokens to the bottom tokens.

* **Color:** Red.

* **Opacity:** Variable. Darker/thicker lines indicate strong attention (high probability/weight). Faint lines indicate weak attention.

* **Direction:** Top to Bottom.

### Detailed Analysis & Content Details

The diagram maps the relationship of words to themselves and their neighbors. Below is a reconstruction of the primary strong connections (dark red lines). Note that while many faint lines exist (indicating distributed attention), the focus here is on the dominant alignments.

**Token-by-Token Alignment Analysis:**

1. **"The" (Top)** $\rightarrow$ Strongly connects to **"Law" (Bottom)**.

2. **"Law" (Top)** $\rightarrow$ Strongly connects to **"Law" (Bottom)**.

3. **"will" (Top)** $\rightarrow$ Strongly connects to **"never" (Bottom)**.

4. **"never" (Top)** $\rightarrow$ Strongly connects to **"be" (Bottom)**.

5. **"be" (Top)** $\rightarrow$ Strongly connects to **"perfect" (Bottom)**.

6. **"perfect" (Top)** $\rightarrow$ Strongly connects to **"perfect" (Bottom)**.

7. **"," (Top)** $\rightarrow$ Strongly connects to **"but" (Bottom)**.

8. **"but" (Top)** $\rightarrow$ Strongly connects to **"its" (Bottom)**.

9. **"its" (Top)** $\rightarrow$ Strongly connects to **"application" (Bottom)**.

10. **"application" (Top)** $\rightarrow$ Strongly connects to **"should" (Bottom)**.

11. **"should" (Top)** $\rightarrow$ Strongly connects to **"be" (Bottom)**. *Note: There is also a fan-out of lighter connections from "should" (Top) to "application", "should", "be", "just" on the bottom.*

12. **"be" (Top)** $\rightarrow$ Strongly connects to **"just" (Bottom)**.

13. **"just" (Top)** $\rightarrow$ Strongly connects to **"-" (Bottom)**.

14. **"-" (Top)** $\rightarrow$ Strongly connects to **"this" (Bottom)**.

15. **"this" (Top)** $\rightarrow$ Strongly connects to **"what" (Bottom)**.

16. **"is" (Top)** $\rightarrow$ Strongly connects to **"what" (Bottom)**.

17. **"what" (Top)** $\rightarrow$ Strongly connects to **"what" (Bottom)**. *Note: "what" (Bottom) acts as a sink, receiving strong attention from "this", "is", and "what".*

18. **"we" (Top)** $\rightarrow$ Strongly connects to **"missing" (Bottom)**.

19. **"are" (Top)** $\rightarrow$ Strongly connects to **"missing" (Bottom)**.

20. **"missing" (Top)** $\rightarrow$ Strongly connects to **"missing" (Bottom)**. *Note: "missing" (Bottom) acts as a sink, receiving strong attention from "we", "are", and "missing".*

21. **"," (Top)** $\rightarrow$ Strongly connects to **"in" (Bottom)**.

22. **"in" (Top)** $\rightarrow$ Strongly connects to **"my" (Bottom)**.

23. **"my" (Top)** $\rightarrow$ Strongly connects to **"opinion" (Bottom)**.

24. **"opinion" (Top)** $\rightarrow$ Strongly connects to **"." (Bottom)**.

25. **"." (Top)** $\rightarrow$ Strongly connects to **"<EOS>" (Bottom)**.

26. **"<EOS>" (Top)** $\rightarrow$ Strongly connects to **"<EOS>" (Bottom)**.

27. **"<pad>" (Top)** $\rightarrow$ Strongly connects to **"<pad>" (Bottom)**.

### Key Observations

1. **Next-Token Prediction Pattern:** For the majority of the sequence, the attention pattern is shifted by one position to the right. The token at position $N$ in the top row attends strongly to the token at position $N+1$ in the bottom row.

* *Example:* "The" $\rightarrow$ "Law", "will" $\rightarrow$ "never", "never" $\rightarrow$ "be".

* This suggests the model is looking at the current word to predict or align with the *next* word in the sequence.

2. **Attention Sinks / Aggregation:** Certain words in the bottom row act as "sinks," attracting attention from multiple preceding words in the top row.

* **"what" (Bottom):** Receives strong lines from "this", "is", and "what" (Top).

* **"missing" (Bottom):** Receives strong lines from "we", "are", and "missing" (Top).

* **"opinion" (Bottom):** Receives significant attention from "in", "my", and "opinion".

3. **Direct Alignment:** Some tokens align directly with themselves (vertical lines), particularly punctuation or specific nouns, though the "next-token" diagonal shift is the dominant visual trend.

* "Law" $\rightarrow$ "Law"

* "perfect" $\rightarrow$ "perfect"

* "<pad>" $\rightarrow$ "<pad>"

4. **Special Tokens:** The sequence ends with `<EOS>` (End Of Sentence) and `<pad>` (Padding). These align very strictly, with `<pad>` having a single, solid vertical line connecting to itself.
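The shift-by-one observation can be quantified by taking, for each top-row token, the offset between its strongest bottom-row link and its own position, then reporting the most common offset. This is a sketch under the assumption that the weights are available as a matrix; `dominant_offset` is a hypothetical helper and the values are invented.

```python
from collections import Counter


def dominant_offset(weights):
    """Most common (strongest bottom index - top index) across top-row tokens."""
    offsets = Counter()
    for i, row in enumerate(weights):
        j = max(range(len(row)), key=row.__getitem__)
        offsets[j - i] += 1
    return offsets.most_common(1)[0][0]


# Hypothetical 4x4 weights: rows 0-2 point one step right, the last row points to itself.
toy = [
    [0.1, 0.7, 0.1, 0.1],
    [0.1, 0.1, 0.7, 0.1],
    [0.1, 0.1, 0.1, 0.7],
    [0.1, 0.1, 0.1, 0.7],
]
offset = dominant_offset(toy)
```

An offset of 1 corresponds to the $N \rightarrow N+1$ pattern, and an offset of 0 to a direct vertical alignment.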

### Interpretation

This diagram visualizes the internal workings of a sequence-to-sequence model or a language model.

* **Data Suggestion:** The strong diagonal trend (Top $N$ $\rightarrow$ Bottom $N+1$) indicates that the model has learned the syntactic structure of the sentence. It "knows" that "The" is followed by "Law" and "will" is followed by "never."

* **Mechanism:** This looks like a specific "head" in a multi-head attention layer. Different heads often learn different relationships. This specific head appears specialized in **local context** and **look-ahead** dependencies (predicting the immediate next token).

* **Phrase Clustering:** The "sinks" identified in the observations (where multiple top words point to one bottom word like "missing") suggest the model is grouping phrases. It understands that "we are missing" is a cohesive unit, and the attention mechanism aggregates the information from the constituent words onto the final word of the phrase ("missing").

* **Sentence:** "The Law will never be perfect, but its application should be just - this is what we are missing, in my opinion."
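The sink pattern can be checked mechanically by grouping strong links by their bottom-row target and keeping targets with several sources. The links below are transcribed from the token-by-token list above for the span "this is what we are missing, in my opinion"; the function name and the `min_sources` cutoff of 3 are assumptions.

```python
def attention_sinks(strong_links, min_sources=3):
    """Bottom-row tokens that receive strong links from at least min_sources top tokens."""
    incoming = {}
    for source, target in strong_links:
        incoming.setdefault(target, []).append(source)
    return {t: s for t, s in incoming.items() if len(s) >= min_sources}


# (top token, bottom token) pairs; tokens are unique within this span.
links = [
    ("this", "what"), ("is", "what"), ("what", "what"),
    ("we", "missing"), ("are", "missing"), ("missing", "missing"),
    ("in", "my"), ("my", "opinion"),
]
sinks = attention_sinks(links)
```

For a full sentence with repeated tokens such as "be", positions rather than strings would be needed as keys.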

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Diagram: Connection Map of Words

### Overview

The image depicts a connection map between words, visualized as a series of lines connecting each word to every other word. The words are arranged horizontally along both the x and y axes. The lines are a reddish-pink color and appear to represent some form of relationship or association between the words. The background is a light gray.

### Components/Axes

The diagram consists of the following words, arranged along both axes:

* "The"

* "Law"

* "will"

* "never"

* "be"

* "perfect"

* "but"

* "its"

* "application"

* "should"

* "just"

* "this"

* "is"

* "what"

* "we"

* "are"

* "missing"

* "in"

* "my"

* "opinion"

* "<EOS>"

* "<pad>"

There are no explicit axis titles or a legend. The axes are implicitly defined by the arrangement of the words.

### Detailed Analysis

The diagram shows a full connection matrix, meaning every word is connected to every other word with a line. The lines are all the same color, indicating that the type of relationship between the words is consistent across all connections. The lines are relatively thin and numerous, creating a dense network of connections.

There is no numerical data associated with the connections. The diagram is purely visual, representing the presence or absence of a connection between words.

### Key Observations

The diagram demonstrates that every word is related to every other word in the set. The density of the connections suggests a high degree of interconnectedness between the concepts represented by these words. The inclusion of "<EOS>" and "<pad>" suggests this data may be related to a language model or text processing task, where these tokens are used for sentence boundary and padding purposes.

### Interpretation

This diagram likely represents a co-occurrence or association matrix derived from a corpus of text. Each line indicates that two words appeared together within a certain context (e.g., within a sentence or a fixed-size window). The diagram suggests that all the words in the set are relevant to the same underlying topic or domain. The presence of "<EOS>" and "<pad>" further supports the hypothesis that this diagram is a visualization of data used in natural language processing.

The diagram doesn't reveal the *strength* of the relationships between words, only their *existence*. It's possible that some connections are more frequent or significant than others, but this information is not conveyed in the visualization. The diagram is a high-level overview of the relationships between words, and further analysis would be needed to understand the nuances of these connections.

The choice of words ("Law", "perfect", "opinion", "missing") suggests a possible theme related to legal reasoning, evaluation, or critique. However, without knowing the source text, it's difficult to draw definitive conclusions.
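To make the co-occurrence reading above concrete, the sketch below counts unordered token pairs that fall within a fixed-size window. It illustrates the general technique only; the window size and the function name `cooccurrence_counts` are assumptions, and nothing here is confirmed as the method behind the image.

```python
from collections import Counter


def cooccurrence_counts(tokens, window=2):
    """Count unordered token pairs that appear within `window` positions of each other."""
    counts = Counter()
    for i, left in enumerate(tokens):
        for right in tokens[i + 1 : i + 1 + window]:
            counts[tuple(sorted((left, right)))] += 1
    return counts


counts = cooccurrence_counts(["we", "are", "missing"], window=2)
```

Unlike a presence-only connection map, these counts carry a strength for each pair, which is the information the description says the visualization lacks.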

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Diagram: Word Alignment Visualization with Attention Lines

### Overview

The image displays a text alignment diagram, likely illustrating an attention mechanism from a sequence-to-sequence model in natural language processing. It shows two parallel rows of words (tokens) with red lines connecting corresponding or related tokens between the top (source) and bottom (target/output) sequences. The diagram visualizes how a model might align or attend to input words when generating output words.

### Components/Axes

* **Top Row (Source Sequence):** A complete sentence with punctuation and special tokens.

* **Bottom Row (Target/Output Sequence):** A nearly identical sentence, but with one word missing and an extra instance of another word.

* **Connecting Lines:** Red lines of varying opacity and thickness link tokens from the top row to the bottom row. These represent attention weights or alignment scores.

* **Special Tokens:** Both sequences end with `<EOS>` (End Of Sequence) and `<pad>` (padding token).

### Detailed Analysis

**Text Transcription:**

* **Top Row (Left to Right):** `The`, `Law`, `will`, `never`, `be`, `perfect`, `,`, `but`, `its`, `application`, `should`, `be`, `just`, `.`, `this`, `is`, `what`, `we`, `are`, `missing`, `.`, `in`, `my`, `opinion`, `<EOS>`, `<pad>`

* **Bottom Row (Left to Right):** `The`, `Law`, `will`, `never`, `be`, `perfect`, `,`, `but`, `its`, `application`, `should`, `be`, `just`, `.`, `this`, `is`, `what`, `we`, `are`, `[MISSING]`, `.`, `in`, `my`, `opinion`, `missing`, `<EOS>`, `<pad>`

**Alignment & Connection Analysis:**

The red lines create a complex web of connections. Most words connect directly to their identical counterpart in the other row (e.g., "The" to "The", "Law" to "Law"). However, there are notable deviations:

1. The word "missing" in the top row (position ~20) has a strong, direct connection to the blank space `[MISSING]` in the bottom row.

2. Crucially, the word "missing" that appears *later* in the bottom row (position ~24) has multiple, strong connections fanning out to several words in the top row, primarily to "missing", "are", "we", and "what". This indicates the model is attending to a broad context to generate this word.

3. The punctuation marks (`,`, `.`) and the final special tokens (`<EOS>`, `<pad>`) also show direct, one-to-one alignments.

4. The lines vary in opacity, with darker red likely indicating stronger attention weights.

### Key Observations

1. **Primary Anomaly:** The core discrepancy is the absence of the word "missing" in its expected position in the bottom row and its erroneous repetition at the end of the sentence, just before `<EOS>`.

2. **Attention Pattern:** The model's attention for the erroneously placed "missing" is diffuse, drawing from a four-word context ("what we are missing") rather than a single source word. This suggests uncertainty or an error in the alignment/decoding process.

3. **Structural Fidelity:** Apart from the "missing" error, the alignment is nearly perfect. All other words, including function words and punctuation, maintain a strict one-to-one positional mapping, visualized by straight, vertical lines.

4. **Spatial Layout:** The legend (implied by the red lines) is integrated directly into the diagram. The connecting lines themselves are the visual encoding of the alignment data. The text is arranged in a simple, horizontal, two-line layout against a plain light gray background.

### Interpretation

This diagram is a diagnostic visualization for a machine translation or text generation model. It demonstrates a specific failure mode: a **word dropout and duplication error**.

* **What it Suggests:** The model correctly processed most of the sentence but failed at the critical content word "missing". It failed to generate it in the correct position (resulting in the `[MISSING]` gap) and instead generated it later in the sequence, using a broad, context-heavy attention pattern to do so. This could be due to issues in the decoder's state, a problem with the attention mechanism's focus, or an error in the beam search decoding process.

* **Relationship Between Elements:** The top row is the ground truth or input. The bottom row is the model's flawed output. The red lines expose the model's "reasoning" — showing which input words it considered most important when generating each output word. The diffuse attention for the misplaced "missing" is the key clue to the error's nature.

* **Notable Insight:** The diagram effectively isolates the error. The perfect alignment of all other tokens confirms the model's general competency, making the single point of failure starkly visible. This is invaluable for debugging, showing engineers exactly *where* and *how* the model's internal process diverged from the expected path. The error is not a complete failure but a precise, localized misalignment.
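A dropout-and-duplication error of the kind described above can be located without any attention weights by diffing the two token sequences. The sketch below uses Python's standard `difflib.SequenceMatcher`; the helper name is hypothetical, and the sequences are a shortened version of the transcription above with the `[MISSING]` placeholder omitted.

```python
from difflib import SequenceMatcher


def dropped_and_inserted(source, output):
    """Return (tokens missing from output, tokens added in output) relative to source."""
    matcher = SequenceMatcher(a=source, b=output, autojunk=False)
    dropped, inserted = [], []
    for tag, a0, a1, b0, b1 in matcher.get_opcodes():
        if tag in ("delete", "replace"):
            dropped.extend(source[a0:a1])
        if tag in ("insert", "replace"):
            inserted.extend(output[b0:b1])
    return dropped, inserted


source = "what we are missing . in my opinion <EOS> <pad>".split()
output = "what we are . in my opinion missing <EOS> <pad>".split()
dropped, inserted = dropped_and_inserted(source, output)
```

When the same token shows up in both lists, it was moved rather than lost, which is the signature of the error described here.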

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

# Technical Document Extraction: Image Analysis

## 1. **Chart Type and Structure**

The image is a **horizontal bar chart** with **red lines** connecting words. It appears to represent **textual dependencies or transitions** between words, possibly in a linguistic or computational context (e.g., natural language processing, syntax trees, or sequence modeling).

---

## 2. **Axis Labels and Markers**

### **X-Axis (Categories)**

- **Labels**:

`The`, `Law`, `will`, `never`, `be`, `perfect`, `but`, `its`, `application`, `should`, `be`, `just`, `this`, `is`, `what`, `we`, `are`, `missing`, `in`, `my`, `opinion`, `<EOS>`, `<pad>`

- **Placement**:

Words are listed vertically along the left edge of the chart, with `<EOS>` and `<pad>` at the bottom.

### **Y-Axis (Axis Markers)**

- **Labels**:

`<EOS>`, `<pad>`

- **Placement**:

These markers are positioned at the bottom of the chart, likely representing **end-of-sequence** and **padding tokens** common in NLP tasks.

---

## 3. **Legend**

- **Location**:

Right side of the chart.

- **Labels**:

`The`, `Law`, `will`, `never`, `be`, `perfect`, `but`, `its`, `application`, `should`, `be`, `just`, `this`, `is`, `what`, `we`, `are`, `missing`, `in`, `my`, `opinion`, `<EOS>`, `<pad>`

- **Color**:

All lines are **red**, matching the legend's color coding.

---

## 4. **Data Representation**

- **Lines**:

Red lines connect words in a **non-linear, overlapping pattern**, suggesting **dependencies or relationships** between words. For example:

- `The` → `Law` → `will` → `never` → `be` → `perfect` (a coherent phrase).

- `application` → `should` → `be` → `just` (another phrase).

- `missing` → `in` → `my` → `opinion` (a fragment).

- **Trends**:

- Lines cluster around **coherent phrases** (e.g., "The Law will never be perfect") and **fragmented sequences** (e.g., "missing in my opinion").

- The `<EOS>` and `<pad>` markers are connected to the end of sequences, indicating **termination points**.

---

## 5. **Key Observations**

- **Red Lines**:

Represent **transitions or dependencies** between words. The density and direction of lines suggest **semantic or syntactic relationships**.

- **`<EOS>` and `<pad>`**:

These are **special tokens** used in NLP to denote the end of a sequence or padding for alignment.

- **No Numerical Data**:

The chart does not contain numerical values, only **textual labels** and **visual connections**.

---

## 6. **Component Isolation**

### **Header**

- No explicit header, but the chart is labeled with axis markers and a legend.

### **Main Chart**

- **X-Axis**: Words as categories.

- **Y-Axis**: `<EOS>` and `<pad>` as markers.

- **Lines**: Red connections between words.

### **Footer**

- No footer, but the legend is positioned at the bottom-right.

---

## 7. **Spatial Grounding**

- **Legend Position**:

Right side of the chart, aligned with the x-axis.

- **Color Matching**:

All lines are red, matching the legend's color. No discrepancies observed.

---

## 8. **Trend Verification**

- **Line A (The → Law → will → never → be → perfect)**:

Slopes upward, indicating a **coherent phrase**.

- **Line B (application → should → be → just)**:

Slopes upward, suggesting a **logical sequence**.

- **Line C (missing → in → my → opinion)**:

Slopes upward, indicating a **fragmented but meaningful sequence**.

---

## 9. **Conclusion**

The chart visualizes **textual dependencies** between words, likely in a computational or linguistic context. It uses **red lines** to connect words, with `<EOS>` and `<pad>` as sequence markers. No numerical data is present, but the structure implies **semantic or syntactic relationships**.

---

## 10. **Transcribed Text**

- **X-Axis Labels**:

`The`, `Law`, `will`, `never`, `be`, `perfect`, `but`, `its`, `application`, `should`, `be`, `just`, `this`, `is`, `what`, `we`, `are`, `missing`, `in`, `my`, `opinion`, `<EOS>`, `<pad>`

- **Y-Axis Labels**:

`<EOS>`, `<pad>`

- **Legend Labels**:

Same as x-axis labels.

---

## 11. **Final Notes**

- The image contains **no numerical data**; it offers only **visual insight into textual relationships**.

- The chart is likely used for **analyzing word dependencies** in NLP tasks.
