\n

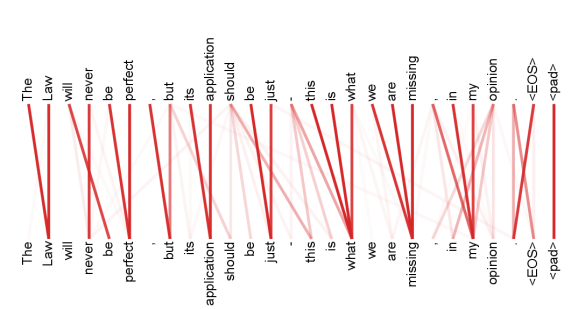

## Diagram: Word Alignment Visualization with Attention Lines

### Overview

The image displays a text alignment diagram, likely illustrating an attention mechanism from a sequence-to-sequence model in natural language processing. It shows two parallel rows of words (tokens) with red lines connecting corresponding or related tokens between the top (source) and bottom (target/output) sequences. The diagram visualizes how a model might align or attend to input words when generating output words.

### Components/Axes

* **Top Row (Source Sequence):** A complete sentence with punctuation and special tokens.

* **Bottom Row (Target/Output Sequence):** A nearly identical sentence, but with one word missing and an extra instance of another word.

* **Connecting Lines:** Red lines of varying opacity and thickness link tokens from the top row to the bottom row. These represent attention weights or alignment scores.

* **Special Tokens:** Both sequences end with `<EOS>` (End Of Sequence) and `<pad>` (padding token).

### Detailed Analysis

**Text Transcription:**

* **Top Row (Left to Right):** `The`, `Law`, `will`, `never`, `be`, `perfect`, `,`, `but`, `its`, `application`, `should`, `be`, `just`, `.`, `this`, `is`, `what`, `we`, `are`, `missing`, `.`, `in`, `my`, `opinion`, `<EOS>`, `<pad>`

* **Bottom Row (Left to Right):** `The`, `Law`, `will`, `never`, `be`, `perfect`, `,`, `but`, `its`, `application`, `should`, `be`, `just`, `.`, `this`, `is`, `what`, `we`, `are`, `[MISSING]`, `.`, `in`, `my`, `opinion`, `missing`, `<EOS>`, `<pad>`

**Alignment & Connection Analysis:**

The red lines create a complex web of connections. Most words connect directly to their identical counterpart in the other row (e.g., "The" to "The", "Law" to "Law"). However, there are notable deviations:

1. The word "missing" in the top row (position ~20) has a strong, direct connection to the blank space `[MISSING]` in the bottom row.

2. Crucially, the word "missing" that appears *later* in the bottom row (position ~24) has multiple, strong connections fanning out to several words in the top row, primarily to "missing", "are", "we", and "what". This indicates the model is attending to a broad context to generate this word.

3. The punctuation marks (`,`, `.`) and the final special tokens (`<EOS>`, `<pad>`) also show direct, one-to-one alignments.

4. The lines vary in opacity, with darker red likely indicating stronger attention weights.

### Key Observations

1. **Primary Anomaly:** The core discrepancy is the absence of the word "missing" in its expected position in the bottom row and its erroneous repetition at the end of the sentence, just before `<EOS>`.

2. **Attention Pattern:** The model's attention for the erroneously placed "missing" is diffuse, drawing from a four-word context ("what we are missing") rather than a single source word. This suggests uncertainty or a error in the alignment/decoding process.

3. **Structural Fidelity:** Apart from the "missing" error, the alignment is nearly perfect. All other words, including function words and punctuation, maintain a strict one-to-one positional mapping, visualized by straight, vertical lines.

4. **Spatial Layout:** The legend (implied by the red lines) is integrated directly into the diagram. The connecting lines themselves are the visual encoding of the alignment data. The text is arranged in a simple, horizontal, two-line layout against a plain light gray background.

### Interpretation

This diagram is a diagnostic visualization for a machine translation or text generation model. It demonstrates a specific failure mode: a **word dropout and duplication error**.

* **What it Suggests:** The model correctly processed most of the sentence but failed at the critical content word "missing". It failed to generate it in the correct position (resulting in the `[MISSING]` gap) and instead generated it later in the sequence, using a broad, context-heavy attention pattern to do so. This could be due to issues in the decoder's state, a problem with the attention mechanism's focus, or an error in the beam search decoding process.

* **Relationship Between Elements:** The top row is the ground truth or input. The bottom row is the model's flawed output. The red lines expose the model's "reasoning" — showing which input words it considered most important when generating each output word. The diffuse attention for the misplaced "missing" is the key clue to the error's nature.

* **Notable Insight:** The diagram effectively isolates the error. The perfect alignment of all other tokens confirms the model's general competency, making the single point of failure starkly visible. This is invaluable for debugging, showing engineers exactly *where* and *how* the model's internal process diverged from the expected path. The error is not a complete failure but a precise, localized misalignment.