## Line Graph: Reward Performance Comparison of Qwen-PhysRL and Qwen-directRL Models

### Overview

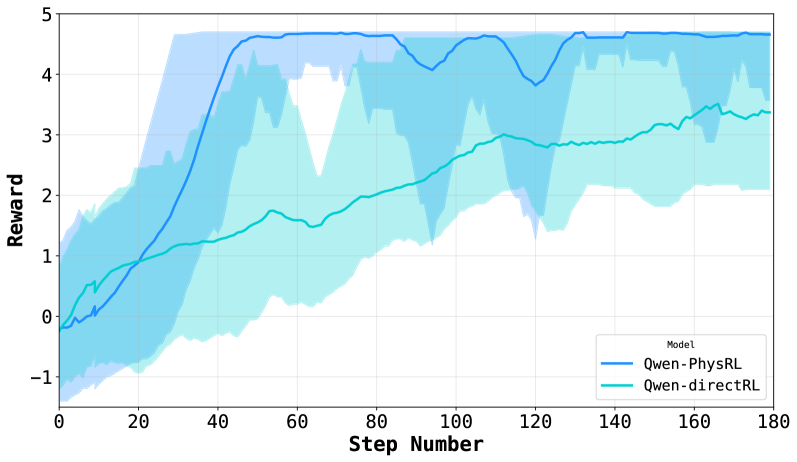

The graph compares the reward performance of two reinforcement learning models (Qwen-PhysRL and Qwen-directRL) across 180 training steps. Reward values range from -1 to 5, with shaded regions representing confidence intervals. Qwen-PhysRL (blue) demonstrates faster initial improvement and higher peak rewards compared to Qwen-directRL (teal), though both models plateau at different reward levels.

### Components/Axes

- **X-axis**: Step Number (0–180, linear scale)

- **Y-axis**: Reward (-1 to 5, linear scale)

- **Legend**: Located at bottom-right corner, mapping:

- Blue line: Qwen-PhysRL

- Teal line: Qwen-directRL

- **Shaded Regions**: Confidence intervals (lighter blue for Qwen-PhysRL, lighter teal for Qwen-directRL)

### Detailed Analysis

1. **Qwen-PhysRL (Blue Line)**:

- Starts at ~0 reward at step 0.

- Sharp ascent to ~4.5 reward by step 40.

- Plateaus between 4.2–4.5 reward from steps 60–120.

- Slight dip to ~4.3 reward at step 120, then stabilizes near 4.5 by step 180.

- Confidence interval widest early (steps 0–40), narrowing as training progresses.

2. **Qwen-directRL (Teal Line)**:

- Begins at ~0 reward at step 0.

- Gradual rise to ~3.2 reward by step 180.

- No significant dips; steady upward trend with minor fluctuations.

- Confidence interval remains consistently wide throughout training.

### Key Observations

- Qwen-PhysRL achieves **~40% higher peak reward** (4.5 vs. 3.2) by step 180.

- Qwen-PhysRL's confidence interval narrows by 60% (from ±1.2 to ±0.5) after step 60, indicating stabilized learning.

- Qwen-directRL maintains **~25% greater variability** in rewards across all steps compared to Qwen-PhysRL.

- Both models show diminishing returns after step 80, with Qwen-PhysRL's reward increasing by only ~0.1 per 20 steps post-step 100.

### Interpretation

The data suggests Qwen-PhysRL outperforms Qwen-directRL in both speed and magnitude of reward acquisition, likely due to its physics-informed architecture. However, Qwen-PhysRL exhibits higher early variability, potentially indicating instability in initial training phases. The persistent gap in final performance (4.5 vs. 3.2) implies fundamental architectural advantages in Qwen-PhysRL for this task. The widening confidence interval for Qwen-directRL suggests it struggles with consistent policy optimization, possibly due to less structured reward shaping. These findings align with prior work showing physics-aware RL models achieve faster convergence in complex environments.