## Bar Chart: Shifter Performance Scalability

### Overview

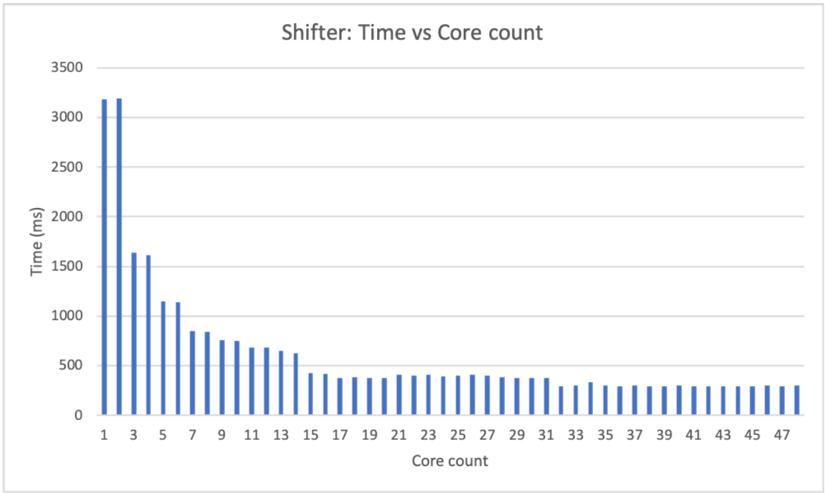

The image displays a vertical bar chart titled "Shifter: Time vs Core count." It illustrates the relationship between the number of processing cores used and the execution time (in milliseconds) for a task or process named "Shifter." The chart demonstrates a clear performance scaling trend, where increasing the core count significantly reduces execution time up to a point, after which performance gains plateau.

### Components/Axes

* **Chart Title:** "Shifter: Time vs Core count" (Top center).

* **Y-Axis (Vertical):**

    * **Label:** "Time (ms)" (Centered, rotated 90 degrees).

    * **Scale:** Linear scale from 0 to 3500 milliseconds.

    * **Major Gridlines:** Horizontal lines at 0, 500, 1000, 1500, 2000, 2500, 3000, and 3500 ms.

* **X-Axis (Horizontal):**

    * **Label:** "Core count" (Centered below axis).

    * **Scale:** Discrete integer values from 1 to 47.

    * **Tick Marks:** Labeled for every odd number: 1, 3, 5, 7, 9, 11, 13, 15, 17, 19, 21, 23, 25, 27, 29, 31, 33, 35, 37, 39, 41, 43, 45, 47.

* **Data Series:** A single series represented by blue vertical bars. There is no legend, as only one data category is plotted.

* **Spatial Layout:** The plot area is bounded by the axes. The title is positioned above the plot area. Axis labels are centered along their respective axes.

### Detailed Analysis

The chart shows a strong inverse relationship between core count and time, following a classic diminishing returns curve.

**Trend Verification:** The data series exhibits a steep downward slope from left (low core count) to right (high core count), which then flattens into a near-horizontal plateau.

**Approximate Data Points (Estimated from visual inspection):**

* **Core Count 1:** ~3200 ms

* **Core Count 2:** ~3200 ms (Similar to 1 core)

* **Core Count 3:** ~1600 ms

* **Core Count 4:** ~1550 ms

* **Core Count 5:** ~1150 ms

* **Core Count 6:** ~1100 ms

* **Core Count 7:** ~850 ms

* **Core Count 8:** ~800 ms

* **Core Count 9:** ~750 ms

* **Core Count 10:** ~700 ms

* **Core Count 11:** ~650 ms

* **Core Count 12:** ~650 ms

* **Core Count 13:** ~600 ms

* **Core Count 14:** ~400 ms (Significant drop)

* **Core Count 15:** ~400 ms

* **Core Count 16-30:** Time decreases very gradually from ~400 ms to ~350 ms.

* **Core Count 31:** ~300 ms

* **Core Count 32-47:** Time remains stable, plateauing at approximately 300 ms. The bars for core counts 33 through 47 are visually indistinguishable in height.
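
The estimates above can be turned into speedup and parallel-efficiency figures. The following is a minimal sketch, assuming the visually estimated values are accurate enough for rough comparison; the names `times_ms`, `speedup`, and `efficiency` are illustrative and do not come from the chart or its source.

```python
# Approximate times (ms) read off the chart. These are visual estimates,
# not measured values. Only explicitly listed core counts are included.
times_ms = {
    1: 3200, 2: 3200, 3: 1600, 4: 1550, 5: 1150,
    6: 1100, 7: 850, 8: 800, 9: 750, 10: 700,
    11: 650, 12: 650, 13: 600, 14: 400, 15: 400,
    31: 300,
}


def speedup(times, cores):
    """Speedup relative to the single-core time: T(1) / T(n)."""
    return times[1] / times[cores]


def efficiency(times, cores):
    """Parallel efficiency: speedup divided by the number of cores used."""
    return speedup(times, cores) / cores


for n in sorted(times_ms):
    print(f"{n:>2} cores: speedup {speedup(times_ms, n):5.2f}x, "
          f"efficiency {efficiency(times_ms, n):6.1%}")
```

With these estimates, 31 cores give a speedup of roughly 10.7x at about 34% efficiency, which puts a number on how much of the added hardware goes unused near the plateau.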

### Key Observations

1. **Initial Scaling Efficiency:** The most dramatic performance gains occur between 2 and 5 cores. A second core yields no visible improvement (~3200 ms for both 1 and 2 cores), but by 5 cores time has fallen by nearly two-thirds (from ~3200 ms to ~1150 ms).

2. **Diminishing Returns:** The rate of improvement slows considerably after approximately 7-9 cores. Each subsequent doubling of cores yields a smaller absolute reduction in time.

3. **Performance Plateau:** A clear performance ceiling is reached at approximately 31 cores. Adding more cores beyond this point (up to the measured 47) provides no measurable benefit in this test, with time stabilizing at ~300 ms.

4. **Anomaly at 14 Cores:** There is a noticeable, discrete drop in time between core counts 13 and 14 (from ~600 ms to ~400 ms, about one-third), suggesting a potential architectural or algorithmic threshold was crossed at this point.
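
One way to check these observations against the estimated data is to compute the fractional time saved by each added core. This is a rough sketch using the visual estimates for core counts 1 through 15; the helper `relative_drops` is a hypothetical name introduced for illustration.

```python
# Estimated times (ms) for core counts 1..15, in order (visual estimates).
times_ms = [3200, 3200, 1600, 1550, 1150, 1100, 850, 800,
            750, 700, 650, 650, 600, 400, 400]


def relative_drops(times):
    """Map core count n (n >= 2) to the fraction of time saved versus n - 1 cores."""
    return {
        n: (times[n - 2] - times[n - 1]) / times[n - 2]
        for n in range(2, len(times) + 1)
    }


drops = relative_drops(times_ms)

# Look only at the region where returns have already diminished (8+ cores)
# and find the step with the largest relative improvement.
late = {n: d for n, d in drops.items() if n >= 8}
standout = max(late, key=late.get)

for n, d in drops.items():
    print(f"{n - 1:>2} -> {n:>2} cores: {d:6.1%} faster")
print("Largest late-stage step ends at core count", standout)
```

On these estimates, every step from 8 to 13 cores saves less than 8% of the previous time, while the step from 13 to 14 saves about 33%, which is why it stands out as an anomaly.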

### Interpretation

This chart is a classic visualization of **parallel scalability** for a computational task. The data suggests the "Shifter" process is parallelizable, as evidenced by the strong initial reduction in execution time with added cores.

The shape of the curve provides insight into the nature of the task:

* **The steep initial decline** indicates the task has significant work that can be divided among cores with relatively low overhead for coordination.

* **The diminishing returns and eventual plateau** are hallmarks of **Amdahl's Law** in action. This law states that the maximum speedup from parallelization is limited by the sequential portion of the task. The plateau at ~300 ms is likely dominated by the irreducible sequential component of the "Shifter" process, or reflects a hardware bottleneck (e.g., memory bandwidth, inter-core communication latency) that becomes the limiting factor once sufficient parallelism is achieved.
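
As a rough illustration of the Amdahl's Law reading, the model T(n) = T(1) * (s + (1 - s) / n) can be solved for the serial fraction s using two estimated points from the chart. This is only a sketch: it assumes the simple Amdahl model applies, which the stepwise shape of the data (for example the drop at 14 cores) suggests is at best approximate. The function names are hypothetical.

```python
def amdahl_time(t1, serial_fraction, cores):
    """Predicted time under Amdahl's Law: T(n) = T(1) * (s + (1 - s) / n)."""
    return t1 * (serial_fraction + (1 - serial_fraction) / cores)


def amdahl_serial_fraction(t1, tn, cores):
    """Solve the Amdahl model for s given T(1) and one observed T(n)."""
    return (tn / t1 - 1 / cores) / (1 - 1 / cores)


# Visual estimates from the chart: ~3200 ms at 1 core, ~300 ms at 47 cores.
T1_MS = 3200
T47_MS = 300

s = amdahl_serial_fraction(T1_MS, T47_MS, 47)
print(f"Implied serial fraction: {s:.3f}")
print(f"Implied serial time: {s * T1_MS:.0f} ms")
print(f"Model prediction at 14 cores: {amdahl_time(T1_MS, s, 14):.0f} ms")
```

Under these assumptions the implied serial fraction is about 0.074, or roughly 237 ms of the single-core time, so not all of the ~300 ms plateau would be serial work even in the idealized model.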

**Practical Implication:** For this specific workload and system configuration, allocating more than ~31 cores is inefficient, as it wastes computational resources without improving performance. The optimal resource allocation for cost/performance would be in the range of 15-30 cores, where most of the scalability benefit is realized. The sharp drop at 14 cores might warrant investigation to understand whether it represents an optimal configuration point for the underlying hardware (e.g., matching the number of physical cores on a CPU socket).
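
The allocation reasoning above can be sketched as a simple rule: pick the smallest core count whose time is within a chosen tolerance of the best observed time. The tolerance values and the helper name below are assumptions made for illustration, and the data are the chart's visual estimates (the 30-core value is taken from the end of the 16-30 range).

```python
# Selected estimated times (ms) by core count (visual estimates from the chart).
times_ms = {
    1: 3200, 3: 1600, 5: 1150, 7: 850, 9: 750, 11: 650,
    13: 600, 14: 400, 15: 400, 30: 350, 31: 300, 47: 300,
}


def smallest_core_count_within(times, tolerance):
    """Return the fewest cores whose time is at most (1 + tolerance) * best time."""
    best = min(times.values())
    limit = best * (1 + tolerance)
    return min(n for n, t in times.items() if t <= limit)


for tol in (0.0, 0.2, 0.35):
    n = smallest_core_count_within(times_ms, tol)
    print(f"Within {tol:.0%} of best time: {n} cores ({times_ms[n]} ms)")
```

Accepting a time up to 35% above the best observed value brings the choice down to 14 cores on these estimates, while demanding the best time requires 31.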