## Heatmap: Layer-Token Activation Heatmap

### Overview

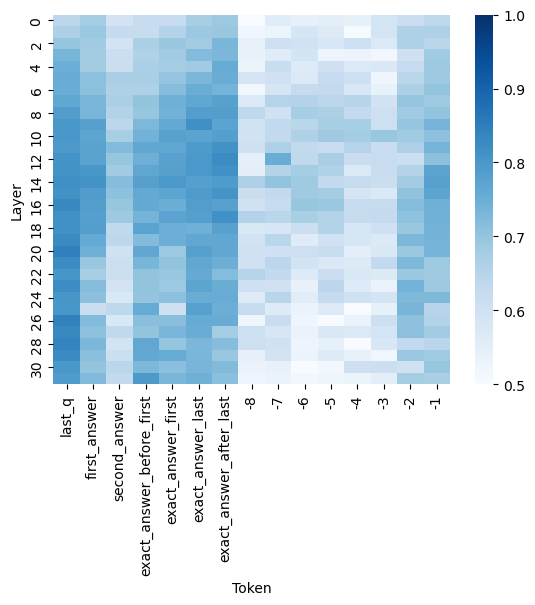

The image is a heatmap visualizing numerical activation values across two dimensions: neural network layers (vertical axis) and specific tokens (horizontal axis). The color intensity represents the magnitude of the value, with a scale provided on the right. The data appears to relate to the internal activations of a language model during a question-answering task, focusing on specific token positions.

### Components/Axes

* **Y-Axis (Vertical):** Labeled **"Layer"**. It is a linear scale with major tick marks labeled from **0** at the top to **30** at the bottom, in increments of 2 (0, 2, 4, ..., 30). It represents the layer index within the neural network, so depth increases downward: the upper rows of the plot are the earliest layers and the lower rows are the deepest.

* **X-Axis (Horizontal):** Labeled **"Token"**. It contains categorical labels for specific token positions. From left to right, the labels are:
    * `last_q`
    * `first_answer`
    * `second_answer`
    * `exact_answer_before_first`
    * `exact_answer_first`
    * `exact_answer_last`
    * `exact_answer_after_last`
    * `-8`
    * `-7`
    * `-6`
    * `-5`
    * `-4`
    * `-3`
    * `-2`
    * `-1`

* **Color Bar (Legend):** Positioned on the far right of the chart. It is a vertical gradient bar mapping color to numerical value.
    * **Scale:** Linear, ranging from **0.5** (bottom, lightest blue/white) to **1.0** (top, darkest blue).
    * **Labels:** The bar is labeled at intervals: **0.5, 0.6, 0.7, 0.8, 0.9, 1.0**.
    * **Color Mapping:** Lighter shades (approaching white) correspond to lower values (~0.5-0.6). Medium blue shades correspond to mid-range values (~0.7-0.8). Dark blue shades correspond to high values (~0.9-1.0).
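The axes and color scale described above can be written down in a few lines of Python. This is a minimal sketch, not the code that produced the figure: the names `TOKEN_LABELS`, `LAYER_TICKS`, `normalize`, and `shade` are hypothetical, and the 0.65 and 0.85 cut-offs between light, medium, and dark are assumptions chosen to fall between the approximate bands listed for the color bar.

```python
# Axis labels as listed in the description (left to right, top to bottom).
TOKEN_LABELS = [
    "last_q", "first_answer", "second_answer",
    "exact_answer_before_first", "exact_answer_first",
    "exact_answer_last", "exact_answer_after_last",
] + [str(i) for i in range(-8, 0)]      # "-8" ... "-1"
LAYER_TICKS = list(range(0, 31, 2))     # labeled ticks: 0, 2, ..., 30

VMIN, VMAX = 0.5, 1.0                   # color bar limits


def normalize(value: float) -> float:
    """Linearly map a color bar value to [0, 1], clipping out-of-range input."""
    clipped = min(max(value, VMIN), VMAX)
    return (clipped - VMIN) / (VMAX - VMIN)


def shade(value: float) -> str:
    """Coarse shade name. The 0.65 and 0.85 cut-offs are assumed, not read from the figure."""
    if value < 0.65:
        return "light"
    if value < 0.85:
        return "medium"
    return "dark"
```

With these definitions, `shade(0.95)` returns `"dark"` and `normalize(0.75)` returns `0.5`, the midpoint of the scale.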

### Detailed Analysis

The heatmap displays a grid of colored cells, where each cell's color corresponds to a value between 0.5 and 1.0 for a specific Layer-Token pair.

**Trend Verification & Data Point Extraction (Approximate):**

* **Column `last_q`:** This column shows consistently high activation (dark blue) across nearly all layers. Values appear to be in the **0.85 - 1.0** range from Layer 0 to Layer 30, with some of the darkest cells (closest to 1.0) appearing in the middle layers (approx. Layers 8-20).

* **Columns `first_answer` to `exact_answer_after_last`:** These columns show moderate to high activation, but with more variation than `last_q`.
    * `first_answer`: Medium-dark blue in the early layers (0-10, at the top of the plot), becoming slightly lighter in the deeper layers. Approximate range: **0.7 - 0.9**.
    * `second_answer`: Similar pattern to `first_answer`, perhaps slightly lighter on average. Approximate range: **0.65 - 0.85**.
    * `exact_answer_before_first`, `exact_answer_first`, `exact_answer_last`, `exact_answer_after_last`: These four columns exhibit a similar pattern. They show relatively high activation (medium to dark blue) in the first half of the layers (0-15, the upper half of the plot), which becomes notably lighter (lower values) in the deeper layers (16-30). Approximate range across all four: **0.6 - 0.9**, with the deeper layers dipping towards **0.6**.

* **Columns `-8` to `-1`:** These columns, representing negative token indices (likely positions relative to the end of a sequence), show the lowest activations overall.
    * They are predominantly light blue to white, indicating values clustered near the bottom of the scale.
    * The approximate range for most cells in these columns is **0.5 - 0.7**.
    * There is a slight trend where columns `-8` to `-5` are marginally darker (higher value) than columns `-4` to `-1`, which are the lightest in the entire heatmap.
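The approximate ranges listed above can be collected into a small lookup table and ranked. This is a rough sketch for comparison only: the bounds are the eyeballed ranges from this description, not values extracted from the underlying data, and the names `APPROX_RANGE`, `midpoint`, and `ranked` are hypothetical.

```python
# Approximate (low, high) value range per column group, as estimated by eye above.
APPROX_RANGE = {
    "last_q": (0.85, 1.0),
    "first_answer": (0.7, 0.9),
    "second_answer": (0.65, 0.85),
    "exact_answer_*": (0.6, 0.9),   # the four exact_answer_* columns together
    "-8..-1": (0.5, 0.7),           # the eight negative-index columns together
}


def midpoint(bounds):
    """Midpoint of a (low, high) pair; a crude stand-in for a column's typical value."""
    low, high = bounds
    return (low + high) / 2


# Column groups ordered from highest to lowest estimated midpoint.
ranked = sorted(APPROX_RANGE, key=lambda name: midpoint(APPROX_RANGE[name]), reverse=True)
```

The ranking places `last_q` first and the negative-index columns last. `second_answer` and `exact_answer_*` have essentially the same midpoint, so their relative order carries no meaning.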

**Spatial Grounding:**

* The **highest value cells** (darkest blue, ~1.0) are located along the **left edge** of the heatmap at mid-height, specifically in the `last_q` column across the middle layers (approx. Layers 8-20).

* The **lowest value cells** (lightest blue/white, ~0.5) are located in the **bottom-right** region, specifically in the `-4` to `-1` columns across the deepest layers (20-30).

* The legend is placed **outside the main plot area, to its right**.

### Key Observations

1. **Dominance of `last_q`:** The token labeled `last_q` (likely the last token of the question) exhibits the strongest and most consistent high activation across the entire network depth. This is the most salient feature of the heatmap.

2. **Layer-Dependent Activation for Answer Tokens:** Tokens related to the answer (`first_answer`, `second_answer`, `exact_answer_*`) show higher activation in the early-to-middle layers (roughly 0-15), which diminishes in the deepest layers (20-30). The drop is most pronounced in the four `exact_answer_*` columns.

3. **Low Activation for Negative-Index Tokens:** Tokens with negative indices (`-8` to `-1`) show the lowest activation in the heatmap, with the very last positions (`-4` to `-1`) lightest of all. What these positions contain is not evident from the figure; they may be padding or tokens far from the answer span.

4. **Vertical Banding:** There is a visible vertical banding pattern in which entire columns share similar color profiles, indicating that token position is a stronger determinant of activation level than the specific layer. The exception is the answer-related tokens, which show a layer-dependent gradient.
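One way to put a number on the vertical banding noted above is to compare how much the column means differ from each other with how much values vary inside each column. The sketch below is illustrative only: `banding_ratio` is a hypothetical helper, and the `toy` grid holds made-up values, not data read from the figure.

```python
from statistics import mean, pvariance


def banding_ratio(grid):
    """Variance of the column means divided by the mean within-column variance.

    grid[r][c] is the value at layer r and token column c. A ratio well above 1
    means token position explains more of the variation than layer does.
    """
    columns = list(zip(*grid))
    between = pvariance([mean(col) for col in columns])
    within = mean([pvariance(col) for col in columns])
    return between / within if within > 0 else float("inf")


# Toy 3-layer x 3-token grid with made-up values (NOT taken from the figure).
toy = [
    [0.95, 0.80, 0.55],
    [0.97, 0.75, 0.53],
    [0.93, 0.65, 0.51],
]
ratio = banding_ratio(toy)
```

On the toy grid the ratio is well above 1, which is the pattern one would expect from a heatmap dominated by vertical bands. Applying it to the real heatmap would require the underlying values, which this description does not provide.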

### Interpretation

This heatmap likely visualizes the **attention or activation patterns** within a transformer-based language model during a question-answering inference step. The patterns are consistent with the following readings:

* **Model Focus:** The model's internal representations are most strongly and consistently engaged with the **last token of the question (`last_q`)** throughout its processing layers. This suggests that the question's final token serves as an important anchor for the model's processing.

* **Information Processing Flow:** For answer-specific tokens, the model appears to process them most intensely in its **early-to-middle layers** (roughly 0-15). The fading activation in the deepest layers could indicate that by that stage the information from these tokens has been integrated into a more abstract representation, or that the final layers perform a different function (such as output projection) in which these token activations are less pronounced.

* **Noise vs. Signal:** The very low activation for the final negative-index tokens suggests the model may **ignore or down-weight** these positions. If they are padding or otherwise non-informative context, this would be consistent with the model filtering out noise, but the heatmap alone does not establish what these positions contain.

* **Architectural Insight:** The separation between the high-activation `last_q` column, the medium-activation answer columns with their layer gradient, and the low-activation negative-index columns gives a visual map of how strongly the measured signal is expressed for different types of token position. This is useful for interpretability, since it indicates which positions and layers are most worth examining further.