## Line Charts: Performance Comparison of Graph Neural Network Methods Under Differential Privacy

### Overview

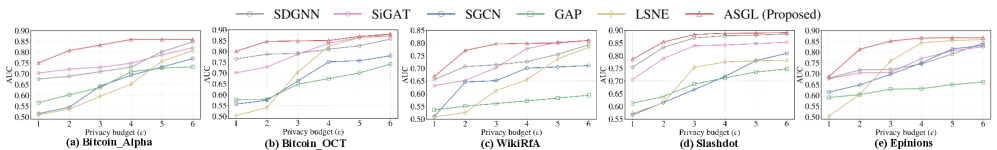

The image displays a series of five line charts arranged horizontally, comparing the performance (AUC) of six different graph neural network methods as a function of an increasing privacy budget (ε). The charts evaluate these methods across five distinct datasets. The overall visual suggests a performance benchmark study in the context of privacy-preserving machine learning.

### Components/Axes

* **Legend:** Positioned at the top center of the entire figure. It contains six entries, each with a colored line and marker symbol:

    * `SDGNN` (Gray line, circle marker)

    * `SiGAT` (Pink line, square marker)

    * `SGCN` (Blue line, diamond marker)

    * `GAP` (Green line, upward-pointing triangle marker)

    * `LSNE` (Yellow/Olive line, downward-pointing triangle marker)

    * `ASGL (Proposed)` (Red line, star marker)

* **Common Axes:**

    * **X-axis (All Charts):** Labeled `Privacy budget (ε)`. The scale is linear with integer markers at 1, 2, 3, 4, 5, and 6.

    * **Y-axis (All Charts):** Labeled `AUC`. The scale is linear, ranging from approximately 0.50 to 0.90, with major tick marks at 0.05 intervals (e.g., 0.50, 0.55, 0.60... 0.90).

* **Subplot Titles:** Each chart has a label below it:

    * (a) `Bitcoin_Alpha`

    * (b) `Bitcoin_OCT`

    * (c) `WikiRIA`

    * (d) `Slashdot`

    * (e) `Epinions`

### Detailed Analysis

**Chart (a) Bitcoin_Alpha:**

* **Trend:** All methods show an increasing AUC trend as ε increases. The `ASGL (Proposed)` method (red star) consistently achieves the highest AUC across all ε values, starting at ~0.78 (ε=1) and reaching ~0.88 (ε=6).

* **Data Points (Approximate AUC):**

    * ε=1: ASGL (~0.78), SiGAT (~0.75), SDGNN (~0.72), SGCN (~0.60), GAP (~0.55), LSNE (~0.52).

    * ε=6: ASGL (~0.88), SiGAT (~0.85), SDGNN (~0.83), SGCN (~0.82), LSNE (~0.78), GAP (~0.75).

**Chart (b) Bitcoin_OCT:**

* **Trend:** All methods again trend upward. `ASGL` leads, starting at ~0.80 (ε=1) and plateauing near ~0.88 (ε=4 to 6). `LSNE` shows a very steep improvement from ε=1 to ε=3.

* **Data Points (Approximate AUC):**

    * ε=1: ASGL (~0.80), SiGAT (~0.75), SDGNN (~0.72), SGCN (~0.58), GAP (~0.55), LSNE (~0.52).

    * ε=6: ASGL (~0.88), SiGAT (~0.87), SDGNN (~0.86), SGCN (~0.85), LSNE (~0.84), GAP (~0.78).

**Chart (c) WikiRIA:**

* **Trend:** `ASGL` maintains a clear lead. `LSNE` starts lowest (~0.50 at ε=1) but improves dramatically. `GAP` has a very shallow slope (~0.55 to ~0.60) and is the weakest method by ε=6.

* **Data Points (Approximate AUC):**

    * ε=1: ASGL (~0.72), SiGAT (~0.65), SDGNN (~0.64), SGCN (~0.62), GAP (~0.55), LSNE (~0.50).

    * ε=6: ASGL (~0.85), SiGAT (~0.82), SDGNN (~0.80), SGCN (~0.79), LSNE (~0.78), GAP (~0.60).

**Chart (d) Slashdot:**

* **Trend:** `ASGL` and `SiGAT` perform very closely at the top, with `ASGL` having a slight edge. All methods show strong improvement from ε=1 to ε=3 before leveling off.

* **Data Points (Approximate AUC):**

    * ε=1: ASGL (~0.78), SiGAT (~0.77), SDGNN (~0.70), SGCN (~0.65), GAP (~0.60), LSNE (~0.55).

    * ε=6: ASGL (~0.90), SiGAT (~0.89), SDGNN (~0.85), SGCN (~0.82), LSNE (~0.78), GAP (~0.75).

**Chart (e) Epinions:**

* **Trend:** `ASGL` is the top performer. `LSNE` again shows a very steep initial climb. The performance gap between methods is more pronounced at lower ε values, apart from `GAP`, which still lags far behind at ε=6 (~0.65).

* **Data Points (Approximate AUC):**

    * ε=1: ASGL (~0.75), SiGAT (~0.70), SDGNN (~0.68), SGCN (~0.62), GAP (~0.58), LSNE (~0.50).

    * ε=6: ASGL (~0.88), SiGAT (~0.86), SDGNN (~0.85), SGCN (~0.84), LSNE (~0.82), GAP (~0.65).
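The endpoint readings above can be turned into a per-method AUC gain (ε=6 minus ε=1), which makes the "steep climb" of some curves easier to compare. The following is a minimal Python sketch, assuming the approximate values listed in this section; the names `AUC`, `auc_gain`, and `largest_gainer` are illustrative and do not come from the figure.

```python
# Approximate (AUC at eps=1, AUC at eps=6) pairs copied from the data points
# listed above. These are visual estimates, not measured results.
AUC = {
    "Bitcoin_Alpha": {"ASGL": (0.78, 0.88), "SiGAT": (0.75, 0.85), "SDGNN": (0.72, 0.83),
                      "SGCN": (0.60, 0.82), "GAP": (0.55, 0.75), "LSNE": (0.52, 0.78)},
    "Bitcoin_OCT": {"ASGL": (0.80, 0.88), "SiGAT": (0.75, 0.87), "SDGNN": (0.72, 0.86),
                    "SGCN": (0.58, 0.85), "GAP": (0.55, 0.78), "LSNE": (0.52, 0.84)},
    "WikiRIA": {"ASGL": (0.72, 0.85), "SiGAT": (0.65, 0.82), "SDGNN": (0.64, 0.80),
                "SGCN": (0.62, 0.79), "GAP": (0.55, 0.60), "LSNE": (0.50, 0.78)},
    "Slashdot": {"ASGL": (0.78, 0.90), "SiGAT": (0.77, 0.89), "SDGNN": (0.70, 0.85),
                 "SGCN": (0.65, 0.82), "GAP": (0.60, 0.75), "LSNE": (0.55, 0.78)},
    "Epinions": {"ASGL": (0.75, 0.88), "SiGAT": (0.70, 0.86), "SDGNN": (0.68, 0.85),
                 "SGCN": (0.62, 0.84), "GAP": (0.58, 0.65), "LSNE": (0.50, 0.82)},
}


def auc_gain(dataset):
    """Return {method: AUC(eps=6) - AUC(eps=1)}, rounded to two decimals."""
    return {m: round(hi - lo, 2) for m, (lo, hi) in AUC[dataset].items()}


def largest_gainer(dataset):
    """Return the method whose AUC rises the most between eps=1 and eps=6."""
    gains = auc_gain(dataset)
    return max(gains, key=gains.get)


if __name__ == "__main__":
    for name in AUC:
        print(name, auc_gain(name), "largest gain:", largest_gainer(name))
```

Because the inputs are read off a chart to roughly two decimals, the gains are only indicative. On these estimates, `LSNE` has the largest gain on every dataset.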

### Key Observations

1. **Consistent Leader:** The proposed method, `ASGL` (red star line), achieves the highest AUC score across all five datasets and all privacy budget levels.

2. **Privacy-Utility Trade-off:** For every method and dataset, AUC increases with the privacy budget (ε), although some curves plateau at higher ε (for example, `ASGL` on `Bitcoin_OCT` from ε=4). This illustrates the fundamental trade-off: a weaker privacy guarantee (higher ε) yields better model utility (higher AUC).

3. **Method Ranking:** The relative ranking of the methods is largely consistent across datasets: ASGL > SiGAT ≈ SDGNN > SGCN > GAP/LSNE. The order of the last two depends on ε: `LSNE` is the lowest at ε=1 but improves rapidly and overtakes `GAP` by ε=6 on every dataset.

4. **Dataset Sensitivity:** The absolute AUC values and the steepness of the curves vary by dataset. For example, the best AUC on `Slashdot` (d) is ~0.90, compared with ~0.85 on `WikiRIA` (c), which may indicate that the task or data structure is inherently easier for these models on the Slashdot dataset.
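The ranking and spread observations can be checked mechanically against the approximate data points from the Detailed Analysis. This is a minimal Python sketch under that assumption; `METHODS`, `AUC`, `ranking`, and `spread` are hypothetical names chosen for illustration.

```python
# (AUC at eps=1, AUC at eps=6) per method, in the order given by METHODS.
# Values are the approximate chart readings from the Detailed Analysis.
METHODS = ("ASGL", "SiGAT", "SDGNN", "SGCN", "GAP", "LSNE")
AUC = {
    "Bitcoin_Alpha": [(0.78, 0.88), (0.75, 0.85), (0.72, 0.83),
                      (0.60, 0.82), (0.55, 0.75), (0.52, 0.78)],
    "Bitcoin_OCT": [(0.80, 0.88), (0.75, 0.87), (0.72, 0.86),
                    (0.58, 0.85), (0.55, 0.78), (0.52, 0.84)],
    "WikiRIA": [(0.72, 0.85), (0.65, 0.82), (0.64, 0.80),
                (0.62, 0.79), (0.55, 0.60), (0.50, 0.78)],
    "Slashdot": [(0.78, 0.90), (0.77, 0.89), (0.70, 0.85),
                 (0.65, 0.82), (0.60, 0.75), (0.55, 0.78)],
    "Epinions": [(0.75, 0.88), (0.70, 0.86), (0.68, 0.85),
                 (0.62, 0.84), (0.58, 0.65), (0.50, 0.82)],
}


def ranking(dataset, idx):
    """Methods sorted by descending AUC; idx=0 means eps=1, idx=1 means eps=6."""
    scores = dict(zip(METHODS, (pair[idx] for pair in AUC[dataset])))
    return sorted(scores, key=scores.get, reverse=True)


def spread(dataset, idx):
    """Gap between the best and worst method at one privacy budget."""
    values = [pair[idx] for pair in AUC[dataset]]
    return round(max(values) - min(values), 2)


if __name__ == "__main__":
    for name in AUC:
        print(name)
        print("  eps=1:", ranking(name, 0), "spread", spread(name, 0))
        print("  eps=6:", ranking(name, 1), "spread", spread(name, 1))
```

On these estimates, `ASGL` ranks first at both ε=1 and ε=6 on every dataset, and the only change in order is that `LSNE` moves ahead of `GAP` at ε=6. The check covers only the two listed budgets, not the intermediate points ε=2 to 5.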

### Interpretation

This set of charts supports the effectiveness of the proposed `ASGL` method for privacy-preserving graph learning. On these approximate readings, `ASGL` achieves a better balance between privacy and utility than the five baselines (SDGNN, SiGAT, SGCN, GAP, LSNE).

The upward trend of the curves matches the expected behavior under differential privacy: relaxing the privacy constraint (increasing ε) means less noise is needed, so models can learn more effectively from the data. `ASGL`'s curve is already the highest at ε=1 (~0.72 to ~0.80, depending on the dataset) and often rises steeply at first, which suggests it uses a small privacy budget efficiently and retains utility under stricter privacy regimes (lower ε).
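For reference, the role of ε can be made concrete with the standard definition of pure ε-differential privacy. The figure does not state which variant is used (pure or approximate) or how neighboring graphs are defined (edge-level or node-level), so the symbols below are assumptions: $\mathcal{M}$ is a randomized training mechanism, $G$ and $G'$ are neighboring input graphs, and $S$ is any set of possible outputs.

$$
\Pr[\mathcal{M}(G) \in S] \;\le\; e^{\varepsilon} \, \Pr[\mathcal{M}(G') \in S]
$$

A smaller ε forces the two output distributions closer together, which typically requires more noise and lowers utility. A larger ε loosens the bound, which is consistent with the rising AUC curves.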

The consistent ranking across the five datasets (Bitcoin-related, wiki, and social networks) suggests that the findings are robust across different types of graph-structured data. `LSNE` is the outlier: it starts very low but improves sharply, which might indicate that it is more sensitive to the noise injected for privacy at low ε but can exploit the looser constraint at higher ε more effectively than baselines such as `GAP`.

In summary, the figure presents `ASGL` as the strongest of the six methods for graph tasks such as link prediction or node classification when differential privacy is a requirement: at every privacy budget shown, it offers the highest utility among the methods tested.