## Grouped Bar Chart: Effect of RL Fine-Tuning on Game-Specific Performance

### Overview

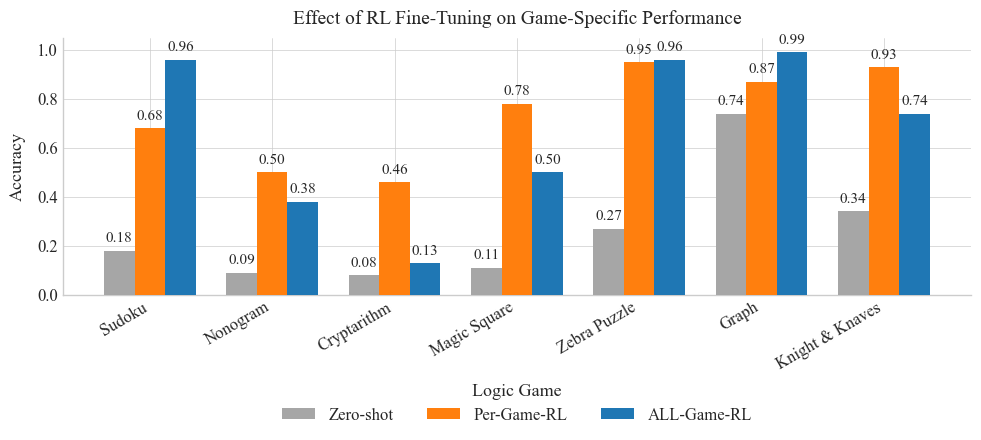

The image is a grouped bar chart comparing the accuracy of three different methods (Zero-shot, Per-Game-RL, and ALL-Game-RL) across seven distinct logic games. The chart demonstrates the impact of reinforcement learning (RL) fine-tuning on performance for each specific game.

### Components/Axes

* **Chart Title:** "Effect of RL Fine-Tuning on Game-Specific Performance" (centered at the top).

* **Y-Axis:** Labeled "Accuracy". The scale runs from 0.0 to 1.0, with major gridlines at intervals of 0.2 (0.0, 0.2, 0.4, 0.6, 0.8, 1.0).

* **X-Axis:** Labeled "Logic Game". It lists seven categories: Sudoku, Nonogram, Cryptarithm, Magic Square, Zebra Puzzle, Graph, and Knight & Knaves.

* **Legend:** Positioned at the bottom center of the chart. It defines three data series:

  * **Gray Bar:** "Zero-shot"

  * **Orange Bar:** "Per-Game-RL"

  * **Blue Bar:** "ALL-Game-RL"

* **Data Labels:** Each bar has a numerical value displayed directly above it, indicating the precise accuracy score.

### Detailed Analysis

The following data is extracted for each game category, following the order of bars from left (Zero-shot) to right (ALL-Game-RL).

1. **Sudoku**

* **Zero-shot (Gray):** 0.18

* **Per-Game-RL (Orange):** 0.68

* **ALL-Game-RL (Blue):** 0.96

* **Trend:** A strong, consistent upward trend from Zero-shot to Per-Game-RL to ALL-Game-RL.

2. **Nonogram**

* **Zero-shot (Gray):** 0.09

* **Per-Game-RL (Orange):** 0.50

* **ALL-Game-RL (Blue):** 0.38

* **Trend:** Performance improves significantly from Zero-shot to Per-Game-RL, but then decreases for ALL-Game-RL.

3. **Cryptarithm**

* **Zero-shot (Gray):** 0.08

* **Per-Game-RL (Orange):** 0.46

* **ALL-Game-RL (Blue):** 0.13

* **Trend:** Similar to Nonogram, a large jump from Zero-shot to Per-Game-RL, followed by a substantial drop for ALL-Game-RL.

4. **Magic Square**

* **Zero-shot (Gray):** 0.11

* **Per-Game-RL (Orange):** 0.78

* **ALL-Game-RL (Blue):** 0.50

* **Trend:** A very large increase from Zero-shot to Per-Game-RL, followed by a moderate decrease for ALL-Game-RL.

5. **Zebra Puzzle**

* **Zero-shot (Gray):** 0.27

* **Per-Game-RL (Orange):** 0.95

* **ALL-Game-RL (Blue):** 0.96

* **Trend:** A dramatic increase from Zero-shot to Per-Game-RL, with ALL-Game-RL achieving a nearly identical, very high score.

6. **Graph**

* **Zero-shot (Gray):** 0.74

* **Per-Game-RL (Orange):** 0.87

* **ALL-Game-RL (Blue):** 0.99

* **Trend:** A steady, consistent upward trend across all three methods, with ALL-Game-RL achieving near-perfect accuracy.

7. **Knight & Knaves**

* **Zero-shot (Gray):** 0.34

* **Per-Game-RL (Orange):** 0.93

* **ALL-Game-RL (Blue):** 0.74

* **Trend:** A very large increase from Zero-shot to Per-Game-RL, followed by a notable decrease for ALL-Game-RL.
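
The values listed above can be collected into a small lookup table to find the top method per game. This is a minimal sketch; the names `METHODS`, `ACCURACY`, and `best_method` are illustrative and do not come from the chart.

```python
# Accuracy values transcribed from the bar labels listed above.
# Tuple order: (Zero-shot, Per-Game-RL, ALL-Game-RL).
METHODS = ("Zero-shot", "Per-Game-RL", "ALL-Game-RL")
ACCURACY = {
    "Sudoku": (0.18, 0.68, 0.96),
    "Nonogram": (0.09, 0.50, 0.38),
    "Cryptarithm": (0.08, 0.46, 0.13),
    "Magic Square": (0.11, 0.78, 0.50),
    "Zebra Puzzle": (0.27, 0.95, 0.96),
    "Graph": (0.74, 0.87, 0.99),
    "Knight & Knaves": (0.34, 0.93, 0.74),
}


def best_method(scores):
    """Return the name of the method with the highest accuracy."""
    best_index = max(range(len(METHODS)), key=lambda i: scores[i])
    return METHODS[best_index]


# Map each game to its top-scoring method.
winners = {game: best_method(scores) for game, scores in ACCURACY.items()}

for game, method in winners.items():
    print(f"{game}: {method}")
```

Keeping the scores as tuples in a fixed method order makes it easy to reuse the same table for other per-game comparisons.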

### Key Observations

* **Universal Zero-shot Baseline:** The "Zero-shot" method (gray bars) consistently yields the lowest accuracy across all seven games, ranging from 0.08 (Cryptarithm) to 0.74 (Graph).

* **Per-Game-RL is Highly Effective:** The "Per-Game-RL" method (orange bars) improves on Zero-shot in every game, with gains ranging from 0.13 (Graph) to 0.68 (Zebra Puzzle). It is the top-performing method for four games: Nonogram (0.50), Cryptarithm (0.46), Magic Square (0.78), and Knight & Knaves (0.93).

* **ALL-Game-RL Performance is Mixed:** The "ALL-Game-RL" method (blue bars) shows variable results. It is the best-performing method for three games: Sudoku (0.96), Zebra Puzzle (0.96, ahead of Per-Game-RL by only 0.01), and Graph (0.99). For Nonogram, Cryptarithm, Magic Square, and Knight & Knaves, its accuracy is lower than that of Per-Game-RL.

* **Highest and Lowest Scores:** The highest accuracy on the chart is 0.99 for ALL-Game-RL on the Graph game. The lowest accuracy is 0.08 for Zero-shot on Cryptarithm.

* **Notable Outlier - Graph Game:** The "Graph" game has a much higher Zero-shot baseline (0.74) compared to all other games, suggesting it may be inherently easier for the base model to solve without fine-tuning.
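
These observations can be checked mechanically against the extracted values. The sketch below is illustrative only; the variable names (`cells`, `zero_shot_always_lowest`, and so on) are assumptions introduced here, and the numbers are the bar labels transcribed from the chart.

```python
# Accuracy per game, in the order (Zero-shot, Per-Game-RL, ALL-Game-RL).
METHODS = ("Zero-shot", "Per-Game-RL", "ALL-Game-RL")
ACCURACY = {
    "Sudoku": (0.18, 0.68, 0.96),
    "Nonogram": (0.09, 0.50, 0.38),
    "Cryptarithm": (0.08, 0.46, 0.13),
    "Magic Square": (0.11, 0.78, 0.50),
    "Zebra Puzzle": (0.27, 0.95, 0.96),
    "Graph": (0.74, 0.87, 0.99),
    "Knight & Knaves": (0.34, 0.93, 0.74),
}

# Flatten the table into (score, game, method) triples so that
# max() and min() compare on the score first.
cells = [
    (score, game, method)
    for game, scores in ACCURACY.items()
    for method, score in zip(METHODS, scores)
]
highest = max(cells)
lowest = min(cells)

# Zero-shot is the lowest bar in a game if it is below both RL bars.
zero_shot_always_lowest = all(s[0] < min(s[1:]) for s in ACCURACY.values())

# Range of the Zero-shot baseline across games.
zero_shot_scores = [s[0] for s in ACCURACY.values()]
zero_shot_range = (min(zero_shot_scores), max(zero_shot_scores))

print("highest:", highest)
print("lowest:", lowest)
print("zero-shot lowest everywhere:", zero_shot_always_lowest)
print("zero-shot range:", zero_shot_range)
```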

### Interpretation

The data suggests that reinforcement learning fine-tuning is a powerful technique for improving performance on logic games: both RL methods outperform the Zero-shot baseline in every game. The margin is usually large, with one clear exception: ALL-Game-RL on Cryptarithm improves only from 0.08 to 0.13.

The key insight lies in the comparison between **Per-Game-RL** (specialized training) and **ALL-Game-RL** (generalized training). The results indicate a trade-off:

* **Specialization Wins for Certain Games:** For Nonogram, Cryptarithm, Magic Square, and Knight & Knaves, specialized training (Per-Game-RL) yields better results. This implies these games may have unique structures or strategies that benefit from focused training, and generalized training might dilute this specificity.

* **Generalization Wins for Others:** For Sudoku, Zebra Puzzle, and Graph, the generalized ALL-Game-RL model performs as well or better, although on Zebra Puzzle the two RL methods are effectively tied (0.96 vs. 0.95). This suggests these games may share underlying logical patterns or reasoning skills that transfer well when trained together, allowing the model to leverage a broader knowledge base.
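
The trade-off can be quantified as the per-game difference between ALL-Game-RL and Per-Game-RL accuracy. The following is a minimal sketch using the bar labels from the chart; `delta`, `specialization_wins`, and `generalization_wins` are hypothetical names chosen for illustration.

```python
# Accuracy per game, in the order (Zero-shot, Per-Game-RL, ALL-Game-RL).
ACCURACY = {
    "Sudoku": (0.18, 0.68, 0.96),
    "Nonogram": (0.09, 0.50, 0.38),
    "Cryptarithm": (0.08, 0.46, 0.13),
    "Magic Square": (0.11, 0.78, 0.50),
    "Zebra Puzzle": (0.27, 0.95, 0.96),
    "Graph": (0.74, 0.87, 0.99),
    "Knight & Knaves": (0.34, 0.93, 0.74),
}

# Positive delta: generalized training scores higher.
# Negative delta: specialized training scores higher.
# Rounded to two decimals to match the precision of the bar labels.
delta = {
    game: round(all_rl - per_rl, 2)
    for game, (_, per_rl, all_rl) in ACCURACY.items()
}

specialization_wins = sorted(g for g, d in delta.items() if d < 0)
generalization_wins = sorted(g for g, d in delta.items() if d > 0)

# Print games from the largest loss to the largest gain under ALL-Game-RL.
for game, d in sorted(delta.items(), key=lambda kv: kv[1]):
    print(f"{game:16s} {d:+.2f}")
```

Sorting by the signed difference places the games where generalized training costs the most accuracy at the top and those where it helps the most at the bottom.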

The chart indicates that there is no one-size-fits-all approach to RL fine-tuning for logic games. Whether specialized or generalized training is the better strategy depends on the specific characteristics of the game domain. Even so, Per-Game-RL improves on Zero-shot in every game, which underscores the value of task-specific fine-tuning over a zero-shot approach.