## Heatmap: Attention/Activation Patterns Across Heads and Layers

### Overview

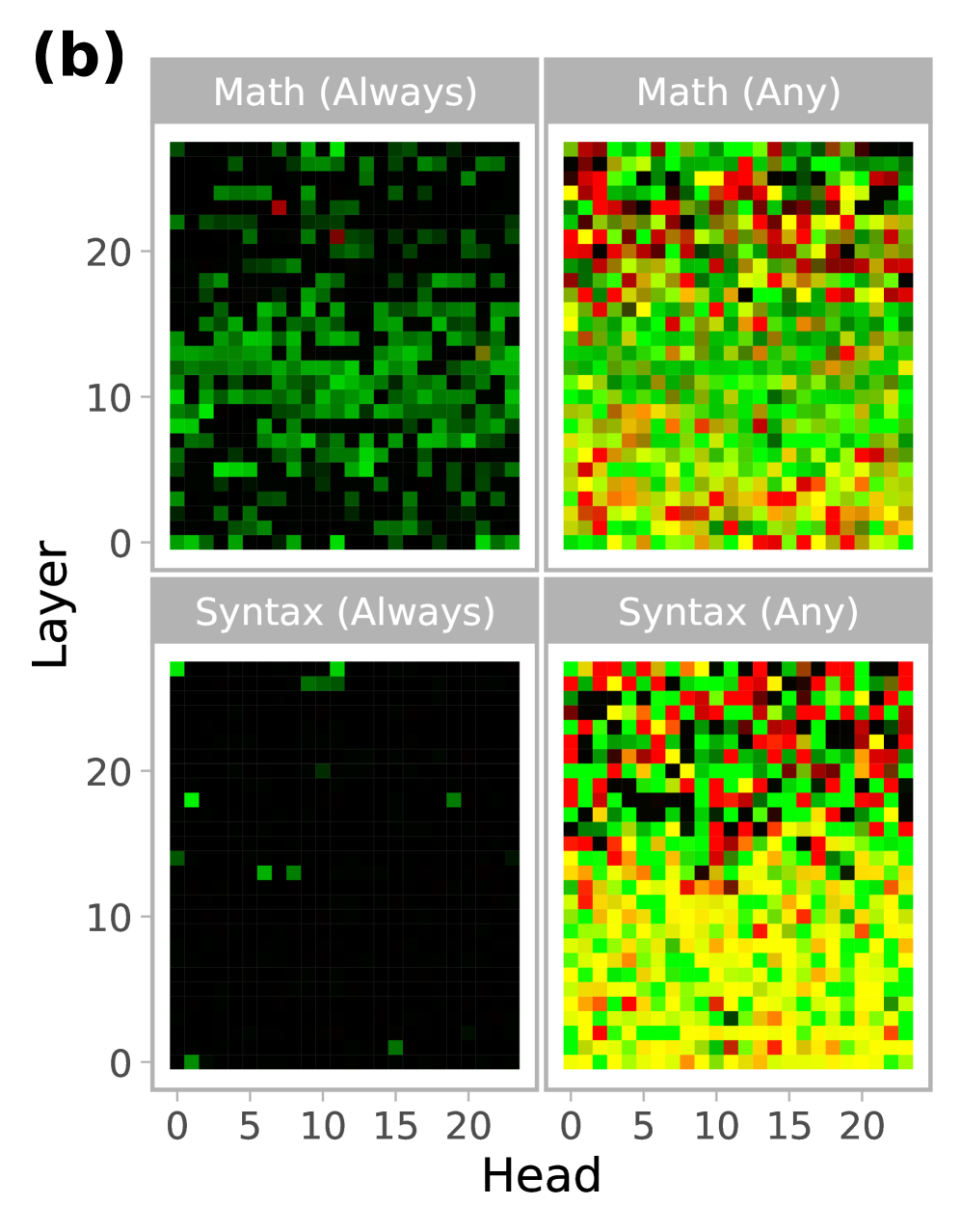

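The image displays a 2x2 grid of heatmaps comparing activation patterns across 21 heads (x-axis) and 21 layers (y-axis) for two tasks (Math and Syntax), each under two conditions (Always and Any). This gives four panels: "Math (Always)", "Math (Any)", "Syntax (Always)", and "Syntax (Any)". Colors range from black (low values) to red/yellow (high values), indicating varying levels of activity or attention. Green also appears in several panels, though its position on the scale is not stated.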

### Components/Axes

- **X-axis (Head)**: Labeled "Head", with integer values from 0 to 20.

- **Y-axis (Layer)**: Labeled "Layer", with integer values from 0 to 20.

- **Quadrants**:

  - **Top-left**: "Math (Always)" – Dominated by dark green/black with sparse red spots.

  - **Top-right**: "Math (Any)" – More varied colors, including red, yellow, and green.

  - **Bottom-left**: "Syntax (Always)" – Mostly black with a few bright green spots.

  - **Bottom-right**: "Syntax (Any)" – Dense red/yellow regions with scattered green/black.

### Detailed Analysis

1. **Math (Always)**:

   - **Trend**: Sparse red/yellow spots (e.g., Head 5, Layer 10; Head 15, Layer 5) against a dark green/black background.

   - **Values**: Most heads/layers show low activity (black/green), with only a few high-activity regions.

2. **Math (Any)**:

   - **Trend**: Broader distribution of red/yellow across heads/layers, especially in lower layers (0–10).

   - **Values**: Higher overall activity compared to "Math (Always)", with clusters in Heads 5–15 and Layers 5–15.

3. **Syntax (Always)**:

   - **Trend**: Extremely sparse green spots (e.g., Head 2, Layer 3; Head 18, Layer 18) on a black background.

   - **Values**: Minimal activity, suggesting limited engagement of heads/layers under this condition.

4. **Syntax (Any)**:

   - **Trend**: Dense red/yellow regions (e.g., Heads 10–15, Layers 5–10) with scattered green/black.

   - **Values**: High activity concentrated in mid-heads and mid-layers, indicating strong engagement in that region.
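Region claims such as "Heads 10–15, Layers 5–10" can be checked numerically when the underlying values are available. The following is a minimal sketch, assuming the data is a 21 x 21 grid indexed as `grid[layer][head]`. The names `region_mean` and `overall_mean` and the demo grid are hypothetical, and the demo values are synthetic rather than read from the figure.

```python
from typing import List, Tuple

Grid = List[List[float]]  # grid[layer][head]; this layout is an assumption


def region_mean(grid: Grid, layers: Tuple[int, int], heads: Tuple[int, int]) -> float:
    """Mean value over an inclusive layer range and an inclusive head range."""
    (l_lo, l_hi), (h_lo, h_hi) = layers, heads
    cells = [grid[l][h]
             for l in range(l_lo, l_hi + 1)
             for h in range(h_lo, h_hi + 1)]
    return sum(cells) / len(cells)


def overall_mean(grid: Grid) -> float:
    """Mean value over every cell of the grid."""
    cells = [v for row in grid for v in row]
    return sum(cells) / len(cells)


# Synthetic 21 x 21 grid (NOT taken from the figure): zeros everywhere,
# except a block of ones at Layers 5-10, Heads 10-15.
demo = [[1.0 if 5 <= l <= 10 and 10 <= h <= 15 else 0.0 for h in range(21)]
        for l in range(21)]

block = region_mean(demo, layers=(5, 10), heads=(10, 15))
print(block, overall_mean(demo))
```

A region mean well above the overall mean would support describing that block as a concentrated cluster. Comparable values would suggest the activity is spread across the grid.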

### Key Observations

- **"Any" vs. "Always"**:

  - "Any" conditions (Math/Syntax) show markedly more red/yellow activity, implying that more heads and layers are involved under the "Any" criterion.

  - "Always" conditions are sparse, suggesting selective or modular activation.

- **Syntax Patterns**:

  - Syntax (Any) exhibits the highest activity, with a dense red/yellow block in Heads 10–15 and Layers 5–10, possibly indicating a dedicated syntax-processing module.

- **Math Patterns**:

  - Math (Any) shows distributed activity, while Math (Always) has isolated high-activity regions, hinting at context-dependent processing.
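The figure does not define "Any" and "Always". One plausible reading, assumed here purely for illustration, is that each (layer, head) cell is flagged per input, and a panel then marks cells flagged for at least one input ("Any") or for every input ("Always"). Under that assumption, the sparser "Always" panels follow directly, as this sketch shows. The names `aggregate` and `density` and the toy masks are hypothetical.

```python
from typing import List

Mask = List[List[bool]]  # mask[layer][head]; this layout is an assumption


def aggregate(masks: List[Mask], mode: str) -> Mask:
    """Combine per-input masks into one layer x head mask.

    mode="any":    a cell is True if it is flagged for at least one input.
    mode="always": a cell is True only if it is flagged for every input.
    """
    if not masks:
        raise ValueError("need at least one mask")
    if mode not in ("any", "always"):
        raise ValueError("mode must be 'any' or 'always'")
    combine = any if mode == "any" else all
    n_layers, n_heads = len(masks[0]), len(masks[0][0])
    return [[combine(m[l][h] for m in masks) for h in range(n_heads)]
            for l in range(n_layers)]


def density(mask: Mask) -> float:
    """Fraction of cells that are True."""
    cells = [c for row in mask for c in row]
    return sum(cells) / len(cells)


# Toy masks for two inputs on a 2-layer x 3-head grid (synthetic data).
mask_a = [[True, False, True], [False, False, True]]
mask_b = [[True, True, False], [False, False, True]]

any_mask = aggregate([mask_a, mask_b], "any")
always_mask = aggregate([mask_a, mask_b], "always")
print(density(any_mask), density(always_mask))
```

Under this reading, every cell in the "Always" mask is also in the "Any" mask, so the "Always" panel can never be denser than its "Any" counterpart. The sparsity gap alone therefore does not establish modularity.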

### Interpretation

The heatmaps suggest that the model's heads and layers exhibit **context-dependent activation**:

- **Math (Any)**: Broader engagement across heads/layers, likely due to the need to handle diverse mathematical contexts.

- **Syntax (Any)**: Highly concentrated activity in mid-heads/layers, possibly reflecting a specialized syntax-processing mechanism that scales with variable input.

- **Syntax (Always)**: Minimal activity, which may indicate that syntax processing is either redundant under constant conditions or handled by a small subset of heads.

These patterns are consistent with the contrast between **modularity and distributed processing** in neural networks, where specific tasks (e.g., syntax) may rely on dedicated modules, while others (e.g., math) require flexible, distributed computation. The stark contrast between the "Always" and "Any" conditions suggests that contextual variability plays a large part in which heads and layers activate.