TECHNICAL ASSET FINGERPRINT

4226da2f57f0b693d2e9ef0c



EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

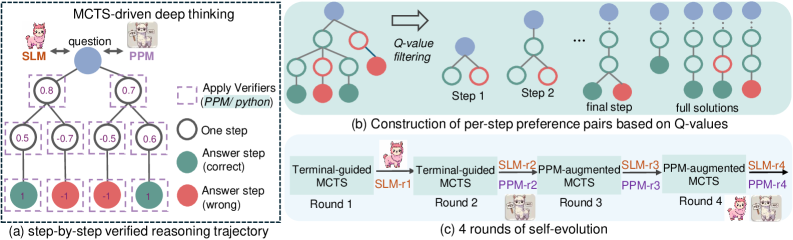

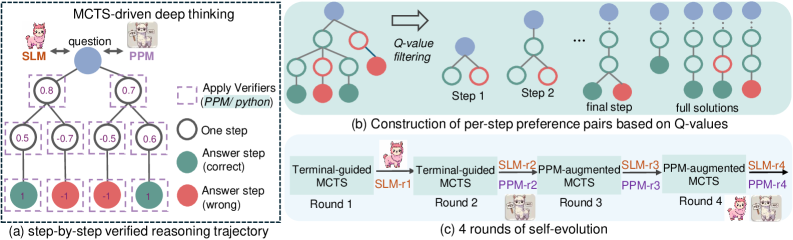

## Diagram: MCTS-driven Deep Thinking and Self-Evolution

### Overview

The image illustrates a Monte Carlo Tree Search (MCTS)-driven deep thinking process, including a step-by-step verified reasoning trajectory, construction of per-step preference pairs based on Q-values, and 4 rounds of self-evolution. The diagram uses tree structures and flowcharts to represent the reasoning and learning processes.

### Components/Axes

* **General Layout:** The image is divided into three sub-diagrams labeled (a), (b), and (c). Each sub-diagram represents a different aspect of the MCTS-driven deep thinking process.

* **Nodes:** The blue node represents the question (the initial state). Green nodes represent correct answer steps, red nodes represent wrong answer steps, and each white node represents a single intermediate reasoning step.

* **Edges:** Edges connect the nodes, representing the flow of reasoning.

* **Labels:**
    * **MCTS-driven deep thinking:** Title of the overall process.
    * **SLM:** Represents one agent, depicted as a pink llama.
    * **PPM:** Represents another agent, depicted as a white llama.
    * **question:** Label near the top-most node in diagram (a).
    * **Apply Verifiers (PPM/python):** Label indicating the application of verifiers.
    * **Q-value filtering:** Label indicating the filtering process based on Q-values.
    * **Step 1, Step 2, final step, full solutions:** Labels indicating the stages of constructing per-step preference pairs.
    * **Terminal-guided MCTS:** Label for the initial MCTS stage.
    * **PPM-augmented MCTS:** Label for the augmented MCTS stage.
    * **Round 1, Round 2, Round 3, Round 4:** Labels indicating the rounds of self-evolution.
    * **SLM-r1, PPM-r2, SLM-r3, PPM-r4:** Labels indicating the agents involved in each round.

### Detailed Analysis

#### (a) Step-by-step Verified Reasoning Trajectory

* **Description:** This sub-diagram shows a tree structure representing the reasoning process. The tree starts with a blue node labeled "question" at the top.

* **Nodes and Values:**
    * The root node (question) has two child nodes with values 0.8 (left) and 0.7 (right).
    * The left child node (0.8) has two children with values 0.5 (left) and -0.7 (right).
    * The right child node (0.7) has two children with values -0.5 (left) and 0.6 (right).
    * The left-most leaf node has a value of 1 and is colored green (correct).
    * The second leaf node has a value of -1 and is colored red (wrong).
    * The third leaf node has a value of -1 and is colored red (wrong).
    * The right-most leaf node has a value of 1 and is colored green (correct).

* **Verifiers:** Dashed purple boxes surround each node, indicating the application of verifiers (PPM/python).
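The tree described in (a) can be written down as a small data structure. The sketch below is illustrative only: the `Node` class and helper names are hypothetical, the node values are copied from the list above, and the assignment of each leaf to a parent is an assumption (the description gives leaves left to right but not their parents).

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Node:
    """One reasoning step; q is the value printed inside the node."""
    q: Optional[float] = None  # None for the question (root) node
    children: List["Node"] = field(default_factory=list)


def build_example_tree() -> Node:
    # Values taken from the description, left to right.
    # Assumption: each second-level node has exactly one leaf below it.
    left = Node(0.8, [Node(0.5, [Node(1.0)]), Node(-0.7, [Node(-1.0)])])
    right = Node(0.7, [Node(-0.5, [Node(-1.0)]), Node(0.6, [Node(1.0)])])
    return Node(None, [left, right])


def greedy_path(root: Node) -> List[float]:
    """Follow the highest-valued child at every level and collect the values."""
    path: List[float] = []
    node = root
    while node.children:
        node = max(node.children, key=lambda child: child.q)
        path.append(node.q)
    return path


print(greedy_path(build_example_tree()))
```

Under these assumptions the greedy walk goes 0.8, then 0.5, then the green leaf with value 1, which is one of the two correct trajectories in the figure.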

#### (b) Construction of Per-Step Preference Pairs Based on Q-Values

* **Description:** This sub-diagram illustrates the construction of per-step preference pairs through Q-value filtering.

* **Process:** The diagram shows a sequence of tree structures, starting with a more complex tree and gradually simplifying to "full solutions".

* **Stages:**
    * The initial tree has a blue root node, followed by green and red nodes.
    * "Q-value filtering" is applied, leading to simpler trees in "Step 1" and "Step 2".
    * The "final step" shows a tree with a blue root node and a green and red child node.
    * "full solutions" shows a linear sequence of blue and green nodes.
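One way to read "per-step preference pairs based on Q-values" is that, at each step, high-Q candidates are paired against low-Q candidates. The diagram does not state the exact selection rule, so the function below is a minimal sketch under that assumption; `preference_pairs` and its parameter `k` are hypothetical names.

```python
from typing import Dict, List, Tuple


def preference_pairs(candidates: Dict[str, float], k: int = 1) -> List[Tuple[str, str]]:
    """Pair the k highest-Q steps (preferred) with the k lowest-Q steps (rejected).

    `candidates` maps a step identifier to its Q-value. This pairing rule is
    an assumption for illustration, not something the diagram specifies.
    """
    ranked = sorted(candidates, key=candidates.get, reverse=True)
    k = min(k, len(ranked) // 2)  # never let a step appear on both sides
    chosen = ranked[:k]
    rejected = ranked[len(ranked) - k:]
    return [(c, r) for c in chosen for r in rejected]


step_candidates = {"step_a": 0.8, "step_b": 0.7, "step_c": -0.5}
print(preference_pairs(step_candidates))
```

Capping `k` at half the number of candidates keeps the preferred and rejected sets disjoint even when few candidates exist at a step.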

#### (c) 4 Rounds of Self-Evolution

* **Description:** This sub-diagram shows a flowchart representing 4 rounds of self-evolution.

* **Process:** Rounds 1 and 2 use "Terminal-guided MCTS"; Rounds 3 and 4 use "PPM-augmented MCTS". Each round produces new versions of the two models (SLM and PPM), as listed under Agents.

* **Agents:**
    * Round 1: Terminal-guided MCTS, SLM-r1
    * Round 2: Terminal-guided MCTS, PPM-r2, SLM-r2
    * Round 3: PPM-augmented MCTS, PPM-r3, SLM-r3
    * Round 4: PPM-augmented MCTS, PPM-r4, SLM-r4
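The round schedule listed above can be encoded as a small lookup. This is only a restatement of the list in code form; the function names are hypothetical and nothing here models the actual training.

```python
from typing import List


def search_mode(round_idx: int) -> str:
    """Search variant used in a given round (1-based), per the list above."""
    if not 1 <= round_idx <= 4:
        raise ValueError("the diagram shows exactly four rounds")
    return "Terminal-guided MCTS" if round_idx <= 2 else "PPM-augmented MCTS"


def models_after(round_idx: int) -> List[str]:
    """Model versions listed for a round; Round 1 lists only an SLM."""
    search_mode(round_idx)  # reuse the range check
    models = [f"SLM-r{round_idx}"]
    if round_idx >= 2:
        models.insert(0, f"PPM-r{round_idx}")
    return models


for r in range(1, 5):
    print(f"Round {r}: {search_mode(r)} -> {', '.join(models_after(r))}")
```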

### Key Observations

* The reasoning trajectory in (a) shows a branching process with associated values at each step.

* The Q-value filtering in (b) simplifies the tree structure, leading to a set of full solutions.

* The self-evolution process in (c) uses Terminal-guided MCTS in the first two rounds and PPM-augmented MCTS in the last two, producing new SLM and PPM versions along the way.

### Interpretation

The diagram illustrates a deep learning approach where an MCTS algorithm is used for reasoning and problem-solving. The step-by-step verification process ensures the quality of the reasoning trajectory. The Q-value filtering helps to refine the solutions. The self-evolution process allows the agents (SLM and PPM) to learn and improve over time through interaction and augmentation. The use of two agents suggests a collaborative or adversarial learning setup. The diagram highlights the iterative and adaptive nature of the learning process.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED


## Diagram: MCTS-driven Deep Thinking Process

### Overview

The image depicts a diagram illustrating the process of MCTS (Monte Carlo Tree Search)-driven deep thinking, specifically focusing on a step-by-step verified reasoning trajectory, the construction of preference pairs based on Q-values, and iterative self-evolution rounds. The diagram uses tree-like structures and icons to represent the reasoning process and model evolution.

### Components/Axes

The diagram is divided into three main sections: (a), (b), and (c). Section (a) shows a step-by-step reasoning trajectory. Section (b) illustrates the construction of preference pairs. Section (c) demonstrates four rounds of self-evolution. The diagram includes icons representing SLM (a pink cartoon character) and PPM (a grey cartoon character). The diagram also uses color-coding: green for correct answer steps, red for incorrect answer steps, blue for nodes in the tree, and varying shades of red/green for Q-values.

### Detailed Analysis or Content Details

**(a) Step-by-Step Verified Reasoning Trajectory:**

* **Initial State:** A question is posed, and the process begins with SLM and PPM.

* **Nodes & Values:** The reasoning process unfolds as a tree. Nodes are represented by circles.
    * The first node (leftmost) has a value of 0.8.
    * The next two nodes have values of 0.7 and 0.7.
    * Following these, there are nodes with values 0.5, -0.7, 0.5, and 0.6.
    * The final nodes represent answer steps: 1 (green, correct), -1 (red, wrong), -1 (red, wrong), and 1 (green, correct).

* **Process Flow:** The diagram shows a flow from the initial question through a series of reasoning steps, culminating in an answer step. A dashed box surrounds the reasoning steps, labeled "One step".

* **Verifiers:** A dashed box labeled "Apply Verifiers (PPM/python)" indicates the use of verifiers to assess the reasoning steps.

**(b) Construction of Per-Step Preference Pairs Based on Q-Values:**

* **Initial Tree:** A tree structure with nodes colored green and red.

* **Q-Value Filtering:** An arrow indicates a filtering process based on Q-values.

* **Step 1 & Step 2:** The diagram shows two steps in the construction of preference pairs.

* **Final Step & Full Solutions:** The process leads to identifying the final step and full solutions.

**(c) 4 Rounds of Self-Evolution:**

* **Round 1:** Terminal-guided MCTS.

* **Round 2:** Terminal-guided SLM-r1.

* **Round 3:** SLM-r2 and PPM-augmented MCTS.

* **Round 4:** SLM-r3 and PPM-augmented MCTS, SLM-r4 and PPM-r4.

* **Icons:** Each round is associated with an icon representing the model (SLM or PPM).

### Key Observations

* The diagram highlights the iterative nature of the MCTS process.

* The use of Q-values suggests a reinforcement learning component.

* The self-evolution rounds demonstrate a process of model refinement.

* The color-coding clearly distinguishes between correct and incorrect reasoning steps.

* The diagram shows a clear progression from initial reasoning to refined solutions.

### Interpretation

The diagram illustrates a sophisticated AI reasoning process that combines MCTS with verification and self-improvement. The process begins with an initial question and explores possible reasoning paths, assigning Q-values to each step. These Q-values are then used to filter and refine the reasoning process, leading to the identification of optimal solutions. The self-evolution rounds suggest a continuous learning loop where the model (SLM and PPM) iteratively improves its reasoning capabilities. The use of verifiers (PPM/python) indicates a mechanism for ensuring the accuracy and reliability of the reasoning process. The diagram suggests a system designed to not only find answers but also to understand *why* those answers are correct, and to improve its reasoning abilities over time. The diagram is a conceptual illustration of a complex algorithm, rather than a presentation of specific data points. It focuses on the *process* of reasoning and improvement, rather than quantifiable results.
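The description mentions exploring reasoning paths and assigning Q-values, but it does not say how the search decides which branch to expand next. A common selection rule in MCTS generally is UCT (upper confidence bound applied to trees); the sketch below shows that rule as an assumption, not as something read off the diagram. The function name and the exploration constant `c` are illustrative.

```python
import math


def uct_score(q_sum: float, visits: int, parent_visits: int, c: float = 1.4) -> float:
    """Standard UCT: mean value plus an exploration bonus.

    q_sum is the total reward accumulated through the child, visits is how
    often the child was visited, parent_visits is the parent's visit count.
    """
    if visits == 0:
        return math.inf  # unvisited children are tried first
    exploit = q_sum / visits
    explore = c * math.sqrt(math.log(parent_visits) / visits)
    return exploit + explore


# A rarely visited child can outrank a well-explored one with a higher mean.
print(uct_score(q_sum=8.0, visits=10, parent_visits=12))
print(uct_score(q_sum=0.7, visits=1, parent_visits=12))
```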


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Diagram: MCTS-Driven Deep Thinking and Self-Evolution Process

### Overview

The image is a technical diagram illustrating a multi-stage process for improving AI model reasoning through Monte Carlo Tree Search (MCTS) and iterative self-evolution. It is divided into three main panels: (a) a step-by-step verified reasoning trajectory, (b) the construction of preference pairs from Q-values, and (c) a four-round self-evolution cycle. The diagram uses a combination of tree structures, flowcharts, and labeled components to explain the methodology.

### Components/Axes

The diagram is segmented into three distinct panels, each with its own components:

**Panel (a): step-by-step verified reasoning trajectory**

* **Location:** Left side of the image.

* **Main Structure:** A tree diagram originating from a central blue node labeled "question".

* **Key Labels & Components:**
    * **Top:** "MCTS-driven deep thinking" (Title).
    * **Left of Tree:** "SLM" with a cat icon.
    * **Right of Tree:** "PPM" with a robot icon.
    * **Tree Nodes:** Circles containing numerical values (e.g., 0.8, 0.7, 0.5, -0.7, -0.9, 0.6).
    * **Legend (Right of Tree):**
        * Dashed purple box: "Apply Verifiers (PPM/python)"
        * White circle: "One step"
        * Green circle: "Answer step (correct)"
        * Red circle: "Answer step (wrong)"
    * **Bottom Label:** "(a) step-by-step verified reasoning trajectory"

**Panel (b): Construction of per-step preference pairs based on Q-values**

* **Location:** Top-right quadrant.

* **Main Structure:** A horizontal sequence of simplified tree structures showing progression.

* **Key Labels & Components:**
    * **Top Arrow:** "Q-value filtering" pointing from left to right.
    * **Sequence Labels:** "Step 1", "Step 2", "final step", "full solutions".
    * **Node Colors:** Blue (root), Green (correct), Red (incorrect). The proportion of green nodes increases from left to right.
    * **Bottom Label:** "(b) Construction of per-step preference pairs based on Q-values"

**Panel (c): 4 rounds of self-evolution**

* **Location:** Bottom-right quadrant.

* **Main Structure:** A horizontal flowchart showing four iterative rounds.

* **Key Labels & Components:**
    * **Round 1:** "Terminal-guided MCTS" -> "SLM-r1" (with cat icon).
    * **Round 2:** "Terminal-guided MCTS" -> "SLM-r2" -> "PPM-augmented" (with robot icon).
    * **Round 3:** "SLM-r3" -> "PPM-augmented" (with robot icon).
    * **Round 4:** "SLM-r4" -> "PPM-augmented" (with robot icon).
    * **Icons:** A cat icon (labeled "SLM") and a robot icon (labeled "PPM") appear at various stages.
    * **Bottom Label:** "(c) 4 rounds of self-evolution"

### Detailed Analysis

**Panel (a) Analysis:**

* The process starts with a "question" node.

* The tree expands with steps assigned numerical values (likely Q-values or confidence scores). Values range from positive (e.g., 0.8, 0.7) to negative (e.g., -0.7, -0.9).

* Dashed purple boxes ("Apply Verifiers") enclose specific nodes and their children, indicating verification is applied at those decision points.

* The terminal nodes are classified as correct (green) or wrong (red). The final row shows a mix: two green (correct) and two red (wrong) answer steps.

**Panel (b) Analysis:**

* This panel visualizes a filtering process. It starts with a tree containing many red (incorrect) nodes.

* Through "Q-value filtering" across sequential steps, the incorrect (red) branches are pruned.

* By the "final step" and "full solutions", the tree is dominated by green (correct) nodes, demonstrating the selection of higher-quality reasoning paths.
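The pruning described for panel (b) can be sketched as a recursive filter that drops any branch whose Q-value falls below a cutoff. The cutoff of 0.0, the dictionary layout, and the function name `prune` are assumptions for illustration; the diagram does not give a threshold.

```python
from typing import Any, Dict


def prune(node: Dict[str, Any], threshold: float = 0.0) -> Dict[str, Any]:
    """Return a copy of `node` keeping only children with q >= threshold.

    A pruned child takes its whole subtree with it.
    """
    kept = [
        prune(child, threshold)
        for child in node.get("children", [])
        if child["q"] >= threshold
    ]
    return {"q": node["q"], "children": kept}


tree = {
    "q": None,  # the question node carries no value
    "children": [
        {"q": 0.8, "children": [{"q": 0.5}, {"q": -0.7}]},
        {"q": -0.2, "children": [{"q": 0.9}]},
    ],
}
print(prune(tree))
```

Note that the 0.9 node is removed as well, because its parent fails the cutoff; whether that is desirable depends on how the Q-values were estimated.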

**Panel (c) Analysis:**

* This outlines an iterative training or refinement loop.

* **Round 1:** Uses "Terminal-guided MCTS" to produce an initial Small Language Model (SLM-r1).

* **Round 2:** The process is repeated, but the output is now augmented by a process preference model (PPM), creating SLM-r2.

* **Rounds 3 & 4:** The cycle continues, with each new SLM version (r3, r4) being refined using the PPM-augmented data from the previous round. The icons suggest the SLM (cat) and PPM (robot) are distinct models collaborating.

### Key Observations

1. **Verification Integration:** Panel (a) explicitly shows that verification (via PPM/python) is not applied uniformly but at specific, likely critical, decision points in the reasoning tree.

2. **Value-Driven Pruning:** Panel (b) clearly links the pruning of incorrect reasoning paths to Q-values, showing a direct mechanism for quality improvement.

3. **Evolutionary Progression:** Panel (c) depicts a clear, staged evolution where the system's capability is built incrementally over four rounds, with the PPM playing an increasingly integral role after the first round.

4. **Visual Coding Consistency:** The color scheme (green=correct, red=wrong) is consistent across panels (a) and (b), creating a coherent visual language for success and failure.

### Interpretation

This diagram outlines a sophisticated framework for enhancing the reasoning capabilities of a Small Language Model (SLM). The core idea is to move beyond simple, single-path generation.

* **What it demonstrates:** It shows a method that combines **exploration** (via MCTS to generate multiple reasoning paths), **evaluation** (using verifiers and Q-values to score steps), and **selection** (pruning poor paths to construct preference pairs). This curated data is then used in a **self-evolution loop**, where the model progressively learns from its own refined outputs, augmented by a stronger Preference Model (PPM).

* **How elements relate:** Panel (a) is the core reasoning engine. Panel (b) is the data processing layer that converts the engine's outputs into training signals. Panel (c) is the macro-level training regimen that uses these signals to iteratively improve the base model (SLM). The PPM acts as a judge or guide throughout.

* **Notable Implications:** The process aims to produce more reliable and accurate model outputs by systematically identifying and reinforcing correct reasoning chains while discarding flawed ones. The "self-evolution" aspect suggests a goal of reducing reliance on external human feedback over time, as the model learns to improve using its own verified trajectories. The use of "Terminal-guided MCTS" in the first round implies an initial strategy to ensure the search process is directed toward plausible final answers.

**Language Note:** The diagram contains English text and labels. The cat and robot icons are symbolic and do not contain translatable text.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Diagram: MCTS-Driven Deep Thinking Framework

### Overview

The image depicts a multi-stage technical framework for MCTS (Monte Carlo Tree Search)-driven deep thinking, visualized through three interconnected components:

1. **Step-by-Step Verified Reasoning Trajectory** (a)

2. **Per-Step Preference Pair Construction** (b)

3. **Self-Evolution Process** (c)

The framework emphasizes iterative reasoning, preference-based filtering, and self-improvement through multiple rounds of evolution.

---

### Components/Axes

#### (a) Step-by-Step Verified Reasoning Trajectory

- **Nodes**:
    - **Question** (central blue node)
    - **SLM** (left, pink icon)
    - **PPM** (right, purple icon)
- **Answer Steps**:
    - Correct (green circles)
    - Wrong (red circles)
- **Intermediate Values**: Numerical scores (e.g., 0.8, 0.7, 0.5, -0.7, -0.5, 0.6, 1, -1)
- **Arrows**:
    - Purple dashed lines (reasoning steps)
    - Solid arrows (evolutionary flow)
- **Legend**:
    - Green circles = Correct answer steps
    - Red circles = Wrong answer steps
    - Positioned on the right side of the diagram.

#### (b) Per-Step Preference Pair Construction

- **Nodes**:
    - **Q-Value Filtering** (central blue node)
    - **Step 1**, **Step 2**, **Final Step** (green/red circles)
    - **Full Solutions** (blue nodes)
- **Arrows**:
    - Rightward flow (→) indicating progression through steps.
- **Legend**:
    - Blue circles = Q-value filtering
    - Green circles = Correct steps
    - Red circles = Incorrect steps
    - Positioned on the right side of the diagram.

#### (c) Four Rounds of Self-Evolution

- **Rounds**:
    - **Round 1**: Terminal-guided MCTS → SLM-r1 → Terminal-guided MCTS
    - **Round 2**: SLM-r2 → PPM-augmented MCTS → SLM-r2
    - **Round 3**: PPM-augmented MCTS → SLM-r3 → PPM-augmented MCTS
    - **Round 4**: SLM-r4 → PPM-augmented MCTS → SLM-r4
- **Icons**:
    - Pink (SLM) and purple (PPM) icons alternate across rounds.
- **Legend**:
    - Orange = Terminal-guided MCTS
    - Purple = PPM-augmented MCTS
    - Positioned at the bottom of the diagram.

---

### Detailed Analysis

#### (a) Step-by-Step Verified Reasoning Trajectory

- **Flow**:
    - The question (blue node) branches into SLM and PPM nodes.
    - Intermediate values (e.g., 0.8, 0.7) represent confidence scores or evaluation metrics.
    - Answer steps (green/red) indicate correctness, with green nodes having positive values (1) and red nodes negative (-1).
- **Key Values**:
    - Highest value: 1 (correct answer step).
    - Lowest value: -1 (wrong answer step).
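If the intermediate scores are derived from the terminal values (+1 for a correct answer step, -1 for a wrong one), one simple way to obtain them is to average the terminal reward over every trajectory that passes through a step. The diagram does not state how the scores are computed, so this is a sketch under that assumption; `backup` and the step identifiers are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


def backup(trajectories: List[Tuple[List[str], int]]) -> Dict[str, float]:
    """Mean terminal reward per step.

    Each trajectory is (step_ids, terminal_reward) with reward +1 or -1.
    """
    total: Dict[str, float] = defaultdict(float)
    visits: Dict[str, int] = defaultdict(int)
    for steps, reward in trajectories:
        for step in steps:
            total[step] += reward
            visits[step] += 1
    return {step: total[step] / visits[step] for step in total}


rollouts = [
    (["s1", "s2"], 1),   # reaches a correct answer
    (["s1", "s3"], -1),  # reaches a wrong answer
    (["s1", "s2"], 1),
]
print(backup(rollouts))
```

With these rollouts, `s2` scores 1.0, `s3` scores -1.0, and the shared step `s1` lands in between, which matches the pattern of values between -1 and 1 described for panel (a).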

#### (b) Per-Step Preference Pair Construction

- **Q-Value Filtering**:
    - Filters nodes based on Q-values (not explicitly quantified but implied by node pruning).
    - Steps 1–2 show progressive refinement, culminating in "full solutions."
- **Trend**:
    - Nodes reduce from multiple branches to a single path in later steps.

#### (c) Four Rounds of Self-Evolution

- **Iterative Process**:
    - Each round alternates between SLM and PPM-augmented MCTS.
    - "Terminal-guided" steps (orange) anchor the process, while "PPM-augmented" steps (purple) introduce external enhancements.
- **Trend**:
    - Increasing complexity across rounds, with PPM augmentations becoming more frequent.

---

### Key Observations

1. **Color Consistency**:
    - Green (correct) and red (wrong) nodes in (a) and (b) align with the legend.
    - Orange (Terminal-guided) and purple (PPM-augmented) nodes in (c) match their legend labels.
2. **Flow Direction**:
    - Arrows in (a) and (b) indicate left-to-right progression.
    - (c) shows cyclical evolution across rounds.
3. **Numerical Values**:
    - Values in (a) range from -1 to 1, suggesting a confidence or error metric.
    - No explicit Q-values in (b), but node pruning implies threshold-based filtering.

---

### Interpretation

1. **Framework Purpose**:
    - The diagram illustrates a self-improving reasoning system where MCTS is guided by an SLM (small language model) and a PPM (process preference model).
    - Step verification (a) checks correctness, while Q-value filtering (b) refines solutions iteratively.
2. **Self-Evolution Mechanism**:
    - Rounds 1–4 demonstrate a two-model approach: the SLM generates reasoning steps, while the PPM scores them.
    - Terminal-guided steps act as checkpoints, ensuring alignment with ground truth.
3. **Notable Patterns**:
    - Negative values (-0.7, -0.5, -1) in (a) highlight error-prone steps, which are likely filtered out in (b).
    - The final solutions in (b) represent optimized paths after multiple filtering stages.
4. **Technical Implications**:
    - The framework pairs a step generator (SLM) with a step scorer (PPM) to make MCTS more effective.
    - Self-evolution suggests adaptive learning, where each round incorporates feedback from prior steps.

This diagram provides a blueprint for building robust, self-improving reasoning systems, emphasizing verification, filtering, and iterative refinement.
