# Technical Document Extraction: Scaling Strategies for Neural Network Inference

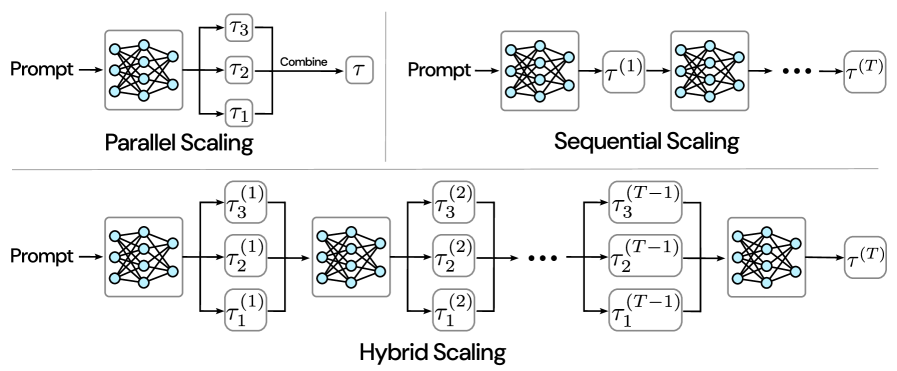

This document provides a detailed technical breakdown of an image illustrating three architectural strategies for scaling inference-time compute in neural networks: **Parallel Scaling**, **Sequential Scaling**, and **Hybrid Scaling**.

---

## 1. Component Isolation

The image is divided into three distinct regions separated by thin grey lines:

* **Top-Left Region:** Parallel Scaling diagram.

* **Top-Right Region:** Sequential Scaling diagram.

* **Bottom Region:** Hybrid Scaling diagram.

### Common Visual Elements

* **Prompt:** The input text/data entering the system.

* **Neural Network Icon:** Represented by a grey box containing a blue-node graph structure (3 layers: 3 nodes, 4 nodes, 3 nodes).

* **$\tau$ (Tau):** Represents a generated output or "thought" trace.

* **Arrows:** Indicate the directional flow of data and processing.

---

## 2. Detailed Diagram Analysis

### A. Parallel Scaling (Top-Left)

This diagram illustrates a "one-to-many-to-one" flow where multiple outputs are generated simultaneously from a single input.

* **Flow Description:**

1. A **Prompt** is fed into a single **Neural Network**.

2. The network generates three parallel outputs labeled $\tau_1$, $\tau_2$, and $\tau_3$.

3. These three outputs are merged via a path labeled **"Combine"**.

4. The final result is a single consolidated output labeled $\tau$.

* **Key Trend:** Horizontal expansion followed by convergence. This suggests an approach such as "Best-of-N" selection or ensembling, where multiple candidates are sampled and then reduced to a single output.
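
The parallel flow above can be sketched in a few lines of Python. This is a minimal illustration, not the method behind the image: the diagram does not say how "Combine" works, so majority voting is assumed here, and `parallel_scale` and `toy_sample` are hypothetical names standing in for a real model call.

```python
from collections import Counter
from typing import Callable, List


def parallel_scale(prompt: str, sample: Callable[[str, int], str], n: int = 3) -> str:
    """Draw n traces for one prompt, then combine them into a single output."""
    traces: List[str] = [sample(prompt, i) for i in range(n)]  # tau_1 ... tau_n
    # "Combine" step (assumed): keep the most frequent trace.
    # Ties resolve to the trace that was seen first.
    return Counter(traces).most_common(1)[0][0]


def toy_sample(prompt: str, index: int) -> str:
    """Hypothetical stand-in for a stochastic model call."""
    return ["42", "41", "42"][index % 3]


result = parallel_scale("What is 6 * 7?", toy_sample, n=3)
print(result)  # prints 42
```

Other combine rules (for example, picking the highest-scoring candidate with a separate scorer) would slot into the same place as the `Counter` call.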

### B. Sequential Scaling (Top-Right)

This diagram illustrates a linear, iterative flow where the output of one step becomes the input for the next.

* **Flow Description:**

1. A **Prompt** is fed into a **Neural Network**.

2. The network produces an initial output labeled $\tau^{(1)}$.

3. $\tau^{(1)}$ is fed into a second **Neural Network** (or a subsequent iteration of the same network).

4. The process continues through an ellipsis (**...**), indicating multiple sequential steps.

5. The final output is labeled $\tau^{(T)}$, where $T$ represents the total number of steps.

* **Key Trend:** Linear progression. This suggests a "Chain-of-Thought" or iterative refinement approach where the model builds upon its previous reasoning.
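
A minimal sketch of the sequential flow follows. It assumes that each step receives only the previous trace, as the arrows in the diagram suggest; whether the original prompt is also passed forward is not shown. `sequential_scale` and `toy_step` are hypothetical names.

```python
from typing import Callable


def sequential_scale(prompt: str, step: Callable[[str], str], num_steps: int) -> str:
    """Apply the model num_steps times, feeding each output into the next call."""
    if num_steps < 1:
        raise ValueError("num_steps must be at least 1")
    trace = step(prompt)  # tau^(1)
    for _ in range(num_steps - 1):
        trace = step(trace)  # tau^(t+1) is produced from tau^(t)
    return trace  # tau^(T)


def toy_step(text: str) -> str:
    """Hypothetical stand-in for one refinement pass: appends a marker."""
    return text + "."


final_trace = sequential_scale("draft", toy_step, num_steps=3)
print(final_trace)  # prints draft...
```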

### C. Hybrid Scaling (Bottom)

This diagram illustrates a complex architecture that combines both parallel sampling and sequential refinement.

* **Flow Description:**

1. **Initial Step:** A **Prompt** enters a **Neural Network**, which generates three parallel outputs: $\tau_1^{(1)}$, $\tau_2^{(1)}$, and $\tau_3^{(1)}$.

2. **First Transition:** These three outputs are fed into a second **Neural Network** stage.

3. **Second Step:** This network generates a new set of three parallel outputs: $\tau_1^{(2)}$, $\tau_2^{(2)}$, and $\tau_3^{(2)}$.

4. **Iterative Phase:** The process continues through an ellipsis (**...**).

5. **Final Transition:** The sequence reaches stage $T-1$, producing $\tau_1^{(T-1)}$, $\tau_2^{(T-1)}$, and $\tau_3^{(T-1)}$.

6. **Conclusion:** These are fed into a final **Neural Network** to produce the terminal output $\tau^{(T)}$.

* **Key Trend:** A "grid" or "lattice" flow. This suggests a "Tree-of-Thought" or "Graph-of-Thought" approach where the model explores multiple reasoning paths at every step of a multi-step process.
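
The hybrid flow can be sketched as a loop over stages, each of which maps a whole set of traces to a new set. This is an illustration under stated assumptions: the image does not specify how a stage consumes its inputs or how the last stage collapses them, so `refine` and `finalize` are hypothetical callables, and traces are modeled as integers purely to keep the toy example checkable.

```python
from typing import Callable, List


def hybrid_scale(
    prompt: str,
    sample: Callable[[str, int], int],
    refine: Callable[[List[int]], List[int]],
    finalize: Callable[[List[int]], int],
    width: int = 3,
    num_steps: int = 4,
) -> int:
    """Run `width` parallel traces through stages 1..T-1, then collapse at stage T."""
    if num_steps < 2:
        raise ValueError("num_steps must be at least 2")
    # Stage 1: the prompt yields `width` parallel traces tau_n^(1).
    traces = [sample(prompt, n) for n in range(width)]
    # Stages 2 .. T-1: each stage sees all current traces and emits a new set.
    for _ in range(2, num_steps):
        traces = refine(traces)
    # Stage T: the final network produces the single output tau^(T).
    return finalize(traces)


# Hypothetical toy components: traces are integer "scores".
def toy_sample(prompt: str, n: int) -> int:
    return n


def toy_refine(traces: List[int]) -> List[int]:
    return [t + 1 for t in traces]


answer = hybrid_scale("prompt", toy_sample, toy_refine, max, width=3, num_steps=4)
print(answer)  # prints 4
```

With `width=1` the loop degenerates to the sequential case, and with `num_steps=2` it degenerates to the parallel case, which matches the idea that the hybrid diagram spans both axes.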

---

## 3. Textual Data Summary

| Label | Context | Meaning/Function |
| :--- | :--- | :--- |
| **Prompt** | Input | The starting query or data. |
| **Combine** | Parallel Scaling | The operation that merges multiple samples into one. |
| **$\tau_n$** | Parallel Scaling | Individual samples (1, 2, 3). |
| **$\tau^{(t)}$** | Sequential Scaling | The state of the output at time step $t$. |
| **$\tau_n^{(t)}$** | Hybrid Scaling | The $n$-th parallel sample at time step $t$. |
| **Parallel Scaling** | Header/Title | Strategy based on width (sampling). |
| **Sequential Scaling** | Header/Title | Strategy based on depth (iteration). |
| **Hybrid Scaling** | Header/Title | Strategy combining width and depth. |

---

## 4. Technical Summary

The image maps out the design space for increasing computational effort during inference (Inference-time Scaling).

* **Parallel Scaling** increases compute by sampling more candidates.

* **Sequential Scaling** increases compute by allowing more steps of reasoning.

* **Hybrid Scaling** increases compute along both axes by maintaining multiple reasoning paths across multiple steps.
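
As a rough comparison, one can count the traces each strategy generates. The formulas below are a sketch under assumptions the image does not state: each generated trace costs one unit, the "Combine" operation is free, and $N$ (parallel width) and $T$ (number of steps) are the only parameters.

$$
C_{\text{parallel}} = N, \qquad C_{\text{sequential}} = T, \qquad C_{\text{hybrid}} = N\,(T-1) + 1
$$

The hybrid count follows from the diagram: stages $1$ through $T-1$ each produce $N$ traces, and the final stage produces the single output $\tau^{(T)}$. With $N = 3$ as drawn, this gives $3(T-1) + 1 = 3T - 2$ traces.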