# Technical Document Extraction: Scaling Methodologies Diagram

## Overview

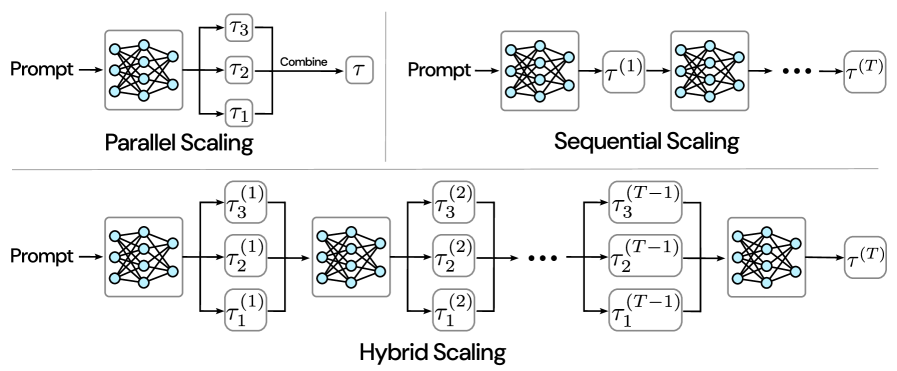

The image illustrates three scaling methodologies for processing prompts through neural network transformations. Each methodology is represented as a flowchart with distinct architectural patterns.

---

## 1. Parallel Scaling

### Components:

- **Input**: Prompt → Neural Network (interconnected nodes)

- **Transformations**:

  - τ₁, τ₂, τ₃ (parallel processing branches)

- **Output**: Combined → τ (final output)

### Flow:

1. Prompt enters a neural network

2. Network output splits into three parallel transformation paths:

   - τ₁ (bottom)

   - τ₂ (middle)

   - τ₃ (top)

3. Results from all three transformations are combined

4. Final output labeled as τ
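The parallel flow above can be sketched in a few lines of Python. This is a minimal illustration, not the diagram's actual method: the names `parallel_scale`, `combine`, and `toy_branch` are hypothetical, the neural network is replaced by a toy function, and majority voting is only one assumed choice for the "Combine" step, which the diagram does not specify.

```python
from collections import Counter
from typing import Callable, List

# Hypothetical type: a branch maps (prompt, branch_index) to one candidate τ_i.
Branch = Callable[[str, int], str]


def combine(candidates: List[str]) -> str:
    """Majority vote: an assumed combine rule, not taken from the diagram."""
    return Counter(candidates).most_common(1)[0][0]


def parallel_scale(prompt: str, branch: Branch, n_branches: int = 3) -> str:
    """Produce τ_1..τ_n independently, then combine them into a single τ."""
    candidates = [branch(prompt, i) for i in range(n_branches)]
    return combine(candidates)


def toy_branch(prompt: str, index: int) -> str:
    """Stand-in for the neural network: two of the three branches agree."""
    return "42" if index != 1 else "41"


result = parallel_scale("What is 6 * 7?", toy_branch)
print(result)
```

Because the branches never read each other's output, the list comprehension could be replaced by concurrent calls without changing the result.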

---

## 2. Sequential Scaling

### Components:

- **Input**: Prompt → Neural Network

- **Transformations**:

  - τ^(1), τ^(2), ..., τ^(T) (temporal sequence)

- **Output**: Final τ^(T)

### Flow:

1. Prompt enters a neural network

2. Sequential application of transformations:

   - τ^(1) → τ^(2) → ... → τ^(T)

3. Each transformation builds on previous output

4. Final output labeled as τ^(T)
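The sequential flow above amounts to a loop in which each stage receives the previous stage's output. The sketch below is illustrative only: `sequential_scale` and `toy_step` are hypothetical names, and the toy step function stands in for whatever the neural network does at each stage.

```python
from typing import Callable, List, Optional

# Hypothetical type: a step maps (prompt, previous output or None) to the next output.
Step = Callable[[str, Optional[str]], str]


def sequential_scale(prompt: str, step: Step, n_steps: int) -> List[str]:
    """Return [τ^(1), ..., τ^(T)], where each entry is built from the one before."""
    outputs: List[str] = []
    previous: Optional[str] = None
    for _ in range(n_steps):
        previous = step(prompt, previous)
        outputs.append(previous)
    return outputs


def toy_step(prompt: str, previous: Optional[str]) -> str:
    """Stand-in for the neural network: start from the prompt, then extend it."""
    return prompt if previous is None else previous + "+"


outputs = sequential_scale("x", toy_step, n_steps=3)
final = outputs[-1]  # τ^(T)
print(outputs, final)
```

Unlike the parallel case, the stages cannot run concurrently, since stage t needs the result of stage t-1.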

---

## 3. Hybrid Scaling

### Components:

- **Input**: Prompt → Neural Network

- **Transformations**:

  - τ^(1), τ^(2), ..., τ^(T-1) (temporal sequence with parallel processing)

- **Output**: Final τ^(T)

### Flow:

1. Prompt enters a neural network

2. Temporal sequence of transformations:

   - Stage 1: τ^(1) applied to all three parallel paths (τ₁^(1), τ₂^(1), τ₃^(1))

   - Stage 2: τ^(2) applied to all three parallel paths (τ₁^(2), τ₂^(2), τ₃^(2))

   - ...

   - Stage T-1: τ^(T-1) applied to all three parallel paths

3. Final transformation τ^(T) applied to combined outputs

4. Final output labeled as τ^(T)
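One way to read the hybrid flow above is as a loop over stages 1 to T-1 that updates every parallel path, followed by a combine step and a final transformation. The sketch below follows that reading under stated assumptions: `hybrid_scale`, `toy_step`, and the use of `max` as the combine rule are all hypothetical, and integers stand in for the outputs τᵢ^(t).

```python
from typing import Callable, List, Optional

# Hypothetical types; integers stand in for the outputs τ_i^(t).
Step = Callable[[str, int, Optional[int]], int]   # (prompt, path index, previous) -> next
Combine = Callable[[List[int]], int]
Finalize = Callable[[int], int]                   # plays the role of τ^(T)


def hybrid_scale(prompt: str, step: Step, combine: Combine, finalize: Finalize,
                 n_paths: int = 3, n_stages: int = 3) -> int:
    """Advance n_paths in parallel through stages 1..T-1, then combine and finalize."""
    paths: List[Optional[int]] = [None] * n_paths
    for _ in range(1, n_stages):  # stages 1 .. T-1
        paths = [step(prompt, i, prev) for i, prev in enumerate(paths)]
    merged = combine([p for p in paths if p is not None])
    return finalize(merged)


def toy_step(prompt: str, index: int, previous: Optional[int]) -> int:
    """Stand-in for the network: start at the path index, then add one per stage."""
    return index if previous is None else previous + 1


# With T = 3: stage 1 gives [0, 1, 2], stage 2 gives [1, 2, 3].
result = hybrid_scale("q", toy_step, combine=max, finalize=lambda v: v * 10)
print(result)
```

Within a stage the paths are independent, so only the stage-to-stage dependency forces sequential execution.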

---

## Key Observations

1. **Notation Consistency**:

   - Subscript τᵢ (τ₁, τ₂, τ₃) = parallel processing units

   - Superscript τ^(n) = temporal sequence stages

2. **Architectural Patterns**:

   - Parallel: Horizontal processing

   - Sequential: Vertical stacking

   - Hybrid: Combination of both

3. **Temporal Progression**:

   - Sequential and Hybrid methods show explicit time-dependent progression (τ^(1) → τ^(T))

4. **Combination Logic**:

   - Parallel method uses an explicit "Combine" step

   - Hybrid method combines the outputs of the parallel paths before applying the final transformation τ^(T)

---

## Diagram Structure

- **X-Axis**: Represents processing stages (parallel branches or temporal sequence)

- **Y-Axis**: Represents transformation depth (individual τ units or temporal stages)

- **Color Coding**: No explicit color legend present; all components use default diagram colors

---

## Technical Implications

1. **Parallel Scaling**:

   - Best for independent processing paths

   - Requires an explicit combination mechanism

2. **Sequential Scaling**:

   - Suitable for time-dependent transformations

   - Cumulative effect across stages, since each stage builds on the previous output

3. **Hybrid Scaling**:

   - Balances parallelism and temporal progression

   - More complex to implement; may perform better, but the diagram gives no evidence either way

---

## Limitations

- No quantitative performance metrics provided

- No implementation details for transformation functions

- Assumes perfect parallelization capabilities