TECHNICAL ASSET FINGERPRINT

4235727da877c8e532e70f16

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Reasoning Examples: Arithmetic vs. Factual

### Overview

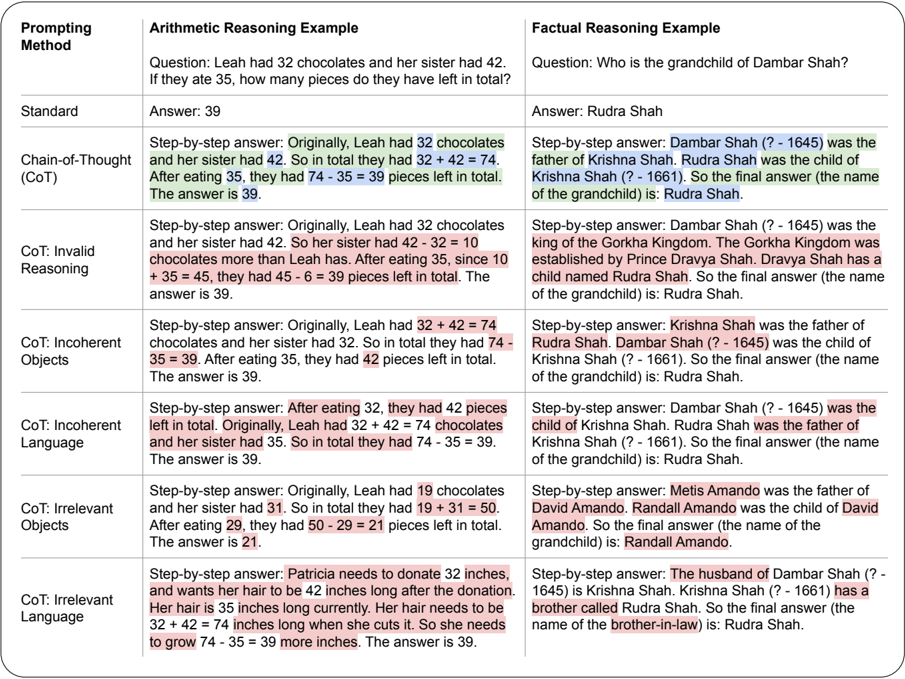

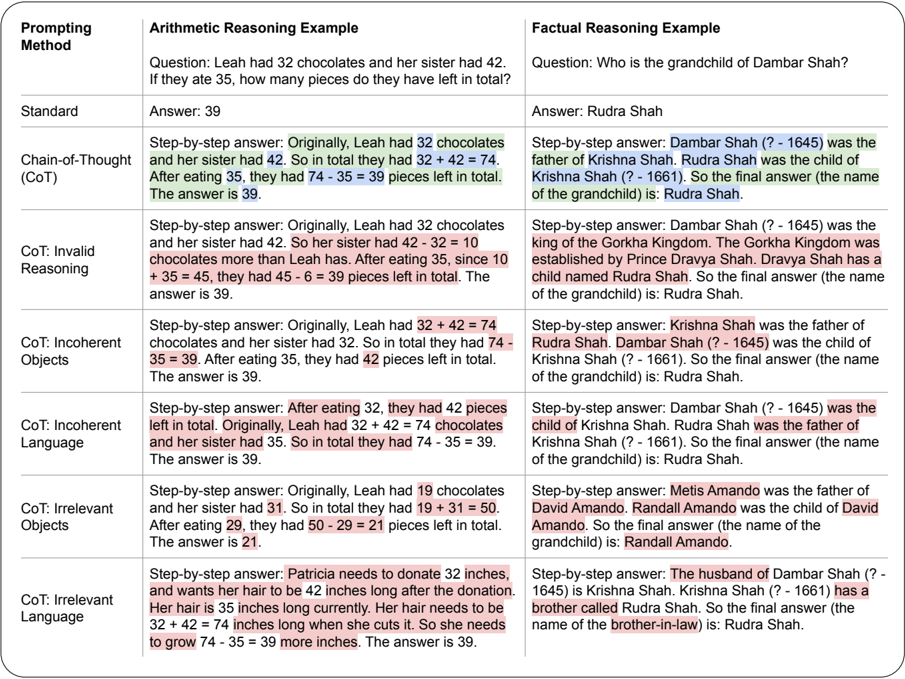

The image presents examples of arithmetic and factual reasoning problems, demonstrating different prompting methods and their corresponding solutions. The examples highlight the use of Chain-of-Thought (CoT) prompting and variations, including invalid reasoning, incoherent objects/language, and irrelevant objects/language.

### Components/Axes

The image is structured into two main columns:

1. **Arithmetic Reasoning Example**: Presents math problems.

2. **Factual Reasoning Example**: Presents knowledge-based questions.

Each column is further divided into rows based on the "Prompting Method" used. The prompting methods are:

* Standard

* Chain-of-Thought (CoT)

* CoT: Invalid Reasoning

* CoT: Incoherent Objects

* CoT: Incoherent Language

* CoT: Irrelevant Objects

* CoT: Irrelevant Language

### Detailed Analysis

**1. Arithmetic Reasoning Example**

* **Standard**:

* Question: Leah had 32 chocolates and her sister had 42. If they ate 35, how many pieces do they have left in total?

* Answer: 39

* **Chain-of-Thought (CoT)**:

* Step-by-step answer: Originally, Leah had 32 chocolates and her sister had 42. So in total they had 32 + 42 = 74. After eating 35, they had 74 - 35 = 39 pieces left in total. The answer is 39.

* **CoT: Invalid Reasoning**:

* Step-by-step answer: Originally, Leah had 32 chocolates and her sister had 42. So her sister had 42 - 32 = 10 chocolates more than Leah has. After eating 35, since 10 + 35 = 45, they had 45 - 6 = 39 pieces left in total. The answer is 39.

* **CoT: Incoherent Objects**:

* Step-by-step answer: Originally, Leah had 32 + 42 = 74 chocolates and her sister had 32. So in total they had 74 - 35 = 39. After eating 35, they had 42 pieces left in total. The answer is 39.

* **CoT: Incoherent Language**:

* Step-by-step answer: After eating 32, they had 42 pieces left in total. Originally, Leah had 32 + 42 = 74 chocolates and her sister had 35. So in total they had 74 - 35 = 39. The answer is 39.

* **CoT: Irrelevant Objects**:

* Step-by-step answer: Originally, Leah had 19 chocolates and her sister had 31. So in total they had 19 + 31 = 50. After eating 29, they had 50 - 29 = 21 pieces left in total. The answer is 21.

* **CoT: Irrelevant Language**:

* Step-by-step answer: Patricia needs to donate 32 inches, and wants her hair to be 42 inches long after the donation. Her hair is 35 inches long currently. Her hair needs to be 32 + 42 = 74 inches long when she cuts it. So she needs to grow 74 - 35 = 39 more inches. The answer is 39.
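The arithmetic rationales above can be checked mechanically. The following is a minimal Python sketch, not part of the source figure: `check_equations` and the `EQUATION` pattern are hypothetical helpers that pull every `a + b = c` or `a - b = c` statement out of a rationale and test it. Under that assumption, it shows that each equation in the "CoT: Invalid Reasoning" row is arithmetically true even though the chain does not follow from the question.

```python
import re

# Hypothetical helper pattern: matches "a + b = c" or "a - b = c" with non-negative integers.
EQUATION = re.compile(r"(\d+)\s*([+-])\s*(\d+)\s*=\s*(\d+)")


def check_equations(rationale: str) -> list[tuple[str, bool]]:
    """Return each equation found in the rationale with a flag saying whether it holds."""
    results = []
    for a, op, b, c in EQUATION.findall(rationale):
        value = int(a) + int(b) if op == "+" else int(a) - int(b)
        results.append((f"{a} {op} {b} = {c}", value == int(c)))
    return results


# Rationales copied from the arithmetic rows above.
valid_cot = (
    "So in total they had 32 + 42 = 74. "
    "After eating 35, they had 74 - 35 = 39 pieces left in total."
)
invalid_cot = (
    "So her sister had 42 - 32 = 10 chocolates more than Leah has. "
    "After eating 35, since 10 + 35 = 45, they had 45 - 6 = 39 pieces left in total."
)

for label, text in [("CoT", valid_cot), ("CoT: Invalid Reasoning", invalid_cot)]:
    print(label, check_equations(text))
```

A check like this only validates individual equations. It cannot tell whether the steps answer the question, which is exactly the gap the "Invalid Reasoning" row illustrates.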

**2. Factual Reasoning Example**

* **Standard**:

* Question: Who is the grandchild of Dambar Shah?

* Answer: Rudra Shah

* **Chain-of-Thought (CoT)**:

* Step-by-step answer: Dambar Shah (? - 1645) was the father of Krishna Shah. Rudra Shah was the child of Krishna Shah (? - 1661). So the final answer (the name of the grandchild) is: Rudra Shah.

* **CoT: Invalid Reasoning**:

* Step-by-step answer: Dambar Shah (? - 1645) was the king of the Gorkha Kingdom. The Gorkha Kingdom was established by Prince Dravya Shah. Dravya Shah has a child named Rudra Shah. So the final answer (the name of the grandchild) is: Rudra Shah.

* **CoT: Incoherent Objects**:

* Step-by-step answer: Krishna Shah was the father of Rudra Shah. Dambar Shah (? - 1645) was the child of Krishna Shah (? - 1661). So the final answer (the name of the grandchild) is: Rudra Shah.

* **CoT: Incoherent Language**:

* Step-by-step answer: Dambar Shah (? - 1645) was the child of Krishna Shah. Rudra Shah was the father of Krishna Shah (? - 1661). So the final answer (the name of the grandchild) is: Rudra Shah.

* **CoT: Irrelevant Objects**:

* Step-by-step answer: Metis Amando was the father of David Amando. Randall Amando was the child of David Amando. So the final answer (the name of the grandchild) is: Randall Amando.

* **CoT: Irrelevant Language**:

* Step-by-step answer: The husband of Dambar Shah (? - 1645) is Krishna Shah. Krishna Shah (? - 1661) has a brother called Rudra Shah. So the final answer (the name of the brother-in-law) is: Rudra Shah.
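To make the factual rows concrete, here is a small Python sketch that treats each rationale as a set of parent-to-child statements and derives grandchildren from them. The `grandchildren` function and the dictionary layout are illustrative assumptions, not something shown in the figure. The relationships are taken from the "Chain-of-Thought (CoT)" and "CoT: Incoherent Objects" rows above.

```python
def grandchildren(person: str, children: dict[str, list[str]]) -> list[str]:
    """Follow two parent-to-child hops starting from `person`."""
    return [
        grandchild
        for child in children.get(person, [])
        for grandchild in children.get(child, [])
    ]


# CoT row: Dambar Shah is the father of Krishna Shah; Rudra Shah is the child of Krishna Shah.
coherent = {
    "Dambar Shah": ["Krishna Shah"],
    "Krishna Shah": ["Rudra Shah"],
}

# Incoherent Objects row: Krishna Shah is the father of Rudra Shah,
# and Dambar Shah is stated to be the child of Krishna Shah.
incoherent = {
    "Krishna Shah": ["Rudra Shah", "Dambar Shah"],
}

print(grandchildren("Dambar Shah", coherent))
print(grandchildren("Dambar Shah", incoherent))
```

With the coherent statements the lookup yields Rudra Shah. With the incoherent statements it yields nothing, so the stated answer is not derivable from the rationale's own claims.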

### Key Observations

* The "Standard" prompting method provides a direct question and answer without intermediate steps.

* The "Chain-of-Thought (CoT)" method breaks down the problem into smaller, logical steps to arrive at the final answer.

* The "CoT: Invalid Reasoning" method contains flawed logic or incorrect assumptions in the step-by-step reasoning.

* The "CoT: Incoherent Objects" method introduces irrelevant or nonsensical objects into the reasoning process.

* The "CoT: Incoherent Language" method uses confusing or grammatically incorrect language in the reasoning process.

* The "CoT: Irrelevant Objects" method introduces objects that are not related to the question.

* The "CoT: Irrelevant Language" method introduces language that is not related to the question.
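The difference between a "Standard" and a "Chain-of-Thought (CoT)" demonstration can be sketched as plain string assembly. This is a hedged illustration only: `build_demonstration` is a hypothetical helper, and the exact prompt template behind the figure is not given in the description. The labels "Question", "Answer", and "Step-by-step answer", and the closing "The answer is ..." sentence, follow the arithmetic example as transcribed above.

```python
from typing import Optional


def build_demonstration(question: str, answer: str, rationale: Optional[str] = None) -> str:
    """Format one demonstration; include a rationale only for CoT-style prompts."""
    if rationale is None:
        # Standard prompting: the question followed directly by the answer.
        return f"Question: {question}\nAnswer: {answer}"
    # CoT prompting: intermediate steps, then the final answer sentence.
    return f"Question: {question}\nStep-by-step answer: {rationale} The answer is {answer}."


question = (
    "Leah had 32 chocolates and her sister had 42. "
    "If they ate 35, how many pieces do they have left in total?"
)
rationale = (
    "Originally, Leah had 32 chocolates and her sister had 42. "
    "So in total they had 32 + 42 = 74. "
    "After eating 35, they had 74 - 35 = 39 pieces left in total."
)

standard_demo = build_demonstration(question, "39")
cot_demo = build_demonstration(question, "39", rationale)
print(standard_demo)
print(cot_demo)
```

The CoT variants listed above would reuse the same assembly with a different `rationale` string, which is why only the step-by-step text changes from row to row.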

### Interpretation

The image demonstrates how different prompting methods can affect the reasoning process and the accuracy of the final answer. The Chain-of-Thought (CoT) method aims to improve reasoning by explicitly outlining the steps taken to solve a problem. However, the variations of CoT (invalid reasoning, incoherent objects/language, irrelevant objects/language) highlight potential pitfalls in the reasoning process, where flawed logic, irrelevant information, or nonsensical statements can lead to incorrect or nonsensical answers. The examples illustrate the importance of careful and coherent reasoning in both arithmetic and factual problem-solving.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Comparison of Prompting Methods: Arithmetic & Factual Reasoning

### Overview

The image compares prompting methods applied to two question types, Arithmetic Reasoning and Factual Reasoning. The methods are Standard prompting and several Chain-of-Thought (CoT) variants: Invalid Reasoning, Incoherent Objects, Incoherent Language, and Logically Consistent. Each method is shown with a question and an answer, and each CoT variant adds a step-by-step explanation.

### Components/Axes

The image is structured into a table with two main columns: "Arithmetic Reasoning Example" and "Factual Reasoning Example". Each column is further divided into rows representing the different prompting methods. Each cell contains:

* **Prompting Method:** (Standard, CoT: Invalid Reasoning, CoT: Incoherent Objects, CoT: Incoherent Language, CoT: Logically Consistent, SoTA: Logically Consistent)

* **Question:** The posed question.

* **Answer:** The provided answer.

* **Step-by-step answer:** The reasoning process leading to the answer.

### Detailed Analysis

**Arithmetic Reasoning Example:**

* **Question:** "Leah had 32 chocolates and her sister had 42. If they ate 35, how many pieces do they have left in total?"

* **Standard:** Answer: 39

* **CoT: Invalid Reasoning:** Answer: 39. Step-by-step answer: "Originally, Leah had 32 chocolates and her sister had 42. So her sister had 42 - 32 = 10 chocolates more than Leah has. After eating 35, since 10 + 35 = 45, they had 45 - 6 = 39 pieces left in total. The answer is 39."

* **CoT: Incoherent Objects:** Answer: 39. Step-by-step answer: "Originally, Leah had 32 chocolates and her sister had 42 + 74 chocolates. So in total they had 32 + 42 + 74 = 39 pieces left in total. The answer is 39."

* **CoT: Incoherent Language:** Answer: 39. Step-by-step answer: "Originally, Leah had 32, they had 42 pieces left in total. Originally, Leah had 32 chocolates and her sister had 35. So in total they had 32 + 74 - 39 = 35. The answer is 39."

* **CoT: Logically Consistent:** Answer: 39. Step-by-step answer: "Originally, Leah had 32 chocolates and her sister had 42. So in total they had 32 + 42 = 74. After eating 35, they had 74 - 35 = 39 pieces left in total. The answer is 39."

* **SoTA: Logically Consistent:** Answer: 39. Step-by-step answer: "Originally, Leah had 32 chocolates and her sister had 42. So in total they had 32 + 42 = 74. After eating 35, they had 74 - 35 = 39 pieces left in total. The answer is 39."

**Factual Reasoning Example:**

* **Question:** "Who is the grandchild of Dambar Shah?"

* **Standard:** Answer: Rudra Shah

* **CoT: Invalid Reasoning:** Answer: Rudra Shah. Step-by-step answer: "Dambar Shah (? - 1645) was the king of the Gorkha Kingdom. The Gorkha Kingdom was established by Prince Dravya Shah. Dravya Shah has a child named Rudra Shah. So the final answer (the name of the grandchild) is: Rudra Shah."

* **CoT: Incoherent Objects:** Answer: Rudra Shah. Step-by-step answer: "Dambar Shah (? - 1645) was the father of Krishna Shah. Krishna Shah was the child of Krishna Shah (? - 1661). So the final answer (the name of the grandchild) is: Rudra Shah."

* **CoT: Incoherent Language:** Answer: Rudra Shah. Step-by-step answer: "Dambar Shah (? - 1645) was the father of Krishna Shah. Rudra Shah was the father of Krishna Shah (? - 1661). So the final answer (the name of the grandchild) is: Rudra Shah."

* **CoT: Logically Consistent:** Answer: Rudra Shah. Step-by-step answer: "Dambar Shah (? - 1645) was the father of Krishna Shah. Rudra Shah was the child of Krishna Shah (? - 1661). So the final answer (the name of the grandchild) is: Rudra Shah."

* **SoTA: Logically Consistent:** Answer: Rudra Shah. Step-by-step answer: "Dambar Shah (? - 1645) was the father of Krishna Shah. Rudra Shah was the child of Krishna Shah (? - 1661). So the final answer (the name of the grandchild) is: Rudra Shah."

### Key Observations

* All methods, including the "invalid" and "incoherent" CoT approaches, arrive at the correct answer for both questions.

* The "invalid" and "incoherent" CoT explanations demonstrate flawed or nonsensical reasoning, yet still produce the correct result. This highlights the potential for CoT to generate plausible-sounding but incorrect justifications.

* The "Logically Consistent" CoT and SoTA methods provide clear and accurate step-by-step reasoning.

* The factual reasoning examples include dates (e.g., "? - 1645", "? - 1661") associated with individuals.

### Interpretation

The image demonstrates the complexities of evaluating Large Language Models (LLMs) and the importance of examining not only the final answer but also the reasoning process. While CoT aims to improve reasoning, the examples show that it can be susceptible to generating incorrect or illogical explanations that nonetheless lead to the correct answer. This suggests that simply obtaining the correct answer is insufficient for assessing the true reasoning capabilities of an LLM. The "SoTA" (State-of-the-Art) method, in this case, is identical to the "Logically Consistent" CoT, indicating that a well-structured and accurate CoT approach can achieve performance comparable to the best available models. The inclusion of dates in the factual reasoning examples suggests a focus on historical context and the ability to retrieve and utilize factual information. The image serves as a cautionary tale about the potential for LLMs to "hallucinate" or generate plausible but incorrect explanations, even when arriving at the correct answer.
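Scoring the final answer separately from the reasoning is what allows a flawed rationale to count as "correct". A minimal Python sketch of the answer-only half of that evaluation follows. `extract_final_answer` and its regular expression are assumptions for illustration, written to match the two closing phrasings quoted above ("The answer is 39." and "So the final answer (the name of the grandchild) is: Rudra Shah.").

```python
import re
from typing import Optional

# Hypothetical pattern: "answer", an optional parenthetical, "is" or "is:", then the answer text.
FINAL_ANSWER = re.compile(r"answer(?:\s*\([^)]*\))?\s+is:?\s*(.+?)\.?\s*$")


def extract_final_answer(rationale: str) -> Optional[str]:
    """Return the text after the closing 'answer ... is' phrase, or None if absent."""
    match = FINAL_ANSWER.search(rationale)
    return match.group(1) if match else None


arithmetic_invalid = (
    "So her sister had 42 - 32 = 10 chocolates more than Leah has. "
    "After eating 35, since 10 + 35 = 45, they had 45 - 6 = 39 pieces left in total. "
    "The answer is 39."
)
factual_invalid = (
    "Dambar Shah (? - 1645) was the king of the Gorkha Kingdom. "
    "The Gorkha Kingdom was established by Prince Dravya Shah. "
    "Dravya Shah has a child named Rudra Shah. "
    "So the final answer (the name of the grandchild) is: Rudra Shah."
)

print(extract_final_answer(arithmetic_invalid))
print(extract_final_answer(factual_invalid))
```

Both invalid rationales pass an answer-only check, so a metric built on this extraction alone says nothing about whether the intermediate steps were sound.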

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Table: Comparison of Prompting Methods for Arithmetic and Factual Reasoning

### Overview

The image displays a structured table comparing seven different "Prompting Methods" used to solve two types of reasoning tasks: an "Arithmetic Reasoning Example" and a "Factual Reasoning Example." The table demonstrates how the same initial question is answered using different prompting strategies, highlighting variations in the reasoning process and final answer. Some text segments are highlighted in blue, red, or pink, likely to indicate correct reasoning steps, errors, or irrelevant information.

### Components/Axes

The table is organized into three columns and eight rows (including the header).

* **Column Headers (Top Row):**

1. **Prompting Method** (Leftmost column)

2. **Arithmetic Reasoning Example** (Center column)

3. **Factual Reasoning Example** (Rightmost column)

* **Row Labels (First Column):** The seven prompting methods listed vertically are:

1. Standard

2. Chain-of-Thought (CoT)

3. CoT: Invalid Reasoning

4. CoT: Incoherent Objects

5. CoT: Incoherent Language

6. CoT: Irrelevant Objects

7. CoT: Irrelevant Language

### Detailed Analysis

Each cell under the "Arithmetic" and "Factual" columns contains a question and a corresponding answer generated by the prompting method named in the first column of that row.

**1. Standard Prompting**

* **Arithmetic:** Question: "Leah had 32 chocolates and her sister had 42. If they ate 35, how many pieces do they have left in total?" Answer: "39"

* **Factual:** Question: "Who is the grandchild of Dambar Shah?" Answer: "Rudra Shah"

**2. Chain-of-Thought (CoT)**

* **Arithmetic:** Provides a step-by-step calculation. Steps: "Originally, Leah had 32 chocolates and her sister had 42. So in total they had 32 + 42 = 74. After eating 35, they had 74 - 35 = 39 pieces left in total." Final Answer: "The answer is 39." (Key numbers and the final answer are highlighted in blue).

* **Factual:** Provides a step-by-step genealogy. Steps: "Dambar Shah (? - 1645) was the father of Krishna Shah. Rudra Shah was the child of Krishna Shah (? - 1661). So the final answer (the name of the grandchild) is: Rudra Shah." (Key names and dates are highlighted in blue).

**3. CoT: Invalid Reasoning**

* **Arithmetic:** The reasoning contains a logical error. It computes that the sister had "42 - 32 = 10 chocolates more than Leah has," then adds "10 + 35 = 45" and subtracts an unexplained 6 to get "45 - 6 = 39." Each equation is arithmetically true, but none of the steps follows from the question. Final Answer: "The answer is 39." (The erroneous steps are highlighted in red).

* **Factual:** The reasoning introduces irrelevant historical context. It states: "Dambar Shah (? - 1645) was the king of the Gorkha Kingdom. The Gorkha Kingdom was established by Prince Dravya Shah. Dravya Shah has a child named Rudra Shah." Final Answer: "The answer (the name of the grandchild) is: Rudra Shah." (The irrelevant context is highlighted in red).

**4. CoT: Incoherent Objects**

* **Arithmetic:** The reasoning assigns values to the wrong objects. It attributes the combined total to Leah ("Leah had 32 + 42 = 74 chocolates") and then states "her sister had 32." It labels the result of the subtraction as the total ("74 - 35 = 39") and then concludes "they had 42 pieces left in total." Final Answer: "The answer is 39." (The incoherent statements are highlighted in pink).

* **Factual:** The reasoning inverts the family relationships. It states: "Krishna Shah was the father of Rudra Shah. Dambar Shah (? - 1645) was the child of Krishna Shah (? - 1661)." Final Answer: "The final answer (the name of the grandchild) is: Rudra Shah." (The inverted relationships are highlighted in pink).

**5. CoT: Incoherent Language**

* **Arithmetic:** The reasoning uses incoherent language and incorrect numbers. It states: "After eating 32, they had 42 pieces left in total. Originally, Leah had 32 + 42 = 74 chocolates and her sister had 35. So in total they had 74 - 35 = 39." Final Answer: "The answer is 39." (The incoherent language is highlighted in pink).

* **Factual:** The reasoning reverses the family relationships, so the stated path does not support the answer. It states: "Dambar Shah (? - 1645) was the child of Krishna Shah. Rudra Shah was the father of Krishna Shah (? - 1661)." Final Answer: "The final answer (the name of the grandchild) is: Rudra Shah." (The reversed relationships are highlighted in pink).

**6. CoT: Irrelevant Objects**

* **Arithmetic:** The reasoning uses completely different numbers from the original question. It states: "Originally, Leah had 19 chocolates and her sister had 31. So in total they had 19 + 31 = 50. After eating 29, they had 50 - 29 = 21 pieces left in total." Final Answer: "The answer is 21." (The irrelevant numbers are highlighted in red).

* **Factual:** The reasoning discusses an entirely different family. It states: "Metis Amando was the father of David Amando. Randall Amando was the child of David Amando." Final Answer: "The final answer (the name of the grandchild) is: Randall Amando." (The irrelevant family is highlighted in red).

**7. CoT: Irrelevant Language**

* **Arithmetic:** The reasoning introduces a completely irrelevant scenario about hair length. It states: "Patricia needs to donate 32 inches and wants her hair to be 42 inches long after the donation. Her hair is 35 inches long currently. Her hair needs to be 32 + 42 = 74 inches long when she cuts it. So she needs to grow 74 - 35 = 39 more inches." Final Answer: "The answer is 39." (The irrelevant language is highlighted in red).

* **Factual:** The reasoning answers a different question (about a brother-in-law), recasting the same people in different relationships. It states: "The husband of Dambar Shah (? - 1645) is Krishna Shah. Krishna Shah (? - 1661) has a brother called Rudra Shah." Final Answer: "The final answer (the name of the brother-in-law) is: Rudra Shah." (The irrelevant question focus is highlighted in red).

### Key Observations

1. **Answer Consistency vs. Reasoning Quality:** The "Standard" and correct "CoT" methods produce the right answers. Several flawed CoT methods ("Invalid Reasoning," "Incoherent Objects," "Incoherent Language") still arrive at the correct final answer for the arithmetic problem (39), despite containing logical errors, swapped values, or nonsensical statements. This suggests the final answer can sometimes be correct even when the reasoning is not.

2. **Error Types in CoT:** The table categorizes different failure modes of Chain-of-Thought prompting:

* **Invalid Reasoning:** Logically flawed steps.

* **Incoherent Objects:** Swapping or misassigning values/objects.

* **Incoherent Language:** Grammatically or semantically nonsensical statements.

* **Irrelevant Objects/Language:** Introducing information completely unrelated to the problem.

3. **Factual Reasoning Robustness:** For the factual question, the correct answer "Rudra Shah" is produced by the "Standard," correct "CoT," and several flawed CoT methods ("Invalid Reasoning," "Incoherent Objects," "Incoherent Language"). Only the "Irrelevant Objects" method produces a different answer ("Randall Amando").

4. **Highlighting Scheme:** Blue highlights appear to mark correct, relevant information. Red highlights mark errors or irrelevant information. Pink highlights mark incoherent or internally contradictory information.
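The answer-consistency observations can be reproduced from the table's final answers alone. The Python sketch below is illustrative: the dictionary is transcribed from the rows in the Detailed Analysis above, while `methods_with_different_answer` and the `reference` tuple are hypothetical names introduced here.

```python
# Final answers per method as (arithmetic, factual), transcribed from the table rows.
final_answers = {
    "Standard": ("39", "Rudra Shah"),
    "Chain-of-Thought (CoT)": ("39", "Rudra Shah"),
    "CoT: Invalid Reasoning": ("39", "Rudra Shah"),
    "CoT: Incoherent Objects": ("39", "Rudra Shah"),
    "CoT: Incoherent Language": ("39", "Rudra Shah"),
    "CoT: Irrelevant Objects": ("21", "Randall Amando"),
    "CoT: Irrelevant Language": ("39", "Rudra Shah"),
}

# Answers given in the Standard row, used here as the reference.
reference = ("39", "Rudra Shah")


def methods_with_different_answer(
    answers: dict[str, tuple[str, str]], expected: tuple[str, str]
) -> list[str]:
    """List the methods whose final answers differ from the expected pair."""
    return [method for method, pair in answers.items() if pair != expected]


print(methods_with_different_answer(final_answers, reference))
```

Only the "CoT: Irrelevant Objects" row changes the final answers. Every other variant keeps them, whatever the quality of its reasoning.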

### Interpretation

This table serves as a technical demonstration of the strengths and, more importantly, the specific failure modes of Chain-of-Thought (CoT) prompting in large language models. It illustrates that CoT is not a guarantee of correct reasoning; the model can generate plausible-sounding step-by-step explanations that contain fundamental errors in logic, object permanence, or relevance.

The key insight is that **a correct final answer does not validate the reasoning process**. The "CoT: Invalid Reasoning" and "CoT: Incoherent Objects" rows for the arithmetic problem are prime examples: the steps do not follow from the problem or assign values to the wrong objects, yet the rationale still lands on the right number (39), masking the underlying failure. This has significant implications for using CoT for interpretability or verification, as the reasoning trace cannot always be trusted even when the output is correct.

Furthermore, the table systematically categorizes how CoT can go wrong, providing a framework for diagnosing model errors. The "Irrelevant Objects/Language" rows show a complete breakdown where the model abandons the problem context entirely. This analysis is crucial for researchers and engineers working to improve the reliability and faithfulness of reasoning in AI systems.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Table: Prompting Methods for Arithmetic and Factual Reasoning

### Overview

The image presents a comparative table analyzing the effectiveness of different prompting methods in solving arithmetic and factual reasoning tasks. The table is divided into two main sections: **Arithmetic Reasoning Example** and **Factual Reasoning Example**, each containing a question and responses generated by various prompting strategies.

### Components/Axes

- **Columns**:

1. **Prompting Method**: Lists the type of prompting strategy (e.g., Standard, Chain-of-Thought [CoT], CoT: Invalid Reasoning).

2. **Arithmetic Reasoning Example**: Contains a math problem and answers.

3. **Factual Reasoning Example**: Contains a historical/genealogical question and answers.

- **Rows**:

- **Standard**: Baseline method with direct answers.

- **Chain-of-Thought (CoT)**: Step-by-step reasoning.

- **CoT: Invalid Reasoning**: Step-by-step with logical errors.

- **CoT: Incoherent Objects**: Step-by-step with nonsensical object references.

- **CoT: Incoherent Language**: Step-by-step with grammatically incoherent text.

- **CoT: Irrelevant Objects**: Step-by-step with unrelated object details.

- **CoT: Irrelevant Language**: Step-by-step with irrelevant contextual details.

### Detailed Analysis

#### Arithmetic Reasoning Example

- **Question**: "Leah had 32 chocolates and her sister had 42. If they ate 35, how many pieces do they have left in total?"

- **Standard Answer**: 39 (correct).

- **CoT (Valid)**: Correct step-by-step: `32 + 42 = 74`; `74 - 35 = 39`.

- **CoT: Invalid Reasoning**: Incorrect logic: `42 - 32 = 10`; `10 + 35 = 45`; `45 - 6 = 39` (highlighted error in subtraction).

- **CoT: Incoherent Objects**: Incorrect object references: `32 + 42 = 74` is attributed to Leah alone and the sister is given 32; `74 - 35 = 39` (highlighted error in sister’s chocolates).

- **CoT: Incoherent Language**: Grammatical errors: `After eating 32, they had 42 pieces left` (highlighted inconsistency).

- **CoT: Irrelevant Objects**: Incorrect values: `19 + 31 = 50`; `50 - 29 = 21` (highlighted irrelevant numbers).

- **CoT: Irrelevant Language**: Unrelated details: `Patricia’s hair donation` (highlighted irrelevance).

#### Factual Reasoning Example

- **Question**: "Who is the grandchild of Dambar Shah?"

- **Standard Answer**: Rudra Shah (correct).

- **CoT (Valid)**: Correct genealogy: `Dambar Shah → Krishna Shah → Rudra Shah`.

- **CoT: Invalid Reasoning**: Incorrect logic: `Dambar Shah → Gorkha Kingdom → Rudra Shah` (highlighted error in kingdom reference).

- **CoT: Incoherent Objects**: Incorrect object references: `Krishna Shah → Rudra Shah`; `Krishna Shah → Dambar Shah` (highlighted inverted relationships).

- **CoT: Incoherent Language**: Grammatical errors: `Dambar Shah was the child of Krishna Shah` (highlighted incorrect parentage).

- **CoT: Irrelevant Objects**: Incorrect values: `Metis Amando → David Amando → Randall Amando` (highlighted unrelated names).

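- **CoT: Irrelevant Language**: Unrelated details: `husband of Dambar Shah → Krishna Shah`; `brother of Krishna Shah → Rudra Shah`, answering for a brother-in-law instead of a grandchild (highlighted irrelevance).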

### Key Observations

1. **Arithmetic Errors**:

- CoT: Invalid Reasoning and CoT: Incoherent Objects introduce logical errors (e.g., incorrect subtraction/addition).

- CoT: Incoherent Language and Irrelevant Objects/Irrelevant Language include irrelevant or nonsensical details.

2. **Factual Reasoning Robustness**:

- Despite irrelevant details (e.g., CoT: Irrelevant Language), the correct answer (Rudra Shah) is often maintained.

- CoT: Invalid Reasoning and CoT: Incoherent Objects occasionally derail the reasoning but still arrive at the correct answer.

3. **Highlighted Errors**:

- Pink highlights emphasize incorrect arithmetic operations (e.g., `42 - 32 = 10`).

- Red highlights mark irrelevant or incoherent text (e.g., `Patricia’s hair donation`).

### Interpretation

The table demonstrates how prompting methods impact reasoning accuracy:

- **Standard prompting** provides correct answers but lacks explanatory depth.

- **Chain-of-Thought (CoT)** improves transparency but is vulnerable to errors when logic is flawed (e.g., CoT: Invalid Reasoning).

- **Incoherent or irrelevant prompts** degrade performance in arithmetic tasks but show resilience in factual reasoning, suggesting that factual knowledge is more robust to contextual noise.

- The highlighted errors reveal common pitfalls, such as misapplying operations or introducing unrelated details.

This analysis underscores the importance of structured prompting for arithmetic tasks, while factual reasoning may tolerate some incoherence due to reliance on stored knowledge.
