## Table: Prompting Methods for Arithmetic and Factual Reasoning

### Overview

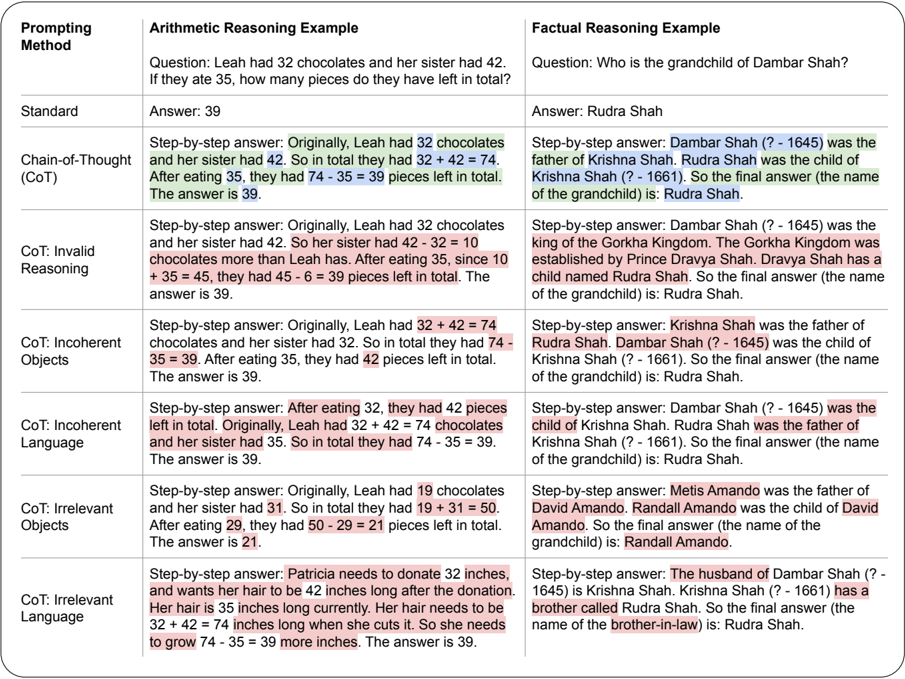

The image shows a table of worked examples comparing prompting methods on arithmetic and factual reasoning. Besides the method column, it has two example columns, **Arithmetic Reasoning Example** and **Factual Reasoning Example**. Each pairs one question with the response given under every prompting strategy.

### Components/Axes

- **Columns**:
  1. **Prompting Method**: Lists the type of prompting strategy (e.g., Standard, Chain-of-Thought [CoT], CoT: Invalid Reasoning).
  2. **Arithmetic Reasoning Example**: Contains a math problem and answers.
  3. **Factual Reasoning Example**: Contains a historical/genealogical question and answers.
- **Rows**:
  - **Standard**: Baseline method with direct answers.
  - **Chain-of-Thought (CoT)**: Step-by-step reasoning.
  - **CoT: Invalid Reasoning**: Step-by-step with logical errors.
  - **CoT: Incoherent Objects**: Step-by-step with nonsensical object references.
  - **CoT: Incoherent Language**: Step-by-step with grammatically incoherent text.
  - **CoT: Irrelevant Objects**: Step-by-step with unrelated object details.
  - **CoT: Irrelevant Language**: Step-by-step with irrelevant contextual details.
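One way to read the rows is that they share the same question and answer format and vary only in the rationale attached to the demonstration. The sketch below assumes a plain `Q:`/`A:` few-shot template; the template, the helper names `format_exemplar` and `build_prompt`, and the condensed rationale strings are all hypothetical and are not the prompts behind the table.

```python
from typing import Optional

# Demonstration question from the table.
QUESTION = ("Leah had 32 chocolates and her sister had 42. "
            "If they ate 35, how many pieces do they have left in total?")

# Hypothetical rationale strings, condensed from the equations listed under
# Detailed Analysis. None means "answer only" (standard prompting).
RATIONALES = {
    "Standard": None,
    "CoT": "32 + 42 = 74. 74 - 35 = 39.",
    "CoT: Invalid Reasoning": "42 - 32 = 10. 10 + 35 = 45. 45 - 6 = 39.",
}


def format_exemplar(question: str, answer: str, rationale: Optional[str]) -> str:
    """Render one demonstration as a Q/A pair, with the rationale if present."""
    if rationale is None:
        return f"Q: {question}\nA: The answer is {answer}."
    return f"Q: {question}\nA: {rationale} The answer is {answer}."


def build_prompt(method: str, test_question: str) -> str:
    """Prepend the demonstration for `method` to an unanswered test question."""
    exemplar = format_exemplar(QUESTION, "39", RATIONALES[method])
    return f"{exemplar}\n\nQ: {test_question}\nA:"


print(build_prompt("CoT", "Tom had 5 apples and ate 2. How many are left?"))
```

Under this assumption, producing another ablated variant only means changing the string stored under a method key. The test question and the answer format stay fixed.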

### Detailed Analysis

#### Arithmetic Reasoning Example

- **Question**: "Leah had 32 chocolates and her sister had 42. If they ate 35, how many pieces do they have left in total?"

- **Standard Answer**: 39 (correct).

- **CoT (Valid)**: Correct step-by-step: `32 + 42 = 74`; `74 - 35 = 39`.

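- **CoT: Invalid Reasoning**: Invalid logic: `42 - 32 = 10`; `10 + 35 = 45`; `45 - 6 = 39`. Each equation is arithmetically correct, but the highlighted steps compute quantities the question never asks for. The chain still ends at 39.

- **CoT: Incoherent Objects**: Incoherent object references: `32 + 32 = 64`; `64 - 35 = 29`. Leah's 32 is used in place of her sister's 42 (highlighted), so the chain ends at 29 instead of 39.

- **CoT: Incoherent Language**: Incoherent statements: `After eating 32, they had 42 pieces left` (highlighted inconsistency; the question says they ate 35).

- **CoT: Irrelevant Objects**: Numbers unrelated to the question: `19 + 31 = 50`; `50 - 29 = 21` (highlighted). The arithmetic is valid, but the result, 21, does not answer the question.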

- **CoT: Irrelevant Language**: Unrelated details: `Patricia’s hair donation` (highlighted irrelevance).
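The final value of each equation chain can be checked against the question's ground truth. This sketch transcribes the equations listed above. The `CHAINS` structure and the `final_value` helper are illustrative names, not from the source. The two language rows are omitted because no complete equation chain is given for them.

```python
import operator

# Map the two operators that appear in the table to Python functions.
OPS = {"+": operator.add, "-": operator.sub}

# Equation chains transcribed from the list above as (left, op, right) steps.
CHAINS = {
    "CoT": [(32, "+", 42), (74, "-", 35)],
    "CoT: Invalid Reasoning": [(42, "-", 32), (10, "+", 35), (45, "-", 6)],
    "CoT: Incoherent Objects": [(32, "+", 32), (64, "-", 35)],
    "CoT: Irrelevant Objects": [(19, "+", 31), (50, "-", 29)],
}

# Ground truth: both sisters' chocolates minus the 35 they ate.
GROUND_TRUTH = 32 + 42 - 35


def final_value(steps):
    """Evaluate every step and return the result of the last one."""
    results = [OPS[op](a, b) for a, op, b in steps]
    return results[-1]


for name, steps in CHAINS.items():
    value = final_value(steps)
    print(f"{name:<26} final={value:>3} matches_ground_truth={value == GROUND_TRUTH}")
```

Among the ablated chains, only Invalid Reasoning reaches 39. Incoherent Objects ends at 29, and Irrelevant Objects ends at 21.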

#### Factual Reasoning Example

- **Question**: "Who is the grandchild of Dambar Shah?"

- **Standard Answer**: Rudra Shah (correct).

- **CoT (Valid)**: Correct genealogy: `Dambar Shah → Krishna Shah → Rudra Shah`.

- **CoT: Invalid Reasoning**: Invalid logic: `Dambar Shah → Gorkha Kingdom → Rudra Shah`. The highlighted middle step is a kingdom, not a person, so the chain does not establish a grandparent relation, although it still ends at Rudra Shah.

- **CoT: Incoherent Objects**: Incoherent object references. As transcribed, the chain (`Dambar Shah → Krishna Shah → Rudra Shah`) matches the valid CoT, and the highlights mark redundant steps.

- **CoT: Incoherent Language**: Incoherent statements: `Dambar Shah was the child of Krishna Shah` (highlighted). This reverses the parent-child relation in the valid chain.

- **CoT: Irrelevant Objects**: Unrelated entities: `Metis Amando → David Amando → Randall Amando` (highlighted). None of these names relate to Dambar Shah.

- **CoT: Irrelevant Language**: Unrelated details: `Patricia’s hair donation` (highlighted irrelevance).
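The genealogy question is a two-hop lookup. This sketch assumes only the two parent-child relations stated in the valid CoT. The `CHILDREN` mapping and the `grandchildren` helper are hypothetical. The sketch also shows why the reversed statement in the Incoherent Language row breaks the lookup.

```python
# Parent -> children edges taken from the valid CoT chain above.
# Only the two relations in the table are encoded; this is not a full family tree.
CHILDREN = {
    "Dambar Shah": ["Krishna Shah"],
    "Krishna Shah": ["Rudra Shah"],
}


def grandchildren(person, children=CHILDREN):
    """Follow two parent -> child hops and collect everyone reached."""
    return [grandchild
            for child in children.get(person, [])
            for grandchild in children.get(child, [])]


print(grandchildren("Dambar Shah"))  # ['Rudra Shah']

# "Dambar Shah was the child of Krishna Shah" encodes the edge
# Krishna Shah -> Dambar Shah. If it replaces the correct edge,
# Dambar Shah has no children, so the two-hop lookup finds nobody.
REVERSED = {
    "Krishna Shah": ["Dambar Shah", "Rudra Shah"],
}
print(grandchildren("Dambar Shah", REVERSED))  # []
```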

### Key Observations

1. **Arithmetic Errors**:
   - CoT: Invalid Reasoning and CoT: Incoherent Objects introduce logical errors. Each equation is arithmetically correct, but the wrong quantities are combined (e.g., `42 - 32 = 10`, `32 + 32 = 64`). Invalid Reasoning still ends at 39, while Incoherent Objects ends at 29.
   - CoT: Incoherent Language, CoT: Irrelevant Objects, and CoT: Irrelevant Language add inconsistent or unrelated content (e.g., the numbers 19, 31, and 29; `Patricia’s hair donation`).
2. **Factual Reasoning Robustness**:
   - Despite irrelevant details (e.g., CoT: Irrelevant Language), the correct answer (Rudra Shah) is often maintained.
   - CoT: Invalid Reasoning and CoT: Incoherent Objects disrupt the reasoning chain but still arrive at the correct answer.
3. **Highlighted Errors**:
   - Pink highlights mark flawed reasoning steps (e.g., `42 - 32 = 10`, which is arithmetically correct but does not help answer the question).
   - Red highlights mark irrelevant or incoherent text (e.g., `Patricia’s hair donation`).
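Each equation in the arithmetic Invalid Reasoning row is arithmetically correct. The flaw is in how the equations relate to the question. An equation-level check makes this concrete. This sketch extracts simple `a + b = c` and `a - b = c` equations with a regular expression and verifies each one. The regex, the `check_equations` helper, and the reassembled rationale sentence are illustrative assumptions, not the table's exact wording.

```python
import re

# Matches simple "a + b = c" / "a - b = c" equations embedded in rationale text.
EQUATION = re.compile(r"(\d+)\s*([+-])\s*(\d+)\s*=\s*(\d+)")


def check_equations(text):
    """Return (equation, is_arithmetically_correct) for each equation found."""
    checks = []
    for left, op, right, stated in EQUATION.findall(text):
        a, b, c = int(left), int(right), int(stated)
        computed = a + b if op == "+" else a - b
        checks.append((f"{a} {op} {b} = {c}", computed == c))
    return checks


# Rationale text reassembled from the Invalid Reasoning row (wording is illustrative).
invalid = "Her sister had 42 - 32 = 10 more. Since 10 + 35 = 45, they had 45 - 6 = 39 left."
for equation, ok in check_equations(invalid):
    print(equation, ok)
```

All three equations pass, so an arithmetic checker alone cannot flag this rationale. Detecting the flaw requires checking which quantities the question actually relates.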

### Interpretation

The table illustrates how prompting methods affect reasoning accuracy:

- **Standard prompting** gives the correct answer in both examples but provides no rationale.

- **Chain-of-Thought (CoT)** makes each step visible, but the rationale can be flawed (e.g., CoT: Invalid Reasoning) or lead to a wrong answer (e.g., CoT: Incoherent Objects ends at 29).

- **Incoherent or irrelevant rationales** lead to wrong answers in the arithmetic object rows (29 and 21). The factual rows, by contrast, largely keep the correct answer, which suggests that factual knowledge may be more robust to contextual noise.

- The highlighted errors reveal common pitfalls, such as combining the wrong quantities or introducing unrelated details.

This analysis suggests that arithmetic tasks depend on coherent, relevant reasoning steps in the prompt. Factual reasoning may tolerate some incoherence because it relies on stored knowledge.