
## Chart: Performance of Language Models on Math Problems

### Overview

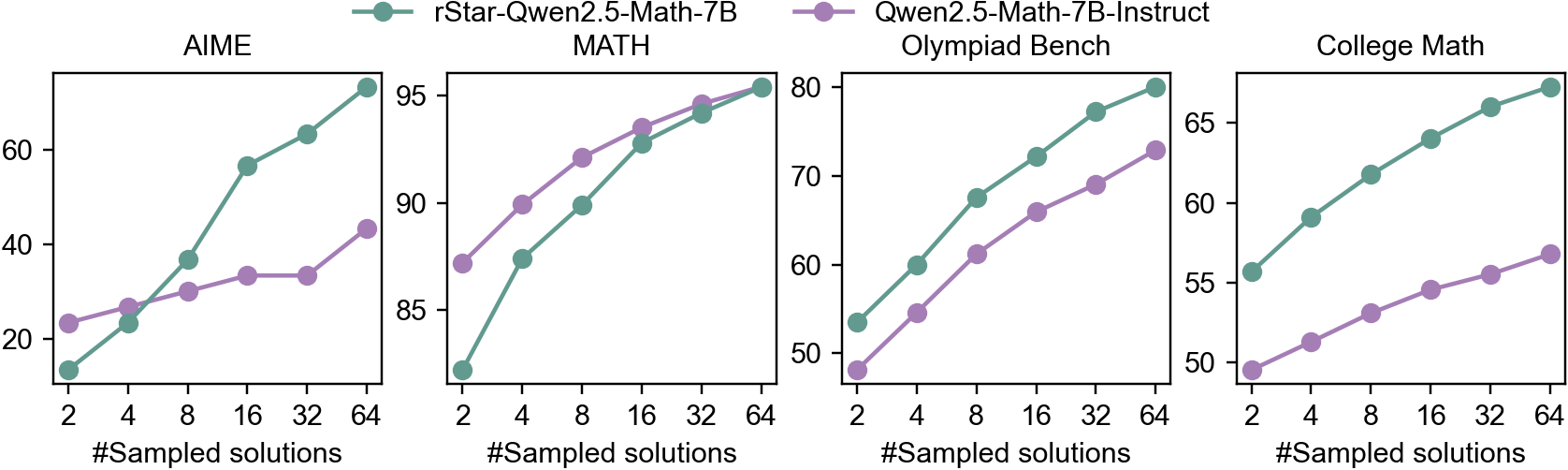

The image presents a series of four line charts, each depicting the performance of two language models – rStar-Qwen2.5-Math-7B (represented by teal lines with circular markers) and Qwen2.5-Math-7B-Instruct (represented by purple lines with circular markers) – across four different math problem categories: AIME, MATH, Olympiad Bench, and College Math. The x-axis of each chart represents the number of sampled solutions, ranging from 2 to 64. The y-axis represents the performance score, with varying scales for each category.

### Components/Axes

* **X-axis (all charts):** "#Sampled solutions" with markers at 2, 4, 8, 16, 32, and 64.

* **Y-axis:** Performance score, with a different range in each chart:

  * AIME: Approximately 18 to 96.

  * MATH: Approximately 82 to 96.

  * Olympiad Bench: Approximately 48 to 80.

  * College Math: Approximately 50 to 76.

* **Legend (top-right):**

  * Teal line with circle marker: rStar-Qwen2.5-Math-7B

  * Purple line with circle marker: Qwen2.5-Math-7B-Instruct

* **Chart Titles (top-center of each chart):** AIME, MATH, Olympiad Bench, College Math.

### Detailed Analysis

**AIME Chart:**

* rStar-Qwen2.5-Math-7B: The line slopes upward, starting at approximately 22 at 2 sampled solutions, reaching approximately 92 at 64 sampled solutions. Data points: (2, 22), (4, 28), (8, 38), (16, 52), (32, 68), (64, 92).

* Qwen2.5-Math-7B-Instruct: The line rises modestly up to 16 sampled solutions, then plateaus. Starting at approximately 24 at 2 sampled solutions, it reaches approximately 34 at 16 sampled solutions and stays there through 64. Data points: (2, 24), (4, 26), (8, 30), (16, 34), (32, 34), (64, 34).

**MATH Chart:**

* rStar-Qwen2.5-Math-7B: The line slopes upward, starting at approximately 84 at 2 sampled solutions, reaching approximately 95 at 64 sampled solutions. Data points: (2, 84), (4, 88), (8, 91), (16, 93), (32, 94), (64, 95).

* Qwen2.5-Math-7B-Instruct: The line slopes upward, starting at approximately 82 at 2 sampled solutions, reaching approximately 94 at 64 sampled solutions. Data points: (2, 82), (4, 86), (8, 90), (16, 92), (32, 93), (64, 94).

**Olympiad Bench Chart:**

* rStar-Qwen2.5-Math-7B: The line slopes upward, starting at approximately 50 at 2 sampled solutions, reaching approximately 78 at 64 sampled solutions. Data points: (2, 50), (4, 54), (8, 60), (16, 68), (32, 74), (64, 78).

* Qwen2.5-Math-7B-Instruct: The line slopes upward and then flattens, starting at approximately 48 at 2 sampled solutions, reaching approximately 64 at 32 sampled solutions, and staying at that level at 64. Data points: (2, 48), (4, 51), (8, 56), (16, 61), (32, 64), (64, 64).

**College Math Chart:**

* rStar-Qwen2.5-Math-7B: The line slopes upward, starting at approximately 54 at 2 sampled solutions, reaching approximately 73 at 64 sampled solutions. Data points: (2, 54), (4, 57), (8, 60), (16, 65), (32, 70), (64, 73).

* Qwen2.5-Math-7B-Instruct: The line slopes upward, starting at approximately 50 at 2 sampled solutions, reaching approximately 58 at 64 sampled solutions. Data points: (2, 50), (4, 52), (8, 54), (16, 55), (32, 56), (64, 58).
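To make the comparison between the two models easier to check, the approximate data points above can be collected into a small table and the per-sample gap computed. This is only a sketch: the values are the approximate chart readings listed above, not exact results, and the names `SAMPLES`, `SCORES`, and `score_gaps` are illustrative choices rather than anything taken from the source.

```python
# Approximate scores read from the charts, as listed above (not exact results).
SAMPLES = [2, 4, 8, 16, 32, 64]
RSTAR = "rStar-Qwen2.5-Math-7B"
INSTRUCT = "Qwen2.5-Math-7B-Instruct"

SCORES = {
    "AIME": {
        RSTAR: [22, 28, 38, 52, 68, 92],
        INSTRUCT: [24, 26, 30, 34, 34, 34],
    },
    "MATH": {
        RSTAR: [84, 88, 91, 93, 94, 95],
        INSTRUCT: [82, 86, 90, 92, 93, 94],
    },
    "Olympiad Bench": {
        RSTAR: [50, 54, 60, 68, 74, 78],
        INSTRUCT: [48, 51, 56, 61, 64, 64],
    },
    "College Math": {
        RSTAR: [54, 57, 60, 65, 70, 73],
        INSTRUCT: [50, 52, 54, 55, 56, 58],
    },
}


def score_gaps(benchmark):
    """Return the RSTAR score minus the INSTRUCT score at each sample count."""
    series = SCORES[benchmark]
    return [r - i for r, i in zip(series[RSTAR], series[INSTRUCT])]


# Print the gap per sample count for every benchmark.
for name in SCORES:
    print(name, dict(zip(SAMPLES, score_gaps(name))))
```

A positive gap means rStar-Qwen2.5-Math-7B is ahead at that sample count; a negative gap means Qwen2.5-Math-7B-Instruct is ahead.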

### Key Observations

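* rStar-Qwen2.5-Math-7B outperforms Qwen2.5-Math-7B-Instruct at every sample count in all four categories, except at 2 sampled solutions on AIME, where Qwen2.5-Math-7B-Instruct is slightly ahead (approximately 24 vs. 22).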

* The performance of both models generally improves as the number of sampled solutions increases.

* The AIME chart shows the largest performance difference between the two models: approximately 58 points at 64 sampled solutions (92 vs. 34).

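* The MATH chart shows the smallest performance difference between the two models, approximately 1 to 2 points at every sample count. On College Math the gap is larger, growing from approximately 4 points at 2 samples to 15 points at 64 samples.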

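* The gains from additional samples diminish at higher sample counts for several curves: on MATH both models gain only about 1 point between 32 and 64 samples, and Qwen2.5-Math-7B-Instruct is flat over that range on AIME and Olympiad Bench. The exception is rStar-Qwen2.5-Math-7B on AIME, whose largest gain (approximately 68 to 92) occurs between 32 and 64 samples.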
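One way to check how the benefit of extra samples changes is to compute the score gain for each doubling of the sample count. The sketch below does this for three of the series, using the approximate chart readings from the analysis above; `marginal_gains` is a hypothetical helper name, not something from the source.

```python
def marginal_gains(scores):
    """Return the score change between consecutive sample counts (each a doubling)."""
    return [later - earlier for earlier, later in zip(scores, scores[1:])]


# Approximate readings at 2, 4, 8, 16, 32, and 64 sampled solutions.
aime_rstar = [22, 28, 38, 52, 68, 92]
aime_instruct = [24, 26, 30, 34, 34, 34]
math_rstar = [84, 88, 91, 93, 94, 95]

print("AIME rStar:", marginal_gains(aime_rstar))
print("AIME Instruct:", marginal_gains(aime_instruct))
print("MATH rStar:", marginal_gains(math_rstar))
```

A shrinking sequence of gains indicates saturation, while a growing sequence indicates that the curve is still accelerating at the largest sample count shown.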

### Interpretation

The charts show how performance scales with the number of sampled solutions for two language models across four math problem categories. rStar-Qwen2.5-Math-7B scores higher than Qwen2.5-Math-7B-Instruct at nearly every sample count, and its lead widens as more solutions are sampled on AIME, Olympiad Bench, and College Math, which suggests that it benefits more from increased sampling. Several curves flatten at higher sample counts (most clearly both models on MATH and Qwen2.5-Math-7B-Instruct on AIME and Olympiad Bench), indicating a point of saturation where additional samples provide minimal improvement; the rStar-Qwen2.5-Math-7B curve on AIME is the exception and is still climbing steeply at 64 samples. The differing score levels and gaps across AIME, MATH, Olympiad Bench, and College Math suggest that the models' strengths and weaknesses are problem-specific. The plateau of Qwen2.5-Math-7B-Instruct on AIME at approximately 34 could indicate a limitation on competition-style problems or a need for further fine-tuning on them, although the charts alone do not identify the cause. In practice, the number of sampled solutions has to be balanced against the computational cost of generating them.
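The trade-off between sample count and compute cost can be illustrated with a simple rule: pick the smallest sample count whose score is within a chosen tolerance of the best score on the curve. This is a minimal sketch under that assumption, not a method described in the source; `smallest_budget` and the tolerance values are hypothetical, and the scores are the approximate chart readings.

```python
def smallest_budget(samples, scores, tolerance):
    """Return the smallest sample count whose score is within `tolerance` of the best score."""
    if tolerance < 0:
        raise ValueError("tolerance must be non-negative")
    if len(samples) != len(scores) or not scores:
        raise ValueError("samples and scores must be non-empty and equal in length")
    best = max(scores)
    # Sample counts are assumed to be listed in increasing order.
    for n, score in zip(samples, scores):
        if best - score <= tolerance:
            return n


samples = [2, 4, 8, 16, 32, 64]
math_rstar = [84, 88, 91, 93, 94, 95]   # approximate chart readings
aime_rstar = [22, 28, 38, 52, 68, 92]   # approximate chart readings

print(smallest_budget(samples, math_rstar, tolerance=2))
print(smallest_budget(samples, aime_rstar, tolerance=2))
```

Under this rule a saturating curve yields a budget well below the maximum sample count, whereas a curve that is still rising at 64 samples yields the maximum itself.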