## Diagram: Probabilistic Decision Tree

### Overview

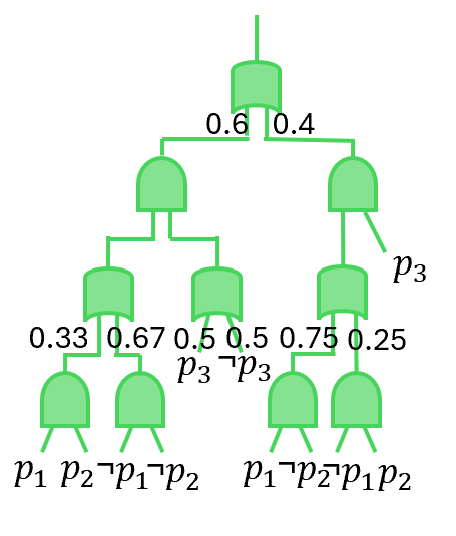

The image displays a hierarchical decision tree diagram, likely representing a probabilistic classification or logical decision process. A root node at the top branches downward through intermediate nodes to leaf nodes at the bottom. All nodes are drawn as green, rounded rectangles. Branches are labeled with numerical probabilities, and leaf nodes are labeled with combinations of the propositions `p1` and `p2` or their negations. A third variable, `p3`, and its negation `¬p3` annotate a node and a branch in the right subtree rather than appearing at the leaves.

### Components/Axes

* **Structure:** A top-down tree diagram.

* **Nodes:** Green, rounded rectangles. There is one root node, four intermediate nodes, and six leaf nodes.

* **Branches:** Lines connecting nodes. Each branch from an intermediate node is labeled with a numerical probability.

* **Labels:**

    * **Probabilities:** `0.6`, `0.4`, `0.33`, `0.67`, `0.5`, `0.5`, `0.75`, `0.25`.

    * **Logical Propositions (at leaf nodes):** `p1`, `p2`, `¬p1`, `¬p2`, `p1`, `¬p2`, `¬p1`, `p2`.

    * **Additional Label:** The variable `p3` is placed next to an intermediate node on the right side of the tree. The logical expression `¬p3` appears near the center of the tree, associated with a branch.

### Detailed Analysis

The tree's structure and flow are as follows:

1. **Root Node (Top Center):** Splits into two main branches.

    * **Left Branch:** Labeled `0.6`.

    * **Right Branch:** Labeled `0.4`.

2. **Left Subtree (from the 0.6 branch):**

    * Leads to an intermediate node.

    * This node splits into two branches:

        * **Left:** Labeled `0.33`, leading to a leaf node labeled `p1` and `p2`.

        * **Right:** Labeled `0.67`, leading to a leaf node labeled `¬p1` and `¬p2`.

3. **Right Subtree (from the 0.4 branch):**

    * Leads to an intermediate node. The label `p3` is positioned to the right of this node.

    * This node splits into two branches:

        * **Left:** Labeled `0.5`. This branch leads to an intermediate node. The label `¬p3` is positioned near the base of this branch.

        * **Right:** Labeled `0.5`. This branch leads directly to a leaf node labeled `p1` and `¬p2`.

    * The intermediate node reached via the left `0.5` branch splits further:

        * **Left:** Labeled `0.75`, leading to a leaf node labeled `¬p1` and `p2`.

        * **Right:** Labeled `0.25`, leading to a leaf node labeled `¬p1` and `p2`.
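The walk-through can be encoded as a nested structure and traversed so that each leaf receives its path probability, the product of the branch probabilities from the root. This is a minimal sketch that assumes the five-leaf structure described above matches the figure; `Node`, `TREE`, and `leaf_paths` are hypothetical names, and the `p3`/`¬p3` annotations appear only as comments because no probabilities are attached to them.

```python
from dataclasses import dataclass, field
from typing import Iterator, List, Optional, Tuple


@dataclass
class Node:
    """Leaves carry a label; internal nodes carry (probability, child) branches."""
    label: Optional[str] = None
    branches: List[Tuple[float, "Node"]] = field(default_factory=list)


# Assumption: this mirrors the branch structure given in the walk-through.
TREE = Node(branches=[
    (0.6, Node(branches=[
        (0.33, Node(label="p1 p2")),
        (0.67, Node(label="¬p1 ¬p2")),
    ])),
    (0.4, Node(branches=[           # node annotated with `p3`
        (0.5, Node(branches=[       # branch annotated with `¬p3`
            (0.75, Node(label="¬p1 p2")),
            (0.25, Node(label="¬p1 p2")),
        ])),
        (0.5, Node(label="p1 ¬p2")),
    ])),
])


def leaf_paths(
    node: Node, prob: float = 1.0, path: Tuple[float, ...] = ()
) -> Iterator[Tuple[Tuple[float, ...], Optional[str], float]]:
    """Yield (branch probabilities on the path, leaf label, path probability)."""
    if not node.branches:
        yield path, node.label, prob
        return
    for p, child in node.branches:
        # Multiply in the branch probability and descend.
        yield from leaf_paths(child, prob * p, path + (p,))


if __name__ == "__main__":
    for path, label, prob in leaf_paths(TREE):
        print(" -> ".join(str(p) for p in path), label, round(prob, 3))
```

Running it prints one line per leaf; the five path probabilities sum to 1, as expected when every node's outgoing probabilities sum to 1.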

**Transcription of Logical Labels (Original):**

* Leaf 1 (Bottom Left): `p1 p2`

* Leaf 2: `¬p1 ¬p2`

* Leaf 3 (Center, under ¬p3 branch): `p1 ¬p2`

* Leaf 4: `¬p1 p2`

* Leaf 5: `¬p1 p2`

* Leaf 6 (Bottom Right): `p1 p2` (Note: This appears to be a duplicate label of Leaf 1, but is on a different path).

**English Translation of Logical Symbols:**

* `p1`, `p2`, `p3`: Propositions or variables (e.g., "Feature 1 is true").

* `¬`: Logical negation ("NOT"). Therefore, `¬p1` means "NOT p1" or "p1 is false".
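Read this way, each leaf label is a conjunction of literals, so `¬p1 p2` means `NOT p1 AND p2`. The sketch below checks whether a truth assignment to the variables matches a leaf label. It assumes labels are whitespace-separated literals with an optional leading `¬`; `parse_label` and `satisfies` are hypothetical helpers, not part of the figure.

```python
from typing import Dict


def parse_label(label: str) -> Dict[str, bool]:
    """Map each variable in a leaf label to the truth value it requires.

    Assumes whitespace-separated literals; a leading '¬' negates the variable.
    """
    literals: Dict[str, bool] = {}
    for token in label.split():
        if token.startswith("¬"):
            literals[token[1:]] = False
        else:
            literals[token] = True
    return literals


def satisfies(assignment: Dict[str, bool], label: str) -> bool:
    """True if the assignment makes every literal in the label true (a conjunction)."""
    return all(assignment.get(var) == value for var, value in parse_label(label).items())


if __name__ == "__main__":
    state = {"p1": False, "p2": True, "p3": False}
    print(parse_label("¬p1 p2"))       # {'p1': False, 'p2': True}
    print(satisfies(state, "¬p1 p2"))  # True
    print(satisfies(state, "p1 ¬p2"))  # False
```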

### Key Observations

1. **Probability Summation:** At every non-leaf node, including the root, the probabilities on the outgoing branches sum to 1.0 (0.6 + 0.4 = 1; 0.33 + 0.67 = 1; 0.5 + 0.5 = 1; 0.75 + 0.25 = 1). Each node's branch labels therefore form a probability distribution over its children, consistent with a tree whose probabilities give the likelihood of taking each path.

2. **Variable `p3` Placement:** The variable `p3` is associated with the first node in the right subtree, and its negation `¬p3` is associated with a subsequent branch. This suggests the right subtree's decisions are conditioned on the state of `p3`.

3. **Leaf Node Outcomes:** The six leaf nodes represent four distinct logical outcomes:

    * `p1 AND p2` (appears twice, on different paths).

    * `NOT p1 AND NOT p2`.

    * `p1 AND NOT p2`.

    * `NOT p1 AND p2` (appears twice, on different paths).

4. **Asymmetry:** The left subtree (probability 0.6) is simpler, leading directly to two outcomes. The right subtree (probability 0.4) is more complex, involving an additional decision layer based on `p3`.
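Observation 1 can be verified mechanically. The sketch below lists each node's outgoing branch probabilities, paired as in that observation, and reports any node whose branches do not sum to 1 within a small tolerance. The function name `check_sums` and the node names are illustrative, not taken from the figure.

```python
import math
from typing import Dict, List


def check_sums(groups: Dict[str, List[float]], tol: float = 1e-9) -> List[str]:
    """Return the names of nodes whose outgoing branch probabilities do not sum to 1."""
    return [
        name
        for name, probs in groups.items()
        if not math.isclose(sum(probs), 1.0, abs_tol=tol)
    ]


# Outgoing branch probabilities per node, paired as in Observation 1 (hypothetical names).
BRANCHES = {
    "root": [0.6, 0.4],
    "left": [0.33, 0.67],
    "right (p3)": [0.5, 0.5],
    "right-left (¬p3)": [0.75, 0.25],
}

if __name__ == "__main__":
    bad = check_sums(BRANCHES)
    print("all nodes sum to 1" if not bad else f"mismatched: {bad}")
```

A tolerance is used rather than exact equality because decimal labels such as `0.33` and `0.67` are not exactly representable in binary floating point.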

### Interpretation

This diagram models a sequential decision-making process under uncertainty, where the outcome depends on the values of three binary variables (`p1`, `p2`, `p3`).

* **Process Flow:** The tree first makes a high-level split (60%/40% probability). The more likely path (left) leads to a direct classification based on `p1` and `p2`. The less likely path (right) involves an intermediate check on variable `p3` before further refining the decision based on `p1` and `p2`.

* **Meaning of Probabilities:** The branch probabilities likely represent either:

    * The empirical frequency of taking that path in a dataset.

    * The conditional probability of a child node given the parent node's state.

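* **Logical Relationships:** The tree defines a mapping from combinations of `p1`, `p2`, and `p3` to final classifications, and a path's probability is the product of the branch probabilities along it. For example, the path to `p1 AND p2` in the left subtree has probability `0.6 * 0.33 = 0.198`, while the two paths to `NOT p1 AND p2` in the right subtree have probabilities `0.4 * 0.5 * 0.75 = 0.15` and `0.4 * 0.5 * 0.25 = 0.05`. Because the two `0.5` branches leave the same node, no single path passes through both of them.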

* **Purpose:** This could represent a diagnostic flowchart, a classifier in a machine learning model (like a probabilistic decision tree), or a model for calculating the probability of different logical states in a system. The structure reveals that the state of `p3` is only relevant in a minority (40%) of cases.
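The path arithmetic can be written out for every outcome. This assumes the five-leaf structure traced in the Detailed Analysis walk-through and reads the branch labels as conditional probabilities (the second reading listed above). Here $P(\cdot)$ denotes the probability of ending at a leaf with the given label; an outcome reached by several leaves sums their path products:

$$
\begin{aligned}
P(p_1 \land p_2) &= 0.6 \times 0.33 = 0.198\\
P(\lnot p_1 \land \lnot p_2) &= 0.6 \times 0.67 = 0.402\\
P(p_1 \land \lnot p_2) &= 0.4 \times 0.5 = 0.2\\
P(\lnot p_1 \land p_2) &= 0.4 \times 0.5 \times 0.75 + 0.4 \times 0.5 \times 0.25 = 0.15 + 0.05 = 0.2\\
\text{Total} &= 0.198 + 0.402 + 0.2 + 0.2 = 1
\end{aligned}
$$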