## Diagram: Two-Run Kernel Process

### Overview

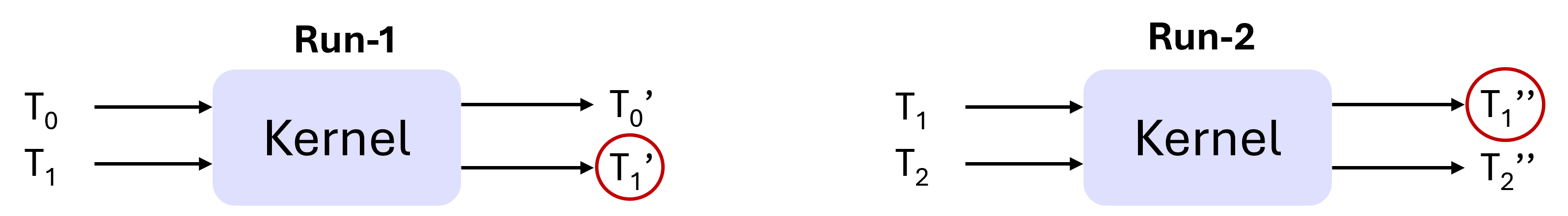

The image is a technical diagram of two kernel executions, labeled "Run-1" and "Run-2". In each run, a central block labeled "Kernel" takes two input tensors (written `T` with a subscript) and produces two output tensors (written with the same subscript plus one or two primes). The diagram uses a light gray background, black text and arrows, and light blue kernel blocks. One output label in each run is circled in red.

### Components/Axes

The diagram is composed of two distinct, side-by-side sections:

1. **Run-1 (Left Section):**

   * **Title:** "Run-1" (centered above the kernel block).

   * **Kernel Block:** A light blue, rounded rectangle labeled "Kernel" in the center.

   * **Inputs:** Two arrows point into the left side of the kernel block.

     * Top input label: `T₀`

     * Bottom input label: `T₁`

   * **Outputs:** Two arrows point out from the right side of the kernel block.

     * Top output label: `T₀'`

     * Bottom output label: `T₁'` (This label is enclosed in a red circle).

2. **Run-2 (Right Section):**

   * **Title:** "Run-2" (centered above the kernel block).

   * **Kernel Block:** Identical to Run-1, a light blue, rounded rectangle labeled "Kernel".

   * **Inputs:** Two arrows point into the left side of the kernel block.

     * Top input label: `T₁`

     * Bottom input label: `T₂`

   * **Outputs:** Two arrows point out from the right side of the kernel block.

     * Top output label: `T₁''` (This label is enclosed in a red circle).

     * Bottom output label: `T₂''`

### Detailed Analysis

* **Data Flow:** The two runs are drawn side by side and numbered in order, but no arrow connects them: the outputs of Run-1 (`T₀'` and `T₁'`) are not shown feeding into Run-2. Instead, Run-2 takes the inputs `T₁` and `T₂`. Because Run-2's top input carries the same unprimed label as Run-1's bottom input, it most plausibly denotes the same tensor `T₁`, not the Run-1 output `T₁'`. The diagram itself does not state this explicitly.

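* **Notation:** Primes mark kernel outputs, and the number of primes matches the run: a single prime (`'`) for Run-1 outputs and a double prime (`''`) for Run-2 outputs. Each output keeps the subscript of the input at the same position.

  * Run-1: `T₀` → Kernel → `T₀'`

  * Run-1: `T₁` → Kernel → `T₁'`

  * Run-2: `T₁` → Kernel → `T₁''`

  * Run-2: `T₂` → Kernel → `T₂''`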

* **Highlighting:** The red circles are placed around the output labels `T₁'` (in Run-1) and `T₁''` (in Run-2). This visually emphasizes these two specific outputs, suggesting they are the primary results of interest or are being compared across the two runs.
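The data flow and notation above can be sketched in a few lines of Python. The diagram does not define what the kernel computes, so the `kernel` function below is a purely hypothetical placeholder (each output is its input plus half of the other input), and the tensor values are made up for illustration. The point is only the wiring: Run-2 receives the original `T₁`, not the Run-1 output `T₁'`.

```python
def kernel(a, b):
    """Hypothetical placeholder; the diagram does not specify the kernel."""
    # Each output mixes its own input with half of the partner input.
    first_out = [x + 0.5 * y for x, y in zip(a, b)]
    second_out = [y + 0.5 * x for x, y in zip(a, b)]
    return first_out, second_out


# Made-up tensors, represented as flat lists of floats.
t0 = [0.0, 2.0]
t1 = [4.0, 6.0]
t2 = [8.0, 10.0]

# Run-1: inputs (T0, T1) -> outputs (T0', T1')
t0_p, t1_p = kernel(t0, t1)

# Run-2: inputs (T1, T2) -> outputs (T1'', T2'')
# Note that the unmodified t1 is passed in, not t1_p.
t1_pp, t2_pp = kernel(t1, t2)

print("T1'  =", t1_p)
print("T1'' =", t1_pp)
```

With this placeholder kernel the two circled outputs differ, because each one also depends on the tensor that `T₁` was paired with.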

### Key Observations

1. **Structural Symmetry:** Run-1 and Run-2 are structurally identical. They differ only in their input/output labels and in which output is circled (the bottom one in Run-1, the top one in Run-2).

2. **Input Progression:** The input tensor indices progress from (`T₀`, `T₁`) in Run-1 to (`T₁`, `T₂`) in Run-2. This is consistent with a sliding window of width two that advances one position per run over a series of tensors (`T₀`, `T₁`, `T₂`, ...).

3. **Output Emphasis:** The consistent highlighting of the `T₁`-derived outputs (`T₁'` and `T₁''`) across both runs is the most salient visual feature, directing the viewer's attention to the transformation of this specific tensor.

4. **Kernel Abstraction:** The "Kernel" is represented as a black box; its internal operations are not defined in this diagram.
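If the input progression is read as a width-two sliding window, the pattern generalizes to a longer series as in the sketch below. This is an assumption about intent, not something the diagram states: `sliding_runs` is a hypothetical helper, and `kernel` is again a made-up stand-in for the undefined kernel block.

```python
from collections import defaultdict


def kernel(a, b):
    """Hypothetical placeholder; the diagram treats the kernel as a black box."""
    first_out = [x + 0.5 * y for x, y in zip(a, b)]
    second_out = [y + 0.5 * x for x, y in zip(a, b)]
    return first_out, second_out


def sliding_runs(tensors, kernel_fn):
    """Apply kernel_fn to each consecutive pair and group outputs by input index."""
    outputs = defaultdict(list)
    for i in range(len(tensors) - 1):
        run = i + 1  # Run-1 handles (T0, T1), Run-2 handles (T1, T2), ...
        first_out, second_out = kernel_fn(tensors[i], tensors[i + 1])
        outputs[i].append((run, first_out))
        outputs[i + 1].append((run, second_out))
    return dict(outputs)


series = [[0.0], [4.0], [8.0]]  # T0, T1, T2 as one-element lists
by_index = sliding_runs(series, kernel)

# Interior tensors such as T1 receive one output per run they take part in.
for index, results in sorted(by_index.items()):
    print(f"T{index}:", results)
```

Under this reading, every interior tensor ends up with two outputs (one from each window it belongs to), which matches the two circled `T₁` outputs in the diagram.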

### Interpretation

This diagram illustrates **the same kernel applied twice to overlapping pairs of tensors** from a series. The core idea is that one tensor (`T₁`) is processed in both runs, each time alongside a different neighbor.

* **What it demonstrates:** The process shows how a kernel function is applied to different pairs of consecutive tensors from a sequence. The first run processes the pair (T₀, T₁), and the second run processes the next pair (T₁, T₂). The red circles indicate that the transformation of the middle tensor (`T₁`) is the key output being tracked from each step.

* **Relationship between elements:** The two runs are independent executions of the same kernel logic on different input windows. The shared element is the tensor `T₁`, which appears as an input in both windows: as the bottom input of Run-1 and as the top input of Run-2. Overlapping windows of this kind are common in sliding-window computations, such as convolutions (where a kernel slides over input data) and temporal sequence processing.

* **Notable pattern:** Both Run-2 outputs carry a double prime, including `T₂''`, whose input `T₂` appears only in Run-2. The double prime therefore most likely marks "output of Run-2" and does not indicate that a tensor has passed through the kernel twice. Read this way, `T₁'` and `T₁''` are two separate results for the same input `T₁`, computed alongside different neighbors (`T₀` in Run-1, `T₂` in Run-2), and the red circles invite a comparison between them.
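One way to make the comparison of the two circled outputs concrete is to measure how far they differ. The sketch below is illustrative only: both kernels are hypothetical (the diagram defines neither), and `compare_t1` and `max_abs_diff` are helper names introduced here. It contrasts a kernel whose outputs depend only on their own input with one that mixes the two inputs.

```python
def max_abs_diff(u, v):
    """Largest element-wise absolute difference between two equal-length lists."""
    return max(abs(x - y) for x, y in zip(u, v))


def independent_kernel(a, b):
    """Hypothetical kernel: each output depends only on its own input."""
    return [2.0 * x for x in a], [2.0 * y for y in b]


def mixing_kernel(a, b):
    """Hypothetical kernel: each output also depends on the partner input."""
    first_out = [x + 0.5 * y for x, y in zip(a, b)]
    second_out = [y + 0.5 * x for x, y in zip(a, b)]
    return first_out, second_out


def compare_t1(kernel_fn, t0, t1, t2):
    """Return the max absolute difference between T1' (Run-1) and T1'' (Run-2)."""
    _, t1_run1 = kernel_fn(t0, t1)  # Run-1: T1 is the bottom input
    t1_run2, _ = kernel_fn(t1, t2)  # Run-2: T1 is the top input
    return max_abs_diff(t1_run1, t1_run2)


t0, t1, t2 = [0.0, 2.0], [4.0, 6.0], [8.0, 10.0]  # made-up values
print(compare_t1(independent_kernel, t0, t1, t2))
print(compare_t1(mixing_kernel, t0, t1, t2))
```

A zero difference would suggest the kernel treats `T₁` the same regardless of its neighbor or input position. A nonzero difference would suggest the result depends on the run's context.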