\n

## Diagram: LLM-Based Python Code Generation Process

### Overview

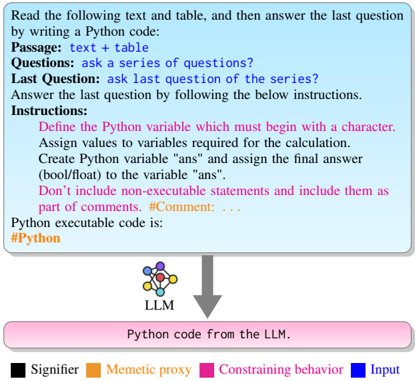

The image is a flowchart diagram illustrating a process for using a Large Language Model (LLM) to generate Python code based on a given text passage and a series of questions. The diagram uses color-coded elements and directional arrows to show the flow from input instructions to the final code output.

### Components/Axes

The diagram consists of three main visual components arranged vertically:

1. **Top Component (Light Blue Box):** A large rectangular box containing the primary instructions and input structure.

2. **Middle Component (LLM Icon & Arrow):** A small icon representing an LLM (depicted as a network of connected nodes) with a downward-pointing arrow.

3. **Bottom Component (Pink Box):** A rectangular box representing the output.

4. **Legend (Bottom of Image):** A color-coded key explaining the meaning of specific colors used in the diagram.

### Detailed Analysis

#### Top Component: Instruction Box

* **Background Color:** Light blue.

* **Text Content (Transcribed):**

* **Header:** "Read the following text and table, and then answer the last question by writing a Python code:"

* **Structure Labels (in blue):**

* "Passage: text + table"

* "Questions: ask a series of questions?"

* "Last Question: ask last question of the series?"

* **Instruction Block:** "Answer the last question by following the below instructions."

* **Detailed Instructions (in pink):**

* "Define the Python variable which must begin with a character."

* "Assign values to variables required for the calculation."

* "Create Python variable "ans" and assign the final answer (bool/float) to the variable "ans"."

* "Don't include non-executable statements and include them as part of comments. #Comment: . . ."

* **Final Line (in orange):** "Python executable code is: #Python"

#### Middle Component: Process Flow

* **LLM Icon:** A small graphic of interconnected circles (nodes) in blue, orange, and black, labeled "LLM" underneath.

* **Arrow:** A thick, gray, downward-pointing arrow connects the bottom of the instruction box to the top of the output box, indicating the direction of data flow.

#### Bottom Component: Output Box

* **Background Color:** Pink.

* **Text Content:** "Python code from the LLM."

#### Legend

* **Location:** Bottom of the image, below the main flowchart.

* **Color Key:**

* **Black Square:** "Signifier"

* **Orange Square:** "Memetic proxy"

* **Pink Square:** "Constraining behavior"

* **Blue Square:** "Input"

### Key Observations

1. **Color-Coding:** The diagram uses color intentionally. Blue is used for input labels ("Passage," "Questions," "Last Question") and the "Input" legend item. Pink is used for the constraining instructions and the output box, linking the rules to the final product. Orange highlights the final prompt for code ("#Python") and the "Memetic proxy" legend item.

2. **Process Flow:** The flow is strictly linear and top-down: Input Instructions -> LLM Processing -> Code Output.

3. **Instruction Specificity:** The instructions are highly specific, dictating variable naming conventions (must start with a character), the creation of a final answer variable (`ans`), and the handling of comments.

4. **Placeholder Text:** The "Passage," "Questions," and "Last Question" labels are placeholders, indicating where specific content would be inserted in a real application.

### Interpretation

This diagram outlines a structured **prompt engineering template** for an LLM tasked with code generation. It demonstrates a method to constrain and guide the LLM's output to ensure it produces valid, executable Python code that adheres to specific formatting rules.

* **Relationship Between Elements:** The "Constraining behavior" (pink) instructions are the core of the prompt, designed to override the LLM's default tendencies and force a predictable output format. The "Input" (blue) provides the raw data and query. The "Memetic proxy" (orange) likely refers to the LLM itself or the code-generation act as a proxy for solving the problem presented in the input.

* **Purpose:** The process aims to transform unstructured or semi-structured information (text + table + questions) into a precise, computational answer (`ans` variable) encapsulated in runnable code. This is valuable for automation, data analysis, and integrating LLM reasoning into larger software systems.

* **Notable Design Choice:** The separation of the "series of questions" from the "last question" suggests a chain-of-thought or multi-step reasoning process where the LLM must first understand the context before generating the final, code-based answer. The strict comment rule (`#Comment: . . .`) ensures that any explanatory text from the LLM does not break the code's executability.