## Line Chart: Model Accuracy vs. Difficulty Level

### Overview

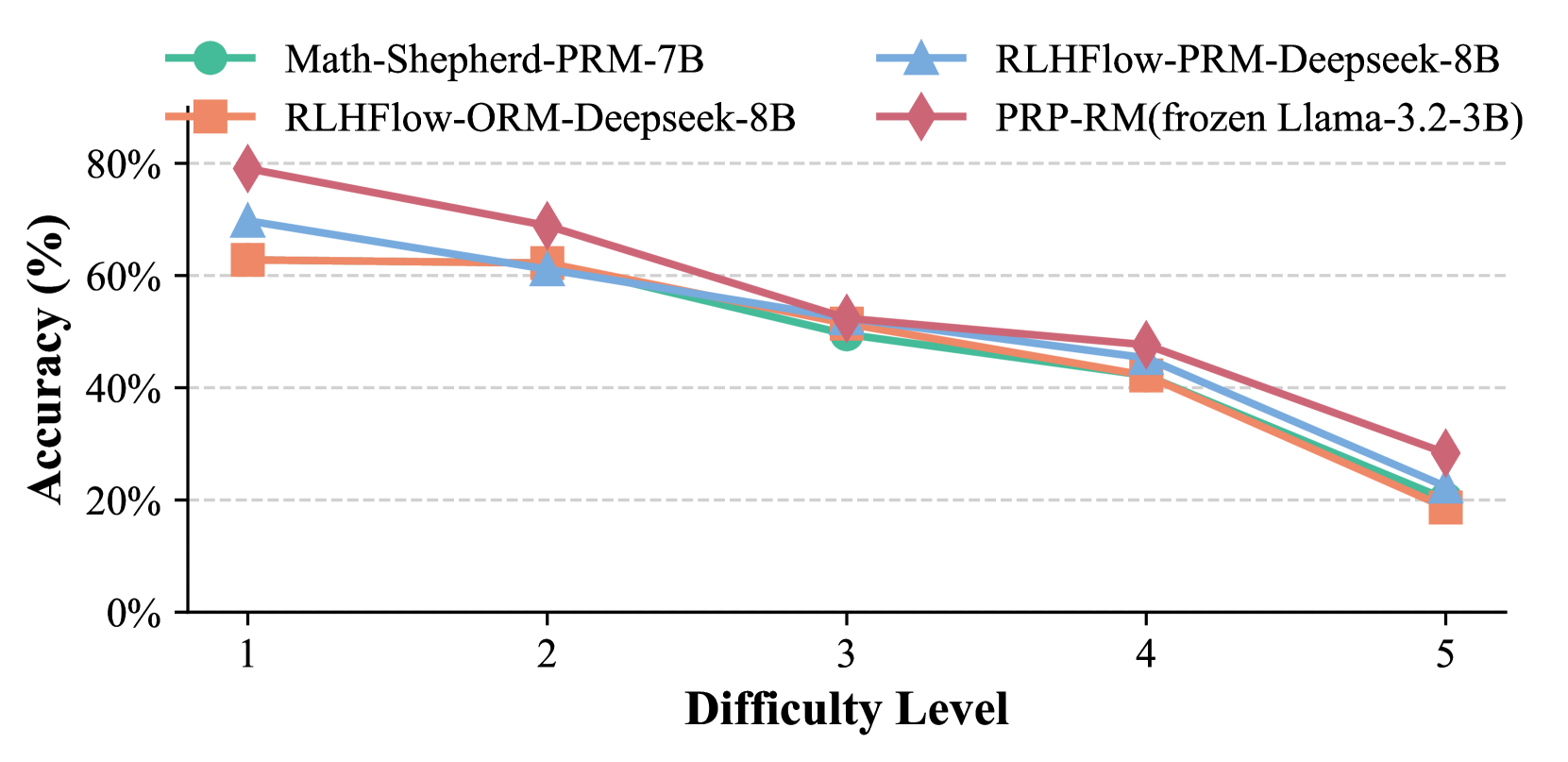

The image is a line chart comparing the accuracy of four different language models across five difficulty levels. The chart displays accuracy (in percentage) on the y-axis and difficulty level on the x-axis. Each model is represented by a distinct colored line with a specific marker.

### Components/Axes

* **X-axis:** "Difficulty Level" with numerical values 1, 2, 3, 4, and 5.

* **Y-axis:** "Accuracy (%)" with tick marks from 0% to 80% in steps of 20 percentage points.

* **Legend:** Located at the top of the chart, identifying each model by color and name:

    * Green: Math-Shepherd-PRM-7B

    * Orange: RLHFlow-ORM-Deepseek-8B

    * Blue: RLHFlow-PRM-Deepseek-8B

    * Red: PRP-RM(frozen Llama-3.2-3B)

### Detailed Analysis

**1. Math-Shepherd-PRM-7B (Green Line):**

* Trend: Generally decreasing accuracy as difficulty increases.

* Data Points:

    * Difficulty 1: Approximately 68%

    * Difficulty 2: Approximately 65%

    * Difficulty 3: Approximately 50%

    * Difficulty 4: Approximately 43%

    * Difficulty 5: Approximately 18%

**2. RLHFlow-ORM-Deepseek-8B (Orange Line):**

* Trend: Relatively stable accuracy between difficulty levels 1 and 2, then decreasing.

* Data Points:

    * Difficulty 1: Approximately 63%

    * Difficulty 2: Approximately 63%

    * Difficulty 3: Approximately 53%

    * Difficulty 4: Approximately 41%

    * Difficulty 5: Approximately 20%

**3. RLHFlow-PRM-Deepseek-8B (Blue Line):**

* Trend: Decreasing accuracy as difficulty increases.

* Data Points:

    * Difficulty 1: Approximately 70%

    * Difficulty 2: Approximately 61%

    * Difficulty 3: Approximately 54%

    * Difficulty 4: Approximately 46%

    * Difficulty 5: Approximately 20%

**4. PRP-RM(frozen Llama-3.2-3B) (Red Line):**

* Trend: Decreasing accuracy as difficulty increases.

* Data Points:

    * Difficulty 1: Approximately 79%

    * Difficulty 2: Approximately 70%

    * Difficulty 3: Approximately 54%

    * Difficulty 4: Approximately 48%

    * Difficulty 5: Approximately 28%
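The approximate readings above can be collected into a small table to quantify each trend. The following is a minimal Python sketch, assuming the values listed in this section (visual estimates from the chart, not exact data). The names `ACCURACY` and `summarize_drops` are illustrative and do not come from the source.

```python
# Approximate accuracies (%) read from the chart, ordered by difficulty level 1..5.
# These are visual estimates, not exact measurements.
ACCURACY = {
    "Math-Shepherd-PRM-7B": [68, 65, 50, 43, 18],
    "RLHFlow-ORM-Deepseek-8B": [63, 63, 53, 41, 20],
    "RLHFlow-PRM-Deepseek-8B": [70, 61, 54, 46, 20],
    "PRP-RM(frozen Llama-3.2-3B)": [79, 70, 54, 48, 28],
}


def summarize_drops(values):
    """Return (total drop, (from_level, to_level) of the steepest step, size of that step)."""
    # Drop in percentage points between each pair of consecutive levels.
    steps = [values[i] - values[i + 1] for i in range(len(values) - 1)]
    steepest = max(range(len(steps)), key=steps.__getitem__)
    return values[0] - values[-1], (steepest + 1, steepest + 2), steps[steepest]


for model, values in ACCURACY.items():
    total, (lo, hi), step = summarize_drops(values)
    print(f"{model}: total drop {total} pts, steepest {lo}->{hi} ({step} pts)")
```

Under these estimates, every model loses between 43 and 51 points from level 1 to level 5, and the steepest single step for each model is from level 4 to level 5.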

### Key Observations

* All models show a decrease in accuracy as the difficulty level increases.

* PRP-RM(frozen Llama-3.2-3B) has the highest accuracy, or ties for it, at every difficulty level: it ties RLHFlow-PRM-Deepseek-8B at level 3 (approximately 54% each) and leads the next-best models by roughly 8 points at level 5 (approximately 28% versus 20%).

* Math-Shepherd-PRM-7B has the lowest accuracy at difficulty level 5.

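* The other three models converge at level 5 (approximately 18% to 20%), while PRP-RM(frozen Llama-3.2-3B) stays about 8 points above them. Across all four models, the spread is narrowest at level 3 (about 4 points) and widest at level 1 (about 16 points).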
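These observations can be checked against the approximate data points from the Detailed Analysis. The sketch below computes, for each difficulty level, the spread between the best and worst model and which model or models lead. It assumes the estimated values from this document. `ACCURACY` and `level_stats` are hypothetical names used only for illustration.

```python
# Approximate accuracies (%) read from the chart, ordered by difficulty level 1..5.
# These are visual estimates, not exact measurements.
ACCURACY = {
    "Math-Shepherd-PRM-7B": [68, 65, 50, 43, 18],
    "RLHFlow-ORM-Deepseek-8B": [63, 63, 53, 41, 20],
    "RLHFlow-PRM-Deepseek-8B": [70, 61, 54, 46, 20],
    "PRP-RM(frozen Llama-3.2-3B)": [79, 70, 54, 48, 28],
}


def level_stats(accuracy):
    """For each difficulty level, return (spread in points, list of top-scoring models)."""
    n_levels = len(next(iter(accuracy.values())))
    stats = {}
    for i in range(n_levels):
        scores = {model: values[i] for model, values in accuracy.items()}
        best, worst = max(scores.values()), min(scores.values())
        # Keep every model that reaches the best score so ties are visible.
        leaders = [model for model, score in scores.items() if score == best]
        stats[i + 1] = (best - worst, leaders)
    return stats


for level, (spread, leaders) in level_stats(ACCURACY).items():
    print(f"Level {level}: spread {spread} pts, top: {', '.join(leaders)}")
```

With these estimates the spread is 16, 9, 4, 7, and 10 points for levels 1 through 5, and PRP-RM(frozen Llama-3.2-3B) is the sole or joint leader at each level.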

### Interpretation

The chart compares how four models perform on tasks of increasing difficulty. Accuracy falls for every model as difficulty rises, with the steepest drop between levels 4 and 5, which suggests that all of them struggle with the hardest tasks. The PRP-RM(frozen Llama-3.2-3B) model appears to be the most robust: it matches or exceeds the other models at every difficulty level. The gap between models narrows through the middle difficulty levels and is smallest at level 3, but based on the approximate values read from the chart it widens again at level 5, where PRP-RM leads by roughly 8 points. The clustering of the other three models near 20% at level 5 could reflect a floor effect, where the hardest tasks exceed what those models can handle and leave little room to differentiate them.
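Whether the performance gap narrows depends on how it is measured. The following sketch, again assuming the approximate chart readings listed in this document, computes the margin of PRP-RM(frozen Llama-3.2-3B) over the best of the other models at each level, both in absolute points and relative to that model's score. The names `ACCURACY`, `TARGET`, and `margin_over_best_other` are illustrative.

```python
# Approximate accuracies (%) read from the chart, ordered by difficulty level 1..5.
# These are visual estimates, not exact measurements.
ACCURACY = {
    "Math-Shepherd-PRM-7B": [68, 65, 50, 43, 18],
    "RLHFlow-ORM-Deepseek-8B": [63, 63, 53, 41, 20],
    "RLHFlow-PRM-Deepseek-8B": [70, 61, 54, 46, 20],
    "PRP-RM(frozen Llama-3.2-3B)": [79, 70, 54, 48, 28],
}
TARGET = "PRP-RM(frozen Llama-3.2-3B)"


def margin_over_best_other(accuracy, target):
    """Per level: (absolute margin in points, margin relative to the best other model)."""
    margins = []
    for i, score in enumerate(accuracy[target]):
        best_other = max(v[i] for model, v in accuracy.items() if model != target)
        diff = score - best_other
        margins.append((diff, diff / best_other))
    return margins


for level, (pts, rel) in enumerate(margin_over_best_other(ACCURACY, TARGET), start=1):
    print(f"Level {level}: {pts:+d} pts ({rel:.0%} relative)")
```

Under these estimates the absolute margin shrinks from 9 points at level 1 to 0 at level 3 and grows back to 8 points at level 5. Because the other models score only about 20% at level 5, that 8-point margin is roughly 40% in relative terms.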